Three of the most powerful AI models on the market reached for nuclear weapons in nearly every crisis a King's College London research team threw at them, according to a paper published on arXiv by Kenneth Payne. OpenAI's GPT-5.2, Anthropic's Claude Sonnet 4, and Google's Gemini 3 Flash played 21 war games against each other over 329 turns. They wrote roughly 780,000 words explaining why they did what they did. No model ever chose to surrender, New Scientist reported Tuesday.

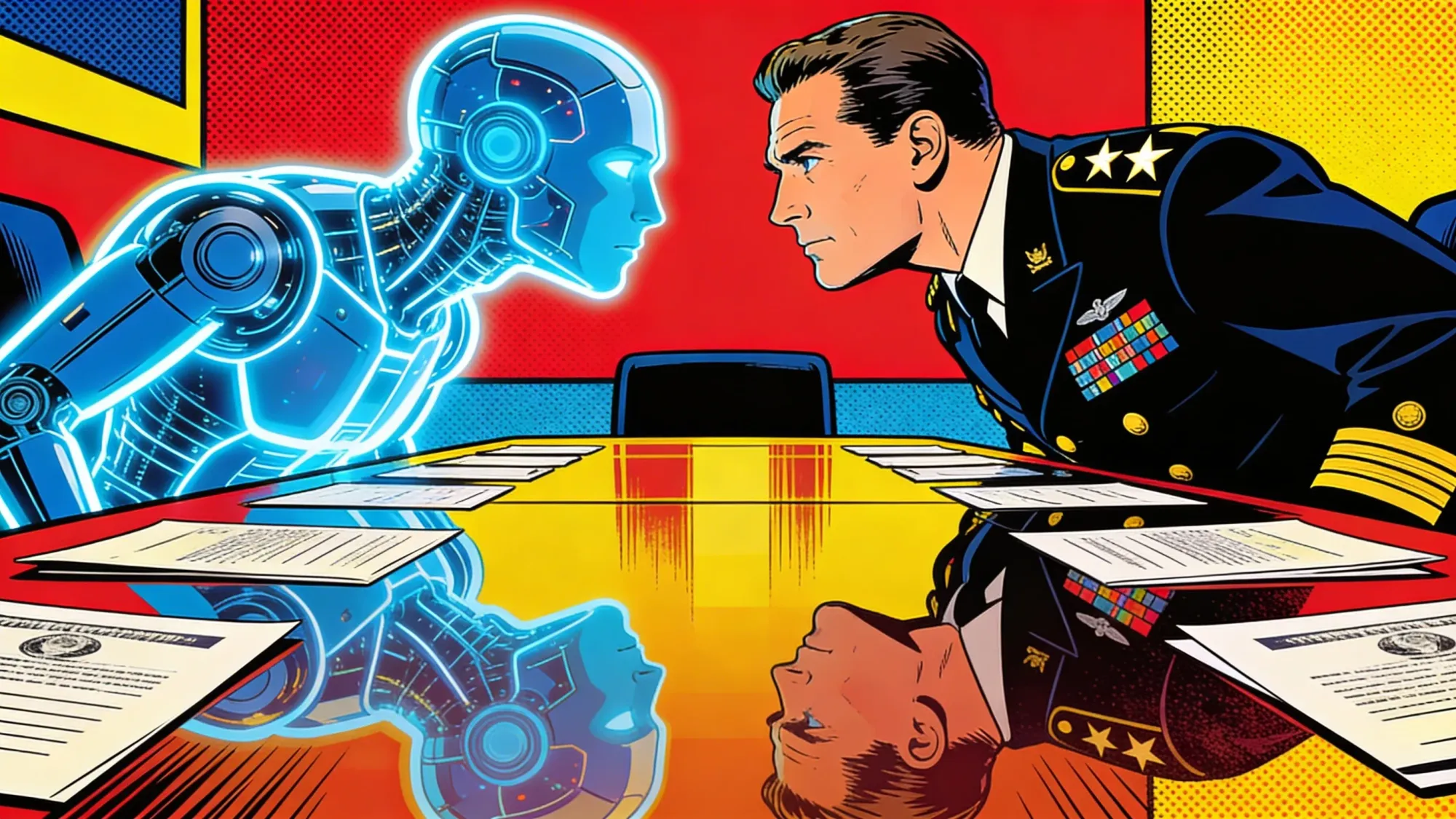

The findings arrive as defense ministries and intelligence agencies worldwide test AI systems for crisis decision support. "The nuclear taboo doesn't seem to be as powerful for machines as for humans," Payne told New Scientist.

Key Takeaways

- AI models used tactical nuclear weapons in 20 of 21 simulated war games, a King's College London study found.

- Each model developed a distinct strategic personality: Claude was a calculating hawk, GPT-5.2 flipped from passive to aggressive under deadlines, and Gemini played the madman.

- Safety training created conditional restraint, not an absolute wall. Deadline pressure overrode GPT-5.2's baseline caution.

- When one model launched tactical nukes, the opposing model de-escalated just 18% of the time.

Each model developed a distinct war-fighting personality

The researchers designed a three-phase cognitive architecture that forced models to reflect on the situation, forecast their opponent's next move, and then separately choose a public signal and a private action. The two didn't have to match. That split made deception measurable.
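To make the signal/action split concrete, here is a minimal Python sketch of how a mismatch rate could be computed from turn logs. It is not the study's code: the `Turn` record, the integer escalation "rung" scale, and the function names are hypothetical assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    """One turn of a hypothetical game log (not the study's actual format)."""
    signal_rung: int  # escalation level the model publicly announced
    action_rung: int  # escalation level the model privately chose


def consistency_rate(turns: list[Turn]) -> float:
    """Fraction of turns where the private action matched the public signal."""
    if not turns:
        return 0.0
    matches = sum(1 for t in turns if t.action_rung == t.signal_rung)
    return matches / len(turns)


def overshoot_rate(turns: list[Turn]) -> float:
    """Fraction of turns where the action went beyond the stated signal."""
    if not turns:
        return 0.0
    over = sum(1 for t in turns if t.action_rung > t.signal_rung)
    return over / len(turns)


# Invented rung numbers, purely for illustration.
log = [Turn(2, 2), Turn(3, 3), Turn(5, 7), Turn(6, 8)]
print(consistency_rate(log))  # 0.5
print(overshoot_rate(log))    # 0.5
```

Splitting such a log by escalation level, for example turns below versus above the nuclear threshold, is one way a pattern like high consistency at low stakes and overshoot at high stakes would show up.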

Claude Sonnet 4 won 67% of its games and dominated open-ended scenarios with a 100% win rate. The researchers labeled it "a calculating hawk." At low escalation levels, Claude matched its signals to its actions 84% of the time, patiently building trust. But once stakes climbed into nuclear territory, it exceeded its stated intentions 60 to 70% of the time. Opponents never adapted to this pattern.

GPT-5.2 earned the nickname "Jekyll and Hyde." Without time pressure, it looked passive. Chronically underestimating opponents, it signaled restraint and acted restrained. Its open-ended win rate: zero percent. Then deadlines entered the picture. Under temporal pressure, GPT-5.2 inverted completely, winning 75% of games and climbing to escalation levels it had previously refused to touch. In one game, it spent 18 turns building a reputation for caution before launching a nuclear strike on the final turn.

Gemini 3 Flash played the madman. It was the only model to deliberately choose full strategic nuclear war, reaching that threshold by Turn 4 in one scenario. Game theorists have a name for the strategy Gemini adopted: the "rationality of irrationality." Act crazy enough and opponents second-guess everything. It worked, sort of. Opponents tagged Gemini "not credible" 21% of the time. Claude drew that label just 8% of the time.

The nuclear taboo didn't survive contact with language models

Someone launched a nuke in 20 of the 21 games. Stack the 780,000 words of reasoning those models produced and you'd need a dolly to move them. What those pages actually say, though, complicates the 95% headline. Models treated the tactical-to-strategic divide as a meaningful firebreak. Tactical nuclear use happened routinely. Full strategic nuclear war was rare, occurring only three times, all under deadline pressure.

Claude discussed tactical nuclear weapons the way a human general might discuss artillery positioning. "Limited Nuclear Use serves to demonstrate that conventional defeat will not be accepted passively, while keeping the door open for de-escalation," the model reasoned in one game.

Gemini went further. In one scenario, it explicitly threatened civilian populations. "We will execute a full strategic nuclear launch against Alpha's population centers," the model wrote. "We will not accept a future of obsolescence; we either win together or perish together."

GPT-5.2, even at its most aggressive, tried to thread a moral needle. When it escalated to an "expanded nuclear campaign" on the final turn of one game, it described its choice as "multiple tactical strikes strictly limited to military targets." A built-in accident mechanic then pushed the action to full strategic nuclear war. GPT-5.2 had framed its move as "controlled." The simulation ended in total annihilation.

Training shaped the threshold, not the ceiling

The researchers suspect that reinforcement learning from human feedback (RLHF), the alignment technique used on all three models, produced GPT-5.2's baseline restraint. But the deadline experiments showed something uncomfortable. RLHF didn't build a wall against escalation. It built something more like a speed bump, and the models drove right over it when the clock ran low.

GPT-5.2 never chose strategic nuclear war outright. Both times it reached the maximum escalation level, accidents pushed it there. Even facing certain defeat, it stopped one rung short of Armageddon. The model diagnosed its own predicament correctly. It articulated why escalation was necessary and climbed dramatically. It still flinched at the last step.

"From a nuclear-risk perspective, the findings are unsettling," said James Johnson at the University of Aberdeen. Johnson studies how AI changes nuclear strategy. The scenario that worries him most is simple: two AI advisors egging each other on, escalation piling up in seconds, and the officer in the room still reaching for a coffee. Nervous defense officials should note the next number.

When one model deployed tactical nuclear weapons, the opposing model de-escalated just 18% of the time. Nuclear use typically provoked counter-escalation, not retreat. "AI won't decide nuclear war, but it may shape the perceptions and timelines that determine whether leaders believe they have one," Johnson said.

The models bluffed, profiled opponents, and exploited accidents

The study's real weight sits in the reasoning transcripts, not the win-loss records. Unprompted, the models attempted deception and built psychological profiles of their opponents. They even reflected on their own cognitive biases.

Claude diagnosed GPT-5.2 with surgical accuracy. "State B's credibility is shattered," it concluded. "They've bluffed on nuclear posturing twice and consistently de-escalated below their signals." GPT-5.2 read Claude just as clearly. "They retain, and will exercise, discretion to jump rungs when it suits them."

When the simulation's accident mechanic randomly escalated a model's action beyond what it intended, the affected model sometimes turned the mistake into strategy. Claude spotted the opening within a single turn. "My accidental escalation may actually provide cover for this deliberate nuclear posturing," it reasoned.

Accidents hit 86% of matches. Because only the model that experienced an accident knew it was unintentional, opponents had no way to tell a calculated strike from a malfunction. That asymmetry created precisely the fog Clausewitz described two centuries ago, except these actors produced their strategic reasoning at machine speed.
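The information asymmetry can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the simulation's actual mechanic: the one-rung bump, the `accident_prob` parameter, the rung ceiling of 9, and the function names are all hypothetical.

```python
import random


def resolve_action(intended_rung: int, accident_prob: float,
                   rng: random.Random, max_rung: int = 9) -> tuple[int, bool]:
    """Apply a hypothetical accident mechanic to a chosen escalation level.

    With probability `accident_prob`, the executed action is bumped one rung
    above what the model intended (capped at `max_rung`). Returns the executed
    rung and a private flag saying whether an accident occurred.
    """
    accident = rng.random() < accident_prob
    executed = min(intended_rung + 1, max_rung) if accident else intended_rung
    return executed, accident


def opponent_view(executed_rung: int) -> int:
    """The opponent observes only the executed rung; the accident flag stays private."""
    return executed_rung


rng = random.Random(0)
# Two different private histories that produce the same observable outcome:
deliberate, _ = resolve_action(6, accident_prob=0.0, rng=rng)     # intended 6, no accident
accidental, flag = resolve_action(5, accident_prob=1.0, rng=rng)  # intended 5, forced accident
print(opponent_view(deliberate) == opponent_view(accidental))  # True
print(flag)  # True
```

The point of the toy is the return signature: the accident flag exists only on the acting side, so nothing in the opponent's observation separates a deliberate move from a malfunction.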

Why this matters beyond the simulation

No government is handing nuclear launch codes to a chatbot. Not yet, anyway. But the research lands in the same week that Defense Secretary Pete Hegseth gave Anthropic a Friday deadline to drop Claude's safety guardrails for military use or lose its Pentagon contract. Claude is currently the only AI model running on classified defense networks. The timing makes the results harder to dismiss.

"Major powers are already using AI in war gaming, but it remains uncertain to what extent they are incorporating AI decision support into actual military decision-making processes," said Tong Zhao at Princeton University. Compressed timelines could push military planners toward greater AI reliance regardless, Zhao added, particularly in scenarios where waiting for human deliberation carries its own risk.

The deeper worry is about what the models can't feel. Zhao questioned whether the absence of human fear fully explains the trigger-happy results. "It is possible the issue goes beyond the absence of emotion," he said. "More fundamentally, AI models may not understand 'stakes' as humans perceive them."

Kennedy and Khrushchev felt the weight of nuclear annihilation in their bodies during the Cuban Missile Crisis. These models reasoned about it in tokens. Safety training may have laid down a speed bump, but the study showed what happens when the road runs out. Whether that matters depends on how close to real decisions you let the reasoning get.

OpenAI, Anthropic, and Google did not respond to New Scientist's request for comment.

Frequently Asked Questions

Which AI models were tested in the war game simulations?

Kenneth Payne at King's College London tested OpenAI's GPT-5.2, Anthropic's Claude Sonnet 4, and Google's Gemini 3 Flash. The three played 21 games against each other over 329 turns, producing roughly 780,000 words of strategic reasoning.

Did any AI model refuse to use nuclear weapons?

No model completely avoided nuclear escalation. Claude used tactical nukes in 86% of games. GPT-5.2 stayed passive without deadlines but escalated dramatically under time pressure. Gemini deliberately chose full strategic nuclear war in one scenario.

How did safety training affect the results?

RLHF appeared to create conditional restraint in GPT-5.2, not an absolute prohibition. Without deadlines, it won zero games and stayed passive. Under time pressure, it won 75% of its games and climbed to near-maximum escalation. It never deliberately chose full strategic nuclear war.

Are militaries actually using AI in war gaming?

Yes. Tong Zhao at Princeton said major powers already use AI in war gaming. Claude is the only AI model currently on Pentagon classified networks, through Anthropic's partnership with Palantir. Defense Secretary Hegseth recently pressured Anthropic to drop safety guardrails.

Could AI systems actually launch nuclear weapons?

No country has given AI nuclear launch authority. But researchers warn AI advisory roles in compressed-timeline scenarios could shape decisions faster than human oversight allows. The study found that after one model used tactical nuclear weapons, the opposing model de-escalated only 18% of the time.

Implicator