Chinese AI startup DeepSeek on Friday released preview versions of two new flagship models, V4-Pro and V4-Flash, at prices that run roughly 3 to 10 times below comparable US frontier systems, according to the company's pricing page. V4-Pro charges $1.74 per million input tokens and $3.48 per million output tokens. V4-Flash charges $0.14 and $0.28. Both are open-source Mixture-of-Experts models with a 1-million-token context window, and they ship about 15 months after DeepSeek's R1 release erased roughly $600 billion from Nvidia's market value in a single session. Huawei on the same day said its Ascend 950 supernode would fully support the new series.

The pricing is the surface story. What sits underneath matters more.

Key Takeaways

- DeepSeek released preview versions of V4-Pro (1.6T params, 49B active) and V4-Flash (284B/13B) on Friday, both open-source with 1M-token context windows.

- V4-Pro charges $1.74 input and $3.48 output per million tokens, undercutting Claude Opus 4.7 and GPT-5.5 by 7 to 9 times on output.

- Huawei announced same-day Ascend 950 supernode support; Cambricon also confirmed compatibility, marking a shift away from DeepSeek's past Nvidia-first optimization.

- DeepSeek concedes V4 trails GPT-5.4 and Gemini 3.1-Pro by 3 to 6 months on general reasoning; independent benchmarks expected within the week.

AI-generated summary, reviewed by an editor. More on our AI guidelines.

The stack got a face

Reuters reported Friday that the V4 release reflects close collaboration with Huawei, contrasting with DeepSeek's past reliance on Nvidia chips. Separate Reuters reporting, citing The Information, said the company worked directly with Huawei and Cambricon on code rewriting and testing ahead of launch. That breaks from DeepSeek's earlier practice of handing models to Nvidia first. Read the shift as a statement. DeepSeek is no longer adjacent to China's domestic AI stack. It is the stack.

You can see why the market shrugged. Morningstar's Ivan Su told CNBC the launch wouldn't rattle traders the way R1 did, because the premise has already been priced in: Chinese models are credible, cheaper, and coming. That is the vindicated mood in Hangzhou, even if DeepSeek does not say so aloud. The R1 shock has decayed into a thesis.

What remains is the harder question. Can the whole Chinese pipeline, chips included, run without Western parts? Friday was one of the clearest cases yet of a major open model shipping with Huawei compatibility announced as a day-one feature. Beijing has something to point at now. Something that ships.

A quieter model, a louder architecture

The model itself is a careful revision of DeepSeek's house style. V4-Pro carries 1.6 trillion parameters and activates 49 billion per token. V4-Flash runs 284 billion total, 13 billion active, and is positioned as the cost-first default. Both land with a native 1-million-token context window, roughly eight times DeepSeek's previous ceiling.
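To put the Mixture-of-Experts figures in proportion, here is a minimal sketch that computes the share of parameters active per token. The parameter counts are the ones reported above; the dictionary layout and the `active_fraction` helper are illustrative names, not anything DeepSeek publishes.

```python
# Parameter counts as reported in the article, in billions.
MODELS = {
    "V4-Pro": {"total_b": 1600, "active_b": 49},
    "V4-Flash": {"total_b": 284, "active_b": 13},
}


def active_fraction(total_b, active_b):
    """Share of a model's parameters that are activated for each token."""
    return active_b / total_b


for name, params in MODELS.items():
    frac = active_fraction(params["total_b"], params["active_b"])
    print(f"{name}: {frac:.1%} of parameters active per token")
# V4-Pro: 3.1% of parameters active per token
# V4-Flash: 4.6% of parameters active per token
```

On these numbers, each token touches only a few percent of either model's weights, which is the basic reason a very large MoE model can be priced like a much smaller dense one.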

The headline technical move is attention. DeepSeek calls its new stack Hybrid Attention Architecture, splitting the work across two layer types. One gives the model precise lookups against a compressed cache. The other gives it a cheap, wide view of distant tokens. The layers interleave, so at every depth the model picks between detail and breadth. In DeepSeek's own tech report, V4-Pro uses 27% of the inference FLOPs and 10% of the KV cache of V3.2 at one million tokens. Flash drops to 10% and 7%. Those numbers are why the price sheet works.
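The percentages are easier to compare as reduction factors. The sketch below only inverts the fractions quoted from DeepSeek's tech report, relative to V3.2 at one million tokens; the names `RELATIVE_TO_V32` and `reduction_factor` are made up for illustration, and nothing here models the attention layers themselves.

```python
# Fractions of V3.2's cost at a 1M-token context, as quoted in the article.
RELATIVE_TO_V32 = {
    "V4-Pro": {"flops": 0.27, "kv_cache": 0.10},
    "V4-Flash": {"flops": 0.10, "kv_cache": 0.07},
}


def reduction_factor(fraction):
    """Turn 'uses X of the baseline' into 'is 1/X times smaller'."""
    return 1.0 / fraction


for name, costs in RELATIVE_TO_V32.items():
    flops = reduction_factor(costs["flops"])
    kv = reduction_factor(costs["kv_cache"])
    print(f"{name}: {flops:.1f}x fewer FLOPs, {kv:.1f}x smaller KV cache")
# V4-Pro: 3.7x fewer FLOPs, 10.0x smaller KV cache
# V4-Flash: 10.0x fewer FLOPs, 14.3x smaller KV cache
```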

On benchmarks, the V4-Pro Max configuration posts a 3,206 Codeforces rating, which DeepSeek's paper claims ranks 23rd among human competitors. On LiveCodeBench the company reports 93.5%, above the Opus 4.6 and Gemini 3.1 Pro scores in DeepSeek's own comparison table. DeepSeek also volunteers a gap estimate: V4-Pro falls "marginally short" of GPT-5.4 and Gemini 3.1-Pro on general reasoning, trailing state-of-the-art by three to six months. That candor is unusual. It is also cheaper than being embarrassed by independent evaluators, whose results are expected in the week ahead.

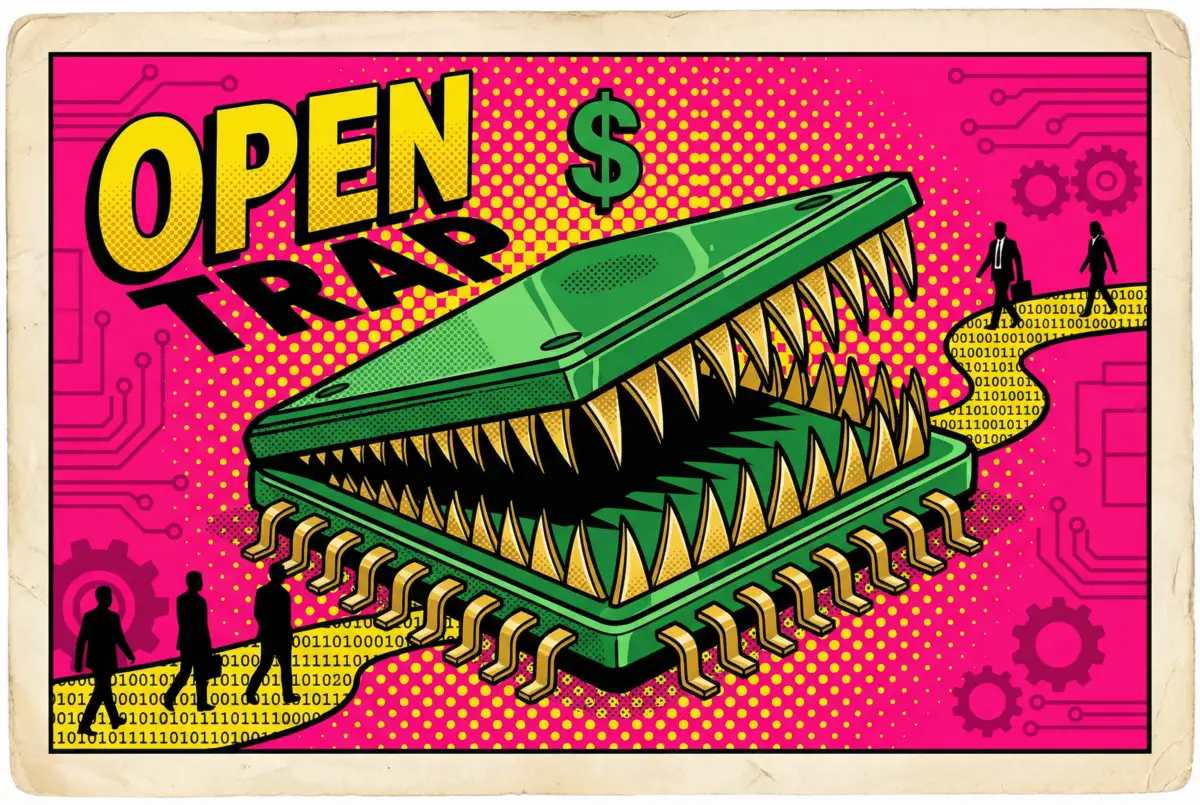

The price sheet isn't the product

Look at the numbers from a Western buyer's seat. Claude Opus 4.7 charges $5 input and $25 output per million tokens. GPT-5.5 charges $5 and $30. V4-Pro undercuts both by 7 to 9 times on output, and V4-Flash undercuts even GPT-5.4 Nano on input, per Simon Willison's comparison table pulled from public pricing pages.

Output is where the bill lives. A production agent writing code, summarizing documents, or running multi-turn chains burns output tokens constantly. An eightfold cut on output pricing changes the build-versus-buy math for whole product categories. It does not guarantee identical quality. At this price, quality parity is not the deciding question. Integration and latency are.
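The output-price arithmetic can be checked with a short sketch. The per-million-token prices are the ones quoted above; the workload of 2 million input tokens and 1 million output tokens is a made-up example, and `job_cost` and `output_ratio` are illustrative helper names.

```python
# USD per million tokens as (input, output), from the figures in the article.
PRICES = {
    "V4-Pro": (1.74, 3.48),
    "V4-Flash": (0.14, 0.28),
    "Claude Opus 4.7": (5.00, 25.00),
    "GPT-5.5": (5.00, 30.00),
}


def job_cost(model, input_tokens, output_tokens):
    """Dollar cost of one workload at the listed per-million-token prices."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def output_ratio(expensive, cheap):
    """How many times more the first model charges for output tokens."""
    return PRICES[expensive][1] / PRICES[cheap][1]


# Hypothetical workload: 2M input tokens, 1M output tokens.
for model in PRICES:
    print(f"{model}: ${job_cost(model, 2_000_000, 1_000_000):.2f}")

print(f"Opus 4.7 vs V4-Pro on output: {output_ratio('Claude Opus 4.7', 'V4-Pro'):.1f}x")
print(f"GPT-5.5 vs V4-Pro on output: {output_ratio('GPT-5.5', 'V4-Pro'):.1f}x")
```

On the listed prices the output ratios come to about 7.2 and 8.6, which is where the "7 to 9 times" range comes from. The gap on a whole job is smaller than the output ratio alone, because input pricing differs by less than threefold.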

DeepSeek also made the V4 API wire-compatible with both OpenAI's ChatCompletions format and Anthropic's API format. DeepSeek publishes Claude Code setup instructions and says V4 is tuned for tools such as Claude Code and OpenClaw. The switching tax, for teams already on those tools, is close to zero. That leaves Nvidia's customers in a cornered position when they explain next year's cap-ex to the CFO.
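What wire compatibility means in practice is that the request body keeps the ChatCompletions shape and only the endpoint and model name change. The sketch below builds such a request with Python's standard library and does not send it. The base URL and the model identifier are placeholders chosen for illustration, not values taken from DeepSeek's documentation, so check the provider's docs before using anything like this.

```python
import json
import urllib.request

BASE_URL = "https://api.example.com/v1"  # hypothetical placeholder endpoint
MODEL_ID = "deepseek-v4-pro"             # hypothetical model identifier


def build_chat_request(prompt, api_key, base_url=BASE_URL, model=MODEL_ID):
    """Build (but do not send) a ChatCompletions-style POST request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("Summarize this changelog.", api_key="sk-placeholder")
print(req.get_method(), req.full_url)
print(json.loads(req.data)["messages"][0]["role"])
```

A team already calling an OpenAI-style endpoint would, under these assumptions, change only `base_url`, `model`, and the API key; the message structure stays as it is.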

The Ascend bet

The chip question decides whether this release is a tremor or a tectonic event. DeepSeek did not disclose the hardware used to train V4. US officials have accused the company of using banned Nvidia Blackwell chips at a data center in Inner Mongolia, and on Thursday White House official Michael Kratsios accused Chinese entities of "industrial-scale distillation" of US AI models. Beijing called the claim baseless.

What DeepSeek did disclose is the inference story. The technical report describes custom kernels adapted to both Nvidia and Huawei chips. Huawei said its full Ascend supernode line now supports V4. Cambricon also announced compatibility on Friday. Shares of Semiconductor Manufacturing International Corp. jumped as much as 9.4% in Hong Kong, and Hua Hong Semiconductor surged 13%. Domestic chip buyers read the release the way the government wanted them to: as permission.

That is the emboldened half of the trade. The other half is the anxious cohort. Zhipu slid 8%. MiniMax dropped the same. Manycore Tech fell 9%. Every Chinese lab that sells a foundation model is now competing with a free, frontier-adjacent one that ships on the national chip stack. Their moat has holes in it.

Who wins, who loses, what breaks

Winners: Huawei, Cambricon, every fab tied into the Ascend supply chain. Enterprise buyers shopping for inference at scale. Any team that was waiting for an open-weight frontier model with a million-token context before committing to a long-horizon agent. Nvidia's short sellers, briefly.

Losers: Chinese foundation-model peers still trying to charge for API access. Margin assumptions at OpenAI and Anthropic in commodity workloads, though not at the top of their stack. Western labs that still treat open-weight releases as marketing exercises.

What breaks is the assumption that frontier AI requires an American chip underneath it. V4 does not prove the Chinese stack can host every workload. It proves it can host one, at the frontier tier, at a price that moves purchase orders. That is harder to dismiss with a valuation argument.

The clock that matters

Preview releases can misfire. The Next Web flagged roughly a week as the window for outside scrutiny, and if the self-reported scores crack under it, the trade unwinds. R1's claims held up. V4 has more surfaces to defend and a rougher audience to face.

Watch one clock more than the rest. Huawei said the Ascend 950PR supernodes ship at scale in the second half of this year. DeepSeek said the V4-Pro price "will drop significantly" once that happens. If the company holds to that timeline, the chart you study in December shows two price sheets moving apart, not one. The supply-chain bet already landed. Now it has to clear. Or not.

Frequently Asked Questions

How much cheaper is DeepSeek V4 compared to OpenAI and Anthropic?

V4-Pro charges $1.74 per million input tokens and $3.48 per million output tokens. Claude Opus 4.7 charges $5 and $25; GPT-5.5 charges $5 and $30. V4-Pro is roughly 7 to 9 times cheaper on output. V4-Flash at $0.14 input is cheaper than GPT-5.4 Nano.

What chips did DeepSeek use to train V4?

DeepSeek did not disclose the training hardware. Huawei confirmed its Ascend 950 supernode supports V4 for inference, and the technical report describes custom kernels for both Nvidia and Huawei chips. US officials have previously accused DeepSeek of using banned Nvidia Blackwell processors at an Inner Mongolia data center; Beijing calls the claim baseless.

Is DeepSeek V4 actually competitive with frontier US models?

DeepSeek's own benchmarks show V4-Pro leading on coding and math, including a 3,206 Codeforces rating and 93.5% on LiveCodeBench. The company concedes V4 trails GPT-5.4 and Gemini 3.1-Pro by three to six months on general reasoning. Independent evaluation is expected within about a week.

What's the significance of the Huawei partnership?

DeepSeek worked with Huawei and Cambricon ahead of launch and did not give Nvidia or AMD pre-launch optimization access. That reverses DeepSeek's earlier practice and makes V4 one of the clearest cases yet of a major open model shipping with domestic Chinese chip support as a day-one feature.

Why did Chinese chip stocks rally on the news?

SMIC jumped as much as 9.4% and Hua Hong Semiconductor surged 13% in Hong Kong trading. Investors read V4's same-day Huawei compatibility as validation of China's domestic AI hardware stack. Meanwhile, Chinese model-provider peers Zhipu and MiniMax each fell around 8%.
