OpenAI's new image generator transforms anything into Studio Ghibli's iconic style. Users raced to recreate everything from wedding photos to historical tragedies. The results look disturbingly authentic.

The feature sparked an instant viral craze. Pet owners morphed their cats into anime stars. Couples reimagined their portraits with Ghibli's signature warmth. Then things got weird. Users started Ghibli-fying the Twin Towers on 9/11 and JFK's assassination.

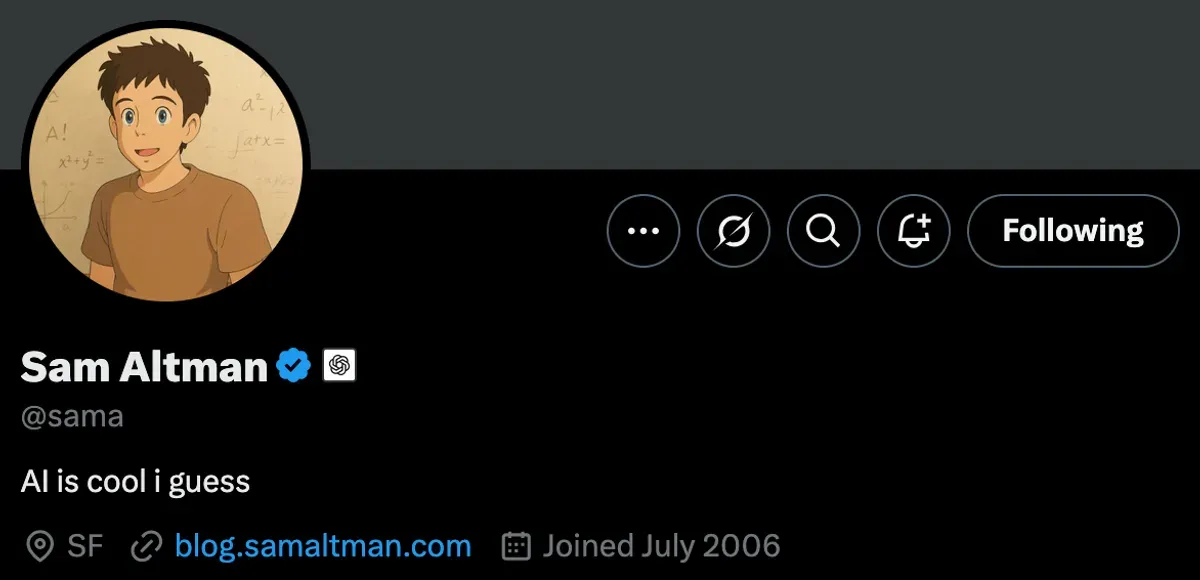

Even OpenAI's CEO Sam Altman joined the party. He swapped his profile picture for a Ghibli version and encouraged followers to make more. The irony? Studio Ghibli co-founder Hayao Miyazaki once called an AI-generated animation he was shown "an insult to life itself."

OpenAI claims its guidelines prevent copying living artists' styles. Yet Miyazaki remains very much alive. The company's workaround? It allows "broader studio styles" while blocking the styles of individual living artists.

Legal experts are watching closely. Copyright law doesn't protect artistic style, but OpenAI's perfect Ghibli mimicry suggests extensive training on the studio's work. Similar issues fuel The New York Times' ongoing lawsuit against the company.

Users keep pushing boundaries. They've recreated everything from Hitler in Paris to Mark Zuckerberg's congressional testimony. OpenAI's response? A swift policy change blocking direct requests for public figures. The platform now refuses to generate images of celebrities or cartoon characters.

The trend expanded beyond Ghibli. Users discovered the tool could copy Rick & Morty, The Simpsons, and other iconic styles. None of these studios have commented on their work being transformed without permission.

The controversy highlights a deeper problem. Tech companies rush to copy artistic styles without asking permission. They call it "innovation." Artists call it theft.

Consider the numbers. OpenAI's image generator processed over 10 million requests in its first week. Each request potentially copied someone's artistic style. The company pockets the profits. Artists get nothing.

OpenAI's legal defense? The company claims AI "transforms" art into something new. Critics argue it just remixes existing work. The distinction matters. Copyright law protects against copying. It doesn't stop "transformation."

Meanwhile, smaller studios watch nervously. If OpenAI can copy Ghibli, what stops it from targeting independent artists? Some creators already report seeing their styles pop up in AI-generated art.

The timing couldn't be worse for OpenAI. They face mounting pressure over copyright issues. The New York Times lawsuit threatens their entire training model. Congress debates AI regulation. Now they've angered one of animation's most respected studios.

The company's response follows a familiar pattern. Launch first, apologize later. Block the most controversial uses. Hope everyone forgets. But this time might be different. Studio Ghibli commands massive respect in the art world. Their objections carry weight.

The incident exposes AI companies' core strategy: Move fast, copy art, and let the lawyers sort it out. It worked for tech giants during the social media boom. Whether it works for AI remains unclear.

Why this matters:

- OpenAI built a tool that copies art styles with unprecedented accuracy - then acted shocked when users did exactly that

- The company's distinction between "individual" and "studio" styles reveals a desperate attempt to dodge copyright issues

Implicator
