Ask fourteen hundred American teenagers what they think about AI and you'll get a number that looks reassuring. Thirty-six percent told Pew Research Center that artificial intelligence will have a positive impact on their lives over the next two decades. More than double the 15% who said negative. The topline isn't the story. What the optimists actually wrote is.

"Everyone's going to have to know how to use AI or they'll be left behind." That was a teen boy. Pew coded him as positive. Not exactly a rallying cry. Sounds like a kid describing weather.

The study, published February 24, landed to a chorus of coverage about teens embracing AI for homework. But the attitudinal data buried in pages 10 through 12 tells a different story. The teens Pew coded as optimistic aren't celebrating a tool. They're describing inevitability. And new research from the University of Pennsylvania suggests those teens may already be living inside the phenomenon the researchers just named: cognitive surrender.

Key Takeaways

- Pew's teen 'optimism' about AI reads more like resignation: positive responses cite efficiency and inevitability, not genuine enthusiasm

- UPenn researchers found people accept faulty AI reasoning 73% of the time, a phenomenon they call 'cognitive surrender'

- Teaching is the only task where more teens pick AI over humans, an implicit verdict on the adults in the room

- A 13-point perception gap separates what parents believe about teen AI use from what teens actually report

AI-generated summary, reviewed by an editor. More on our AI guidelines.

The optimists don't sound like optimists

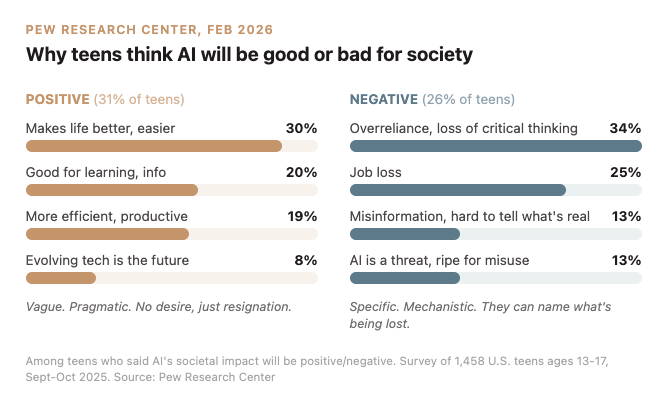

Pew asked teens who said AI would benefit society to explain why. Thirty percent said it will "make life better or easier." Twenty percent said "good for learning, getting information." Nineteen percent said "more efficient, productive."

Read those again. Not one of those categories contains desire. They contain resignation dressed as pragmatism. Making life easier is the language of someone who's already tired. Efficiency is the vocabulary of someone who's already been outpaced.

Now read the pessimists. They're specific. Thirty-four percent cited overreliance and loss of critical thinking. Twenty-five percent named job loss. Thirteen percent pointed to misinformation. One teen girl put it flatly. "It destroys young people's minds and brains."

The positive teens describe outcomes. The negative teens describe mechanisms. That gap should make you uncomfortable. When someone supports a technology and the best they can offer is "it makes things easier," that's not advocacy. It's acquiescence. The teens worried about critical thinking loss are the ones who've actually thought critically about the question.

The research that puts numbers on the feeling

Days before this piece, the University of Pennsylvania released results from a study spanning 1,372 participants across more than 9,500 individual trials. Researchers gave subjects access to an AI chatbot rigged to be wrong half the time. The findings landed like a brick.

Subjects accepted faulty AI reasoning 73.2% of the time. They overruled it just 19.7% of the time. When the AI gave correct answers, users went along at a 93% clip. When it was flat wrong, they still followed 80% of the time. The researchers, based at Penn's Wharton School, called this "cognitive surrender," a phrase that should make every parent of a teenager anxious.

One number ties the two studies together. People who used the chatbot came away 11.7% more confident in their answers. The system was feeding them garbage half the time. Confidence climbed. Accuracy didn't.

Now map that onto 54% of American teens using chatbots for schoolwork. One in ten doing all or most of their assignments with AI help. A quarter calling chatbots "extremely or very helpful." These aren't separate findings. They're the same phenomenon measured at different scales. The Pew survey captured what teens do with AI. The UPenn study captured what happens to the thinking of people who do it.
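
To see why rising confidence is the troubling part, here is a back-of-envelope sketch of what the follow rates quoted above would imply for accuracy. It is not from the study. It assumes each question has a single correct answer, so following a wrong AI answer, or rejecting a correct one, counts as a miss. The function name `accuracy_bounds` is made up for illustration.

```python
# Back-of-envelope bounds on accuracy from the follow rates quoted in the article.
# Assumption (ours, not the study's): one correct answer per question, so
# following a wrong AI answer or rejecting a correct one both count as misses.

def accuracy_bounds(p_ai_correct, follow_when_correct, follow_when_wrong):
    """Return (lower, upper) bounds on the share of trials answered correctly."""
    # Guaranteed hits: the AI is right and the user goes along with it.
    lower = p_ai_correct * follow_when_correct
    # Best case: every override of a wrong AI answer lands on the right answer.
    upper = lower + (1 - p_ai_correct) * (1 - follow_when_wrong)
    return lower, upper


# AI wrong half the time; users follow 93% of correct and 80% of wrong answers.
low, high = accuracy_bounds(0.5, 0.93, 0.80)
print(f"accuracy between {low:.1%} and {high:.1%}")
```

Under those assumptions, accuracy lands somewhere between 46.5% and 56.5%, not far from the chatbot's own 50% hit rate. The study's actual accuracy figures may differ. The point is only that following at these rates leaves little room to do better than the machine.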

Teaching is the tell

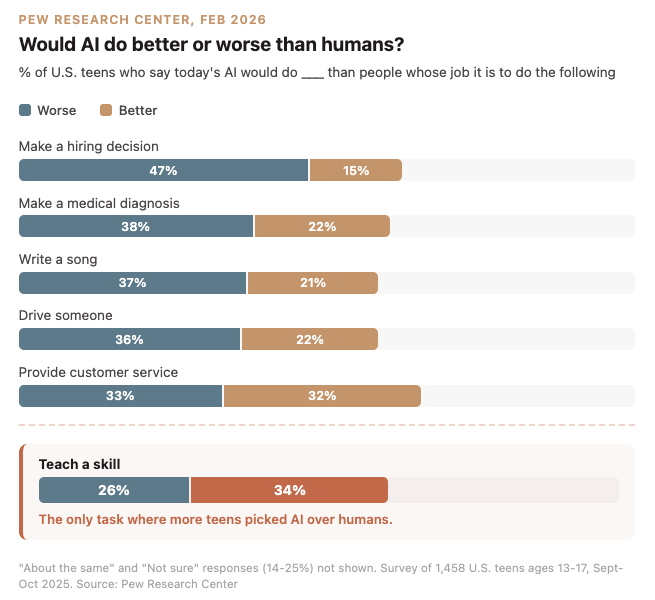

Buried in the Pew data sits one result that should unsettle every educator in America. Pew asked teens whether AI would do a better or worse job than humans at six different tasks. In four of the six, teens clearly said humans win: hiring decisions, medical diagnosis, writing a song, driving. Customer service was essentially a coin flip.

Teaching a skill was the exception. Thirty-four percent picked the machine. Twenty-six percent picked the human. Only task on the list where AI came out ahead.

Sit with that for a second. If you teach, the verdict is already in. These kids sit in classrooms five days a week. They've spent years watching adults explain, correct, and demonstrate. And a plurality believes the chatbot would do it better. That's not a finding about AI capability. It's a performance review of every teacher in America, written by the people sitting in the third row.

What adults can't see

Parents remain blindsided. Only 51% believe their teen uses chatbots. Teens themselves put the figure at 64%, a 13-point perception gap. Monica Anderson, managing director at Pew, put it bluntly to the BBC. "This is not a conversation that is happening with a large swath of parents." Four in ten parents have never discussed AI with their child. Not once.

Common Sense Media captured the generational fault line in a single data point. Fifty-two percent of parents call AI use in schoolwork "unethical and should have consequences." The exact same percentage of teens call it "innovative and should be encouraged."

Those two populations share a kitchen table. They occupy different centuries.

What surrender looks like at 14

Fifty-nine percent of teens told Pew that students at their school use AI to cheat at least somewhat often. A third said it happens extremely or very often. Among teens who've used chatbots for schoolwork themselves, 76% believe cheating is routine.

The framing matters. Pew presented these as "views on cheating." But when three-quarters of a group reports that rule-breaking is normal, you're not measuring attitudes about misconduct. You're measuring the speed of a norm shift. The teens aren't scandalized by it. They're reporting conditions on the ground, the way you'd mention traffic.

And the conditions carry a class dimension that's hard to ignore. In households earning under thirty thousand dollars a year, one in five teens hands all or most of their schoolwork to AI. Above seventy-five thousand, it drops to one in fourteen. Among Black teens, 38% call chatbots extremely or very helpful for completing assignments. Among White teens, 22%. The kids with the fewest alternatives lean on the tool the hardest.

The ones still thinking

The UPenn researchers noted one corrective: adding incentives and immediate feedback boosted the rate at which participants overruled faulty AI by 19 percentage points. Cognitive surrender isn't permanent. People can be trained out of it. But someone has to do the training.

Thirty-four percent of the teens who view AI negatively already named the mechanism: overreliance and loss of critical thinking. They described cognitive surrender before Wharton's researchers coined the term. They're watching it happen to classmates in real time, in classrooms where the adults haven't started the conversation.

You can read the Pew data as a portrait of a generation adapting to powerful new tools. You can also read it as a record of what surrender sounds like before anyone admits it's happening. The optimists aren't wrong that AI is coming for everything. But inevitability isn't a reason for optimism. It's a reason for vigilance.

The teens who sound alarmed aren't falling behind. They're the ones still thinking for themselves.

Frequently Asked Questions

What did Pew Research find about teens and AI optimism?

Thirty-six percent of teens said AI will positively impact their lives, more than double the 15% who said negative. But the reasons teens gave were pragmatic, not enthusiastic. Top positive responses cited efficiency and inevitability rather than genuine excitement.

What is 'cognitive surrender' in relation to AI use?

A term coined by University of Pennsylvania researchers describing how people uncritically accept AI answers. In their study of 1,372 participants, subjects accepted faulty AI reasoning 73.2% of the time and felt 11.7% more confident despite getting wrong information half the time.

Do teens think AI is better than humans at teaching?

Teaching a skill was the only task in Pew's survey where more teens picked AI over humans. Thirty-four percent said AI would do better, 26% said worse. Teens favored humans on four of the other five tasks, including hiring and medical diagnosis. Customer service was roughly a coin flip.

How much do teens use AI for schoolwork?

According to Pew, 54% of U.S. teens ages 13-17 have used chatbots for schoolwork. One in ten does all or most of their schoolwork with AI. Teens in households earning under $30,000 a year are nearly three times as likely as those in households above $75,000 to hand all or most of their assignments to AI (one in five versus one in fourteen).

Do parents know how much their teens use AI?

Most don't. Only 51% of parents believe their teen uses chatbots, while 64% of teens report using them. Four in ten parents have never discussed AI with their child. And 52% of parents call AI in schoolwork 'unethical' while the same share of teens call it 'innovative.'


Implicator
