Every outlet covering Pew Research Center's teen AI survey on Tuesday led with the same number. Fifty-four percent of American teenagers use AI chatbots for schoolwork. The New York Times ran it. CBS wrote it up. Forbes quoted it by paragraph two.

That number is real. It tells you almost nothing.

Pew buried the more interesting number in the demographic breakdowns. Families earning under $30,000 told a different story. One in five of their teens said AI does all or most of their schoolwork, nearly triple the seven percent in homes above $75,000. Three to one.

The adults arguing about whether teens use AI to cheat are having the wrong conversation. The question isn't whether kids cut corners with ChatGPT. They do. Fifty-nine percent say cheating with AI happens regularly at their schools, and the teens who use chatbots themselves are the most likely to report it. But cheating has been a feature of education since education existed. What hasn't existed before is a tool that functions as a substitute teacher, tutor, and research assistant for kids whose families can't afford the human versions.

That's what Pew's data actually shows. AI has become scaffolding for American education. And like most scaffolding in this country, its quality depends entirely on what you can already afford.

The Breakdown

- Low-income teens (under $30K) are nearly three times as likely as peers in homes above $75K to use AI for all or most schoolwork

- 59% of teens say AI cheating happens regularly, but the income gap matters more

- Stanford research: students who lose AI access perform worse than those who never had it

- 42% of parents haven't discussed AI use with their teens

The number everyone missed

Pew surveyed 1,458 teens and their parents from September to October 2025. The headline findings are familiar. A majority of teens use chatbots. They search for information (57%), get homework help (54%), and mess around with the tools for fun (47%). About one in ten use AI for all or most of their schoolwork. Roughly three in ten log in daily.

None of that is surprising in February 2026. Break the data out by race and income, though, and it starts to sting.

Start with race. Black and Hispanic teens hit 60% chatbot use. White teens, 49%. Nobody led with that. But emotional support is where things split. One in five Black teens said they've gone to a chatbot when they needed advice or comfort. For white and Hispanic teens, about half that.

Then there's income. Teens from the poorest households are nearly three times as likely as their wealthiest peers to report that AI does most or all of their schoolwork. Not some. Not a little. Most or all.

Higher-income parents are more likely to have talked to their kids about AI use, 56% compared to 43% for the lowest earners. They're also less comfortable with their teens using chatbots for emotional support. That discomfort tells you something. These parents have other options for their kids.

When AI replaces what money buys

Wealthy families have always purchased educational scaffolding. Tutors. SAT prep courses. Writing coaches who charge $150 an hour. College counselors. These aren't luxuries in the American education system. They're the infrastructure that separates a 3.5 GPA from early admission.

Low-income families can't buy that scaffolding. Now their kids have found something that sort of works instead. ChatGPT explains algebra at 11 p.m. It helps structure a research paper when no parent is home to proofread. It summarizes a textbook chapter when the school library closed at three.

The Pew numbers show the pattern starkly. Teens from lower-income households aren't dabbling with AI. They're leaning on it. Hard. The 20% figure for all-or-most schoolwork has nothing to do with cheating. What you're looking at is dependency.

And the adults in these teens' lives are less equipped to guide that relationship. Only 43% of parents in the lowest income bracket have discussed AI with their teens. The perception gap runs the same direction. Sixty-four percent of teens said they use chatbots. Their parents guessed 51%. That 13-point gap tells you who's paying attention. About a third of parents had no idea either way.

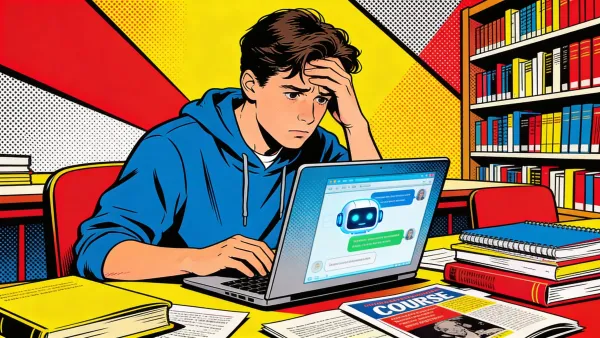

A Stanford education professor found something that should make every school board anxious. Guilherme Lichand ran an experiment with middle school students. Kids who'd been given AI assistance and then had it taken away performed worse on subsequent tasks than students who never had access at all. "These kids started believing less in themselves," Lichand told the Washington Post. A Brookings Institution report reached similar conclusions about AI dependence damaging student confidence and cognitive development.

If you're a teen from a household earning under $30,000, and AI has become your primary study partner, that dependency isn't theoretical. It's your Tuesday night.

Optimism that should make you nervous

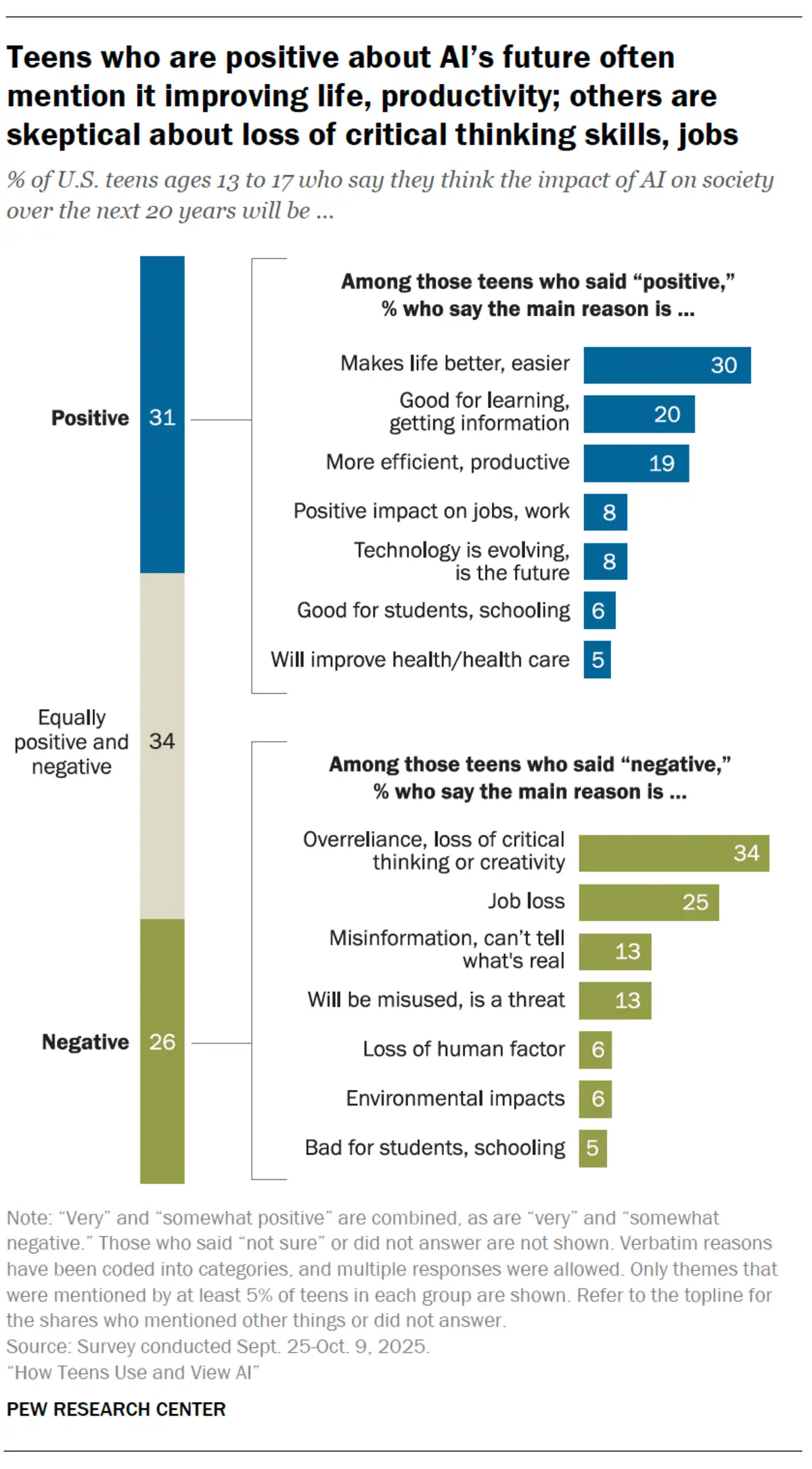

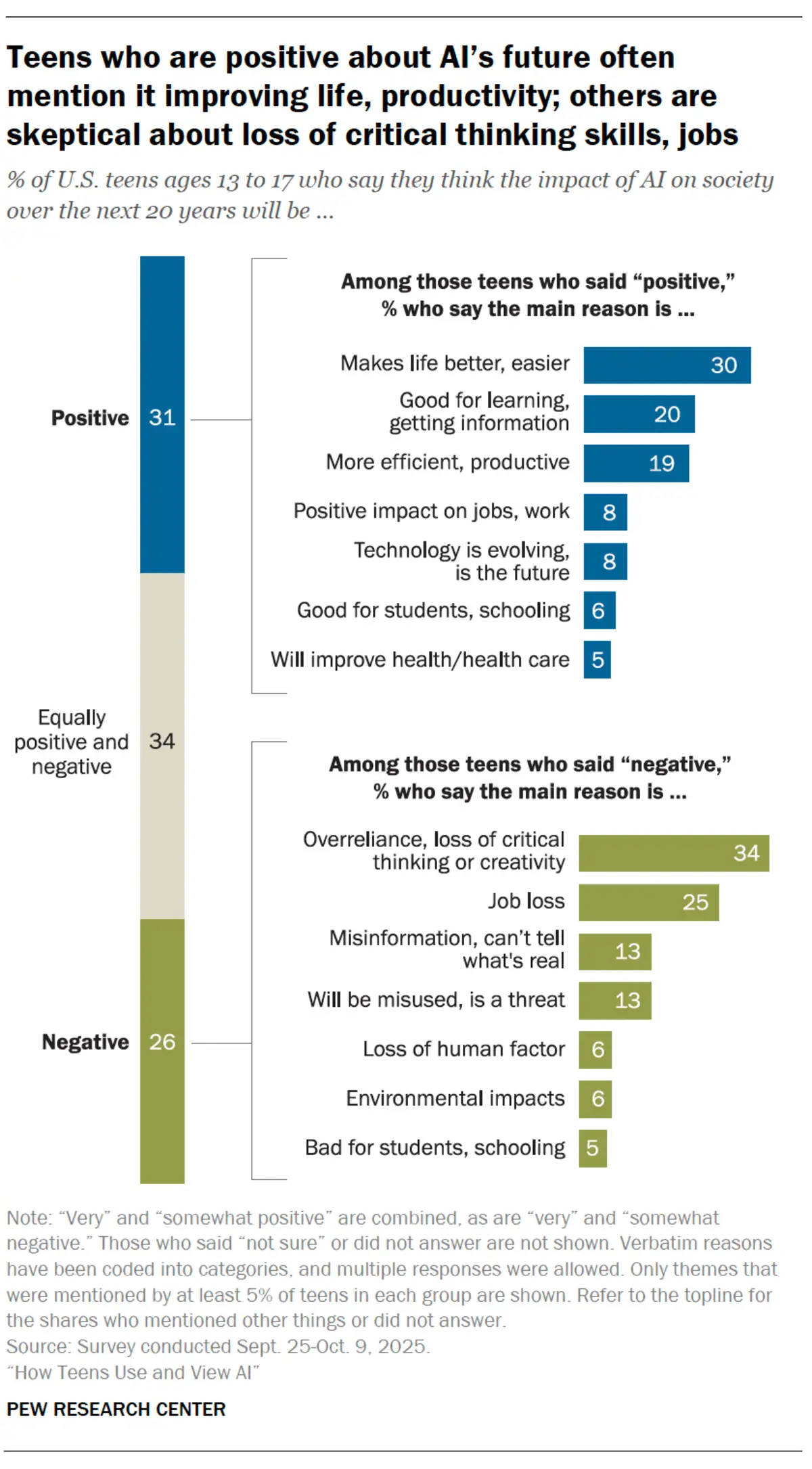

The data gets strange here. Teens lean on AI harder than adults and like it more. Thirty-six percent told Pew they expect the technology to benefit them personally over the next two decades. Only 15% see harm coming. Adults, by contrast, are spooked. Half told Pew in a separate survey they're "more concerned than excited" about AI's growing role in daily life.

Optimistic teens point to efficiency (30%), better learning (20%), and productivity (19%). "Everyone's going to have to know how to use AI or they'll be left behind," one teen boy told Pew researchers. The confidence reads like a teenager's natural certainty about tomorrow. It also reads like rationalization from a generation that doesn't remember school before the tools existed.

The skeptical teens sound sharper than most corporate boards. Among those expecting negative outcomes, 34% cited overreliance and loss of critical thinking. A quarter brought up job loss. One girl said people will grow afraid to create, that they "won't see a need for it anymore." A boy went further. "It's hard to tell what's real or AI online anymore." Most Fortune 500 CEOs still wave that concern away at earnings calls.

But the optimism lands differently when you hold it up against the dependency data. The teens most likely to view AI positively are often the ones using it most heavily. Heavy use correlates with lower income. So the teens who can least afford to have their critical thinking erode are the most exposed to exactly that outcome.

Put a face on the 20% and you get a fifteen-year-old at a kitchen table at nine o'clock, with ChatGPT explaining her chemistry homework because nobody else will. She'll tell you AI has been good to her. She's right, in the short term. The chatbot doesn't get impatient. It doesn't charge by the hour. What she can't see yet is what Lichand's research already showed, that the help erodes the muscle it's supposed to build. By the time she notices, the exam is tomorrow and the chatbot isn't allowed in the room.

The education establishment should feel cornered by these numbers. The technology companies building these tools aren't designing them for low-income students doing all their homework with a chatbot. They're designing them for productivity workers and creative professionals. Teenagers in under-resourced schools are repurposing corporate software as educational infrastructure, and nobody planned for that.

Who loses, specifically

Schools won't adapt to this quickly. They're still debating whether to ban chatbots or integrate them, crafting AI policies on the fly while students have already moved past the question. Seventy percent of teachers worry AI damages critical thinking, according to the Center for Democracy and Technology. But 85% of those same teachers use AI at work. The institutional response feels defensive, caught between principle and practice.

Teachers lose too, in a less obvious way. When a student submits AI-polished work, the teacher has no signal for where that student actually is. The feedback loop breaks. A teacher in a well-funded district with 22 students might catch the difference. She knows their writing voices. Scale that to 35 kids and a building where the textbooks predate the pandemic. The AI work blends in. And when district administrators point to rising average scores as evidence that AI integration works, nobody asks which students actually learned and which ones just had a better chatbot.

The kids who'll pay the price are the ones whose scaffolding is entirely digital. If you have a tutor who teaches you how to work through a math problem, ChatGPT is a supplement. When it's the only help in the house, crutch is the honest word for it. Crutches get confiscated on test day. The gap that opens won't register as a tech problem. It'll look like every other achievement gap. Same ZIP codes. Again.

Khan Academy's Sal Khan told the Washington Post that schools should assume students cheat with AI on homework. His solution makes sense on its face. Do writing assessments in class. Quiz students on at-home assignments to prove they learned the material. But that's a response calibrated to cheating. The kids doing all their schoolwork by chatbot aren't trying to game anything. The system they're leaning on is the only one that showed up for them. The policy conversation hasn't caught up to that distinction yet. Their parents probably don't know how heavily they use it. Most haven't had the conversation. They won't see the damage until grades collapse or confidence craters.

The scaffolding test

Pew gave us a survey. What we actually need is a stress test. What happens when AI goes away? When the chatbot goes down, the subscription lapses, or the exam requires no devices?

Lichand's research suggests the answer is ugly. Students who build their academic confidence on AI assistance don't just struggle without it. They lose belief in their own capacity. The scaffolding doesn't build toward independence. It builds toward more scaffolding.

For the 20% of low-income teens doing most of their schoolwork with AI, that's not a technology question. It's a question American education has dodged for decades. The interface is new. The failure isn't.

Who gets the support they need to actually learn? And who gets a substitute that looks like support but leaves them weaker?

That 3-to-1 gap won't close by talking about cheating. The word everyone keeps avoiding is class.

Frequently Asked Questions

How many teens use AI chatbots for schoolwork?

Fifty-four percent of U.S. teens ages 13-17 have used chatbots like ChatGPT or Copilot for schoolwork, according to Pew Research Center's survey of 1,458 teens conducted in fall 2025. About 10% use AI for all or most of their assignments.

Why do low-income teens depend more heavily on AI for school?

Teens from households earning under $30,000 are nearly three times as likely to use AI for all or most of their schoolwork (20% vs. 7% in homes above $75,000). These families can't afford tutors, writing coaches, or SAT prep, so AI fills the gap as substitute educational support.

What happens when students who depend on AI lose access?

Stanford professor Guilherme Lichand found that middle school students given AI assistance and then cut off performed worse than peers who never had access. Students lost confidence in their own abilities. A Brookings Institution report reached similar conclusions.

Do parents know how much their teens use AI?

There's a 13-point perception gap. While 64% of teens say they use chatbots, only 51% of parents think their teen does. About 42% of parents have never discussed AI use with their teen, and the conversation gap is wider in lower-income households.
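
For readers who want to check the arithmetic behind the figures cited in these answers, here is a minimal Python sketch. It uses only the percentages reported above (20% vs. 7% for all-or-most schoolwork, 64% vs. 51% for the perception gap). The variable names are illustrative, and nothing here draws on Pew's underlying data files.

```python
# Percentages as reported in the article (not raw Pew microdata).
low_income_heavy_use = 20     # % of teens in households under $30,000
high_income_heavy_use = 7     # % of teens in households above $75,000

teens_reporting_use = 64      # % of teens who say they use chatbots
parents_estimating_use = 51   # % of parents who think their teen does

# Ratio of heavy-use rates between the two income groups.
income_ratio = low_income_heavy_use / high_income_heavy_use

# Perception gap in percentage points (a difference, not a ratio).
perception_gap_points = teens_reporting_use - parents_estimating_use

print(f"Income ratio: {income_ratio:.2f}x")
print(f"Perception gap: {perception_gap_points} points")
```

The ratio comes out just under three, which is why "nearly triple" is the more precise phrasing than a flat 3x. The perception gap is a difference in percentage points, not a ratio.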

How do teens view AI's long-term impact?

Teens are more optimistic than adults. Thirty-six percent expect AI to benefit them personally over 20 years, versus only 15% expecting harm. Among teens who expect negative outcomes, the top concerns are overreliance and loss of critical thinking (34%) and job loss (25%).

Implicator
