On Wednesday, Google announced that its Gemini chatbot now supports "notebooks," a feature for organizing chats, files, and custom instructions into dedicated project spaces. The Verge's Jay Peters noticed the obvious: "Notebooks sound a lot like ChatGPT's Projects feature, which launched in 2024."

On the surface, he's right. Dig below it, and you find something different.

ChatGPT Projects lets you group conversations. Claude's Projects does roughly the same thing. Both are organizational features bolted onto chat interfaces. Google's version does something neither competitor can match: it syncs every notebook bidirectionally with NotebookLM, Google's document-grounded research tool. Sources you add in Gemini appear in NotebookLM. Conversations from Gemini become citable sources inside NotebookLM. Two products, one knowledge layer.

That distinction marks a structural shift in how AI companies think about value. The chat thread is dying as the basic unit of AI work. The persistent knowledge base is replacing it. And Google is the only company positioned to own that transition, because Google already owns the files.

Key Takeaways

- Google's new Gemini notebooks sync bidirectionally with NotebookLM, creating a unified knowledge layer no competitor can replicate.

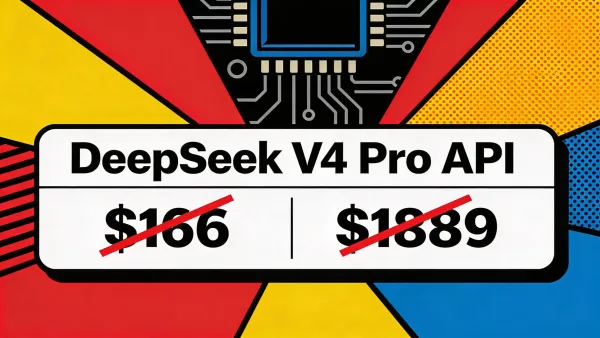

- Free users get 100 notebooks with 50 sources each; Ultra subscribers get 500 notebooks with up to 600 sources each, a ceiling of 300,000 sources per account.

- The competitive advantage isn't the feature itself but Google's control of the underlying data layer: Drive, Docs, Gmail, the entire Workspace orbit.

- Every major AI company now offers project workspaces, but only Google owns both the chatbot and the file infrastructure underneath it.

AI-generated summary, reviewed by an editor. More on our AI guidelines.

The chat thread was always disposable

For three years, AI companies built their products around conversation. You open ChatGPT, type a question, get a response. Close the tab. The interaction evaporates unless you deliberately save it. That model borrowed from messaging apps, and it inherited their worst quality: conversations are ephemeral by design.

OpenAI recognized the problem in 2024 when it launched Projects. Users could pin files and custom instructions to a workspace, giving the model persistent context across sessions. Anthropic built something similar into Claude. Both fixed the immediate pain of context amnesia. Neither fixed the deeper structural weakness underneath.

The deeper weakness: chat-based AI treats your knowledge as disposable. Outside a project, every session still starts cold. You re-upload the same PDFs, re-explain the same constraints, re-establish the same preferences. OpenAI recently expanded Projects to pull sources from Slack channels and Google Drive folders, calling it "a living knowledge base." That's real progress. But it's progress built on top of someone else's infrastructure.

Google doesn't have that dependency. Google is the infrastructure.

When you create a Gemini notebook, you're pulling from Drive files, Google Docs, PDFs already living inside Google's ecosystem. The notebook doesn't just organize your AI conversations. It connects them to the same data layer where you already write, store, and share everything else. No API bridge required. No third-party connector. The plumbing is native.

NotebookLM changes the math

NotebookLM started as a side project from Google Labs. It looked minor. Upload a few PDFs, ask questions, get answers. But the product evolved into something more specific: a document-grounded research workspace where every claim cites its source, every response stays anchored to uploaded materials, and hallucinations drop sharply because answers are constrained to what is actually in the corpus.

That grounding mechanism is exactly what chatbots lack. ChatGPT will confidently fabricate a statistic. Claude will hedge with qualifiers. NotebookLM will point you to paragraph three of the report you uploaded yesterday. As XDA's Beatrice Manuel wrote: "Perplexity and ChatGPT treat documents as context for answers. NotebookLM treats them as a corpus to be structured and cross-referenced."

Now picture those two capabilities fused. You're researching a competitor in Gemini, pulling together web results, asking follow-up questions, sharpening your understanding across a dozen open tabs. One click sends that entire conversation into a notebook. That notebook syncs to NotebookLM, where the same material gets citation-level grounding, mind maps, infographics, and the audio overviews that turn your documents into something resembling a private podcast.

Two products feeding each other. The chatbot generates raw insight. The research tool structures and verifies it. Nobody else in the industry can offer that loop, because nobody else has both a mass-market AI chatbot and a dedicated document-grounding engine running on the same infrastructure.

Google's tier structure reveals how aggressively it is scaling this. Free users get 100 notebooks with 50 sources each. Google AI Ultra subscribers get 500 notebooks with 600 sources per notebook. That works out to a ceiling of 300,000 sources (500 × 600) tied to a single account. A corporate research team might process fewer sources in a year.
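The ceiling arithmetic is easy to check for every tier. The following is a minimal sketch that multiplies notebooks by sources per notebook, using the plan limits quoted in this article; the names `PLAN_LIMITS` and `max_sources` are illustrative assumptions and are not part of any Google API.

```python
# Plan limits as quoted in this article: (notebooks, sources per notebook).
# The dictionary and function names are hypothetical, for illustration only.
PLAN_LIMITS = {
    "Free": (100, 50),
    "Plus": (200, 100),
    "Pro": (500, 300),
    "Ultra": (500, 600),
}


def max_sources(plan: str) -> int:
    """Upper bound on sources per account: notebooks times sources per notebook."""
    notebooks, sources_per_notebook = PLAN_LIMITS[plan]
    return notebooks * sources_per_notebook


# Compute the ceiling for each tier and print it with thousands separators.
ceilings = {plan: max_sources(plan) for plan in PLAN_LIMITS}
for plan, ceiling in ceilings.items():
    print(f"{plan}: {ceiling:,} sources")
```

By this count the Ultra ceiling is 60 times the free tier's, which is the scale gap the tier structure is built around.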

The switching cost nobody is discussing

Every file you add to a Gemini notebook lives inside Google's ecosystem. Custom instructions, curated source collections, conversation threads, all of it compounds over time into something genuinely painful to abandon. You aren't just organizing your AI interactions. You're building a knowledge graph that only Google's products can read.

That should make OpenAI anxious. ChatGPT Projects creates similar gravity in theory. But OpenAI doesn't own your file storage, your email, your calendar, or your document editor. Google does. A Gemini notebook can pull directly from Drive. It can reference a Google Doc drafted last quarter. The roadmap all but guarantees Gmail threads, Calendar events, and the rest of the Workspace orbit will follow. The notebook becomes connective tissue between the AI and your entire professional life, and Google controls both ends.

This wasn't accidental. Android Authority had spotted Google testing a "Projects" feature inside Gemini back in December. By March, code references suggested the feature would be renamed to "Notebooks," tying it directly to NotebookLM. That name change wasn't cosmetic. It bound two products under a single metaphor, one that describes exactly the kind of persistent, accumulating workspace that builds lock-in by design.

And here's the part that stings: every competitor can copy the feature. None of them can copy the data layer underneath it. OpenAI can integrate with Google Drive through an API. Google doesn't need an API. The files are already home.

What Google solved by skipping

There's a quiet irony buried in this launch. Google still doesn't have a real notebook app. Not the AI kind. The actual pen-and-paper-replacement kind.

One Android Police writer spent a month migrating from Microsoft to Google and hit a wall at OneNote. Google Keep handles sticky notes. Google Docs handles manuscripts. Nothing handles the freeform, hierarchical, canvas-style thinking that OneNote nailed a decade ago. Google's ecosystem, he concluded, has a "massive missing middle."

Google's answer is to skip the traditional notebook entirely. Instead of competing with OneNote on formatting and freeform canvases, Google built an AI-native knowledge layer that treats "notebook" as a living, contextual workspace rather than a blank page.

The bet is bold, maybe too bold. It assumes the future of note-taking isn't freeform canvases and stylus input. It assumes people want their thinking tools and their AI tools to be the same thing. If Google is wrong, it just handed the "missing middle" to Microsoft and Apple for another decade. If Google is right, it made the traditional notebook app as obsolete as the filing cabinet.

What this forces you to decide

The AI industry just split into two architectures. One treats AI as a reasoning tool. You bring context, the model thinks, and the conversation ends when the window closes. Memory and projects exist, but they're features. Not substrate.

The other treats AI as a knowledge operating system. The chatbot and the research tool share a foundation. Documents, conversations, and instructions persist across products, accumulate over time, and compound in value the longer you stay.

Both architectures will produce capable AI. Only one makes it progressively harder to walk away. And if you're Anthropic or OpenAI, watching Google quietly wire its AI into the same infrastructure where billions of people already store their work, the word for what you're feeling is exposed.

If you're evaluating AI tools for your organization, the model benchmarks matter less than the question Google is now forcing: where does your institutional knowledge actually live? A company running on Google Workspace that starts loading research into Gemini notebooks is building an AI relationship that grows stickier with every uploaded file and saved conversation. Six months from now, switching to ChatGPT means abandoning that accumulated context. A year from now, it means rebuilding an entire knowledge infrastructure from scratch.

Whoever holds the knowledge layer holds the relationship. Google just made its argument that the knowledge layer isn't a feature you bolt on. It's the product itself.

The notebooks look modest. Organized chats, synced files, shared sources. But the infrastructure underneath them is anything but modest. That's the tell. Google didn't build a better chat organizer. It built the floor.

Frequently Asked Questions

What are Gemini notebooks?

Notebooks are organized project spaces inside Google's Gemini chatbot where you can group chats, files, and custom instructions by topic. They sync bidirectionally with NotebookLM, meaning sources added in either app appear in both automatically.

How do Gemini notebooks differ from ChatGPT Projects?

Both organize AI conversations by topic. The key difference is that Gemini notebooks sync with NotebookLM's document-grounding engine, creating a research-plus-chatbot loop. Google also controls the underlying file infrastructure, while OpenAI relies on third-party integrations.

How many notebooks can I create?

It depends on your Google AI plan. Free users get 100 notebooks with 50 sources each. Plus gets 200 notebooks with 100 sources, Pro gets 500 with 300, and Ultra gets 500 notebooks with up to 600 sources per notebook.

Who can access Gemini notebooks right now?

Google AI Ultra, Pro, and Plus subscribers can access notebooks on the web starting this week. Mobile access, European expansion, and free-tier availability will follow in the coming weeks.

Why does the NotebookLM integration matter strategically?

NotebookLM grounds AI responses in your uploaded documents with inline citations, reducing hallucinations. Combined with Gemini's conversational capabilities, it creates a loop where the chatbot generates insights and the research tool verifies them against your sources.

IMPLICATOR
