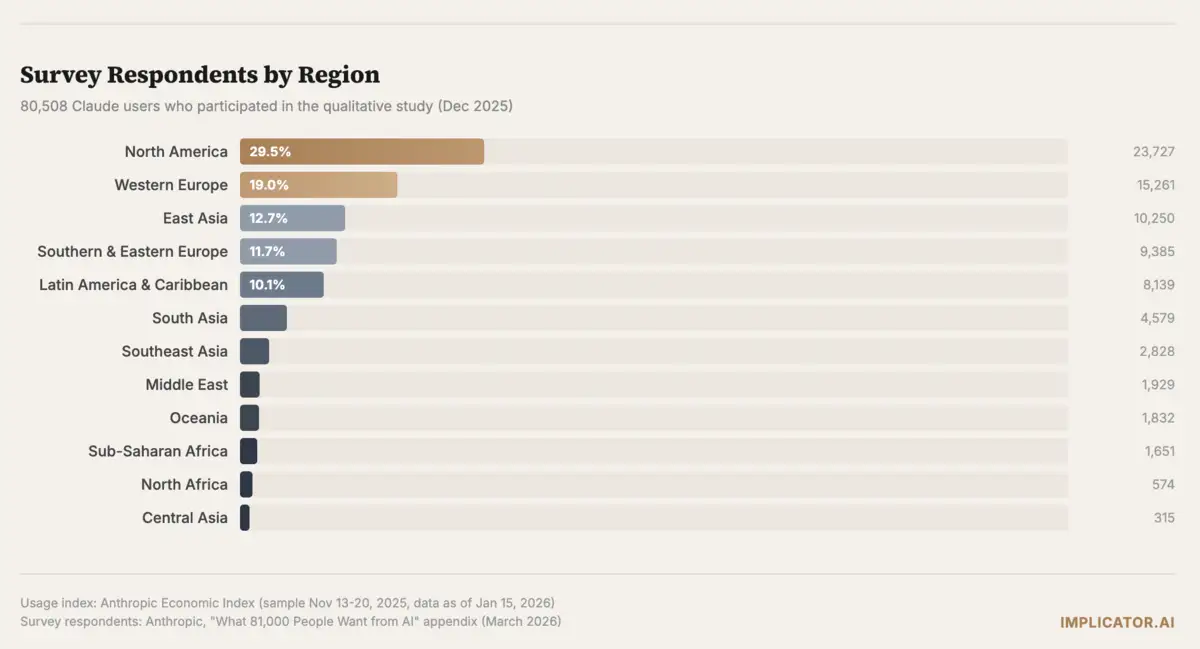

On Wednesday, Anthropic published what it calls the largest qualitative AI study ever conducted. Over one week in December, 80,508 Claude users across 159 countries and 70 languages sat down with Anthropic Interviewer, a version of Claude built to conduct adaptive one-on-one conversations about AI hopes and fears. Nineteen percent named professional excellence as their top desire. Researchers pushed respondents on why they wanted productivity gains. The answers kept drifting somewhere else: better lives outside of work.

"Using AI to automate emails became, in actuality, a desire to spend more time with family," the researchers wrote. A third of all visions collapsed into a single request: make room for the parts of life that modern work has crowded out.

Key Takeaways

- Among 80,508 Claude users, professional excellence was the most common AI wish at 19%, but follow-ups revealed that many actually wanted more personal time.

- Eighty-one percent reported concrete AI benefits, led by productivity gains at 32% and cognitive partnership at 17%.

- Decision-making was the only tension where negatives outweighed positives: 37% cited unreliability, while 22% praised AI judgment.

- Anthropic acknowledged selection bias, noting respondents were active Claude users from disproportionately wealthy, technical backgrounds.

The productivity mask

Anthropic Interviewer asked each participant a fixed set of questions, then adapted follow-ups based on answers. Claude-powered classifiers sorted every conversation into categories covering what people want, what scares them, and whether AI has delivered on any of it.

Nine clusters emerged from the central question: "If you could wave a magic wand, what would AI do for you?" After professional excellence at 19%, personal transformation scored 14%, life management 14%, time freedom 11%, financial independence 10%. Entrepreneurship, learning, societal transformation, and creative expression each pulled between 6% and 9%.
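As a quick sanity check on these figures, the short Python sketch below tallies the nine shares, using the individual values listed in the FAQ at the end of this article, and converts them into approximate respondent counts. The counts are illustrative only: the published percentages are rounded, so the true counts could differ by a few hundred, and the variable and function names are our own rather than anything from the study.

```python
# Shares of the nine wish clusters, in percent, as reported in this article.
WISH_SHARES = {
    "professional excellence": 19,
    "personal transformation": 14,
    "life management": 14,
    "time freedom": 11,
    "financial independence": 10,
    "entrepreneurship": 9,
    "societal transformation": 9,
    "learning": 8,
    "creative expression": 6,
}
TOTAL_RESPONDENTS = 80_508


def approximate_counts(shares, total):
    """Convert rounded percentage shares into approximate head counts."""
    return {name: round(total * pct / 100) for name, pct in shares.items()}


total_pct = sum(WISH_SHARES.values())
counts = approximate_counts(WISH_SHARES, TOTAL_RESPONDENTS)

if __name__ == "__main__":
    print(f"Shares sum to {total_pct}%")
    for name, n in counts.items():
        print(f"{name:<26} ~{n:,} respondents")
```

That the shares sum to exactly 100% suggests each conversation was assigned one primary wish, although the article does not state this outright.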

Read the labels and you see a productivity wish list. Dig into the follow-up answers and the picture shifts. A software engineer in Mexico who wanted AI to automate tasks explained what he actually meant. "With AI support I can now leave work on time to pick up my kids from school, feed them, and play with them." A freelancer in Japan wanted to "use less brain power on client problems" so she could "have time to read more books."

Anthropic grouped the underlying motivations into three bands. Roughly a third wanted AI to free up time, money, or mental bandwidth by offloading current burdens. A quarter wanted more fulfilling work: not less work, but better work. A fifth wanted to become someone better through learning, healing, or personal growth. Productivity was the language. Freedom was the ask.

One respondent from Germany captured the gap between the AI industry's pitch and user reality in a single line. "AI should be cleaning windows and emptying the dishwasher so I can paint and write poetry. Right now it's exactly the other way around."

People who cited societal transformation, about 9% of respondents, often grounded their visions in personal loss. They wanted AI to detect cancer earlier, speed up drug discovery, or break the link between educational quality and wealth. A software engineer in Poland framed it through his daughter's neural disorder: she would "have equal chances in the world if AI acceleration contributes to finding a cure."

What is already working

Asked whether AI had ever taken a concrete step toward their vision, 81% said yes. Productivity gains dominated at 32%, cognitive partnership at 17%, learning at 10%. Technical accessibility, where people build things they could not have built alone, accounted for 9%.

Some of the strongest responses came from that last category. A mute user in Ukraine described building a text-to-speech bot with Claude that lets them communicate with friends "almost in live format." A tradesworker in the United States with a learning disorder said AI could finally "read past" it. An entrepreneur in Chile who spent twenty years running a butcher shop described launching a tech business having touched a computer only two or three times in his life.

Research synthesis pulled 7% of responses. A freelancer in the United States who had been misdiagnosed for over nine years said Claude "put the historical pieces together" and led to a proper diagnosis. A physician in Israel used AI to identify a severe neurological condition that local specialists had missed.

But 19% said AI had fallen short. The complaints were specific and grounded. Hallucinated citations. Inaccurate outputs that required so much verification the time savings evaporated. "I had to take photos to convince the AI it was wrong," one respondent in Brazil wrote. "It felt like talking to a person who wouldn't admit their mistake."

And then there was emotional support. Only 6% of respondents described it as a benefit, making it the smallest category named. These responses carried weight that percentages do not capture. A Ukrainian soldier said AI was what "pulled me back to life" in moments "when death breathed in my face, when dead people remained nearby." A woman processing grief after her mother's death explained that Claude has "unlimited patience to listen to me" because she has "neither friends nor family to confide in."

The cost of that accessibility showed up in the same conversations. A user in South Korea described turning to Claude when a friendship became strained. "But it was a stupid choice," the person wrote. "I should have talked with that friend, not you. That's how I lost that friend."

Five tensions, one person

Anthropic identified five recurring patterns where AI's benefits and harms grow from the same root. People learn with AI and worry their thinking will atrophy. They save time but watch the treadmill speed up. The same availability that makes Claude a grief counselor at 3 a.m. also makes it easier to avoid a difficult conversation with a friend. Wealth-building and displacement anxiety share a zip code. And every professional who has trusted AI judgment on something that mattered has a story about getting burned.

Here is the finding that matters most. These tensions do not sort people into camps. They coexist inside the same individual. Someone who values emotional support from AI is three times more likely to also fear becoming dependent on it, Anthropic found. That ratio held across all five tensions the researchers measured.

Decision-making was the only tension where the negative outweighed the positive. Twenty-two percent of respondents praised AI as a judgment aid, while 37% flagged unreliability that impedes good decisions. Nearly half of all lawyers in the sample said they had run into AI unreliability firsthand. Lawyers also reported the highest rates of realized decision-making benefits. That's the tell. Lean hardest on AI for high-stakes judgment, get burned most often. That was the pattern across the data.

Educators saw it from the other side. Teachers and academics reported seeing cognitive atrophy in their students at two and a half to three times the average rate. Students reported more learning benefits from AI than any other group. But 16% of them also noticed signs of atrophy in their own work. Outside the classroom, the picture looked different. Tradespeople who learned with AI almost never reported atrophy. The effect may hit hardest where AI replaces effort rather than feeding curiosity.

On average, respondents voiced 2.3 distinct concerns. Unreliability led at 27%, followed by jobs and economy at 22% and loss of autonomy at 22%. After that, the numbers bunched together. Cognitive atrophy at 16%, governance at 15%, misinformation at 14%. Roughly one in nine said they had no concerns at all, most of them comparing AI to electricity or the internet. Just another tool.
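These shares add up to more than 100% because a respondent could voice several concerns. The rough Python sketch below works through that arithmetic. It assumes, which the article does not confirm, that every percentage is a share of all respondents and that the 2.3 average is taken over the same group; under those assumptions, the shares across every concern category would total about 230 percentage points.

```python
# Concern shares named in the article, in percent of respondents.
NAMED_CONCERNS = {
    "unreliability": 27,
    "jobs and economy": 22,
    "loss of autonomy": 22,
    "cognitive atrophy": 16,
    "governance": 15,
    "misinformation": 14,
}
AVG_CONCERNS_PER_RESPONDENT = 2.3  # reported average

# ASSUMPTION: the shares and the average use the same denominator
# (all respondents), so all category shares sum to 100 * average.
implied_total_points = round(AVG_CONCERNS_PER_RESPONDENT * 100)

# Percentage points covered by the six categories the article names.
named_points = sum(NAMED_CONCERNS.values())

# Percentage points left for categories the article does not break out.
unnamed_points = implied_total_points - named_points

if __name__ == "__main__":
    print(f"Named categories: {named_points} points")
    print(f"Implied total:    {implied_total_points} points")
    print(f"Other categories: {unnamed_points} points")
```

If those assumptions hold, the six named concerns cover only about half of everything respondents raised, with the remainder spread across categories the article does not itemize.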

Regional patterns showed up clearly in the data. East Asian respondents worried most about cognitive atrophy and loss of meaning. Western Europe focused on surveillance and privacy. North America fixated on governance gaps. In lower-income regions across Africa, South Asia, and Latin America, concerns concentrated on unreliability and jobs, the immediate and practical, rather than abstract institutional risks.

Who sat for these interviews

Selection bias sits in plain view. These are active Claude users who already found the product valuable enough to keep using it. The interview design asked for positive visions first and concerns second, a sequencing that may have nudged respondents toward optimism.

Anthropic acknowledged both points. It argued the format still yields something surveys and checkboxes cannot. Eighty thousand open-ended conversations in seventy languages, the company said, bridge a gap between depth and volume that has bottlenecked qualitative research for decades.

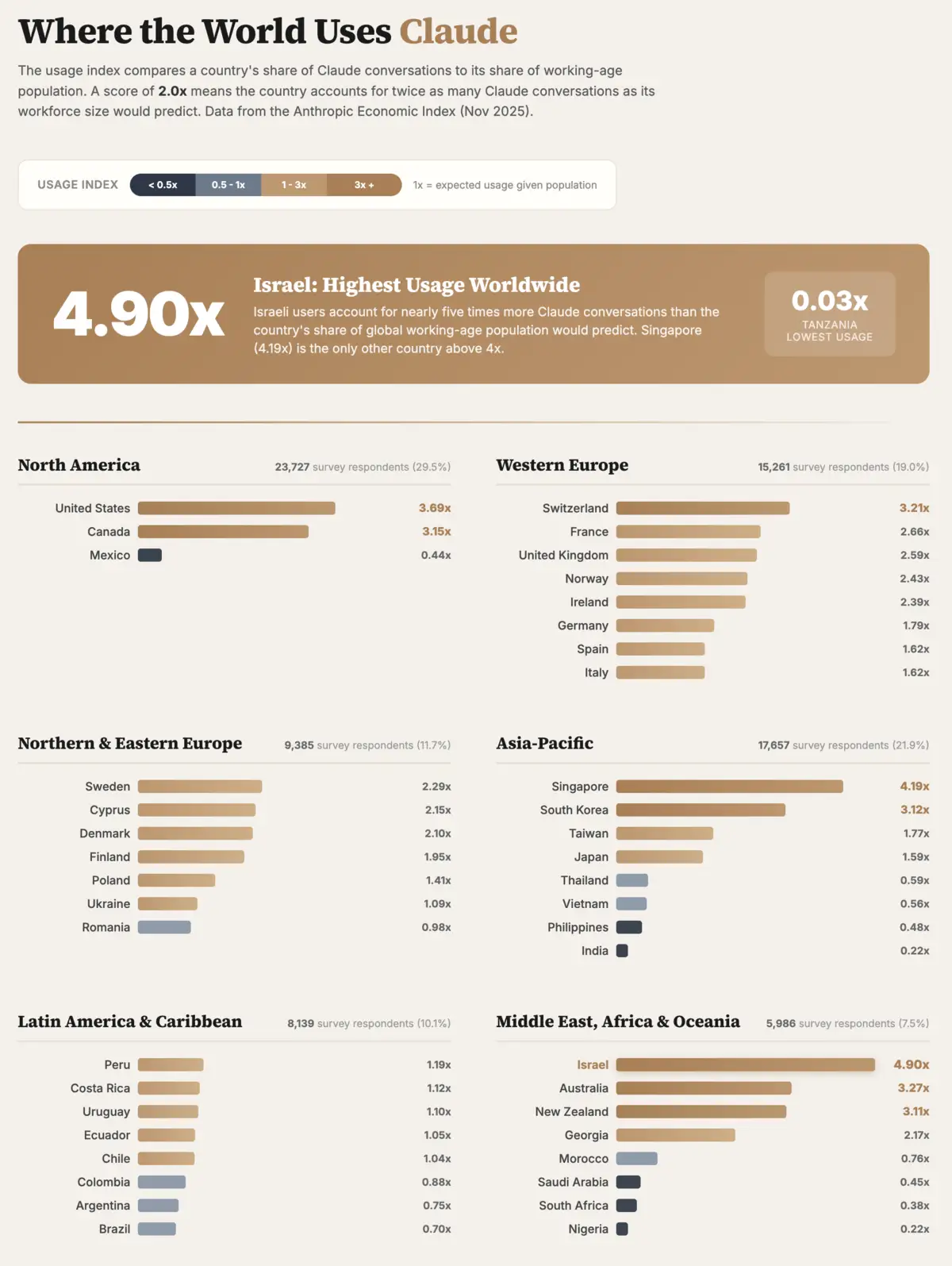

Timing matters here. Claude's business subscriptions grew 4.9% month over month in February, according to Ramp data reported by The Register. Seventy percent of businesses choosing an AI provider for the first time now pick Anthropic. Consumer adoption has surged in parallel. ChatGPT's U.S. mobile market share fell from 69.1% to 45.3% between January 2025 and January 2026 as competitors gained ground, and daily time spent with Claude tripled from ten minutes to over thirty in the same period, according to Apptopia.

Anthropic's public fight with the Pentagon adds another dimension. The Defense Department designated the company a supply chain risk in early March after Anthropic refused to remove model guardrails for military applications. That dispute boosted Claude's public profile and, by several measures, accelerated its growth. You can read Anthropic's decision to publish eighty thousand user voices as a company emboldened by its own momentum, or as one anxious to understand a user base expanding faster than anyone planned for.

Israel leads Claude adoption worldwide at 4.9 times expected usage relative to workforce size, according to Anthropic's own usage index published last week. Singapore sits at 4.2 times. The United States, despite having the largest raw user base, comes in at 3.7 times.
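One plausible way to read "times expected usage" is as a ratio of two shares: a country's share of global Claude usage divided by its share of the global workforce. The Python sketch below illustrates that reading with made-up numbers. The function name and the inputs are hypothetical, and Anthropic's actual index methodology may differ.

```python
def usage_index(usage_share_pct: float, workforce_share_pct: float) -> float:
    """Ratio of a country's usage share to its workforce share.

    ASSUMPTION: this is one common way to build a per-capita style index,
    not a confirmed description of Anthropic's method.
    A value of 1.0 means usage matches what workforce size alone predicts.
    """
    if workforce_share_pct <= 0:
        raise ValueError("workforce_share_pct must be positive")
    return usage_share_pct / workforce_share_pct


# Hypothetical country: 2.0% of global usage, 0.5% of the global workforce.
example_index = usage_index(2.0, 0.5)

if __name__ == "__main__":
    print(f"Usage index: {example_index:.1f}x expected")
```

Under this reading, a large country can have the biggest raw user base and still post a lower index than a small one, which matches the pattern described for the United States.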

Which means the 80,508 people who sat for these interviews are not a random sample. Not even close. They are early adopters, often technical, disproportionately from wealthy countries, and already committed to the product. The study captures what devoted AI users want. It does not capture what the world wants.

What comes next

Anthropic said it plans to run the next Anthropic Interviewer study shortly, with a smaller group of Claude users tracked over time. The company will shift focus from what people want to whether Claude is actually delivering, measuring effects on wellbeing rather than collecting snapshots.

It also flagged economic displacement concerns in the data as an area where it is "designing further research and updating our thinking." Given that jobs and economy was the strongest single predictor of overall AI sentiment in the study, that work carries weight.

For now, the study's clearest contribution is its method and its core finding. Eighty thousand people, given the space to talk rather than click boxes, described wanting something quieter than the AI industry typically promises. Not superintelligence. Not the automation of everything. A healthcare worker in the United States put it plainly: since AI lifted the documentation burden, "I have more patience with nurses, more time to explain things to family members." That is what better looks like, and it has nothing to do with throughput.

Frequently Asked Questions

How did Anthropic conduct this study?

Anthropic built a specialized version of Claude called Anthropic Interviewer that conducted adaptive one-on-one conversations with 80,508 users across 159 countries and 70 languages over one week in December. The system asked fixed questions, then generated follow-ups based on answers. Claude-powered classifiers categorized responses into themes covering desires, fears, and realized benefits.

What were the nine categories of AI wishes?

Professional excellence led at 19%, followed by personal transformation (14%), life management (14%), time freedom (11%), financial independence (10%), entrepreneurship (9%), societal transformation (9%), learning (8%), and creative expression (6%). Anthropic grouped the underlying motivations into three bands: offloading burdens, finding more fulfilling work, and personal growth.

What concerns did respondents raise about AI?

Respondents averaged 2.3 concerns each. Unreliability led at 27%, followed by jobs and economic impact at 22% and loss of autonomy at 22%. Cognitive atrophy hit 16%, governance gaps 15%, and misinformation 14%. About one in nine had no concerns. Regional patterns varied: East Asia worried about cognitive atrophy, Western Europe about privacy, North America about governance.

How representative is this study?

Not very, by Anthropic's own admission. All respondents were active Claude users who already valued the product. The interview asked about positive visions before concerns, potentially nudging toward optimism. Respondents skewed technical and came disproportionately from wealthy countries. Israel led usage at 4.9 times expected rates relative to workforce size.

What did the study find about emotional support from AI?

Only 6% cited emotional support as a benefit, the smallest category, but these responses carried outsized weight. A Ukrainian soldier credited AI with pulling him back to life. However, one user described choosing AI over a difficult conversation with a friend, ultimately losing that friendship. People who valued emotional support were three times more likely to also fear dependence.

Implicator
