Aria Networks uses the same Broadcom Tomahawk ASICs that power switches from Arista, Cisco, and every whitebox vendor chasing the AI networking market. Same silicon. Same open-source SONiC operating system. Same Ethernet standards. The company raised $125 million in a Series A on Tuesday anyway, backed by Sutter Hill Ventures, Atreides Management, Valor Equity Partners, and Eclipse Ventures. That alone tells you the money isn't following the hardware.

It's following what Aria claims to read from it.

Key Takeaways

- Aria Networks raised $125M in Series A funding for AI-native Ethernet switches using the same Broadcom silicon as competitors

- The startup's telemetry engine claims 100 to 10,000x finer data resolution than existing tools, with AI agents optimizing clusters in real time

- CEO Mansour Karam previously sold networking startup Apstra to Juniper for $190M; Aria targets neoclouds, not hyperscalers

- Competes against Arista ($120B market cap), Cisco, DriveNets ($375M raised), and Arrcus in a $12.8B market

AI-generated summary, reviewed by an editor. More on our AI guidelines.

The 10% that controls the other 90%

The network inside an AI data center typically accounts for 10 to 15 percent of total cluster cost. GPUs eat the rest. But when that network misfires, packets drop, latency spikes, and accelerators worth millions sit idle while the fabric catches up. Aria's own models estimate that suboptimal networking in a 10,000-GPU cluster forfeits $4.4 million in annual revenue. The math is blunt: your most expensive assets bleed value because the pipes between them choke.
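To see how a figure of that size can arise, here is a back-of-the-envelope sketch. Aria has not published its model's inputs. The rental price per GPU-hour below is a hypothetical assumption, and the code simply solves for the share of GPU time the network would have to waste to cost $4.4 million a year.

```python
# Back-of-the-envelope: what fraction of GPU time must be lost to reach
# Aria's $4.4M/year estimate? Only the GPU count and the $4.4M figure come
# from the article; the hourly rental price is a HYPOTHETICAL assumption.
HOURS_PER_YEAR = 24 * 365  # 8,760 hours


def annual_rental_revenue(gpu_count: int, price_per_gpu_hour: float) -> float:
    """Revenue if every GPU were rented for every hour of the year."""
    return gpu_count * HOURS_PER_YEAR * price_per_gpu_hour


def implied_idle_fraction(lost_revenue: float, gpu_count: int,
                          price_per_gpu_hour: float) -> float:
    """Share of GPU-hours that would have to be wasted to lose `lost_revenue`."""
    return lost_revenue / annual_rental_revenue(gpu_count, price_per_gpu_hour)


if __name__ == "__main__":
    gpus = 10_000        # cluster size from Aria's estimate
    price = 2.00         # hypothetical $ per GPU-hour
    lost = 4_400_000     # Aria's annual estimate
    print(f"Full-utilization revenue: ${annual_rental_revenue(gpus, price):,.0f}")
    print(f"Implied idle fraction: {implied_idle_fraction(lost, gpus, price):.1%}")
```

At that assumed price, the cluster would earn about $175 million a year at full utilization, so the network would only need to idle roughly 2.5 percent of GPU time to produce Aria's number. A higher rental price lowers the implied fraction further.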

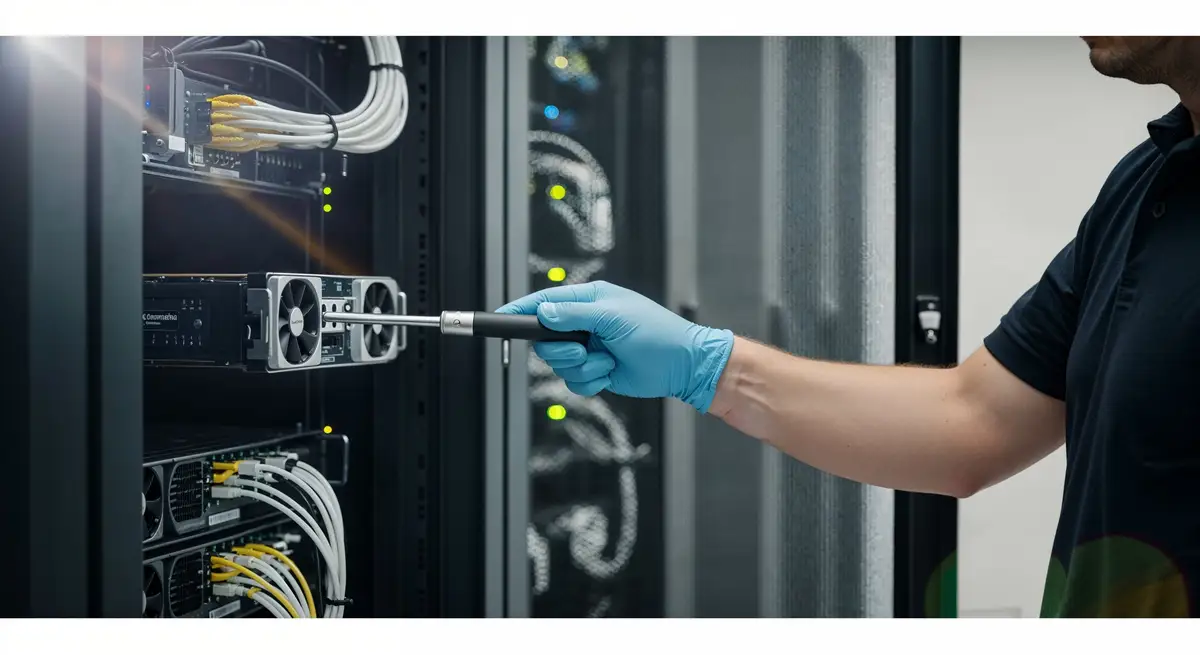

CEO Mansour Karam has made this argument before. He built Apstra and sold it to Juniper Networks for roughly $190 million in 2021. At Apstra, the pitch was automation. At Aria, it's telemetry, the fine-grained data streaming off switching silicon that most vendors collect but few actually use. "These ASICs from Broadcom have tons of telemetry at the microsecond resolution," Karam told Network World. "The challenge is figuring out how to effectively extract, store, process and act on it at scale."

Reading what the silicon already knows

Aria's platform, branded "Deep Networking," ships 800G and 1.6T Ethernet switches with a hardened SONiC distribution and a telemetry engine the company says captures data at 100 to 10,000 times finer resolution than competing tools. Aria layers AI agents over that data stream. When traffic spikes, the agents rebalance loads. When congestion starts building, they flag it before packets drop. And when something breaks at 3 a.m., the operator types a plain-English question into a chat box instead of spelunking through log files.
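Aria has not disclosed how its agents decide that congestion is "building." One simple way to act before drops, sketched here purely as an illustration, is to watch the trend in a port's queue depth and raise a flag when the projected depth would hit the buffer limit within a short horizon. The class name, buffer size, window, and horizon are all hypothetical, not Aria's.

```python
from collections import deque


class CongestionPredictor:
    """Flags a switch port whose queue depth is on track to overflow soon.

    HYPOTHETICAL sketch: the parameters and linear-trend logic are
    illustrative assumptions, not Aria's implementation.
    """

    def __init__(self, buffer_bytes: int, horizon_samples: int, window: int = 8):
        self.buffer_bytes = buffer_bytes        # usable buffer before drops
        self.horizon = horizon_samples          # how many samples ahead to project
        self.samples = deque(maxlen=window)     # most recent queue-depth samples

    def observe(self, queue_depth_bytes: int) -> bool:
        """Record one sample; return True if overflow is projected within the horizon."""
        self.samples.append(queue_depth_bytes)
        if len(self.samples) < 2:
            return False
        # Average growth per sample across the window (a simple linear trend).
        slope = (self.samples[-1] - self.samples[0]) / (len(self.samples) - 1)
        projected = self.samples[-1] + slope * self.horizon
        return slope > 0 and projected >= self.buffer_bytes


if __name__ == "__main__":
    predictor = CongestionPredictor(buffer_bytes=1_000_000, horizon_samples=10)
    depths = list(range(100_000, 550_000, 50_000))  # queue grows 50 KB per sample
    alerts = [predictor.observe(d) for d in depths]
    first = alerts.index(True)
    print(f"first alert at sample {first}, depth {depths[first]:,} bytes")
```

In this toy run the first alert fires on the ninth sample, when the queue holds 500,000 bytes, only half the buffer. That is the "flag it before packets drop" behavior in miniature: the alarm is driven by the trend, not by the buffer actually filling.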

The approach sounds incremental. It is not. Traditional networking vendors think switch by switch. Karam argues the AI era demands path-level optimization, tracking traffic end-to-end across the entire fabric, not just at individual nodes. "It's no longer just about the switch itself," he told Network World. "It's really about the end-to-end path."
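A toy example makes the switch-versus-path distinction concrete. The hop names, latencies, per-switch threshold, and 10-microsecond path budget below are hypothetical numbers; the point is only that a check run switch by switch can pass while the end-to-end path fails.

```python
from dataclasses import dataclass


@dataclass
class Hop:
    switch: str
    latency_us: float  # queuing + forwarding delay at this hop, in microseconds


def per_switch_alerts(path: list[Hop], per_hop_limit_us: float) -> list[str]:
    """Switch-by-switch view: flag only hops that individually exceed a limit."""
    return [h.switch for h in path if h.latency_us > per_hop_limit_us]


def path_latency_us(path: list[Hop]) -> float:
    """End-to-end view: the delay the GPU-to-GPU flow actually experiences."""
    return sum(h.latency_us for h in path)


if __name__ == "__main__":
    # Hypothetical leaf -> spine -> leaf path in a two-tier fabric.
    path = [Hop("leaf-1", 4.0), Hop("spine-3", 4.5), Hop("leaf-7", 4.0)]
    print(per_switch_alerts(path, per_hop_limit_us=5.0))  # [] : no single hop looks bad
    print(path_latency_us(path) > 10.0)                   # True: the path misses its budget
```

Every switch stays under its own 5-microsecond limit, yet the flow sees 12.5 microseconds end to end. A per-node dashboard shows green; the training job still waits.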

That shift mirrors what happened with storage a decade ago. Flash drives used the same NAND chips across vendors. The winners were companies that built smarter controllers and software layers on top of commodity hardware. Aria is making the same play for networking. Same Broadcom silicon. Different brain.

Why neoclouds buy from a startup

Aria's customers are not hyperscalers. Microsoft and Meta have their own networking teams and buy from Arista, a company with a $120 billion market cap and close to $10 billion in annual revenue. Aria is targeting neoclouds, the GPU rental operators scaling clusters to 100,000 accelerators without the engineering depth of a Meta or a Google.

These buyers are anxious. With billions committed to GPU infrastructure, they need every accelerator running at maximum utilization to recoup the investment. In-house teams capable of tuning networking at the microsecond level? Most neoclouds don't have them. Meanwhile, Arista's AI networking revenue alone hit $1.6 billion in 2025 and is growing toward $3.25 billion this year. That's the competitive gravity pulling at every deal Aria tries to close.

Aria's pitch to these operators is a white-glove deployment model. The company embeds field engineers directly in customer environments, Karam told CRN, with the expectation that partners will eventually take over that role. The strategy is part product, part consulting, and if you've spent any time watching infrastructure startups, you know the pattern. It works with a handful of deployments. Whether it scales is a different question.

The constraints that don't show up in the press release

Aria is chip-agnostic, supporting Nvidia, AMD, and Google accelerators. AMD has certified the platform for its Pensando Pollara 400 AI NICs. That flexibility matters for operators hedging their GPU supply risk.

But flexibility alone is not a moat.

The AI data center networking market stands at $12.8 billion in 2026 and is projected to reach $30 billion by 2035. Arista owns the largest share. Cisco limps back into the fight with $3 billion in AI infrastructure revenue guidance for fiscal 2026, clawing back hyperscaler deals it had been losing for years. DriveNets raised $375 million for disaggregated platforms. Arrcus pulled in $145 million for whitebox software.

Against that lineup, $125 million buys relevance but not dominance. Aria is a 15-month-old company selling into a market where trust is expensive and switching costs are high. One bad deployment in a neocloud's production fabric could end the relationship. Drew Conry-Murray at Packet Pushers put it simply: "Aria Networks will have to make a strong case to neoclouds to get them to risk their critical network fabrics on a newcomer."

The bet Karam has to win twice

Both of Aria's founders, Karam and CTO Subhachandra Chandra, spent years at Arista before building Apstra. After the Juniper exit, both landed as entrepreneurs-in-residence at Sutter Hill Ventures. Aria came out of that residency in late 2024. The investor conviction is real. Gavin Baker of Atreides Management and Stefan Dyckerhoff of Sutter Hill joined the board. Karam frames the economics simply: "A 10% gain in tokens per second is a 10% gain in revenue."

That framing reveals Aria's core gamble. The company needs to prove two things, not one. First, that its telemetry-driven approach actually delivers measurable gains in model FLOPs utilization (MFU), the share of peak accelerator compute spent on useful model work, compared with competitors running identical silicon. Second, that neoclouds will trust those gains enough to bet their production infrastructure on a startup.
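Karam's equation holds under assumptions worth spelling out. On fixed hardware, MFU (model FLOPs utilization) and per-token revenue both scale linearly with tokens per second, so a network that removes idle time moves both by the same percentage. The sketch below assumes a fixed price per token and demand that absorbs the extra throughput; the model size, peak compute, and price are hypothetical.

```python
# Hypothetical illustration: MFU and revenue both scale linearly with token
# throughput on fixed hardware. All inputs are assumptions, not figures from
# Aria or its customers.


def mfu(tokens_per_sec: float, flops_per_token: float, peak_flops_per_sec: float) -> float:
    """Useful model FLOPs actually achieved, as a fraction of hardware peak.

    flops_per_token is commonly approximated as ~6 * params for training
    (forward + backward) or ~2 * params for an inference forward pass.
    """
    return tokens_per_sec * flops_per_token / peak_flops_per_sec


def hourly_revenue(tokens_per_sec: float, usd_per_million_tokens: float) -> float:
    """Karam's framing: at a fixed price per token, revenue tracks throughput."""
    return tokens_per_sec * 3600 / 1_000_000 * usd_per_million_tokens


if __name__ == "__main__":
    params = 7e9                   # hypothetical 7B-parameter model
    flops_per_token = 6 * params   # training approximation
    peak = 1e15                    # hypothetical 1 PFLOP/s of accelerator peak
    price = 1.00                   # hypothetical $ per million tokens

    for tps in (10_000, 11_000):   # baseline vs. a 10% throughput gain
        print(f"{tps:>6} tok/s  MFU={mfu(tps, flops_per_token, peak):.1%}  "
              f"revenue=${hourly_revenue(tps, price):.2f}/h")
```

Under these assumptions, MFU rises from 42 to about 46 percent and hourly revenue from $36.00 to $39.60, both exactly 10 percent. If prices fall or demand cannot absorb the extra tokens, the revenue half of the equation breaks while the MFU half still holds.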

Karam has won a version of the first bet before. At Apstra, intent-based networking worked, and Juniper paid $190 million for it. But Apstra was a software overlay, not a hardware company. Aria sells physical switches into environments where a single fabric failure can idle thousands of GPUs. The stakes are higher, the margin for error smaller, and Arista is not Juniper. It is a $120 billion company that built its reputation doing exactly what Aria claims to do better.

Aria says it already has customer orders and is actively deploying. The company has gone from founding to production in 15 months, a pace Karam highlighted in his launch materials. That speed is real. But the test is not whether Aria can ship switches. The test is whether, when a neocloud operator's 10,000-GPU cluster drops packets at 2 a.m., they trust the 15-month-old company to fix it before millions in lost throughput compound into something worse.

The next earnings cycle at Arista, and the first public deployment metrics from Aria's customers, will answer that question. Until then, the $125 million is a bet that the most important part of an AI data center is the part nobody used to think about.

Frequently Asked Questions

What does Aria Networks do?

Aria Networks builds Ethernet switches and software for AI data centers. Its Deep Networking platform uses telemetry from Broadcom silicon to optimize network performance, targeting neoclouds that operate large GPU clusters.

How much funding has Aria Networks raised?

The company raised $125 million in a Series A round led by Sutter Hill Ventures, with participation from Atreides Management, Valor Equity Partners, and Eclipse Ventures.

Who founded Aria Networks?

CEO Mansour Karam and CTO Subhachandra Chandra, both former Arista engineers. Karam previously founded Apstra, which Juniper Networks acquired for roughly $190 million in 2021.

How does Aria Networks differ from Arista or Cisco?

Aria uses the same Broadcom chips and SONiC operating system as rivals. The differentiation is software: a telemetry engine that captures network data at up to 10,000x finer resolution, plus AI agents that optimize performance in real time.

What is token efficiency?

Token efficiency measures how much useful AI output a data center produces relative to its running costs. Aria argues that network performance directly determines this metric, making the network the highest-leverage investment in an AI cluster.

IMPLICATOR
