Anthropic launched Claude Code Channels on Friday, connecting its coding agent to Telegram and Discord through a research-preview feature that lets developers message Claude from their phones. MacStories editor John Voorhees spent a few hours testing the Telegram integration and found it powerful enough to compile iOS projects, run CLI tools, and kick off podcast transcriptions from an iPhone. But he flagged one missing piece: "there is no support for voice messaging."

That gap stings. Everybody who has used Telegram knows the move. Hold the mic button, talk, let go. Try typing the same thought with your thumbs and you will give up halfway through. The detail disappears. The nuance disappears. You end up sending "fix the auth thing" instead of the three sentences that would actually explain what you mean. Messaging a coding agent from your phone only works if the input method does not fight you, and text on glass fights you.

The fix requires roughly 120 lines of code split between a Python transcription endpoint and a TypeScript voice handler. No new services. No new dependencies. Both patches plug into infrastructure that already exists. Total setup time with a local GPU: about 20 minutes. Slightly less with a cloud transcription API. The full code, patches, and setup instructions are available on GitHub.

The Breakdown

- Claude Code Channels launched Friday with text and photo support but no voice messaging

- A 120-line patch adds voice via local Whisper GPU transcription in about 20 minutes

- Cloud alternatives (Groq free tier, OpenAI at $0.006/min) work without GPU hardware

- OpenClaw handled voice by improvising with FFmpeg and curl; Channels requires a manual patch

What you need before you start

Three things must already be working.

A Claude Code session with the Telegram channel running. Follow Anthropic's setup instructions to create a bot through BotFather, install the Telegram plugin, configure your token, and pair your account. The launch command:

claude --channels plugin:telegram@claude-plugins-official

A transcription server. This guide uses ytwhisper, a Flask app backed by faster-whisper on an NVIDIA GPU. But the architecture treats the transcription backend as a black box. Any endpoint that accepts a file upload and returns {"text": "...", "duration": N} works as a drop-in replacement. No GPU? Skip ahead to the cloud alternatives section.
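To make the drop-in contract concrete, here is a minimal sketch of how a client could check a backend's reply. The function name `parse_transcription_response` is hypothetical and not part of ytwhisper or the Telegram plugin; the only thing taken from the article is the `{"text": ..., "duration": ...}` shape.

```python
import json


def parse_transcription_response(body: str) -> tuple[str, float]:
    """Validate a backend reply against the {"text": ..., "duration": ...} contract."""
    payload = json.loads(body)
    if not isinstance(payload, dict):
        raise ValueError("expected a JSON object")
    text = payload.get("text")
    if not isinstance(text, str):
        raise ValueError("missing or non-string 'text' field")
    # Duration is informational here; a missing or non-numeric value becomes 0.
    duration = payload.get("duration", 0)
    if isinstance(duration, bool) or not isinstance(duration, (int, float)):
        duration = 0
    return text.strip(), float(duration)


print(parse_transcription_response('{"text": " fix the auth module ", "duration": 4.2}'))
```

Any backend whose replies pass a check like this should be usable without changes on the plugin side.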

And a willingness to patch two files. One route added to a Python file, one handler added to a TypeScript file. Both changes are additive. Nothing existing gets modified.

How the pieces fit

Three components on a local network. The Telegram plugin (server.ts) is an MCP server running alongside Claude Code. It polls Telegram's Bot API for new messages. If the sender is on the allowlist, the message gets shoved into the session. If not, dropped. Whisper runs on a CUDA GPU in its own Flask process. Claude Code sits at the far end and does not care how the words got there. Typed or spoken, same plain text.

Phone → Telegram Bot API → server.ts → .ogg download → POST to Whisper → transcript → Claude Code

A 15-second voice message clears this pipeline in under two seconds on a warm GPU. The model loads into VRAM on the first request, which takes roughly three seconds, and stays resident for every call after that. Once loaded, a 15-second recording transcribes in under a second. Faster than you can switch apps.
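The "load once, stay resident" behavior can be sketched with a cached loader. This is an illustration of the pattern only, not ytwhisper's actual `get_model` implementation; `load_model_from_disk` is a hypothetical stand-in for the expensive step of loading weights into VRAM.

```python
import time
from functools import lru_cache

LOAD_COUNT = 0  # counts how many times the expensive load actually ran


def load_model_from_disk() -> dict:
    """Hypothetical stand-in for loading model weights into VRAM."""
    global LOAD_COUNT
    LOAD_COUNT += 1
    time.sleep(0.05)  # pretend this is the multi-second cold load
    return {"name": "stand-in-model"}


@lru_cache(maxsize=1)
def get_model() -> dict:
    """First call pays the load cost; later calls return the cached object."""
    return load_model_from_disk()


first = get_model()
second = get_model()
print(first is second, LOAD_COUNT)  # prints: True 1
```

Only the first voice message after a restart pays the cold-start cost; every later request reuses the same object.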

Step 1: Add the transcription endpoint

One new Flask route. Upload comes in, lands in a temp directory. The model chews through it. JSON comes back out.

# Added to ytwhisper's app.py. DOWNLOADS_DIR, BEAM_SIZE, DEFAULT_VOCAB, get_model,
# get_audio_duration and post_process_transcript are helpers the app already defines.
# Needs `import uuid` and `from pathlib import Path` if they are not already imported.
@app.route("/transcribe-file", methods=["POST"])
def transcribe_file():
    if "file" not in request.files:
        return jsonify({"error": "No file provided"}), 400

    uploaded = request.files["file"]

    # Measure the upload by seeking to the end, then rewind before saving.
    uploaded.seek(0, 2)
    size = uploaded.tell()
    uploaded.seek(0)
    if size > 25 * 1024 * 1024:
        return jsonify({"error": "File too large (25MB max)"}), 413

    # The filename may be missing; fall back to .ogg, Telegram's voice format.
    ext = Path(uploaded.filename or "").suffix or ".ogg"
    tmp_path = DOWNLOADS_DIR / f"voice_{uuid.uuid4().hex[:8]}{ext}"

    try:
        uploaded.save(str(tmp_path))
        duration = get_audio_duration(tmp_path)
        model = get_model()
        segments, info = model.transcribe(
            str(tmp_path),
            beam_size=BEAM_SIZE,
            language="en",
            initial_prompt=DEFAULT_VOCAB,
            temperature=[0.0, 0.2, 0.4],
            vad_filter=True,
            vad_parameters=dict(min_silence_duration_ms=500),
        )
        text = " ".join(seg.text.strip() for seg in segments)
        text = post_process_transcript(text)
        return jsonify({"text": text, "duration": round(duration, 1)})
    except Exception as e:
        app.logger.error(f"transcribe-file error: {e}")
        return jsonify({"error": str(e)}), 500
    finally:
        # Runs on success and failure alike, so temp files never pile up.
        tmp_path.unlink(missing_ok=True)

Two settings matter. The temperature parameter uses a narrow range, [0.0, 0.2, 0.4], because phone microphone audio is clean enough that Whisper handles it at temperature zero. Wider ranges exist for noisier sources like YouTube videos. The vad_filter enables Voice Activity Detection to skip the half-second of dead air that phone recordings typically start and end with. Without it, Whisper sometimes fills those quiet stretches with hallucinated filler text.

Cleanup happens in a finally block. The temp file gets deleted whether transcription succeeds or fails. The original .ogg stays in the Telegram plugin's inbox directory as a backup.

Deploy and verify:

scp app.py root@your-server:/opt/ytwhisper/app/
ssh root@your-server "systemctl restart ytwhisper"

# Should return 400 (route exists, no file provided)
curl -s -o /dev/null -w "%{http_code}" -X POST http://your-server:5000/transcribe-file
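To go one step past the 400 check and confirm that a real recording transcribes, a small standard-library client can upload a file the same way the plugin will. This is a sketch under assumptions: the helper names `build_multipart` and `transcribe` are hypothetical, and `http://your-server:5000` is the placeholder address used throughout this guide.

```python
import json
import urllib.request
import uuid


def build_multipart(field: str, filename: str, data: bytes) -> tuple[bytes, str]:
    """Encode one file as multipart/form-data; returns (body, Content-Type value)."""
    boundary = uuid.uuid4().hex
    head = (
        f"--{boundary}\r\n"
        f'Content-Disposition: form-data; name="{field}"; filename="{filename}"\r\n'
        "Content-Type: application/octet-stream\r\n\r\n"
    ).encode()
    tail = f"\r\n--{boundary}--\r\n".encode()
    return head + data + tail, f"multipart/form-data; boundary={boundary}"


def transcribe(path: str, base_url: str = "http://your-server:5000") -> dict:
    """POST a local audio file to /transcribe-file and return the parsed JSON."""
    with open(path, "rb") as fh:
        body, content_type = build_multipart("file", "voice.ogg", fh.read())
    req = urllib.request.Request(
        f"{base_url}/transcribe-file",
        data=body,
        headers={"Content-Type": content_type},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp)
```

Calling `transcribe("sample.ogg")["text"]` with any short recording on disk (the filename is only an example) should return the transcript if the route is wired up correctly.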

Step 2: Patch the Telegram plugin

The plugin lives at ~/.claude/plugins/cache/claude-plugins-official/telegram/0.0.1/server.ts. Four additions, all self-contained. Nothing gets deleted or moved.

Add the server URL constant near the top of the file, after the INBOX_DIR definition:

const YTWHISPER_URL = process.env.YTWHISPER_URL || 'http://localhost:5000'

Configure it in your environment:

echo "YTWHISPER_URL=http://your-server:5000" >> ~/.claude/channels/telegram/.env

Register the voice handler after the existing photo handler:

bot.on('message:voice', async ctx => {
  await handleInboundVoice(ctx)
})

Add the handler function after handleInbound. This is the core of the patch:

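// Assumes gate, bot, mcp, TOKEN, INBOX_DIR, writeFileSync and join (from 'path')
// are already in scope in server.ts, as they are for the photo handler.
async function handleInboundVoice(ctx: Context): Promise<void> {
  // Access control first: nothing is downloaded for unauthorized senders.
  const result = gate(ctx)
  if (result.action === 'drop') return
  if (result.action === 'pair') {
    await ctx.reply(`Pairing required`)
    return
  }

  const chat_id = String(ctx.chat!.id)
  void bot.api.sendChatAction(chat_id, 'typing').catch(() => {})

  // Download the .ogg from Telegram and keep a copy in the inbox.
  const voice = ctx.message!.voice!
  const file = await ctx.api.getFile(voice.file_id)
  const url = `https://api.telegram.org/file/bot${TOKEN}/${file.file_path}`
  const res = await fetch(url)
  const buf = Buffer.from(await res.arrayBuffer())
  const voice_path = join(INBOX_DIR, `${Date.now()}-${voice.file_unique_id}.ogg`)
  writeFileSync(voice_path, buf)

  // Any failure (timeout, refused connection, non-2xx) leaves the fallback text.
  let content = '[voice message — transcription failed]'
  try {
    const form = new FormData()
    form.append('file', new Blob([buf]), 'voice.ogg')
    const transcribeRes = await fetch(`${YTWHISPER_URL}/transcribe-file`, {
      method: 'POST',
      body: form,
      signal: AbortSignal.timeout(30000),
    })
    if (transcribeRes.ok) content = `[voice] ${(await transcribeRes.json()).text}`
  } catch {
    // fetch rejects when the server is down or the timeout fires
  }

  // Meta field shapes are assumed to mirror what handleInbound sends.
  const voice_duration = String(voice.duration)
  const user = ctx.from?.username ?? String(ctx.from?.id)
  const ts = new Date(ctx.message!.date * 1000).toISOString()
  void mcp.notification({
    method: 'notifications/claude/channel',
    params: { content, meta: { chat_id, voice_path, voice_duration, user, ts } },
  })
}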

Access control runs before anything downloads, so unauthorized senders cannot fill your inbox with audio files. A 30-second timeout on the transcription request keeps the handler from blocking forever if the GPU server goes down. Anthropic's posture here is cautious by design, and the patch follows suit.

When transcription works, Claude sees [voice] Hello, can you refactor the auth module... in the terminal. The [voice] prefix tells Claude the input was spoken. When it fails, the notification reads [voice message — transcription failed], and Claude asks you to type instead. No crash, no silent drop.

Update the MCP instructions array with a line documenting that voice messages arrive as [voice] followed by the transcribed text, so Claude understands the format and knows where the raw .ogg file lives on disk.

Step 3: Restart and test

claude --channels plugin:telegram@claude-plugins-official

Open Telegram. Find your bot. Hold the mic button, say something, release. In Claude Code's terminal, the message should arrive as [voice] <your words>. Claude responds as if you typed it. The full loop works: voice in, text out.

Test the failure path too. Stop your Whisper server, send another voice message, and confirm Claude sees [voice message — transcription failed]. Restart the server. Normal operation resumes immediately.

Done. Anthropic's existing code? Untouched. Your additions sit alongside it in the same file, not in place of it.

Performance numbers

We tested on an RTX 3080 Ti, large-v3-turbo in float16:

| Audio length | Transcription | End-to-end |
|---|---|---|
| 10 seconds | ~500ms | ~1.5s |
| 30 seconds | ~1.2s | ~2s |
| 60 seconds | ~2.5s | ~3.5s |

VRAM footprint: 2.7GB out of 12GB. Plenty of headroom. And here is the funny part. Most of the wait you actually feel is Telegram's servers handing over the file, not your GPU crunching audio. Which raises the obvious question: do you even need the GPU?
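A quick bit of arithmetic on the table above shows where the time goes. The sketch treats everything outside transcription (Telegram download, upload to the Whisper server, notification) as one "overhead" bucket, which is a simplification, and the helper name `breakdown` is hypothetical.

```python
# (audio seconds, transcription seconds, end-to-end seconds) from the table above
ROWS = [(10, 0.5, 1.5), (30, 1.2, 2.0), (60, 2.5, 3.5)]


def breakdown(audio_s: float, transcribe_s: float, total_s: float) -> dict:
    """Split end-to-end latency into transcription and everything else."""
    overhead_s = total_s - transcribe_s
    return {
        "speedup": round(audio_s / transcribe_s, 1),  # audio seconds per compute second
        "overhead_s": round(overhead_s, 2),
        "overhead_share": round(overhead_s / total_s, 2),
    }


for row in ROWS:
    print(row[0], breakdown(*row))
```

On these numbers the GPU runs 20 to 25 times faster than real time, and the non-transcription share is about two thirds of the wait for a 10-second clip, shrinking to under a third at 60 seconds.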

No GPU? Use a cloud API instead

Swap the transcription backend without touching the Telegram plugin. The contract is simple: accept a file, return JSON with a text field.

Groq blows everything else away on speed, and they give you a free tier to start. Because their API mimics OpenAI's, the adapter barely exists:

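import os
import openai

# Groq exposes an OpenAI-compatible API, so the OpenAI client works with a new base URL.
client = openai.OpenAI(
    base_url="https://api.groq.com/openai/v1",
    api_key=os.environ["GROQ_API_KEY"],
)

@app.route("/transcribe-file", methods=["POST"])
def transcribe_file():
    if "file" not in request.files:
        return jsonify({"error": "No file provided"}), 400
    uploaded = request.files["file"]
    # Pass (filename, bytes); the file extension tells the API the audio format.
    result = client.audio.transcriptions.create(
        model="whisper-large-v3-turbo",
        file=(uploaded.filename or "voice.ogg", uploaded.read()),
    )
    # Duration is not computed in this adapter, so report 0.
    return jsonify({"text": result.text, "duration": 0})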

OpenAI's Whisper API runs $0.006 per minute. Not as fast as Groq, but if your production stack already talks to OpenAI you know it will stay up. Point the adapter at OpenAI's base URL, swap in your OpenAI API key, change the model name to whisper-1, and you are done.
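To put the per-minute price in perspective, here is a rough cost estimate. It assumes billing follows the exact audio duration with no per-request rounding or minimum, which is an assumption and not something the article states; the function name `monthly_cost` and the usage figures are illustrative.

```python
from decimal import Decimal

PRICE_PER_MINUTE = Decimal("0.006")  # USD, the OpenAI rate quoted above


def monthly_cost(messages_per_day: int, avg_seconds: int, days: int = 30) -> Decimal:
    """Estimated spend in USD for a month of voice messages."""
    minutes = Decimal(messages_per_day * avg_seconds * days) / Decimal(60)
    return (minutes * PRICE_PER_MINUTE).quantize(Decimal("0.01"))


# 40 messages a day at 15 seconds each is 300 minutes of audio per month.
print(monthly_cost(40, 15))  # prints: 1.80
```

Even heavy use stays under a couple of dollars a month at this rate, so the choice between backends is more about latency and privacy than price.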

CPU fallback keeps audio off the internet entirely. Set WHISPER_DEVICE=cpu, set WHISPER_COMPUTE=int8, drop to the base model. Your audio never leaves the machine. The tradeoff is raw speed. About five to eight seconds per 15-second recording on a decent Intel or AMD machine. For a quick one-off question, totally fine. Try having a real back-and-forth at that pace and you will start reaching for the keyboard again.
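How those environment variables might map onto model settings can be sketched as follows. `WHISPER_DEVICE` and `WHISPER_COMPUTE` come from the paragraph above; `WHISPER_MODEL`, the default values, and the function name `whisper_settings` are assumptions for illustration, since the article does not show how ytwhisper reads its configuration.

```python
import os
from typing import Mapping


def whisper_settings(env: Mapping[str, str] = os.environ) -> dict:
    """Build faster-whisper constructor arguments from environment variables."""
    device = env.get("WHISPER_DEVICE", "cuda")
    on_cpu = device == "cpu"
    return {
        # WHISPER_MODEL is a hypothetical variable name, not from the article.
        "model_size_or_path": env.get("WHISPER_MODEL", "base" if on_cpu else "large-v3-turbo"),
        "device": device,
        # int8 keeps CPU inference tolerable; float16 matches the GPU setup above.
        "compute_type": env.get("WHISPER_COMPUTE", "int8" if on_cpu else "float16"),
    }


print(whisper_settings({"WHISPER_DEVICE": "cpu"}))
```

The resulting dictionary can be unpacked into faster-whisper's constructor, as in `WhisperModel(**whisper_settings())`.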

| Backend | Speed | Cost | Privacy |
|---|---|---|---|
| Local GPU | <1s | Free | Full |
| Groq | <1s | Free tier | Cloud |
| OpenAI Whisper | 1-3s | $0.006/min | Cloud |
| CPU fallback | 5-8s | Free | Full |

Own a GPU? Use it. No GPU? Groq's free tier will have you talking to Claude inside ten minutes.

Limitations worth knowing

Transcription is locked to English for now. Whisper itself speaks over 100 languages. Flipping it to auto-detect is a one-line edit in the Flask route. Just nobody has gotten around to it.
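The one-line edit amounts to passing `language=None` to faster-whisper's `transcribe` call, which makes it detect the language itself. A minimal sketch of making that switchable, assuming a hypothetical `WHISPER_LANGUAGE` environment variable that is not part of the original app:

```python
import os
from typing import Optional


def resolve_language(value: Optional[str]) -> Optional[str]:
    """Map a config string to the `language` argument; None means auto-detect."""
    if value is None or value.strip().lower() in ("", "auto"):
        return None
    return value.strip().lower()


# WHISPER_LANGUAGE is a hypothetical variable name; the default keeps today's behavior.
LANGUAGE = resolve_language(os.environ.get("WHISPER_LANGUAGE", "en"))
print(LANGUAGE)
```

In the Flask route, `language="en"` would then become `language=LANGUAGE`. An English-heavy `initial_prompt` may still bias results, so that setting probably deserves a second look when switching to auto-detect.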

Plugin updates overwrite server.ts. The patch files on GitHub make reapplying a five-minute job. The voice handler is additive; nothing existing is modified.

Only audio voice messages work. Telegram's round video notes are a different message type and get ignored.

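And the 30-second fetch timeout caps how long transcription may take, not how long you may talk. On a GPU that leaves plenty of room, but on the CPU fallback a very long dictation can run past it and come back as a failed transcription. Bump the value in the code if you tend to monologue.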
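Whether a long dictation hits the 30-second timeout depends on how fast the backend works through audio. A rough estimate, assuming processing time scales linearly with clip length (an assumption, not a measured property) and using the speeds quoted earlier in this article; the helper name `max_audio_seconds` is hypothetical:

```python
def max_audio_seconds(timeout_s: float, processing_s_per_audio_s: float,
                      overhead_s: float = 0.0) -> float:
    """Longest clip that finishes before the timeout at a given processing rate."""
    if processing_s_per_audio_s <= 0:
        raise ValueError("processing rate must be positive")
    return max(0.0, (timeout_s - overhead_s) / processing_s_per_audio_s)


# CPU fallback, worst case quoted above: 8 s of work per 15 s of audio.
print(round(max_audio_seconds(30, 8 / 15)))   # prints: 56
# Local GPU, from the performance table: 2.5 s of work per 60 s of audio.
print(round(max_audio_seconds(30, 2.5 / 60)))  # prints: 720
```

Under these assumptions the GPU has a margin of minutes, while the CPU fallback runs out of time at just under a minute of speech.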

Claude Code Channels vs. OpenClaw

Origin

OpenClaw started as a weekend hack by Peter Steinberger in November 2025. He wanted to check on his computer from his phone while on a trip to Morocco. The project grew to 300,000 lines of code and 200,000 GitHub stars before OpenAI hired Steinberger in February 2026. Claude Code Channels is Anthropic's first-party answer, built on the Model Context Protocol the company donated to the Linux Foundation.

Platforms

OpenClaw connects to WhatsApp, iMessage, Slack, Telegram, Discord, and nearly every other major messaging app. Channels currently supports only Telegram and Discord, plus a localhost demo called Fakechat.

Scope

OpenClaw manages flights, controls smart home devices, monitors security cameras, and runs social media campaigns. It is a general-purpose autonomous agent. Channels focuses on developer workflows: code, tests, builds, and debugging.

Security

OpenClaw grants broad system access with minimal guardrails, which spawned safety-focused forks like NanoClaw and KiloClaw. Channels implements sender allowlists, per-session opt-in via the --channels flag, and local-only configuration. Enterprise and Team plans have it disabled by default.

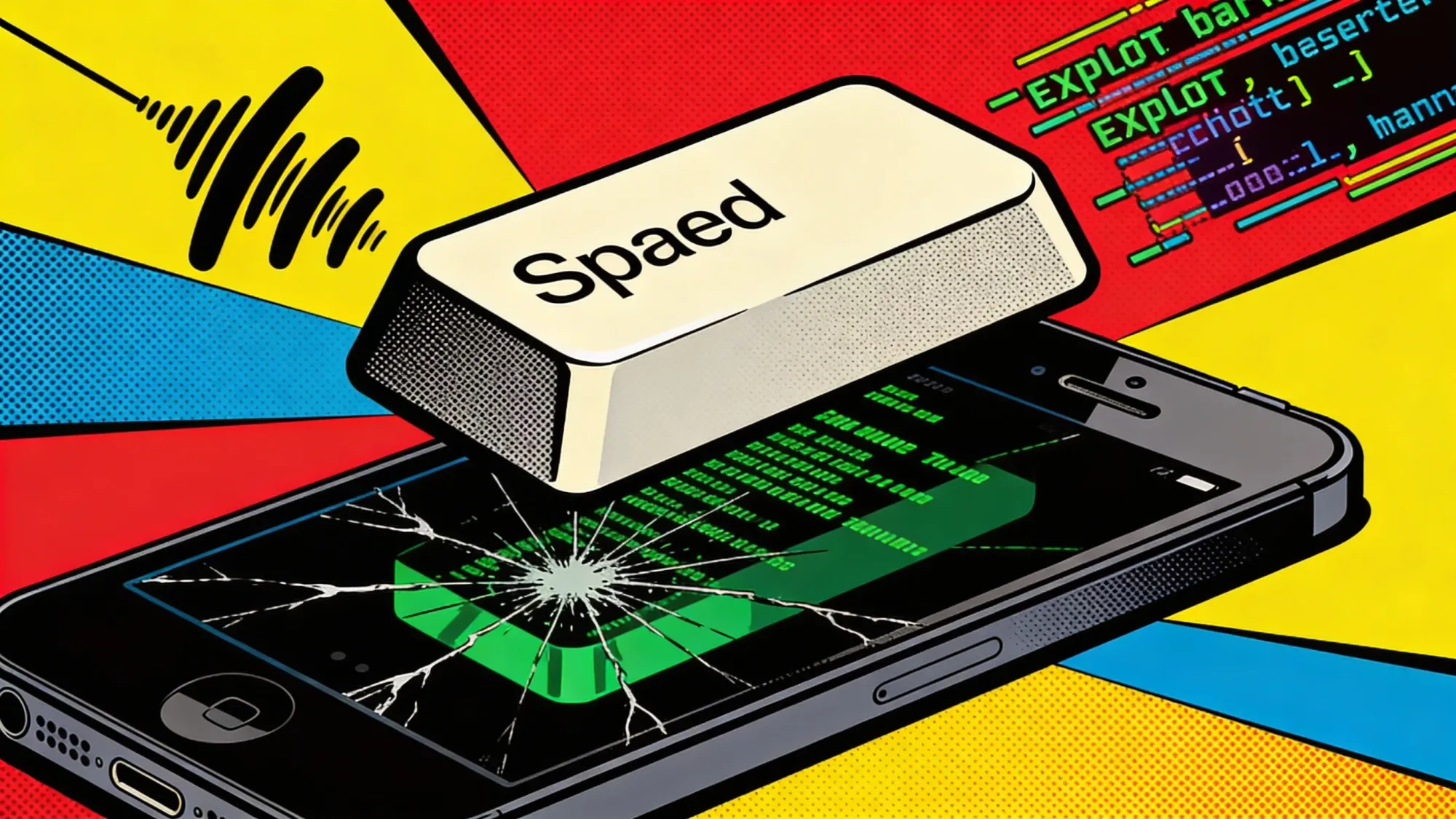

Voice

Steinberger never built voice support into OpenClaw. The agent figured it out on its own. It found FFmpeg on his machine, converted the audio file to WAV, discovered an OpenAI API key, called the Whisper API via curl, and replied with the transcript. Channels ships without voice capability. Adding it requires the patch described in this article.

Persistence

OpenClaw runs as a background process, always on. Channels requires an active Claude Code session. Close the terminal, and messages stop.

The short version

If you want a personal AI that books flights and adjusts your bed temperature, OpenClaw was designed for that. If you want a coding agent you can message from your phone with the security posture of a first-party Anthropic product, Channels is the cleaner path.

Frequently Asked Questions

Does Claude Code Channels officially support voice messages?

No. Channels launched as a research preview with text and photo support only. Voice requires patching the Telegram plugin's server.ts and adding a transcription endpoint. The full patch is about 120 lines of code across Python and TypeScript.

Can I use this without an NVIDIA GPU?

Yes. The transcription backend is swappable. Groq offers free cloud Whisper with sub-second latency. OpenAI charges $0.006 per minute. You can also run Whisper on CPU with the base model, though expect 5-8 second delays per clip.

What happens when the transcription server goes down?

Claude receives a fallback notification reading [voice message — transcription failed] and asks you to type instead. A 30-second timeout prevents the handler from hanging indefinitely. No crash, no silent failure.

Will plugin updates break the voice patch?

Yes. Claude Code plugin updates overwrite server.ts. The patch is additive and does not modify existing code, so reapplying takes about five minutes using the reference files on GitHub.

How does this compare to how OpenClaw handles voice?

OpenClaw's agent figured out voice transcription on its own. It found FFmpeg on the user's machine, converted audio to WAV, discovered an OpenAI API key, and called Whisper via curl. Channels ships with zero voice support. This patch adds it manually.

IMPLICATOR
