The hottest AI project on GitHub right now requires full access to your emails, messages, bank accounts, and shell commands. Hundreds of users handed over those keys without a second thought. Security researchers found the doors wide open.

Here is an AI assistant that racked up sixty-nine thousand GitHub stars in a month. Peter Steinberger, a developer from Austria, built Moltbot to run nonstop on your machine. It used to be called Clawdbot. The thing reads your calendar, fires off emails, books dinner, runs code. Connects through WhatsApp and Telegram and Signal and iMessage and Slack and Discord. Most chatbots sit there waiting for you to type. This one does not wait. Morning briefings. Schedule reminders. Task alerts. The Iron Man Jarvis fantasy, finally real.

Getting it running takes about an hour. Maybe. You clone a repo, wrestle with environment variables, figure out reverse proxy rules, hand over your messaging credentials, and grant filesystem access. The documentation makes it look simple. One veteran developer with twelve months of Claude Code experience posted that he spent four hours and still could not get it to do anything. The gap between the marketing and the reality mirrors the gap between the promise and the risk.

The fantasy requires access to everything. That access is exactly what makes it dangerous.

The exposure nobody expected

Jamieson O'Reilly, founder of red-teaming firm Dvuln, started scanning the internet last week. He found hundreds of Moltbot instances exposed to the web with no protection.

The Security Breakdown

• Researchers found 1,862 MCP servers exposed with zero authentication; all 119 tested responded without credentials

• 22% of enterprise customers have employees running Moltbot without IT approval, creating shadow AI with full system privileges

• A proof-of-concept supply chain attack via MoltHub reached 16 developers in 7 countries within 8 hours

• Three critical MCP vulnerabilities (CVSS 9.4-9.6) disclosed in six months, all stemming from optional authentication

"Of the instances I've examined manually, eight were open with no authentication at all and exposing full access to run commands and view configuration data," O'Reilly told The Register. The rest showed varying levels of protection. Some appeared to be test deployments. Others were misconfigured in ways that reduced but did not eliminate exposure.

One user had linked their Signal account to a public-facing Moltbot server. Full read access. O'Reilly found the device linking URI and QR codes sitting there for anyone to grab. Tap it on a phone with Signal installed and you are paired to that account. Complete access to encrypted messages. The user thought they were setting up a personal assistant. They created a public window into their private conversations.

Knostic ran a broader scan and found 1,862 MCP servers exposed with no authentication. They tested 119. Every single one responded without requiring credentials.
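Whether a server shows up in a scan like this depends largely on the address it listens on. As a minimal sketch (the function name is hypothetical and this is not part of Moltbot or any MCP SDK), the check below classifies a bind address as loopback-only or not. It says nothing about reverse proxies or port forwarding, which can expose a loopback listener anyway.

```python
import ipaddress


def is_loopback_only(bind_host: str) -> bool:
    """Return True if bind_host is a loopback address (or the name 'localhost')."""
    if bind_host == "localhost":
        # Assumption: 'localhost' resolves to loopback, as it does on typical systems.
        return True
    try:
        address = ipaddress.ip_address(bind_host)
    except ValueError:
        # Any other hostname is treated as potentially exposed.
        return False
    return address.is_loopback


for host in ("127.0.0.1", "::1", "0.0.0.0", "203.0.113.7"):
    label = "loopback only" if is_loopback_only(host) else "not loopback-only"
    print(f"{host}: {label}")
```

A listener on 0.0.0.0 accepts connections on every interface, which is the configuration that turns a personal assistant into a public endpoint when no authentication sits in front of it.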

The problem runs deeper than misconfigurations. Moltbot stores credentials in plaintext Markdown and JSON files under ~/.clawdbot/. No encryption at rest. Hudson Rock researchers warned that infostealer malware families including RedLine, Lumma, and Vidar are already adapting to target these local-first directory structures. Your AI assistant becomes a goldmine for credential harvesting.
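One narrow check a user can run is whether those plaintext files are readable by other accounts on the same machine. The sketch below assumes a POSIX system and uses only the directory name given above; the function name is hypothetical. It does not address the infostealer scenario, since malware running as the owner can read owner-only files too.

```python
import stat
from pathlib import Path


def find_exposed_files(root: Path) -> list[Path]:
    """List regular files under root that group or other users can read."""
    exposed = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        mode = path.stat().st_mode
        if mode & (stat.S_IRGRP | stat.S_IROTH):
            exposed.append(path)
    return exposed


if __name__ == "__main__":
    config_dir = Path.home() / ".clawdbot"  # directory named in the article
    if config_dir.is_dir():
        for path in find_exposed_files(config_dir):
            print(f"readable beyond owner: {path}")
```

Tightening permissions narrows exposure on shared machines. It is not a substitute for encryption at rest.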

The architecture that invites attack

Security researchers have grown visibly frustrated repeating the same diagnosis. Moltbot is not broken. Moltbot works exactly as designed. The design itself is the vulnerability.

"The deeper issue is that we've spent 20 years building security boundaries into modern operating systems," O'Reilly said. "Sandboxing, process isolation, permission models, firewalls, separating the user's internal environment from the internet. All of that work was designed to limit blast radius and prevent remote access to local resources."

AI agents tear all of that down by design. They need to read your files. Access your credentials. Execute commands. Interact with external services. The value proposition requires punching holes through every boundary we spent decades building. When these agents are exposed to the internet or compromised through supply chains, attackers inherit all of that access. The walls come down.

Forrester analyst Jeff Pollard put it bluntly in a blog post: "From a security perspective, it looks like a very effective way to drop a new and very powerful actor into your environment with zero guardrails."

Token Security surveyed its enterprise customers and found that 22% have employees actively using Moltbot. Most without IT approval. Shadow AI in production environments, with full system privileges, connected to corporate messaging and email accounts.

The supply chain that poisoned itself

O'Reilly decided to test how easy it would be to compromise Moltbot users through the official skill library. Skills are packaged instruction sets that extend what Moltbot can do. The MoltHub registry lets developers share them publicly.

He built a skill with a minimal "ping" payload. Uploaded it to MoltHub. Then he artificially inflated the download count until it became the most popular asset on the platform.

Within eight hours, 16 developers in seven countries downloaded his poisoned skill. Had the payload been malicious, anyone who grabbed it that morning would have been compromised before lunch.

"The payload pinged my server to prove execution occurred, but I deliberately excluded hostnames, file contents, credentials, and everything else I could have taken," O'Reilly explained. "This was a proof of concept. In the hands of someone less scrupulous, those developers would have had their SSH keys, AWS credentials, and entire codebases exfiltrated before they knew anything was wrong."

MoltHub states in its developer notes that all code downloaded from the library will be treated as trusted code. No moderation process. Developers are responsible for vetting anything they download. The platform designed for convenience became a vector for compromise.
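Since download counts can be inflated and nothing is moderated, one defensive habit is to pin a skill to the exact bytes someone has already reviewed. The sketch below is a generic illustration of hash pinning with the Python standard library. The function name is hypothetical, and nothing here implies MoltHub offers or checks such digests.

```python
import hashlib
import hmac


def matches_pinned_digest(package_bytes: bytes, pinned_sha256_hex: str) -> bool:
    """Return True only if the package hashes to the digest recorded at review time."""
    actual = hashlib.sha256(package_bytes).hexdigest()
    return hmac.compare_digest(actual, pinned_sha256_hex.lower())


# Hypothetical skill contents, standing in for a file that was read and vetted.
reviewed = b"print('hello from a reviewed skill')\n"
pinned = hashlib.sha256(reviewed).hexdigest()

print(matches_pinned_digest(reviewed, pinned))                  # True
print(matches_pinned_digest(reviewed + b"# tampered", pinned))  # False
```

Pinning only proves the code has not changed since it was reviewed. It does nothing for code nobody has read.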

The protocol underneath shares the same flaws

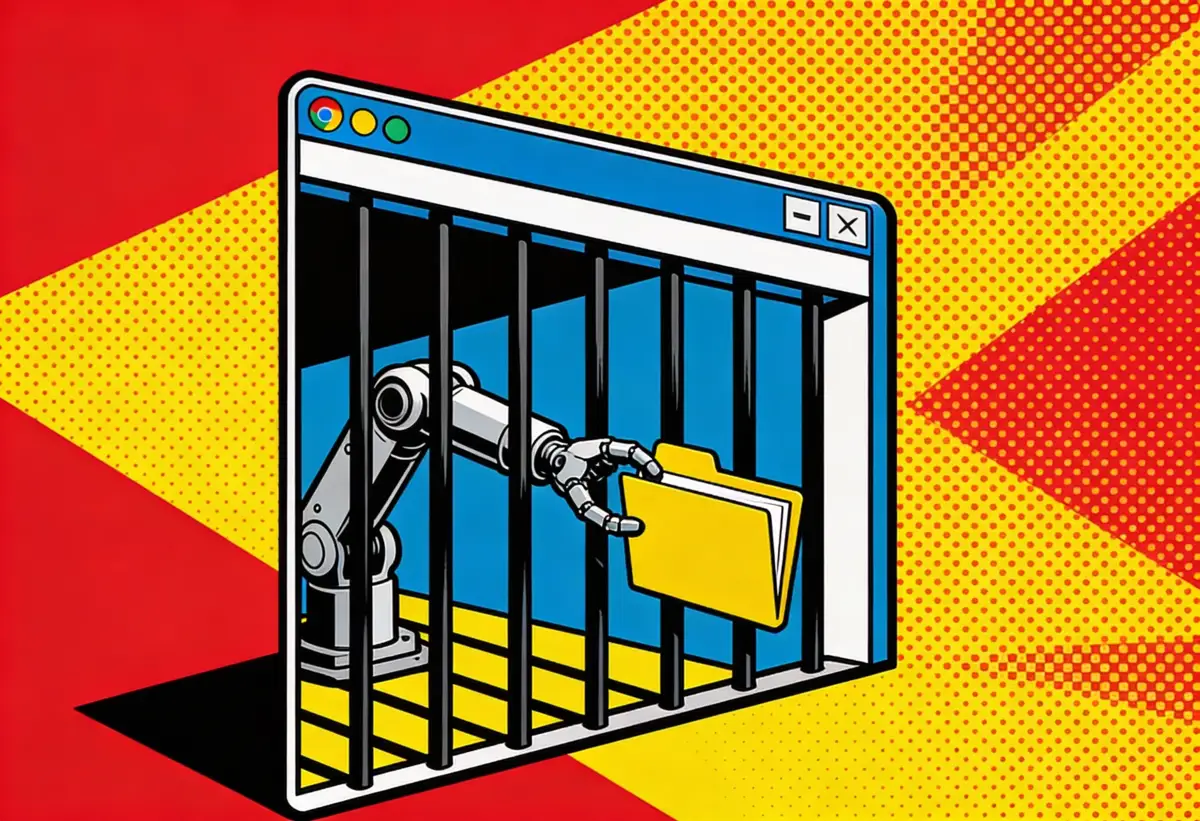

Moltbot runs on the Model Context Protocol, Anthropic's open standard for connecting AI models to external systems. MCP shipped without mandatory authentication. Authorization frameworks arrived six months after widespread deployment.

Itamar Golan sold Prompt Security to SentinelOne last year for an estimated $250 million. This week he posted a warning on X: "Disaster is coming. Thousands of Clawdbots are live right now on VPSs with open ports to the internet and zero authentication. This is going to get ugly."

The vulnerabilities keep stacking. CVE-2025-49596 exposed unauthenticated access in Anthropic's MCP Inspector, scoring 9.4 on the CVSS scale. Bad. CVE-2025-6514 hit 9.6 and enabled command injection through mcp-remote, an OAuth proxy that had been downloaded 437,000 times before anyone noticed. Worse. CVE-2025-68143 allowed path traversal in the official MCP Git server, the reference implementation that developers copy when building their own tools.
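Path traversal is the most mechanical of the three to illustrate. The sketch below shows a generic containment check: resolve the requested path and refuse anything that lands outside the allowed directory. It is not the patch applied to the MCP Git server, and the function name is hypothetical.

```python
from pathlib import Path


def resolve_inside(base_dir: Path, requested: str) -> Path:
    """Resolve requested relative to base_dir, rejecting paths that escape it."""
    base = base_dir.resolve()
    # resolve() collapses '..' segments and follows symlinks before the check.
    candidate = (base / requested).resolve()
    if candidate != base and base not in candidate.parents:
        raise ValueError(f"{requested!r} escapes {base}")
    return candidate
```

A request such as `../../etc/passwd` or an absolute path raises `ValueError`, while `notes/todo.md` resolves to a path under the base directory. Checking the resolved path, rather than searching the raw string for `..`, is what closes the symlink and encoding tricks that string filters miss.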

Three critical vulnerabilities in six months. Three different attack vectors. One root cause. Authentication was always optional, and developers treated optional as unnecessary.
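The alternative to optional authentication is a check that fails closed: if no token is configured, every request is refused rather than allowed. The sketch below is a minimal illustration using a bearer token. The function and parameter names are hypothetical and do not come from the MCP specification or any SDK.

```python
import hmac
from typing import Optional


def is_authorized(authorization_header: Optional[str],
                  configured_token: Optional[str]) -> bool:
    """Accept a request only if it presents the configured bearer token."""
    if not configured_token:
        # Fail closed: a server with no token configured rejects everything.
        return False
    if not authorization_header:
        return False
    scheme, _, presented = authorization_header.partition(" ")
    if scheme.lower() != "bearer":
        return False
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(presented.encode(), configured_token.encode())
```

The first branch is the design choice that matters. A server that treats a missing token as "authentication disabled" reproduces the default that left these instances open.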

Equixly analyzed popular MCP implementations and found that 43% contained command injection flaws, 30% permitted unrestricted URL fetching, and 22% leaked files outside intended directories. Pynt's research showed that deploying just 10 MCP plugins creates a 92% probability of exploitation.
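To get a feel for how a figure like 92% can arise, here is a back-of-envelope calculation. It assumes every plugin carries the same independent chance of being exploitable. That is a simplification for illustration, not Pynt's stated methodology, which this article does not describe.

```python
def compound_risk(per_plugin_risk: float, plugin_count: int) -> float:
    """Chance that at least one of plugin_count independent plugins is exploitable."""
    return 1.0 - (1.0 - per_plugin_risk) ** plugin_count


def implied_per_plugin_risk(total_risk: float, plugin_count: int) -> float:
    """Per-plugin risk that would produce total_risk under the same assumption."""
    return 1.0 - (1.0 - total_risk) ** (1.0 / plugin_count)


p = implied_per_plugin_risk(0.92, 10)
print(f"implied per-plugin risk: {p:.1%}")
for n in (1, 3, 5, 10):
    print(f"{n:>2} plugins: {compound_risk(p, n):.0%}")
```

Under that assumption, a per-plugin risk of a little over one in five is enough to reach 92% across ten plugins. Each added integration multiplies the odds that all of them are safe by a number below one.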

Prompt injection makes everything worse. Security researcher Johann Rehberger disclosed a file exfiltration vulnerability back in October. An attacker could hide instructions inside a document. Moltbot reads the document, follows the hidden instructions, and uploads sensitive files to an attacker's server. The user never sees it happen.

Anthropic launched Cowork this month, expanding MCP-based agents to a broader, less security-aware audience. Same vulnerability, immediately exploitable. PromptArmor demonstrated the attack with a malicious document that manipulated the agent into uploading sensitive financial data. Anthropic knew. They shipped anyway.

The company's official mitigation guidance reads like a shrug: Users should watch for "suspicious actions that may indicate prompt injection." Good luck spotting the attack you never see.

When to use MCP Servers

Standardized integrations that work across Claude, ChatGPT, and VS Code with better isolation.

• Enterprise tool integration — Connect AI to Slack, Asana, Figma, Salesforce with audit trails

• Interactive dashboards — MCP Apps render forms, charts, and data tables inside the chat

• Multi-user environments — Shared AI capabilities across teams with centralized controls

When to use Moltbot

Always-on personal assistant with proactive messaging and persistent memory.

• 24/7 personal automation — Morning briefings, reminders, and alerts without prompting

• Cross-platform messaging — Interact through WhatsApp, Signal, iMessage, Telegram

• Long-term memory — Recalls conversations from weeks ago via local SQLite and Markdown

The rebrand that attracted scammers

Anthropic asked Steinberger to change the project name last Monday. Trademark concerns. "Clawd" sounds too much like "Claude." He agreed to the rebrand from Clawdbot to Moltbot. The name references molting. Lobsters do it when they outgrow their armor. They crack open, crawl out bigger, and spend days soft and defenseless while the new shell forms. Steinberger leaned into the metaphor: "Same lobster soul, new shell."

During the transition, Steinberger tried to rename the GitHub organization and X handle simultaneously. He released the old name. Crypto scammers grabbed it before he could claim the new one. Ten seconds, maybe less.

Fake tokens launched using the project name. One reached a $16 million market cap before crashing. Steinberger responded on X: "Any project that lists me as a coin owner is a SCAM. No, I will not accept fees. You are actively damaging the project."

A malicious VS Code extension impersonating Clawdbot appeared on the marketplace. Aikido researchers caught it installing a ScreenConnect RAT on developers' machines.

The people who know better keep using it anyway

Despite everything, adoption accelerated. Cloudflare stock surged 14% last week, driven entirely by speculation that Moltbot uses Cloudflare's infrastructure to connect with commercial AI models. People bought Mac Minis specifically to run dedicated Moltbot instances around the clock. The M4 Mac Mini starts at $599. Add an Anthropic API subscription and you are paying hundreds of dollars monthly in token costs. One user burned through three hundred dollars in a day. Just tokens.

A few people tried to limit the damage. They gave Moltbot its own Apple account for iMessage, its own Gmail for signups, its own GitHub handle for code. Separate identities that could not touch the owner's real credentials. Others ran it inside virtual machines using UTM, isolating the assistant from their actual files.

The excitement drowned out the warnings. Tech insiders treated the security disclosures as background noise while celebrating the hardware purchases.

MacStories editor Federico Viticci spent a week testing the tool and described it as "Claude with hands." 1Password's Jason Meller documented how Moltbot built him a fully featured kanban board within an hour. Another user reported that when Moltbot could not book a restaurant through OpenTable, it downloaded its own AI voice software and called the restaurant directly.

That capability is precisely the problem. Moltbot improvises. It grabs whatever tools it needs. It can apply general world knowledge, specific skills, and near-perfect memory toward objectives you set. And toward objectives it decides to set for itself.

Heather Adkins, VP of security engineering at Google Cloud, has spent her career building security systems. She posted three words on X: "Don't run Clawdbot."

Eric Schwake, director of cybersecurity strategy at Salt Security, described the gap between enthusiasm and expertise: "A significant gap exists between the consumer enthusiasm for Clawdbot's one-click appeal and the technical expertise needed to operate a secure agentic gateway."

Many users unintentionally create massive visibility voids by failing to track which tokens they have shared with the system. Without enterprise-level insight into these hidden connections, even a small misconfiguration turns a useful tool into an open back door.

The future that security cannot catch

Moltbot is not an anomaly. It is a preview.

AI agents require broad access to be useful. Narrow permissions defeat the purpose. Every major AI lab is building tools that read your email, browse your files, execute your code, and interact with your services. The architecture demands trust. The internet rewards exploitation.

Palo Alto Networks chief security officer Wendi Whitmore warned earlier this month that AI agents could represent the new era of insider threats. Deployed across organizations, trusted to carry out tasks autonomously, they become attractive targets for attackers looking to hijack these agents for personal gain.

The security community has been shouting into the wind for months. They documented the risks. They published the warnings. They watched developers deploy anyway, and users connect their most sensitive accounts anyway.

Steinberger wanted to show what personal AI assistants could become. The lobster shed its old shell and grew larger. It remains soft and exposed, waiting for armor that may never harden. The holes in the walls are still there. The attackers are already walking through.

Frequently Asked Questions

Q: What is Moltbot and why did it change its name from Clawdbot?

A: Moltbot is an open-source AI assistant that runs locally on your machine and connects through messaging apps like WhatsApp and Signal. Anthropic asked developer Peter Steinberger to rename it from Clawdbot due to trademark concerns, as "Clawd" sounds too similar to "Claude."

Q: How do attackers exploit exposed Moltbot instances?

A: Misconfigured Moltbot deployments expose admin interfaces to the internet without authentication. Attackers can access conversation histories, retrieve API keys, execute commands, and steal credentials stored in plaintext files. One researcher found Signal account QR codes sitting on a public server.

Q: What is the Model Context Protocol (MCP) and why is it vulnerable?

A: MCP is Anthropic's open standard for connecting AI models to external systems. It shipped without mandatory authentication, and authorization frameworks arrived six months after widespread deployment. Three critical CVEs have been disclosed in six months, and Equixly found command injection flaws in 43% of the popular implementations it analyzed.

Q: How much does running Moltbot cost?

A: Beyond hardware costs (M4 Mac Mini starts at $599), users pay for API tokens. Heavy use can run hundreds of dollars monthly, with one user reporting $300 spent in a single day. The assistant makes many API calls behind the scenes for agentic tasks.

Q: Can Moltbot be run securely?

A: Some users isolate risk by giving Moltbot separate accounts for iMessage, Gmail, and GitHub that cannot touch real credentials. Others run it in virtual machines using UTM. Security experts recommend strong authentication, firewall rules, and treating the agent like an employee who will execute any instruction literally.
