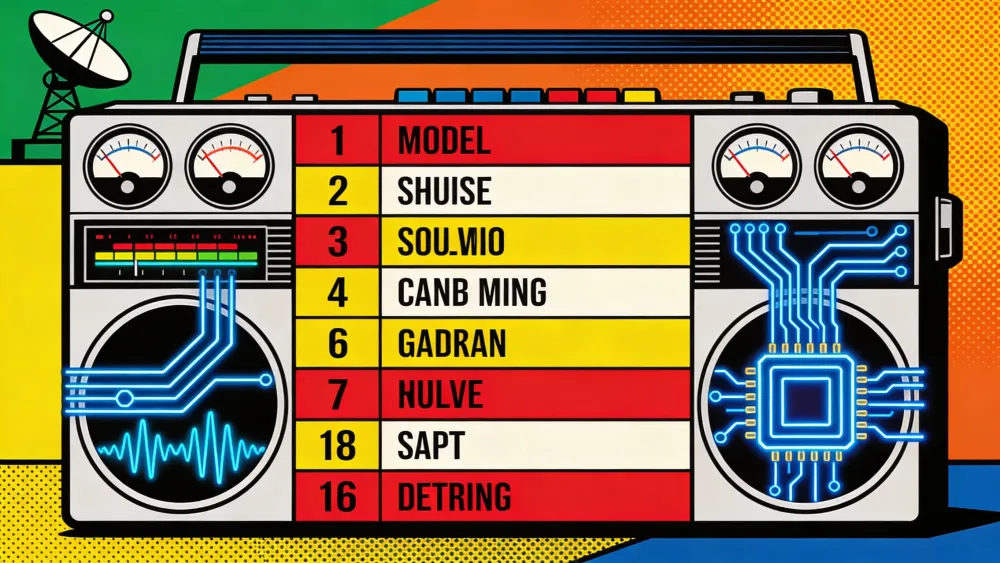

Implicator.ai on Friday released the AI Top 40, a weekly chart that scrapes 10 independent benchmarks and boils them down to one number per model. Forty models from 18 labs made the cut. The chart updates every Saturday and anyone can embed it for free.

The system pulls raw scores from Chatbot Arena, SWE-bench Verified, GPQA Diamond, ARC-AGI, Humanity's Last Exam, LiveCodeBench, MMLU-Pro, HELM, Artificial Analysis, and the HuggingFace Open LLM Leaderboard. It normalizes them using Z-score standardization and applies a three-tier weighting system we call the Implicator Algorithm.
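To make the normalization step concrete, here is a minimal Python sketch of Z-score standardization applied to two benchmarks that report on very different scales. The model names and scores are hypothetical, and dividing by the population standard deviation is an assumption, since the exact formula is not spelled out here.

```python
from statistics import mean, pstdev


def z_scores(raw: dict[str, float]) -> dict[str, float]:
    """Standardize one benchmark's raw scores to mean 0 and unit spread."""
    values = list(raw.values())
    mu = mean(values)
    sigma = pstdev(values)  # assumption: population standard deviation
    if sigma == 0:
        # Every model scored the same, so the benchmark carries no signal.
        return {model: 0.0 for model in raw}
    return {model: (score - mu) / sigma for model, score in raw.items()}


# Hypothetical raw scores: Elo ratings vs. percentage of issues resolved.
arena_elo = {"model_a": 1500.0, "model_b": 1450.0, "model_c": 1400.0}
swe_bench_pct = {"model_a": 60.0, "model_b": 70.0, "model_c": 50.0}

arena_z = z_scores(arena_elo)
swe_z = z_scores(swe_bench_pct)

# After standardization, leading Arena by 50 Elo and leading SWE-bench by
# 10 points land on the same scale: both leaders sit about 1.22 standard
# deviations above their benchmark's mean.
```

Once every benchmark is expressed in standard deviations from its own mean, an Elo rating and a pass percentage can be averaged without one scale swamping the other.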

Key Takeaways

- The AI Top 40 aggregates 10 independent benchmarks into a single 0-100 composite score, updated every Saturday

- Contamination-resistant benchmarks like SWE-bench and ARC-AGI carry 4x the weight of Chatbot Arena in the composite

- GPT-5.4 leads with 100.0 despite Claude Opus 4.6 topping Arena, because GPT-5.4 qualifies on 8 benchmarks vs. Claude's 5

- The chart ranks 40 models from 18 labs and is free to embed on any website via a public GitHub widget

AI-generated summary, reviewed by an editor. More on our AI guidelines.

How the weighting works

Not all benchmarks carry equal influence. The algorithm assigns 2.0x weight to five Tier 1 benchmarks that resist data contamination and use objective pass/fail scoring: SWE-bench Verified, LiveCodeBench, GPQA Diamond, ARC-AGI, and Humanity's Last Exam (HLE). HELM, Artificial Analysis, and the HuggingFace Open LLM Leaderboard get 1.0x as Tier 2. Chatbot Arena and MMLU-Pro sit in Tier 3 at 0.5x, so each Tier 1 benchmark carries four times the weight of a Tier 3 benchmark in a model's composite.
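The tier weights can be sketched in a few lines of Python. The weights below come from the description above; combining them as a weighted mean over only the benchmarks a model appears on is an assumption, as is the function name `weighted_composite`. The z-scores in the example are hypothetical.

```python
# Tier weights as described in the article.
TIER_WEIGHTS = {
    # Tier 1: contamination-resistant, objective scoring
    "SWE-bench Verified": 2.0,
    "LiveCodeBench": 2.0,
    "GPQA Diamond": 2.0,
    "ARC-AGI": 2.0,
    "Humanity's Last Exam": 2.0,
    # Tier 2
    "HELM": 1.0,
    "Artificial Analysis": 1.0,
    "HuggingFace Open LLM Leaderboard": 1.0,
    # Tier 3
    "Chatbot Arena": 0.5,
    "MMLU-Pro": 0.5,
}


def weighted_composite(z_by_benchmark: dict[str, float]) -> float:
    """Weighted mean of z-scores over the benchmarks a model appears on.

    Assumption: missing benchmarks are skipped rather than scored as zero.
    """
    total_weight = sum(TIER_WEIGHTS[name] for name in z_by_benchmark)
    weighted_sum = sum(TIER_WEIGHTS[name] * z for name, z in z_by_benchmark.items())
    return weighted_sum / total_weight


# Hypothetical model: one standard deviation above the mean on Arena,
# one below on SWE-bench Verified.
example = weighted_composite({"Chatbot Arena": 1.0, "SWE-bench Verified": -1.0})
# (0.5 * 1.0 + 2.0 * -1.0) / 2.5 = -0.6, so the SWE-bench miss dominates.
```

Under these assumptions, a strong Arena showing cannot cancel an equally weak Tier 1 result, which is the behavior the 4:1 ratio is meant to produce.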

That last decision will draw attention. Chatbot Arena, the crowdsourced benchmark that has become AI's most influential ranking, gets the lowest weight. The reason is blunt: the game is rigged. The "Leaderboard Illusion" paper by researchers at Cohere, Stanford, MIT, and Allen AI audited 2 million Arena battles and found that select labs could privately test dozens of model variants before publishing only the best score. Meta tested 27 private Llama-4 variants in a single month before the public launch. Twenty-seven.

A model must appear on at least 5 of 10 benchmarks to qualify. An earlier experiment with a minimum of 3 caused statistical inflation, allowing models that happened to score well on a narrow set of favorable tests to outrank models measured honestly across eight.
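A short Python sketch shows how the threshold changes the outcome. The two models and their z-scores are hypothetical, and the ranking uses a plain unweighted mean to keep the example focused on the qualification rule rather than on tier weights.

```python
from statistics import mean


def rank(models: dict[str, dict[str, float]], minimum: int) -> list[str]:
    """Rank models by mean z-score, keeping only those on `minimum`+ benchmarks."""
    eligible = {
        name: mean(list(z_scores.values()))
        for name, z_scores in models.items()
        if len(z_scores) >= minimum
    }
    return sorted(eligible, key=eligible.get, reverse=True)


# Hypothetical data: one model measured on three favorable tests,
# another measured across eight.
models = {
    "narrow_model": {"bench_1": 1.5, "bench_2": 1.4, "bench_3": 1.6},
    "broad_model": {f"bench_{i}": 0.9 for i in range(1, 9)},
}

with_minimum_3 = rank(models, minimum=3)  # narrow_model outranks broad_model
with_minimum_5 = rank(models, minimum=5)  # narrow_model no longer qualifies
```

With a minimum of 3, the narrowly tested model takes first place on the strength of three scores. Raising the bar to 5 removes it from the chart entirely.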

GPT-5.4 holds the top spot

The current rankings, published March 28, put OpenAI's GPT-5.4 at #1 with a perfect 100.0 composite. Claude Opus 4.6 sits at #2 with 93.2. Grok 4 takes third at 86.6. Sharp-eyed readers will notice every model currently shows 12 weeks on the chart. That is because we seeded the system by backfilling 12 weeks of historical data from the same benchmark snapshot. Everyone entered on the same starting line. The counters will start to diverge as new models qualify and older ones drop off in future weeks.

GPT-5.4's top ranking contradicts Chatbot Arena, where Claude Opus 4.6 holds #1 with an Elo rating of 1,504. But Arena carries 0.5x weight in the composite. GPT-5.4 qualifies on eight benchmarks, including all five in Tier 1. Claude qualifies on five and is absent from GPQA Diamond, MMLU-Pro, and HELM, three benchmarks where GPT-5.4 scores near the top.

Same logic as a decathlon. Win the 100-meter sprint by a mile, still lose to the athlete who placed well in eight events. The algorithm rewards breadth of verified performance, not dominance on a single structurally compromised leaderboard.

The open-weight picture

Alibaba's Qwen 3 235B ranks #8 overall. That makes it the highest open-weight model on the chart, sitting above Claude Opus 4, o3, o4-mini, Gemini 2.5 Pro, and GPT-4.1. DeepSeek has two entries: R1 at #16 and V3 at #21. Meta's Llama 4 Maverick lands at #31.

Eleven of the 40 qualifying models carry open weights. The chart splits into three views generated from the same underlying data: an Open Weights board (green), a Commercial board (gold), and an All Models board covering both.

Why another ranking

Benchmark data is abundant but scattered across a dozen sites with a dozen scoring systems. SWE-bench reports percentage of resolved GitHub issues. Arena spits out Elo ratings. MMLU-Pro gives you a number out of 100. Lining those up side by side tells you nothing, and cherry-picking whichever benchmark makes your model look best has become standard practice among labs announcing new models. Anyone who's tried to compare five models across five leaderboards knows the frustration firsthand.

The AI Top 40 sits alongside Implicator.ai's LLM Popularity Meter, where the editorial team assigns subjective satisfaction scores based on daily use. Vibes vs. data. Both update weekly, and they complement each other: the Popularity Meter captures which model a writer reaches for at 2 AM on deadline, while the AI Top 40 captures which model survives ten independent exams.

The format borrows from music

Casey Kasem would recognize it. The name pays tribute to the radio host who turned chart countdowns into a weekly American habit, and the design borrows accordingly. Rank numbers sit inside circles. Movement arrows show who climbed and who fell. "New entry" badges flag first appearances. Open it on your phone and the feeling is immediate: you're reading a printed chart from 1986, monospace type and all, except the entries are language models instead of pop singles.
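The movement arrows and "New entry" badges boil down to comparing two weekly rank lists. Here is a minimal Python sketch of that comparison. The function name `movement`, the label strings, and the sample rankings are all hypothetical, and this is not the widget's actual code.

```python
from typing import Optional


def movement(this_week: list[str], last_week: Optional[list[str]]) -> dict[str, str]:
    """Label each charting model as 'up N', 'down N', 'same', or 'new'."""
    # Map each model to last week's 1-based rank.
    previous = {model: pos for pos, model in enumerate(last_week or [], start=1)}
    labels = {}
    for pos, model in enumerate(this_week, start=1):
        if model not in previous:
            labels[model] = "new"
        elif previous[model] > pos:
            labels[model] = f"up {previous[model] - pos}"
        elif previous[model] < pos:
            labels[model] = f"down {pos - previous[model]}"
        else:
            labels[model] = "same"
    return labels


# Hypothetical rankings, best first.
last = ["model_a", "model_b", "model_c"]
this = ["model_b", "model_a", "model_d"]

labels = movement(this, last)
# model_b climbed one place, model_a fell one, model_d is a first appearance.
```

A model that drops off the chart, like `model_c` here, simply has no label, which matches how a printed countdown only lists the current entries.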

Publishers and developers can grab the chart for their own pages. The widget ships as one JavaScript file, wrapped in a Shadow DOM so it stays out of your stylesheet's way. Two lines of HTML get it running. There are four chart variants, with data served from a public GitHub repository and cached on jsDelivr. Free to embed, attribution required.

Next update drops April 5. The full chart, methodology breakdown, and embed codes are live at implicator.ai/ai-top-40.

Frequently Asked Questions

What is the AI Top 40?

A weekly composite leaderboard from Implicator.ai that ranks large language models by aggregating scores from 10 independent benchmarks into a single 0-100 score using Z-score standardization and tier-weighted scoring.

Why does GPT-5.4 rank above Claude Opus 4.6 when Claude leads Chatbot Arena?

Chatbot Arena carries only 0.5x weight in the composite due to documented integrity issues. GPT-5.4 qualifies on 8 benchmarks including all five Tier 1 tests, while Claude qualifies on 5 and is absent from GPQA Diamond, MMLU-Pro, and HELM.

Which benchmarks carry the most weight?

Five Tier 1 benchmarks get 2.0x weight: SWE-bench Verified, LiveCodeBench, GPQA Diamond, ARC-AGI, and Humanity's Last Exam. These resist data contamination and use objective pass/fail scoring.

How many models qualify for the chart?

Forty models from 18 labs currently qualify. A model must appear on at least 5 of the 10 benchmarks to make the cut. Eleven are open-weight, 29 are commercial.

Can I embed the AI Top 40 on my website?

Yes. The embeddable widget requires two lines of HTML and is free to use with attribution. Four chart variants are available: all models, open weights, commercial, and a three-board combo. Code and data live in a public GitHub repository.

Implicator
