Inception on Tuesday released Mercury 2, a language model built on a diffusion architecture that the startup says generates text more than five times faster than comparable systems, Bloomberg reported. The Palo Alto-based company, founded by Stanford computer science professor Stefano Ermon, claims Mercury 2 generates 1,009 tokens per second on standard Nvidia GPUs and prices it at $0.25 per million input tokens and $0.75 per million output tokens. Menlo Ventures led a $50 million seed round in the startup last year, with backing from Microsoft's M12, Snowflake Ventures, Databricks, and angel investors including former Google CEO Eric Schmidt, Andrew Ng, and Andrej Karpathy.

The speed claim arrives at a moment when the AI industry is visibly anxious about latency. Most production AI no longer works as a single prompt returning a single answer. Agents chain dozens of inference calls per task. Retrieval pipelines stack multiple model queries in sequence. Voice interfaces need sub-second responses or users hang up. In every one of these scenarios, milliseconds lost per call compound across every step in the loop.

Key Takeaways

- Inception says Mercury 2 generates 1,009 tokens per second on standard Nvidia GPUs, priced at $0.25 per million input tokens

- Diffusion architecture produces multiple tokens simultaneously, scrapping one-at-a-time autoregressive decoding

- Google and Together AI are pursuing similar diffusion approaches, validating the core architecture

- Inception targets latency-sensitive workloads: voice AI, code editors, and agentic loops where milliseconds compound

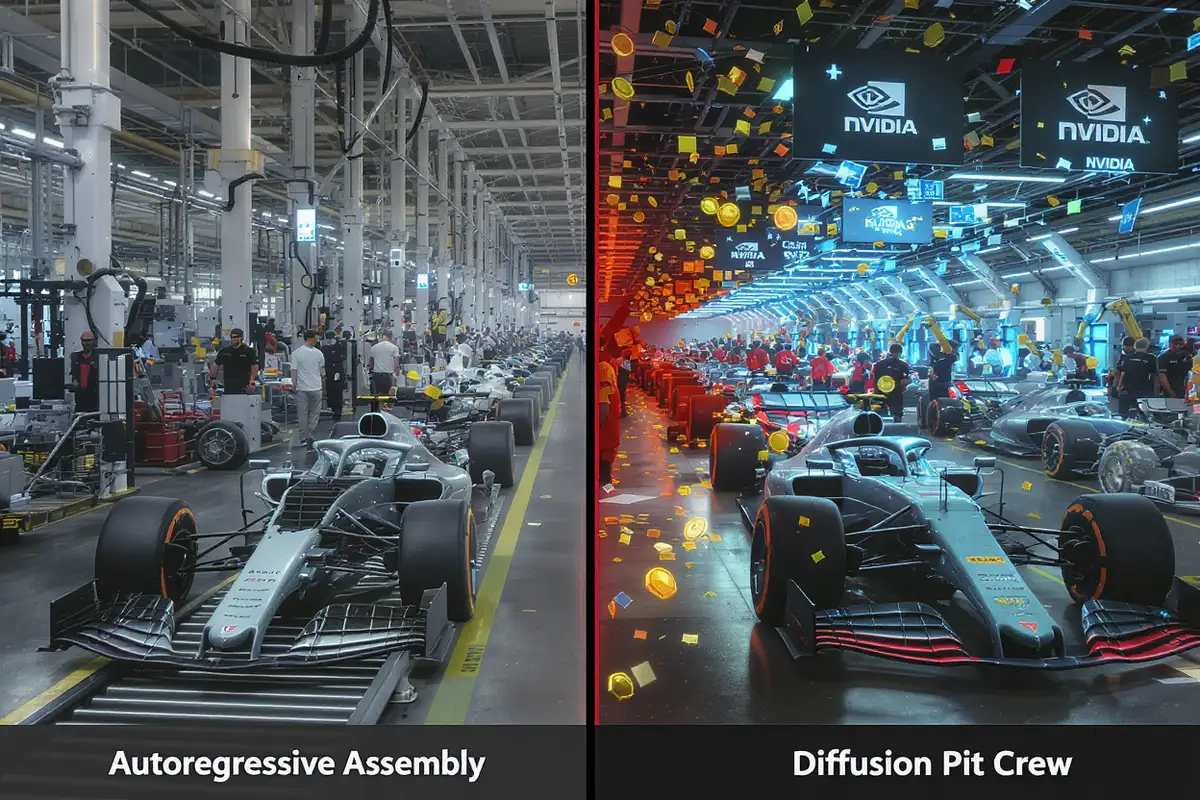

How diffusion flips text generation

Standard language models generate text one token at a time. One word, then the next, then the next after that. Always waiting. That's autoregressive decoding, the same bottleneck running inside GPT, Claude, and nearly everything else on the market. Faster GPUs help only so much. The constraint is in the sequencing, not the silicon.

Mercury 2 abandons that constraint. Instead of sequential generation, it produces multiple tokens at once through parallel refinement. Ermon described the process to Bloomberg as starting "with a sketch of the answer and then it refines it in parallel." The output begins as noise and converges toward coherent text over a small number of denoising steps. At Stanford, Ermon helped develop the diffusion techniques that underpin image generators such as Stable Diffusion and DALL-E. His bet with Inception is that the same trick works for text.
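To make the contrast concrete, here is a toy sketch of parallel refinement. It is not Inception's algorithm. It assumes a masked-style variant of text diffusion, and `toy_denoiser` is a hypothetical stand-in for a trained model that simply knows the answer and attaches a random confidence to each guess. The only point is the pass count: several tokens are committed per pass instead of one.

```python
import random

MASK = "<mask>"

def toy_denoiser(seq, target, rng):
    """Hypothetical stand-in for a trained model: in a single pass it proposes
    a token and a confidence score for every position that is still masked."""
    return {i: (target[i], rng.random()) for i, tok in enumerate(seq) if tok == MASK}

def parallel_refine(target, tokens_per_step, seed=0):
    """Start fully masked, then commit the most confident proposals each pass."""
    rng = random.Random(seed)
    seq = [MASK] * len(target)
    steps = 0
    while MASK in seq:
        proposals = toy_denoiser(seq, target, rng)
        # Keep the top-k most confident positions; the rest stay masked.
        best = sorted(proposals, key=lambda i: proposals[i][1], reverse=True)
        for i in best[:tokens_per_step]:
            seq[i] = proposals[i][0]
        steps += 1
    return seq, steps

target = "the editor reworks the whole page in passes".split()
seq, steps = parallel_refine(target, tokens_per_step=3)
# One-token-at-a-time decoding needs one pass per token.
print(f"{len(target)} sequential passes vs {steps} parallel passes")
# prints: 8 sequential passes vs 3 parallel passes
```

A real model would have to earn those confident guesses, and each pass costs more than a single autoregressive step, so the pass count alone does not settle the speed question.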

A typist grinds through a page one keystroke at a time. An editor reworks the whole thing in passes. The typist hits a speed ceiling no matter how fast the fingers move. The editor works on everything simultaneously. That structural gap is what Inception is exploiting. The company's founders didn't try to build a faster autoregressive model. They scrapped autoregressive decoding entirely.

This parallel approach carries a second advantage beyond raw throughput. Because diffusion models process entire sequences at once, they squeeze more output from the same number of GPUs. For companies running AI at production scale, that means serving more users on existing hardware or running larger models within the same latency budget. GPU costs dominate every AI company's income statement right now. The math gets attention fast.

And Inception doesn't need custom silicon to pull any of this off. Several competitors are pouring hundreds of millions into specialized AI chips designed to outrun Nvidia's inference speed. MatX, founded by two Google chip alumni, raised more than $500 million in a round led by Jane Street just this week. Inception is making the opposite bet. The right software architecture, running on commodity Nvidia GPUs already deployed in every major data center on the planet, can match or beat purpose-built hardware on speed. That's the claim, anyway.

Mayfield's managing partner Navin Chaddha told Bloomberg the approach amounts to "re-imagining how models should be built." Mayfield invested before Inception had proven the technology worked.

The production argument

Inception is not trying to dethrone frontier reasoning models. Mercury 2 targets a different race entirely, one defined by latency-sensitive production workloads where the wait actively degrades the experience. Code editors where a two-second spinner breaks your train of thought. Voice agents where any perceptible pause kills the illusion. Agentic loops punish latency the hardest. Thirty inference calls per task, and every slow one drags the whole chain.
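A rough back-of-the-envelope sketch shows how the wait compounds. The 30 calls and the 1,009 tokens per second come from the article. The 500 output tokens per call is an assumption, and the baseline throughput is derived from Inception's "more than five times faster" claim rather than measured. Network and queueing overhead are ignored.

```python
def chain_latency_s(calls, tokens_per_call, tokens_per_second, overhead_s=0.0):
    """Wall-clock seconds for a chain of calls that must run one after another."""
    return calls * (overhead_s + tokens_per_call / tokens_per_second)

CALLS = 30                        # calls per task, as cited in the article
TOKENS_PER_CALL = 500             # assumption, for illustration only
MERCURY_TPS = 1009                # Inception's claimed throughput
BASELINE_TPS = MERCURY_TPS / 5    # assumption derived from the "five times" claim

fast = chain_latency_s(CALLS, TOKENS_PER_CALL, MERCURY_TPS)
slow = chain_latency_s(CALLS, TOKENS_PER_CALL, BASELINE_TPS)
print(f"{fast:.1f}s vs {slow:.1f}s per task")
```

Under those assumptions the same task takes about 15 seconds instead of well over a minute, which is the difference between an agent a user waits for and one they abandon.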

The customers aren't shy about it. Skyvern CTO Suchintan Singh told Inception that Mercury 2 "is at least twice as fast as GPT-5.2." Zed co-founder Max Brunsfeld said suggestions arrive "fast enough to feel like part of your own thinking, not something you have to wait for." If you've watched a code completion spinner hang while the cursor blinks, doing nothing, you know exactly why that speed gap matters.

On pricing, the company is being aggressive. Inception claims Mercury 2 delivers higher quality than Claude 4.5 Haiku at roughly one-fifth the latency and less than a quarter of the cost. It ships with a 128K context window, native tool use, tunable reasoning depth, and structured JSON output aligned to user-defined schemas. OpenAI API compatibility means developers can point existing integrations at Mercury 2 with a configuration change rather than a rewrite.
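What OpenAI API compatibility implies in practice can be sketched with nothing but the standard library. The request shape below follows the OpenAI chat completions format. The hosts, the keys, and the model identifiers, including `mercury-2`, are hypothetical placeholders, not values confirmed by Inception. The request is built but never sent.

```python
import json
import urllib.request

def build_chat_request(base_url, api_key, model, prompt):
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

prompt = "Summarize this ticket in one line."

# Hypothetical existing integration.
before = build_chat_request("https://llm-a.example.com/v1", "KEY_A", "existing-model", prompt)
# Hypothetical swap: only the endpoint, key, and model name change.
after = build_chat_request("https://llm-b.example.com/v1", "KEY_B", "mercury-2", prompt)

print(after.get_method(), after.full_url)
print(json.loads(after.data)["model"])
```

The calling code and the message format stay the same across both requests, which is the substance of the compatibility claim.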

Menlo Ventures partner Tim Tully told Bloomberg the model has "pretty much replaced Google Search" for him. He thinks the speed advantage could finally make customer service bots tolerable. "I want to feel like I'm talking to a human," Tully said. "I don't want to feel like I'm talking to a computer."

Voice AI companies see the clearest immediate value. Happyverse AI CEO Max Sapo called low latency "everything" for building lifelike video avatars that hold real-time conversations. Mercury 2 "has been a big unlock in our voice stack," he said. OpenCall CEO Oliver Silverstein called the model's speed "excellent" for responsive voice agents. Every one of these companies says the same thing in different words. Speed is not a nice feature. It's what determines whether the product works at all.

There's also a less obvious angle to the pricing. At $0.25 per million input tokens, Mercury 2 is cheap enough to slot into high-volume, low-margin production pipelines that couldn't justify calling a frontier model at all. Customer support routing. Document classification at scale. Real-time search result summarization. These are workloads where the alternative isn't a better model. The alternative is a rule-based system or no AI at all. Inception is betting that speed and price together open an entirely new category of customer.
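The arithmetic behind that bet is simple enough to sketch. The per-token prices are the ones Inception quotes. The workload, one million documents at 800 input tokens and 20 output tokens each, is an assumption chosen to resemble a classification job.

```python
INPUT_USD_PER_M = 0.25    # quoted price per million input tokens
OUTPUT_USD_PER_M = 0.75   # quoted price per million output tokens

def workload_cost_usd(requests, input_tokens, output_tokens):
    """Total cost in dollars for a batch of identically sized requests."""
    input_cost = requests * input_tokens / 1_000_000 * INPUT_USD_PER_M
    output_cost = requests * output_tokens / 1_000_000 * OUTPUT_USD_PER_M
    return input_cost + output_cost

# Assumed workload: classify one million documents.
cost = workload_cost_usd(requests=1_000_000, input_tokens=800, output_tokens=20)
print(f"${cost:,.2f}")  # prints: $215.00
```

At roughly two hundredths of a cent per document under these assumptions, the comparison is no longer against a bigger model but against not using a model at all.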

Diffusion models gain ground across the industry

Inception is not the only lab chasing this architecture. Google's Gemini Diffusion has demonstrated what researchers describe as commercial-grade parity with autoregressive models on key benchmarks. And Together AI released a post-training technique called Consistency Diffusion Language Models (CDLM) earlier this month that cuts inference latency by up to 14.5 times on coding benchmarks without destroying output quality.

Together AI's technique tackles a specific weakness in diffusion-based text generation. Standard diffusion language models produce tokens in parallel but pay a heavy computational price for it. Full bidirectional attention has to be recomputed across the entire context at every denoising step. Cutting the number of steps to save time normally causes accuracy to collapse. CDLM addresses both problems through block-wise causal attention masks that enable KV caching for completed blocks, a fix that required rethinking how attention flows through the denoising process at the GPU kernel level. On GSM8K chain-of-thought reasoning, that delivered an 11.2x latency improvement. On the MBPP coding benchmark, the improvement reached 14.5x.
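The general idea of a block-wise causal mask can be shown in a few lines. This is a sketch of the concept, not Together AI's implementation, and the block size and sequence length are arbitrary. Tokens attend freely inside their own block and to every earlier block, but never to a later one.

```python
def block_causal_mask(seq_len, block_size):
    """mask[i][j] is True when position i may attend to position j.

    Attention is bidirectional inside a block and causal across blocks."""
    return [
        [(j // block_size) <= (i // block_size) for j in range(seq_len)]
        for i in range(seq_len)
    ]

mask = block_causal_mask(seq_len=6, block_size=2)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
# prints:
# xx....
# xx....
# xxxx..
# xxxx..
# xxxxxx
# xxxxxx
```

Because no finished block ever looks at a later one, its keys and values stop changing once it is done, which is what makes caching them possible.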

A year ago, Inception was the only company shipping diffusion language models commercially. Now Google, Together AI, and a growing number of academic labs are converging on the same conclusion. Parallel text generation is architecturally faster than sequential generation, and the quality penalty is shrinking with each new paper. That research momentum validates the core technical claim Inception has staked its business on. If diffusion for text turned out to be a dead end, Inception would be stranded. Google investing in the same architecture means Inception was right about the physics of the problem, even if Google eventually becomes the competition.

What Inception still needs to prove

Speed alone doesn't win model selection decisions. Even Inception's own backers frame this carefully. Tully pitched Mercury 2 for latency-sensitive applications specifically, not as a replacement for frontier reasoning models that handle complex multi-step chains.

Inception describes Mercury 2 as "competitive with leading speed-optimized models" on quality benchmarks, but it hasn't published detailed head-to-head comparisons against frontier models on harder reasoning tasks. The benchmarks on the company's blog cover coding, instruction following, math, and knowledge recall. Those are real categories, and they matter for production workloads. But for the kind of deep multi-step reasoning that OpenAI and Anthropic are optimizing their flagship models around, Inception hasn't shown its cards. The company is selling speed. It hasn't yet had to defend quality in the territory where autoregressive models do their best work.

The market, though, is tilting in Inception's direction. The AI industry's pivot toward agents creates enormous demand for exactly the speed profile Mercury 2 offers. An agentic workflow that chains 30 inference calls to complete a task cares less about peak reasoning quality on any single call and more about getting consistent, fast responses across all of them. If you can get most of GPT-5.2's reasoning capability at five times the speed and a fraction of the cost, a lot of production teams will take that deal without much debate.

Inception has partnerships with Microsoft, Amazon, and Nvidia already in place. The model runs on the most widely deployed GPU hardware on the planet. And the company has backing from names that open enterprise doors before the demo even loads on screen.

But the window matters. Google's Gemini Diffusion work shows that the major labs are absorbing these techniques into their own model families, and they look unbothered by the head start. Groq and Cerebras are pushing inference speed from the hardware side. For Inception, establishing Mercury as the default fast-inference option requires moving before the giants do.

The editor is getting faster. The typist hasn't changed. A thousand tokens per second, on GPUs already sitting in every major data center. For now, nobody else ships that number commercially.

Frequently Asked Questions

What is a diffusion language model?

Instead of generating text one token at a time like GPT or Claude, a diffusion language model starts with noise and refines the entire output in parallel over a few steps. The approach was adapted from image generation, where diffusion models power Stable Diffusion and DALL-E.

How does Mercury 2's speed compare to other models?

Inception claims 1,009 tokens per second on standard Nvidia GPUs, more than five times faster than comparable systems. Skyvern's CTO said it runs at least twice as fast as GPT-5.2. Inception also claims higher quality than Claude 4.5 Haiku at roughly one-fifth the latency.

Who funded Inception and how much have they raised?

Menlo Ventures led a $50 million seed round. Backers include Microsoft's M12, Snowflake Ventures, Databricks, and angel investors Eric Schmidt, Andrew Ng, and Andrej Karpathy. Mayfield also invested before the technology was proven.

Why does inference speed matter so much for AI agents?

Agentic workflows chain dozens of inference calls per task. Each slow response compounds across every step. At 30 calls per task, shaving milliseconds off each one adds up fast. Speed also determines whether voice interfaces feel natural or robotic.

Are other companies building diffusion language models?

Yes. Google's Gemini Diffusion has shown commercial-grade parity with autoregressive models. Together AI released CDLM, a technique cutting inference latency by up to 14.5x on coding benchmarks. A year ago Inception was alone. Now multiple labs are converging on the architecture.

Implicator
