Meta is preparing to release its first AI models developed under chief AI officer Alexandr Wang, with plans to eventually offer open-source versions, Axios reported Monday. Before any public release, the company intends to keep some components proprietary and run safety evaluations, according to sources familiar with the plans. Not a full reversal. The announcement lands after months of speculation that Meta would abandon open source entirely, and it suggests the company has settled on a middle ground.

Key Takeaways

- Meta will open-source some new AI models but keep its largest models proprietary, shifting to a hybrid strategy under Alexandr Wang

- The delayed "Avocado" model trails Google, OpenAI, and Anthropic on reasoning and coding in internal tests

- Wang's consumer-focused approach relies on Meta's 3.5 billion daily platform users as a distribution advantage

- The retreat from open-source AI is industry-wide, with Alibaba also keeping its most powerful models closed

AI-generated summary, reviewed by an editor. More on our AI guidelines.

A hybrid bet, not a full retreat

Wang has indicated that some of Meta's largest new models will remain proprietary, with the company planning to release open-source versions of others over time. That is a sharp departure from the company's earlier approach, when it released full Llama model weights for anyone to download and modify at will.

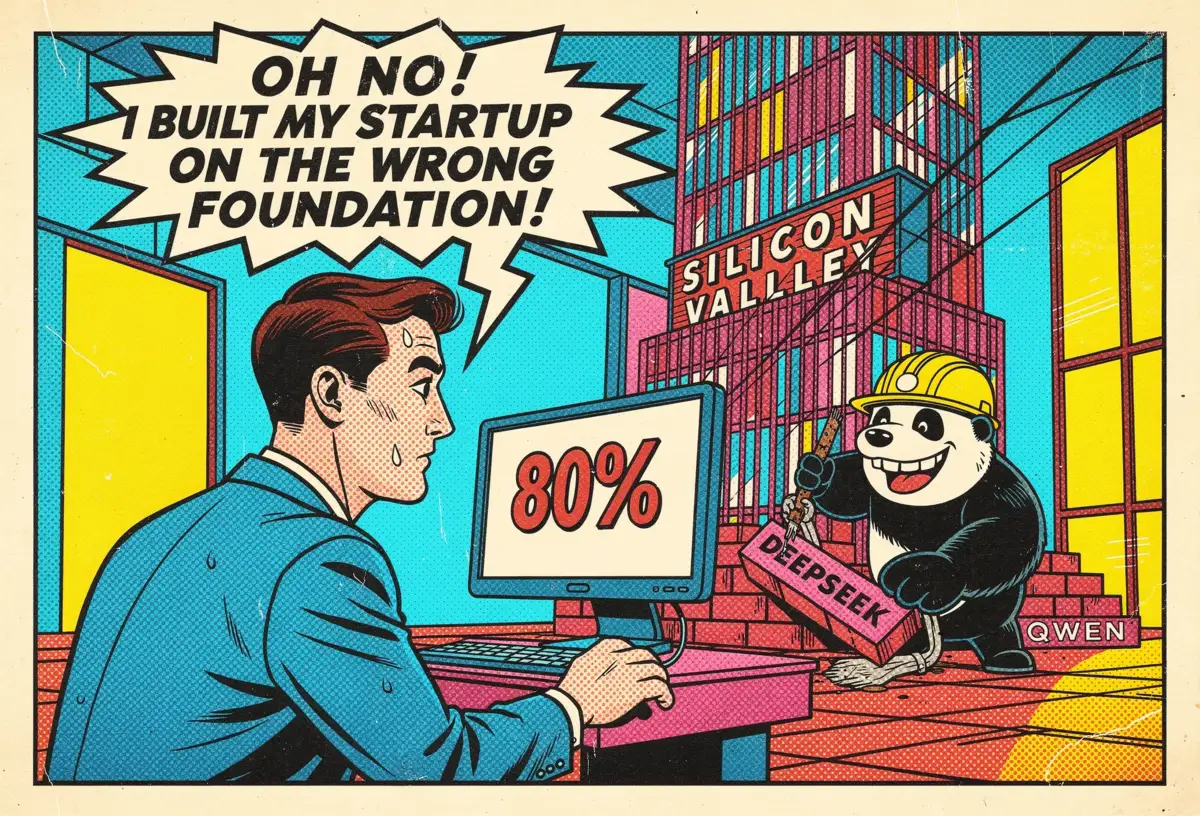

The shift follows a bruising year inside Meta's AI divisions. DeepSeek's R1 model incorporated pieces of Llama's architecture to build a competitive system at a fraction of the cost. A Chinese rival, powered by Meta's own openness. People inside the AI division were livid. Llama 4 dropped in April 2025, and the response from developers was brutal. Coding benchmarks? Behind Google. Reasoning? Behind Anthropic and OpenAI. Not close, either.

But Wang has not abandoned the philosophy entirely. "We'll probably release a mixture of closed and open source models, but we're still very committed to open source as a company," he told the Economic Times in February. The difference now is operational. Meta picks what it shares and when, rather than defaulting to openness as a brand identity.

Avocado still isn't ripe

The new model family arrives under a cloud. Meta's next-generation model, codenamed "Avocado," was supposed to ship in March. It did not. Internal tests showed the model lagging behind Google's Gemini 3, OpenAI's GPT-5 updates, and Anthropic's Claude on reasoning and coding. The release slipped to May or June. Worse, Meta's leadership reportedly discussed licensing Gemini from Google, temporarily, to keep its own consumer AI products functional while engineers at the Menlo Park campus work through the problems.

Wang joined Meta through a $14.3 billion deal that gave the company a 49% stake in his startup Scale AI last June. He has since led Meta's Superintelligence Labs and the elite TBD Lab where Avocado is being built. The models now carry the full weight of that investment.

Meta acknowledges the gap with competitors. Sources told Axios the company "knows its new models may not be competitive across the board" with upcoming releases from rival labs, but believes it will find consumer-facing strengths others lack. The financial pressure behind that confidence is enormous. Meta raised its 2026 capital expenditure guidance to a range of $115 billion to $135 billion. If you are spending at that scale, a model that merely "shows the rapid trajectory we're on," as a Meta spokesperson told CNET, does not reassure anxious investors.

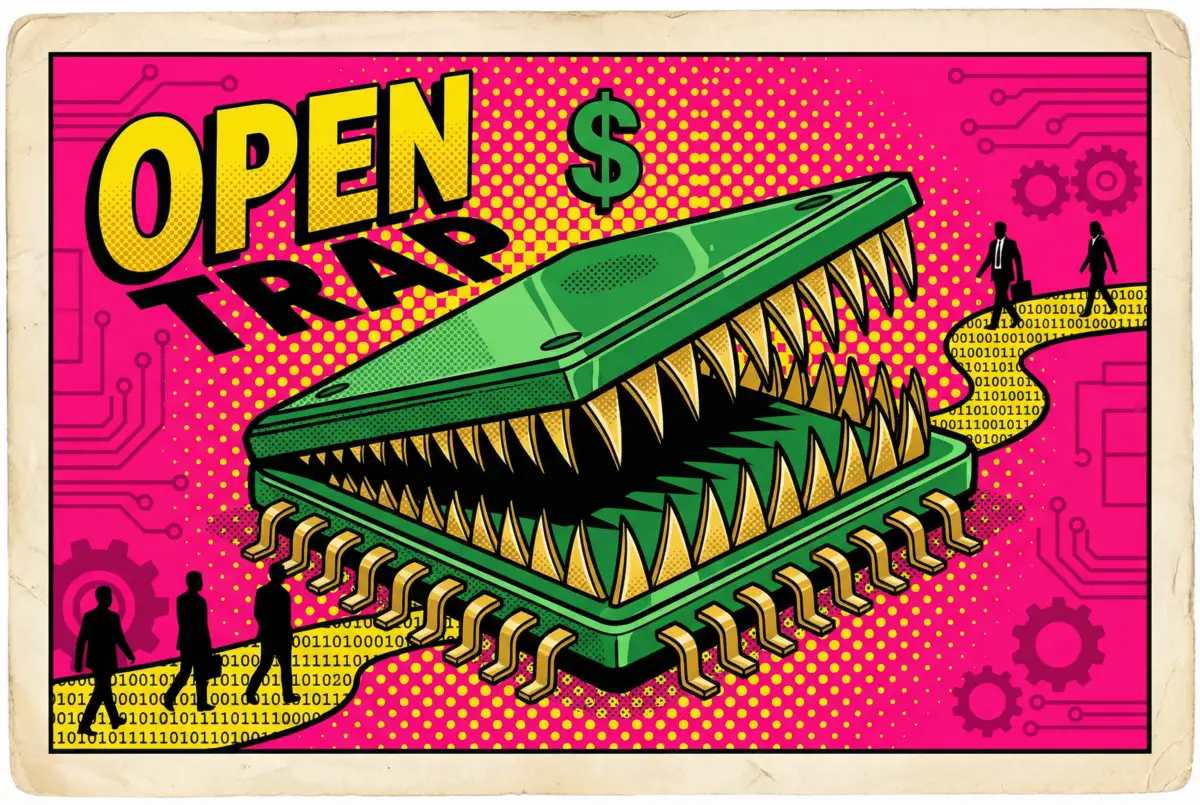

Open source under pressure everywhere

Meta's hedging fits a pattern now visible across the industry. Alibaba recently kept its most powerful new Qwen models proprietary, reversing years of open releases. The playbook is consistent on both sides of the Pacific: seed the developer ecosystem with open models, then close the door on the systems that matter most. If you have watched the open-source AI space over the past year, this should feel familiar.

And yet open source still produces concrete results. Cursor, the AI coding startup valued at $29.3 billion, built its Composer 2 on top of Moonshot AI's open-source Kimi K2.5. Roughly a quarter of the final model's training compute came from that base. The rest was Cursor's own reinforcement learning, layered on top. One product launch, and the practical value of open weights snaps into focus. That tension between strategic caution and ecosystem demand is exactly what Meta is trying to navigate.

Distribution as the real bet

Wang sees the competitive map differently from other lab leaders. OpenAI and Anthropic are increasingly focused on governments and enterprise contracts. Meta's play, per sources, targets consumers.

Three and a half billion people use Meta's platforms daily. AI already runs inside WhatsApp, Facebook, and Instagram. Free global services that no pure-play AI lab can replicate. Build the widest funnel, not necessarily the best model. That is the wager.

The competition is not waiting. Anthropic is testing a model it describes as a "step change" beyond its current offerings. OpenAI is signaling its own significant advances. Both could ship before Meta does.

What the first models under Wang actually look like, whether the open-source versions follow weeks or months behind the closed ones, and how Avocado performs against rivals that have already lapped it will reveal more about Meta's real AI strategy than anything its executives have promised so far.

Frequently Asked Questions

What is Meta's new open-source AI strategy?

Meta plans to release open-source versions of some AI models developed under chief AI officer Alexandr Wang, while keeping its largest and most powerful models proprietary. This hybrid approach replaces Meta's earlier strategy of releasing full Llama model weights openly.

What happened to Meta's Avocado AI model?

Avocado, Meta's next-generation foundation model, was delayed from its original March 2026 target to May or June. Internal testing showed it falling behind Google, OpenAI, and Anthropic on reasoning and coding tasks. Reports suggest Meta discussed temporarily licensing Google's Gemini.

Why did Meta shift away from fully open-source AI?

DeepSeek used Llama's architecture to build a competitive Chinese AI system, upsetting Meta insiders. Llama 4's poor developer reception in April 2025 also weakened the case for full openness. The new strategy aims to protect competitive advantages while maintaining developer ecosystem engagement.

How does Meta plan to compete with OpenAI and Anthropic?

Meta is betting on distribution rather than model supremacy. With 3.5 billion daily users across WhatsApp, Facebook, and Instagram, Meta can embed AI into services already used globally. Wang sees this consumer focus as distinct from rivals that increasingly target governments and enterprises.

Who is Alexandr Wang and what is his role at Meta?

Wang is Meta's chief AI officer and head of Superintelligence Labs. He joined through a $14.3 billion deal when Meta acquired a 49% stake in his company Scale AI in June 2025. He leads the elite TBD Lab developing the new AI models.
