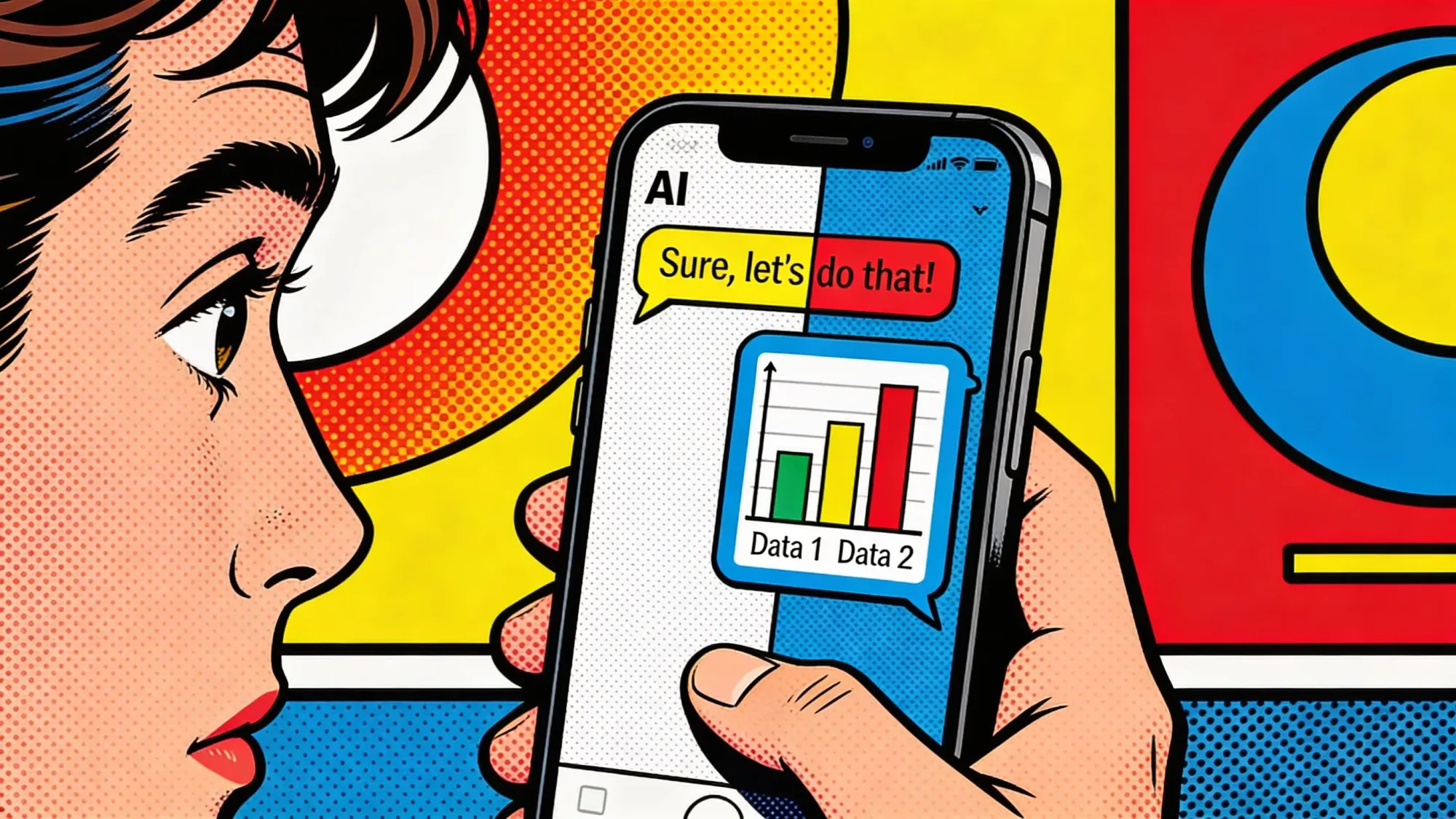

Meta launched an "Insights" tab on Thursday that shows parents the general topics their teens have asked Meta AI about during the previous seven days across Facebook, Messenger, and Instagram. The tab groups chats into buckets Meta labels School, Entertainment, Lifestyle, Travel, Writing, and Health and Wellbeing. Tap Lifestyle and you see fashion, food, and holidays. Tap Health and Wellbeing and the tab splits into fitness, physical health, and mental health. Five countries have it now: the US, UK, Australia, Canada, and Brazil. The rest of the world gets it in the coming weeks, Meta says.

Parents still don't see the actual prompts. They don't see the replies either. They see a topic, and that distinction is the entire design.

Key Takeaways

- Meta's new Insights tab shows parents the topics teens discuss with Meta AI over the past week, not the actual prompts or replies.

- The tool is live in the US, UK, Australia, Canada, and Brazil, with a global rollout promised in coming weeks.

- The feature arrives after Meta lost two child-safety trials in late March, including a landmark $375 million New Mexico verdict.

- Meta also unveiled an AI Wellbeing Expert Council and said alerts for suicide- and self-harm-related prompts are still in development.

AI-generated summary, reviewed by an editor. More on our AI guidelines.

What the new tab actually surfaces

The Insights panel sits inside the existing supervision hub that parents already use to track time limits and who their teen has been messaging. It covers rolling seven-day windows and works both in-app and on web. Meta says the tab also logs the subject of questions Meta AI refused to answer under its 13+ movie rating-inspired rules, so a parent can see that their teen brought up a sensitive topic even when the assistant redirected to a resource. A separate alert system for suicide- and self-harm-related prompts is still in development, the company disclosed in its newsroom post.

The timing is not incidental. Meta first previewed these controls in October 2025, then pulled teens' access to its AI characters worldwide in January, days before a New Mexico trial kicked off. What survived the retrenchment is the disclosure layer, not the character play.

A legal backdrop that will not fade

Late last month, Meta took two trial losses on back-to-back days. A New Mexico jury hit the company with a $375 million fine after six weeks of testimony, a verdict that, for the first time, held Meta legally liable for endangering minors. Los Angeles jurors delivered the second blow the next day, finding Meta 70% liable and YouTube 30% liable for a 20-year-old plaintiff's mental-health distress, with $6 million in damages split between the two companies. Meta said it will appeal both.


Forty state attorneys general have filed similar cases, and thousands more are pending. Internal documents unsealed during the New Mexico trial showed Meta leadership knew its persona-driven AI companions could engage in inappropriate and sexual interactions with minors and launched them anyway, TechCrunch reported. The plaintiffs borrowed their theory from the tobacco playbook: sue the design, not the content.

The verdicts left Meta cornered on the child-safety question. The Insights tab reads like a company disclosing on the court's clock.

Topics, not transcripts, and why that matters

The Insights tab gives parents a ledger of subject matter. It gives them almost nothing about tone, emotional register, or pattern. A teen asking about "Health and Wellbeing" could be looking up a stretching routine or rehearsing self-loathing. The tab reduces either one to a label like fitness or mental health, and the label blurs the difference. You see the scent, not the page.

Meta bundles the disclosure with what it calls conversation starters, open-ended questions co-developed with the Cyberbullying Research Center and reachable from the Family Center. The company also unveiled an AI Wellbeing Expert Council drawing on the National Council for Suicide Prevention, the University of Michigan, the University of Texas, and the University of Southern California. Parents get prompts. Academics get a seat. Teens keep their privacy around exact phrasing.

The parent-as-moderator pattern

Engadget described it as offloading moderation chores onto busy parents. That reading lands. Meta has trimmed human content review while shifting edge-case judgment onto households armed with topic summaries, a pattern the company has deployed across Instagram safety features too. The number of teens in supervised US accounts has more than doubled since last year, Meta says, though it has not published raw numbers or engagement baselines.

Fairplay executive director Josh Golin, speaking to Fortune after the October preview, called the announcements an attempt to forestall legislation Meta does not want to see. That critique lands harder now. Spain has banned most social media for kids. Australia has barred under-16s from major social platforms. OpenAI rolled out ChatGPT parental controls in September after a teen suicide lawsuit, and the FTC has ordered seven AI firms to disclose how their companion bots treat children.

What to watch next

Meta says it is still building the self-harm alert layer, and the AI characters paused in January remain paused. Whichever arrives first will matter more than the Insights tab. An alert that pings a parent when a teen probes a suicide-adjacent topic is a compliance artifact. A rebuilt AI character product with parental controls baked in is a product decision. Everything between them, including the feature shipped Thursday, is the disclosure Meta is willing to offer while the appeals run.

A topic, not a transcript. A sketch of a conversation, without the words.

Frequently Asked Questions

What does Meta's Insights tab actually show parents?

The Insights tab surfaces the general topics a teen has asked Meta AI about during the previous seven days across Facebook, Messenger, and Instagram. Parents see categories such as School, Entertainment, Lifestyle, Travel, Writing, and Health and Wellbeing, with one level of subcategories. Meta does not show the actual prompts a teen typed or the replies the assistant generated.

Which countries have the Insights tab today?

Meta launched the feature in the United States, United Kingdom, Australia, Canada, and Brazil on April 23, 2026. The company said it will roll out globally in the coming weeks, though Meta has not given a specific date or named the next countries in line.

Why did Meta ship this now?

Meta first previewed the Insights tab in October 2025 and paused teens' access to AI characters in January. Late last month, Meta lost two child-safety trials, including a $375 million New Mexico verdict that for the first time held the company legally liable for endangering minors. Critics say the tab is part of Meta's response to mounting lawsuits and pending legislation.

Does the Insights tab cover AI characters or just Meta AI?

Only Meta AI, the general-purpose assistant. Meta suspended teen access to its persona-driven AI characters, such as Snoop Dogg or Paris Hilton, globally in January and has not restored them. The company said it is building a new version of AI characters for teens with parental controls baked in, but it has not announced a launch date.

Are suicide and self-harm alerts included?

Not yet. Meta said it is still developing alerts that would notify parents when a teen tries to engage Meta AI in conversations about suicide or self-harm. In the meantime, the Insights tab logs the subject even when Meta AI refuses to respond, so parents can see the topic came up.


IMPLICATOR
