Less than two weeks. That is how long OpenAI waited after releasing GPT-5.4 before shipping two smaller versions designed to do most of the same work at a fraction of the price. GPT-5.4 mini launched Tuesday at $0.75 per million input tokens. The full model costs $2.50. Nano arrived at $0.20, roughly 12x cheaper than the flagship on inputs alone.

The benchmarks tell the story more plainly than any press release. On GPQA Diamond, a graduate-level reasoning test, mini scored 88% against the flagship's 93%. On SWE-bench Pro, which measures real-world coding ability through actual GitHub issues, mini hit 54.4% versus the flagship's 57.7%. And on OSWorld-Verified, a test of whether a model can operate a desktop computer by reading screenshots, mini landed at 72.1%, just below the human baseline of 72.4%, while the flagship reached 75.0%.

Those numbers should make anyone running a large AI budget nervous. Not because the models are bad. Because they are good enough. And good enough at 70% off is exactly how procurement teams start questioning the invoice. The billion-dollar question in enterprise AI just flipped. It used to be "which model is smartest?" Now it is "which model is cheapest per correct answer?"
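"Cost per correct answer" is simple arithmetic. The sketch below rests on several assumptions:

- Every attempt uses the same number of input tokens on either model.
- Failures can be detected and retried independently, so a model with accuracy p needs 1/p attempts on average.
- Output-token charges are ignored.
- Benchmark scores stand in for per-task accuracy, which is itself a simplification.

The 50,000-token task size and the helper name `cost_per_correct` are hypothetical, not OpenAI figures.

```python
def cost_per_correct(price_per_m_input: float, tokens_per_attempt: int, accuracy: float) -> float:
    """Expected input-token spend (USD) for one correct answer.

    Assumes independent, verifiable retries: on average 1 / accuracy
    attempts are needed per success. Output tokens are ignored.
    """
    if not 0 < accuracy <= 1:
        raise ValueError("accuracy must be in (0, 1]")
    cost_per_attempt = price_per_m_input * tokens_per_attempt / 1_000_000
    return cost_per_attempt / accuracy


TOKENS = 50_000  # hypothetical task size, not a measured figure

# Input prices (USD per million tokens) and SWE-bench Pro scores quoted in this article.
flagship = cost_per_correct(2.50, TOKENS, 0.577)
mini = cost_per_correct(0.75, TOKENS, 0.544)

print(f"flagship: ${flagship:.4f} per solved task")
print(f"mini:     ${mini:.4f} per solved task")
print(f"mini / flagship: {mini / flagship:.2f}")
```

The token count cancels out of the ratio. With these inputs, mini's cost per solved task comes out at roughly 0.32 of the flagship's: the 70% price cut outweighs the few points of accuracy it gives up.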

The short version: GPT-5.4 mini hits 94% to 96% of the flagship's scores on key benchmarks. Mini costs 70% less per input token. Nano costs 92% less. Mini is in ChatGPT, Codex, and the API. Nano is API-only at $0.20 per million input tokens. The competitive gravity in AI just shifted from raw capability to cost-per-task, and that changes how companies will architect agentic systems going forward.
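The percentages in that summary can be rechecked from the prices and scores quoted earlier. Here is a quick sketch. The dictionary layout and the `discount` helper are illustrative only. Benchmark ratios are computed for mini, because those are the head-to-head scores the article reports on all three tests.

```python
# Figures quoted in this article: USD per million input tokens, benchmark scores in %.
PRICES = {"gpt-5.4": 2.50, "mini": 0.75, "nano": 0.20}
SCORES = {  # benchmark: (mini score, flagship score)
    "GPQA Diamond": (88.0, 93.0),
    "SWE-bench Pro": (54.4, 57.7),
    "OSWorld-Verified": (72.1, 75.0),
}


def discount(price: float, base: float) -> float:
    """Percentage saved relative to the base price."""
    return (1 - price / base) * 100


for model in ("mini", "nano"):
    print(f"{model}: {discount(PRICES[model], PRICES['gpt-5.4']):.0f}% cheaper on input")

for bench, (small, big) in SCORES.items():
    print(f"{bench}: mini reaches {small / big:.1%} of the flagship")
```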

The Argument

- GPT-5.4 mini hits 94-96% of flagship scores on key benchmarks at 70% lower input-token cost; nano costs 92% less

- OpenAI pitches subagent architecture: flagship plans, mini and nano execute subtasks in parallel for speed and savings

- Long context remains the flagship's edge: mini scores 47.7% vs 86.0% on MRCR multi-needle tests

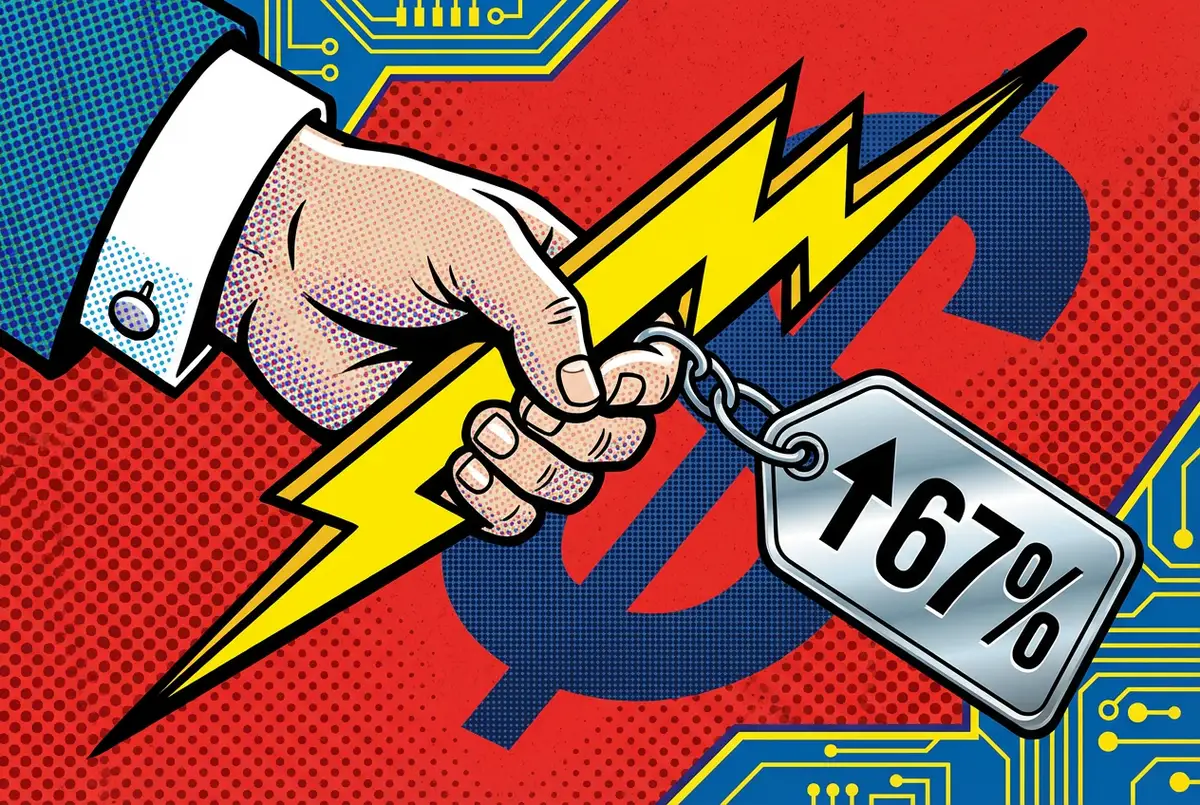

- Small-model prices are rising fast: mini is 3x GPT-5 mini's cost, nano is 4x, complicating the budget narrative

The factory floor ate the flagship

Think of what happened in manufacturing. The artisan workshop gave way to the assembly line. Not overnight. Over decades, one factory floor at a time. The craftsmen did not lose their skills. They lost their pricing power, because ten specialized workers doing narrow tasks at speed will always outrun one generalist doing everything. The AI model market just hit that same wall.

OpenAI is not hiding this. The company's own announcement describes GPT-5.4 mini as purpose-built for "workloads where latency directly shapes the product experience." Read that again. In effect, they are telling you to stop using the flagship for most tasks. The pitch is a two-tier architecture: GPT-5.4 plans and coordinates, then hands off subtasks to mini or nano subagents running in parallel. Search a codebase here, review a document there, classify a form somewhere else.
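The planner/worker split is a fan-out, fan-in pattern. The sketch below uses Python's `asyncio` and makes these assumptions:

- A hypothetical `run_model` coroutine stands in for a real API call. It only sleeps and echoes.
- Hard-coded subtasks replace what a planner model would actually produce.
- The model name strings are assumed.

None of this is OpenAI's SDK or the actual Codex delegation logic.

```python
import asyncio
from dataclasses import dataclass
from typing import List

# Hypothetical model identifiers; check the provider's documentation for real names.
PLANNER = "gpt-5.4"
WORKER = "gpt-5.4-mini"


@dataclass
class Subtask:
    kind: str
    payload: str


async def run_model(model: str, prompt: str) -> str:
    """Hypothetical stand-in for an API call: waits briefly, then echoes."""
    await asyncio.sleep(0.01)  # simulate network latency
    return f"[{model}] {prompt}"


async def plan(goal: str) -> List[Subtask]:
    # A real planner model would generate this list; it is hard-coded
    # here so the sketch runs without network access.
    await run_model(PLANNER, f"plan: {goal}")
    return [
        Subtask("search", "find call sites of parse_config"),
        Subtask("review", "summarize contract section 4"),
        Subtask("classify", "label form #17"),
    ]


async def orchestrate(goal: str) -> List[str]:
    subtasks = await plan(goal)
    # Fan out: every subtask goes to the cheaper worker model concurrently.
    results = await asyncio.gather(
        *(run_model(WORKER, f"{t.kind}: {t.payload}") for t in subtasks)
    )
    # Fan in: a real planner would merge these; the sketch just returns them.
    return list(results)


if __name__ == "__main__":
    for line in asyncio.run(orchestrate("triage incoming work")):
        print(line)
```

`asyncio.gather` runs the worker calls concurrently and returns their results in submission order. Total latency therefore tracks the slowest subtask rather than the sum of all of them. That covers the speed half of the pitch. The savings half comes from the worker calls being billed at the cheaper tier.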

Not a suggestion. A product architecture. In Codex, mini burns only 30% of the GPT-5.4 quota. OpenAI says the flagship can delegate lighter work to mini subagents, though the current Codex docs clarify that subagents are spawned only when explicitly requested. Automatic delegation is the pitch, but it is not shipping yet.

Perplexity's Deputy CTO Jerry Ma, after testing both models, confirmed the division of labor works in practice: "Mini delivers strong reasoning, while nano is responsive and efficient for live conversational workflows." The implication is clear. One brain is not enough. You need a team.

Why the numbers matter more than they look

Anyone following the small-model wars might shrug at another incremental release. Don't. The gap between mini and flagship has compressed to the point where many production workloads will barely register the difference.

Look at coding. GPT-5.4 mini pulls 54.4% on SWE-bench Pro. The flagship gets 57.7%. A gap of 3.3 points. Seven months back, GPT-5 mini managed 45.7%, trailing the best model by a full 12 points. Nearly three-quarters of that gap gone in under a year. The performance ceiling that justified flagship pricing? Collapsing faster than most enterprise budgets can adjust.

Terminal-Bench 2.0 tells it even more bluntly. Mini jumped from 38.2% in the previous generation to 60.0%, a 57% relative gain. The flagship sits at 75.1%, sure, but nano already hits 46.3%, which puts the cheapest current model ahead of where the previous mini generation landed.
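The compression figures for SWE-bench Pro and Terminal-Bench reduce to two formulas: the share of a point gap that has closed, and the relative gain over an old score. The small sketch below uses only the numbers quoted in this article. The helper names are illustrative.

```python
def gap_closed(old_gap: float, new_gap: float) -> float:
    """Fraction of a benchmark gap (in points) that has been eliminated."""
    return (old_gap - new_gap) / old_gap


def relative_gain(old: float, new: float) -> float:
    """Improvement as a fraction of the old score."""
    return (new - old) / old


# SWE-bench Pro: GPT-5 mini trailed by 12 points; GPT-5.4 mini trails by 57.7 - 54.4.
swe_now = 57.7 - 54.4
print(f"SWE-bench Pro gap now {swe_now:.1f} points, {gap_closed(12.0, swe_now):.0%} closed")

# Terminal-Bench 2.0: previous-generation mini at 38.2%, GPT-5.4 mini at 60.0%.
print(f"Terminal-Bench mini gain: {relative_gain(38.2, 60.0):.0%}")

# Nano versus the previous mini generation on the same test.
print(f"nano ahead of old mini by {46.3 - 38.2:.1f} points")
```

The SWE-bench Pro gap works out to roughly 72% closed (12 points down to 3.3). The Terminal-Bench jump works out to about a 57% relative gain.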

But the compression has a price, and it shows up in the one place where small models still choke: long context. On OpenAI's own MRCR test, which buries eight needles in 64K-128K tokens, mini scored 47.7%. The flagship hit 86.0%. That is not a gap. That is a canyon. If the job is tracking dozens of details across a 200-page contract, the flagship still earns every cent. For the bulk of routine API calls? Harder and harder to justify the markup.
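One practical consequence is a routing rule: default to mini, and escalate to the flagship when the job looks like long-context recall. The sketch below makes these assumptions:

- A hypothetical 64K-token cutoff, taken from the lower edge of the MRCR window cited above.
- A crude four-characters-per-token estimate in place of a real tokenizer.
- A caller-supplied flag for recall-heavy tasks.

The model name strings are placeholders.

```python
from dataclasses import dataclass

# Hypothetical cutoff: MRCR was measured at 64K-128K tokens, so this sketch
# escalates anything estimated above 64K tokens. Tune per workload.
LONG_CONTEXT_TOKENS = 64_000


@dataclass
class Request:
    prompt: str
    needs_cross_document_recall: bool = False  # e.g. tracking many contract details


def estimate_tokens(text: str) -> int:
    # Rough heuristic (about 4 characters per token for English text);
    # a real tokenizer should replace this in production.
    return len(text) // 4


def choose_model(req: Request) -> str:
    """Return a placeholder model name for the request."""
    if req.needs_cross_document_recall or estimate_tokens(req.prompt) > LONG_CONTEXT_TOKENS:
        return "gpt-5.4"  # flagship: far stronger on multi-needle recall
    return "gpt-5.4-mini"  # everything else goes to the cheaper tier


print(choose_model(Request("Classify this support ticket.")))
print(choose_model(Request("x" * 400_000)))  # about 100K estimated tokens
```

Where to set the cutoff is a workload question that the benchmark does not settle. MRCR shows mini struggling in that range, not where its degradation begins.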

And the pricing itself carries a wrinkle. Small-model costs have been creeping upward across the industry even as vendors market them as budget options. GPT-5.4 mini at $0.75 per million input tokens is 3x the price of GPT-5 mini's $0.25. Nano at $0.20 costs 4x what GPT-5 nano charged at $0.05. The performance gains are real, but "cheaper than the flagship" is not the same as "cheap."
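The wrinkle is easy to quantify from the prices above and from the SWE-bench Pro scores quoted for GPT-5 mini (45.7%) and GPT-5.4 mini (54.4%). This short sketch compares input prices only.

```python
# Input prices (USD per million tokens) and SWE-bench Pro scores quoted in this article.
gen = {
    "GPT-5 mini": {"price": 0.25, "swe": 45.7},
    "GPT-5.4 mini": {"price": 0.75, "swe": 54.4},
}
nano_price = {"GPT-5 nano": 0.05, "GPT-5.4 nano": 0.20}

old, new = gen["GPT-5 mini"], gen["GPT-5.4 mini"]
price_multiple = new["price"] / old["price"]  # how much more each input token costs
score_multiple = new["swe"] / old["swe"]  # how much the benchmark score rose

print(f"mini price x{price_multiple:.1f}, SWE-bench Pro score x{score_multiple:.2f}")
print(f"nano price x{nano_price['GPT-5.4 nano'] / nano_price['GPT-5 nano']:.1f}")
```

Mini's input price triples while its SWE-bench Pro score rises by about 19%. Whether that trade pays off depends on the workload.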

The Anthropic problem OpenAI can't ignore

CNET framed the release as "part of an effort by OpenAI to lean into coding as it battles with rival Anthropic for the AI software engineering market." That framing understates the anxiety.

Anthropic's Claude Code went GA in May 2025 and spread fast through developer circles. Anthropic says its own internal coding use of Claude grew sharply that year, which tracks with what anyone watching GitHub already suspected. Codex arrived later. It has been chasing ever since. Mini and nano are OpenAI's answer, and the bet is not on brainpower. It is on speed and cost. A coding assistant that sits and thinks for 45 seconds before editing three lines of code loses to one that fires back in under a second, even if the fast one is slightly less accurate.

OpenAI is clearly emboldened by how fast it can ship. GPT-5.3 Instant on March 3. GPT-5.4 two days later. Mini and nano twelve days after that. Four model variants across three announcements in two weeks. A year ago, that kind of cadence would have been absurd. Release cycles measured in quarters back then.

But shipping fast and getting adopted are two different things. OpenAI's competitive position rests on whether developers actually restructure their apps around the subagent pattern. Anthropic is betting the opposite direction: fewer models, more capable, less wiring required. That is not some abstract product-strategy debate. It is the central architectural bet separating the two most valuable AI companies, and production workloads at scale will be the only jury that matters.

And then there is the open-source flank. DeepSeek's V3.1 dropped last August under an MIT license and posted coding benchmarks competitive with the best proprietary models at a fraction of the inference cost. Mistral shipped Small 4 the same week as GPT-5.4 mini. Apache 2.0 license, 119 billion parameters spread across 128 experts. Runs on four H100 GPUs. If open-source small models keep closing the gap, the pricing power that bankrolls OpenAI's research edge bleeds out from below.

Who wins and who pays

The short-term winners are obvious. Startups running high-volume API workloads just got a real cost cut for tasks that do not need deep reasoning. Nano at $0.20 per million input tokens makes classification and extraction pipelines viable at scales that were financially out of reach six months ago. Hebbia's CTO went further on quality, noting that mini "achieved higher end-to-end pass rates and stronger source attribution than the larger GPT-5.4 model" in their document analysis workflows.

Notion's AI engineering lead made the point sharper: until recently, only the most expensive models could reliably handle agentic tool calling, the ability for a model to invoke external software on a user's behalf. Today, mini and nano can do it. Tool calling used to be a premium feature. Now it is a commodity.

The losers are less obvious. Companies that built their competitive moat around access to the biggest, most expensive model just watched that moat shrink. If you are charging customers a premium because GPT-5.4 runs under the hood, the question is now unavoidable: why not switch to mini at roughly one-third the cost for comparable results? Enterprise procurement teams will ask it.

The middleware layer gets squeezed hardest. Dozens of startups raised venture money over the past two years on one thesis: wrap the best model in a vertical interface, charge a margin, stay ahead by integrating each new release before anyone else does. That only works if the model stays expensive enough to justify the markup. It only works if the integration stays hard enough to scare off competitors. Mini and nano blow up both assumptions at once. The model cost drops. OpenAI's own subagent SDK handles the orchestration. The thing middleware companies sold as proprietary? OpenAI is giving it away.

OpenAI itself faces a tension. Every call shifted from flagship to mini brings in 70% less input-token revenue. The company needs developers to run far more total volume on mini and nano to compensate, which means the subagent architecture is not just a product vision. It is a business necessity.
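The revenue math behind that tension is a single division. To earn the same input-token revenue, OpenAI must sell old_price / new_price times as many tokens at the cheaper tier. This sketch assumes identical token counts per task and considers input pricing only. The name `breakeven_volume_multiple` is illustrative.

```python
def breakeven_volume_multiple(old_price: float, new_price: float) -> float:
    """How many times more tokens must be sold at new_price to match revenue at old_price."""
    return old_price / new_price


flagship, mini, nano = 2.50, 0.75, 0.20  # USD per million input tokens, as quoted above

print(f"mini needs {breakeven_volume_multiple(flagship, mini):.2f}x the volume")
print(f"nano needs {breakeven_volume_multiple(flagship, nano):.1f}x the volume")
```

Mini needs roughly 3.3x the volume to replace a flagship call's input revenue. Nano needs 12.5x.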

The test that comes next

The assembly line metaphor has a dark side. OpenAI's product team knows it even if the marketing team would rather not say it out loud. Specialized workers are efficient. They are also replaceable. If mini does 94% of the flagship's work today, what happens when GPT-5.5 mini closes the gap to 97%? At some point the flagship stops being a production tool and becomes a research artifact. Something you benchmark against. Something you never actually deploy.

That is the future OpenAI is building toward, whether it admits it or not. Four variants in two weeks is not a victory lap. It is a company running hard to make sure its small models reach production before somebody else's do. The first vendor to own the cheap-and-good-enough tier of enterprise AI will control where volume lands for years. Watch the next quarterly earnings from companies building on OpenAI's API. If flagship usage drops while total token volume rises, the assembly line won. If developers stick with the flagship because that remaining gap still matters for their use case, the artisan workshop limps on a little longer.

Either way, the question has changed. It is no longer "how smart is your AI?" It is "how smart does your AI need to be?"

Frequently Asked Questions

What is the price difference between GPT-5.4 and GPT-5.4 mini?

GPT-5.4 costs $2.50 per million input tokens. Mini costs $0.75, a 70% reduction. Nano costs $0.20, 92% cheaper than the flagship. However, these smaller models cost more than their predecessors: mini is 3x GPT-5 mini ($0.25), and nano is 4x GPT-5 nano ($0.05).

Where does GPT-5.4 mini fall short compared to the flagship?

Long-context tasks. On OpenAI's MRCR test with eight needles in 64K-128K token windows, mini scored 47.7% versus the flagship's 86.0%. On OSWorld-Verified, mini also lands just below the human baseline (72.1% versus 72.4%), while the flagship reaches 75.0%.

What is the subagent architecture OpenAI is promoting?

A two-tier system where GPT-5.4 handles planning and coordination while mini or nano subagents execute lighter subtasks in parallel. In Codex, mini uses 30% of the flagship's quota. Current docs say subagents are spawned on explicit request, not automatically.

How does GPT-5.4 mini compare to Anthropic's Claude for coding?

Mini scores 54.4% on SWE-bench Pro, close to the flagship's 57.7%. OpenAI is betting on speed and cost to compete with Claude Code, which went GA in May 2025. The architectural bet differs: OpenAI favors many small specialized models, Anthropic favors fewer, more capable ones.

What does this mean for companies building on OpenAI's API?

Companies paying for flagship access face pricing pressure. If mini delivers comparable results at one-third the cost, procurement teams will ask why the switch has not happened. Middleware startups built on model access and integration complexity are most exposed.

IMPLICATOR
