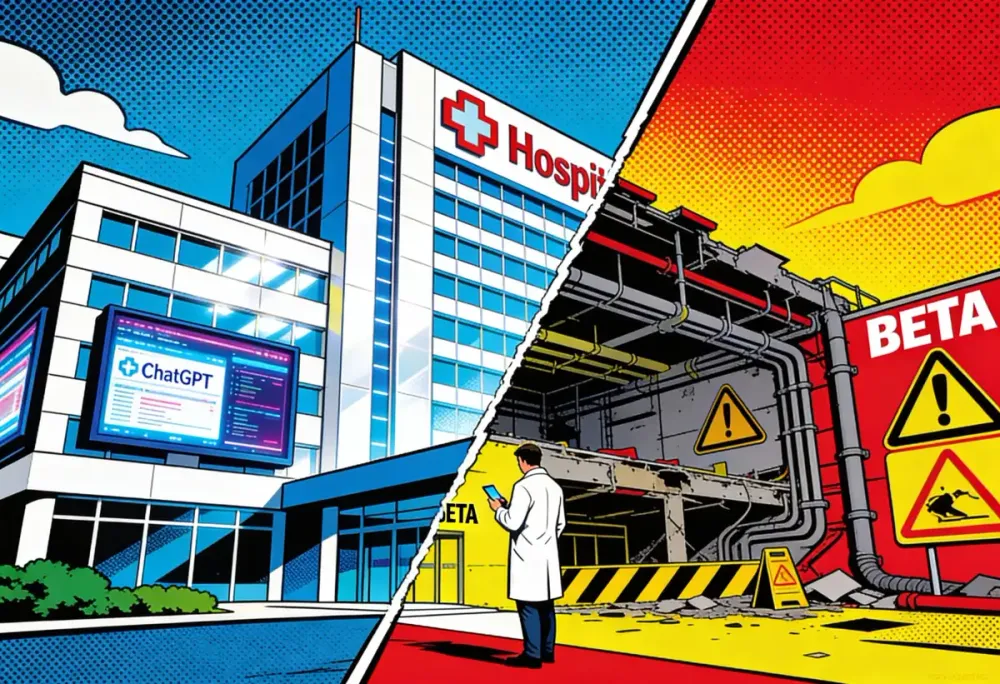

John Brownstein runs innovation at Boston Children's Hospital. He's deploying OpenAI's new healthcare product across his organization. He's also predicting it will hurt someone.

"I'm worried about the potential of an AI adverse event that could set the whole field back," Brownstein told Bloomberg this week. Then the admission. "I think it's very likely we'll see it."

That sentence should stop you. A senior executive at one of America's top pediatric hospitals, signing contracts with OpenAI while publicly acknowledging the technology will probably cause harm. Not might. Will. He's betting the benefits outweigh the casualties. Parents bringing their kids to Boston Children's didn't get a vote on that bet.

Key Takeaways

• OpenAI launched two healthcare products: a consumer app that bypasses HIPAA and an enterprise tool for hospitals that embraces it

• 30% of Boston Children's staff already use a custom in-house tool built on OpenAI's technology, ahead of the official product launch

• Hospital innovation chief John Brownstein publicly predicts an "AI adverse event" while deploying the technology anyway

• 73% of Black-majority neighborhoods in Chicago and 89% in Los Angeles are trauma deserts, where AI may replace rather than supplement care

Two products, two regulatory strategies

OpenAI announced two distinct healthcare products this week. The difference between them amounts to a zoning loophole.

The consumer version launched first. ChatGPT Health pulls medical records through a partnership with b.well, grabs data from Apple Health, and accepts whatever users want to upload. Lab results. Prescriptions. That diagnosis you've been worried about. The legal trick is in the classification. "In the case of consumer products, HIPAA doesn't apply," OpenAI executive Nate Gross explained. When you voluntarily hand over your data, the federal privacy rules that bind hospitals and insurers don't touch the company receiving it.

Think of it as building a commercial structure in a residential zone by calling it a "wellness shed." Same footprint. Same traffic. Different permit requirements.

Then came the enterprise product. ChatGPT for Healthcare ships with HIPAA compliance, a Business Associate Agreement, and the governance features compliance officers require: audit logs, encryption keys, data residency options. AdventHealth, Baylor Scott & White, HCA Healthcare, Memorial Sloan Kettering, Cedars-Sinai, Stanford Medicine Children's Health, and UCSF are already deploying it.

Same company. Same underlying models. Consumer product sidesteps regulation by wearing the wellness label. Enterprise product embraces regulation to win hospital contracts. OpenAI gets the data either way.

Adoption is outrunning the product

At Boston Children's, 30% of employees are already using a custom tool built on OpenAI's technology. Not the official product, which just launched this week. A predecessor they assembled themselves while waiting for the approved version.

That number tells a story about desperation more than enthusiasm. The resident hiding a phone screen during rounds to query a differential diagnosis isn't waiting for the governance committee to finish deliberating. She's solving a problem in the hallway because the official channels move too slowly and the patient needs an answer now.

Brownstein called it "one of the most in-demand and viral spreading use cases that we've seen, that I've maybe ever seen in a physician population." The American Medical Association backs this up. Physician use of AI nearly doubled in a single year. More than 200 million people ask ChatGPT health questions every week, at least a quarter of the platform's 800 million weekly users.

Demand exists. Safeguards are playing catch-up.

Brownstein frames the risk like autonomous vehicle executives do. Aggregate improvements, over time, should outweigh the accidents. Boston Children's is running studies comparing diagnostic accuracy with and without AI. Results aren't published yet. The deployment is already happening.

The FDA's convenient timing

The same day OpenAI announced its healthcare products, the FDA released guidance signaling a hands-off approach to AI clinical decision support. The agency wants to separate systems that collect and output data from systems that diagnose specific conditions.

Andrew Crawford, senior policy counsel at the Center for Democracy & Technology, doesn't buy the distinction. "ChatGPT Health seems to be designed to specifically work in the gray space between simple data collection and software that is intended to provide decision support for the diagnosis, treatment, prevention, cure, or mitigation of diseases," he told Data Breach Today.

OpenAI's marketing exploits exactly this ambiguity. The product page shows prompts like "summarize my latest bloodwork" and promises to help users "interpret materials like test results." Scroll to the legal disclaimers. "Not intended for diagnosis or treatment." The marketing says interpretation. The lawyers say no diagnosis. Try explaining that distinction to someone staring at their phone at 2 AM, wondering if the number on their lab report means cancer.

Crawford raised another gap OpenAI hasn't addressed. "If law enforcement requests OpenAI turn over a user's reproductive health data for an investigation, will OpenAI comply? Will they notify the user?"

OpenAI's documentation says health data remains accessible "through valid legal processes or emergency situations." In states prosecuting abortion, that's not hypothetical.

The graveyard nobody mentions

Ari Robicsek spent years running hospitals before moving into health-tech consulting. His assessment carries the fatigue of someone who's watched this story repeat.

"The battleground of health tech is littered with the bodies of big companies that didn't know anything about health, but that sort of marched in confidently," he told Bloomberg. "It's a hard space."

IBM learned this expensively. Watson Health consumed years and billions trying to crack oncology. MD Anderson paid $62 million for an implementation that never reached patients. IBM eventually sold the division for parts.

Google started pushing AI diagnostics in 2016. Dermatology apps. Diabetic retinopathy screening. Pathology tools. Ten years later, those products exist but haven't reshaped clinical practice the way the press releases promised. Lab conditions aren't hospital conditions. Controlled demos aren't messy ERs.

OpenAI arrives with advantages. Its GPT-5.2 models were evaluated with input from 260 physicians across 60 countries, who reviewed more than 600,000 outputs. The company built HealthBench, an evaluation framework testing clinical reasoning and safety. Guardrails that predecessors skipped.

But the core problem persists. ChatGPT processes language. Medicine involves bodies. A model explains what a lab value typically indicates. It can't see that the patient across the desk looks pale in a way the numbers don't capture, or that she's downplaying symptoms because admitting fear means admitting the diagnosis might be real, or that the sample was drawn after breakfast when fasting was required.

The race to own the interface

Anthropic scheduled its own healthcare event for next week. The company's CEO, Dario Amodei, spent years in biophysics before pivoting to AI. That background matters when you're selling to doctors. Claude has been getting updates aimed at scientific research. One week after OpenAI's announcement? Not a coincidence.

Both companies see the same numbers. Healthcare consumes more than $4 trillion annually in the US. Administrative burden eats roughly a quarter of that. If AI can cut the paperwork keeping clinicians from patients, the market is enormous.

But the features that make healthcare lucrative make it dangerous. Screw up a travel booking and someone ends up at the wrong train station. Screw up diagnostic support and someone ignores chest pain that needed immediate attention.

Robicsek asked the question directly. "What happens if it gives you bad advice about how to manage whether or not you should go to the emergency department tonight with your abdominal pain?"

OpenAI's answer is that ChatGPT Health supplements care rather than replacing it. Users should consult doctors. The AI provides information, not treatment.

That framing ignores who actually uses these tools. This isn't about inconvenience for people with good insurance and a primary care doctor they can call. The Lown Institute mapped the gaps. Chicago: 73% of Black-majority neighborhoods are trauma deserts. Los Angeles is worse, 89%. GoodRx puts the national number at 120 million Americans living in counties without adequate pharmacy or primary care access.

When ChatGPT Health becomes the only doctor available at 2 AM, it isn't supplementing care for these communities. It's replacing what was never there.

The honest assessment

Brownstein's willingness to predict an AI casualty while deploying the technology is unusual. Most executives in his position stick to the upside. He's doing something harder. Acknowledging that the improvement he expects requires accepting losses he can't prevent.

Boston Children's is betting that AI catches more diagnostic errors than it creates. That efficiency gains give clinicians more time with patients. That the 30% of staff already using these tools informally will do better with governance and support.

Maybe the bet pays off. Models have improved. Physician input has been extensive. The safeguards exist this time. None of that changes what Brownstein already conceded.

Healthcare is not a debugging problem. The bodies moving through hospitals aren't edge cases for the next patch. When the AI adverse event Brownstein expects finally arrives, it will have a name and a family and a chart that looked fine until it didn't.

OpenAI is building a healthcare business on the assumption that aggregate improvement justifies individual risk. The hospitals deploying these tools agree. If you're the patient, nobody asked for your vote.

❓ Frequently Asked Questions

Q: What's the difference between ChatGPT Health and ChatGPT for Healthcare?

A: ChatGPT Health is the consumer product. You download it, connect your Apple Health data, upload lab results. No HIPAA protection. ChatGPT for Healthcare is the enterprise product sold to hospitals. It comes with HIPAA compliance, encryption controls, and audit logs. Same AI models underneath, different legal frameworks depending on whether you're paying as an individual or an institution.

Q: Why doesn't HIPAA protect my data if I use ChatGPT Health?

A: HIPAA covers only "covered entities" such as hospitals, insurers, and doctors, along with the business associates that handle data on their behalf. When you voluntarily share health information with a consumer app, that app isn't bound by HIPAA rules. OpenAI classified ChatGPT Health as a wellness product, not a healthcare service. The data you upload gets OpenAI's standard privacy protections, not federal healthcare privacy law.

Q: Can police or prosecutors access health data I share with ChatGPT?

A: Yes. OpenAI's documentation states health data remains accessible "through valid legal processes or emergency situations." That means subpoenas, warrants, and court orders. In states with restrictive abortion laws, reproductive health information shared with ChatGPT Health could be requested by law enforcement. OpenAI hasn't said whether it would notify users.

Q: What happened when IBM tried this with Watson Health?

A: IBM spent years and billions on Watson Health, focusing on oncology. MD Anderson Cancer Center paid $62 million for a Watson implementation that never actually treated patients. The technology worked in demos but failed in messy real-world hospital environments. IBM eventually sold Watson Health for parts in 2022. It's the most expensive cautionary tale in healthcare AI.

Q: What is b.well and how does it connect my medical records to ChatGPT?

A: b.well is a health data platform that aggregates medical records from about 2.2 million US healthcare providers. Through OpenAI's partnership with b.well, ChatGPT Health users can pull records from their doctors, hospitals, and labs into the app. You authorize the connection, and b.well transfers the data. You can revoke access anytime, but data already shared stays with OpenAI.

IMPLICATOR
