OpenClaw creator Peter Steinberger said in a video interview on Saturday that Anthropic appears to have turned against his project's use of Claude consumer subscriptions, recommending developers switch to API keys instead. "I don't think Anthropic likes it anymore," Steinberger told interviewer Peter Yang, without specifying whether Anthropic communicated this directly or through rate-limit changes.

The disclosure came during a wide-ranging conversation for Yang's Behind the Craft series, in which Steinberger also revealed that OpenClaw has grown to 300,000 lines of code as of this week and drawn 2 million visitors in a single seven-day stretch. He described a project that started as a one-hour WhatsApp-to-Claude-Code hookup in November 2025 and has since become one of the most-starred agent frameworks on GitHub.

What makes the interview uncomfortable for the AI coding community is what followed. Steinberger, whose tool is synonymous with agent orchestration, told Yang he personally rejects most of the practices his own users rely on. He doesn't use MCPs. He doesn't use plan mode. He thinks the most hyped multi-agent frameworks produce garbage. If you build AI-powered tools for a living, the creator of the platform you admire just told you he doesn't believe in your workflow.

The Breakdown

• Steinberger says Anthropic appears to be pushing OpenClaw developers away from Claude consumer subscriptions and toward API keys

• OpenClaw has grown to 300,000 lines of code and drew 2 million visitors in a single week, after starting as a one-hour WhatsApp hack in November 2025

• The creator of one of the most popular agent frameworks doesn't use MCPs, plan mode, or multi-agent orchestration himself

• Steinberger predicts 80% of single-purpose phone apps will disappear as AI agents gain persistent API access to services

What Anthropic's pushback means

OpenClaw added support for Claude subscription keys "because it's kind of what everyone does," Steinberger said. Then the hedge: "I would recommend using an API key."

He didn't explain what "I don't think Anthropic likes it anymore" means in practice. We know OpenClaw supports subscription-based access. We know Steinberger now advises against it. We don't know whether Anthropic communicated displeasure formally, adjusted throttling, or simply made it harder for agent frameworks to piggyback on consumer plans.

The friction makes commercial sense. A $20-per-month Claude Pro subscription was built for individual chat sessions, not as a backend for an autonomous agent that fires hundreds of API calls while its owner sleeps. If thousands of OpenClaw users route heavy agent workloads through consumer subscriptions, Anthropic is subsidizing infrastructure it never priced for. That goes beyond a routine business disagreement. Anthropic would be watching its consumer product get arbitraged into someone else's backend, and it could hardly say so publicly without alienating the open-source community that drives its adoption.

The voice message that started it all

The origin story has been circulating secondhand on X for weeks. Steinberger told it in his own words for the first time.

He was on a birthday trip in Morocco when he sent a voice message to his bot. He hadn't built voice support. The typing indicator appeared. A reply came back. Casual, as if voice messages had always worked.

"I'm like, wow. How the F did you do that?" Steinberger said. The agent walked him through its reasoning. It received a file with no extension, inspected the file header, identified the Opus audio format, located FFmpeg on Steinberger's machine, converted the audio to WAV, found an OpenAI API key on his system, used curl to send the file to OpenAI's Whisper transcription API, got the transcript back, and replied.

Steinberger didn't know his agent could do any of that. The agent figured it out by chaining tools it discovered on its own. No MCP server. No skill configuration. Just a model with file system access and the resourcefulness to chain six steps that no one had scripted.
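For readers who want to see how little machinery that chain requires, here is a minimal Python sketch of the steps as Steinberger described them. It is not OpenClaw's code and not what the agent actually ran. The function names are hypothetical, and the endpoint URL, the `whisper-1` model name, and the `OPENAI_API_KEY` environment variable are assumptions based on OpenAI's public transcription API.

```python
from __future__ import annotations

import os
import subprocess
from pathlib import Path

# Assumption: OpenAI's public transcription endpoint and its "whisper-1" model.
TRANSCRIBE_URL = "https://api.openai.com/v1/audio/transcriptions"


def sniff_audio_format(header: bytes) -> str:
    """Guess an audio container from the first bytes of a file."""
    if header.startswith(b"OggS"):
        # Opus-in-Ogg streams carry an "OpusHead" packet in the first page.
        return "ogg-opus" if b"OpusHead" in header else "ogg"
    if header.startswith(b"RIFF") and header[8:12] == b"WAVE":
        return "wav"
    return "unknown"


def ffmpeg_command(src: Path, dst: Path) -> list[str]:
    """Build the FFmpeg call that converts src to 16 kHz mono WAV."""
    return ["ffmpeg", "-y", "-i", str(src), "-ar", "16000", "-ac", "1", str(dst)]


def curl_command(wav: Path, api_key: str) -> list[str]:
    """Build the curl call that uploads the WAV file for transcription."""
    return [
        "curl", "-sS", TRANSCRIBE_URL,
        "-H", f"Authorization: Bearer {api_key}",
        "-F", f"file=@{wav}",
        "-F", "model=whisper-1",
        "-F", "response_format=text",
    ]


def transcribe_voice_note(path: Path) -> str:
    """Run the whole chain; needs ffmpeg, curl, and OPENAI_API_KEY."""
    with path.open("rb") as fh:
        kind = sniff_audio_format(fh.read(64))
    if kind == "unknown":
        raise ValueError(f"unrecognized audio header in {path}")
    wav = path if kind == "wav" else path.with_suffix(".wav")
    if kind != "wav":
        subprocess.run(ffmpeg_command(path, wav), check=True, capture_output=True)
    result = subprocess.run(
        curl_command(wav, os.environ["OPENAI_API_KEY"]),
        check=True, capture_output=True, text=True,
    )
    return result.stdout.strip()
```

Calling `transcribe_voice_note(Path("voice"))` on an extensionless voice note would run all three stages. The point of the anecdote is that nobody wrote a function like this in advance: the agent assembled the equivalent from tools already on the machine.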

"This is much more interesting than using ChatGPT on the web because it's like unshackled ChatGPT," he said.

Airline check-in as the AI Turing test

The interview's most vivid section wasn't about code. It was about what happens when you hand your daily life to an autonomous agent, then stand back.

OpenClaw checked Steinberger into a British Airways flight by browser automation. The agent found his passport on Dropbox, extracted the relevant data, filled in the airline's forms, and clicked through CAPTCHA checks. The first attempt took 20 minutes. Now it finishes in minutes. Steinberger calls airline check-in websites "the AI Turing test" because the multi-step forms, identity verification, and anti-bot measures represent exactly the kind of messy, real-world friction that separates a capable agent from a chatbot.

He gave OpenClaw access to his Philips Hue lights, his Sonos speakers, his home security cameras. He reverse-engineered the Eight Sleep API so the agent could control his bed temperature. He built a CLI that scraped his local food delivery service for real-time ETAs.

His Vienna apartment uses Nuki smart locks. OpenClaw has access to those too. "It could literally lock me out of my house," Steinberger said. When asked, the agent refuses. For now.

The security camera episode was the strangest. Steinberger told OpenClaw to watch for strangers. It monitored his couch all night, taking periodic screenshots, because a blurry camera image looked like someone sitting there. Nobody was. The agent spent hours documenting an empty sofa.

"I don't use MCPs or any of that crap"

This is the line that will travel furthest. OpenClaw is built to support MCP servers, which implement the Model Context Protocol that Anthropic created for connecting AI models to external tools. Steinberger, the person who built the framework, doesn't personally use them.

"I don't use MCPs or any of that crap," he told Yang.

The statement stings because it comes from someone with 20 years of professional software engineering at the system level, not from a skeptic dismissing tools they haven't tried. Steinberger built OpenClaw. He understands what MCPs do. He considers them unnecessary complexity for his own work.

His coding setup is simpler than most AI influencers would admit to. Multiple terminal sessions. Multiple checkouts of the same repository (cloudpod1, cloudpod2, cloudpod3), with agents working in parallel across them. No Git worktrees. No orchestration layer. He compared the workflow to managing units in a real-time strategy game. When all terminals are busy, he calls it "a factory."
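The setup is plain enough to fit in a few lines. The Python sketch below is an illustration of the idea, not Steinberger's tooling: it starts one command per checkout directory and waits for all of them. The helper names are hypothetical, as is whatever agent command a reader passes in. Only the cloudpod directory naming comes from the interview.

```python
from __future__ import annotations

import subprocess
import sys
from pathlib import Path


def checkout_dirs(base: Path, prefix: str, count: int) -> list[Path]:
    """Return numbered sibling checkouts such as base/cloudpod1, base/cloudpod2."""
    return [base / f"{prefix}{i}" for i in range(1, count + 1)]


def run_in_parallel(jobs: dict[Path, list[str]]) -> dict[Path, int]:
    """Start one command per working directory, then collect exit codes."""
    # Popen returns immediately, so every job is running before the first wait.
    running = {cwd: subprocess.Popen(cmd, cwd=cwd) for cwd, cmd in jobs.items()}
    return {cwd: proc.wait() for cwd, proc in running.items()}


# Example (hypothetical agent command, one task per checkout):
#   dirs = checkout_dirs(Path.home() / "code", "cloudpod", 3)
#   run_in_parallel({d: ["my-agent", "--task", "fix the build"] for d in dirs})
```

Because each checkout is a full, independent copy of the repository, the agents cannot trip over each other's uncommitted changes. That is the property worktrees or an orchestration layer would otherwise provide.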

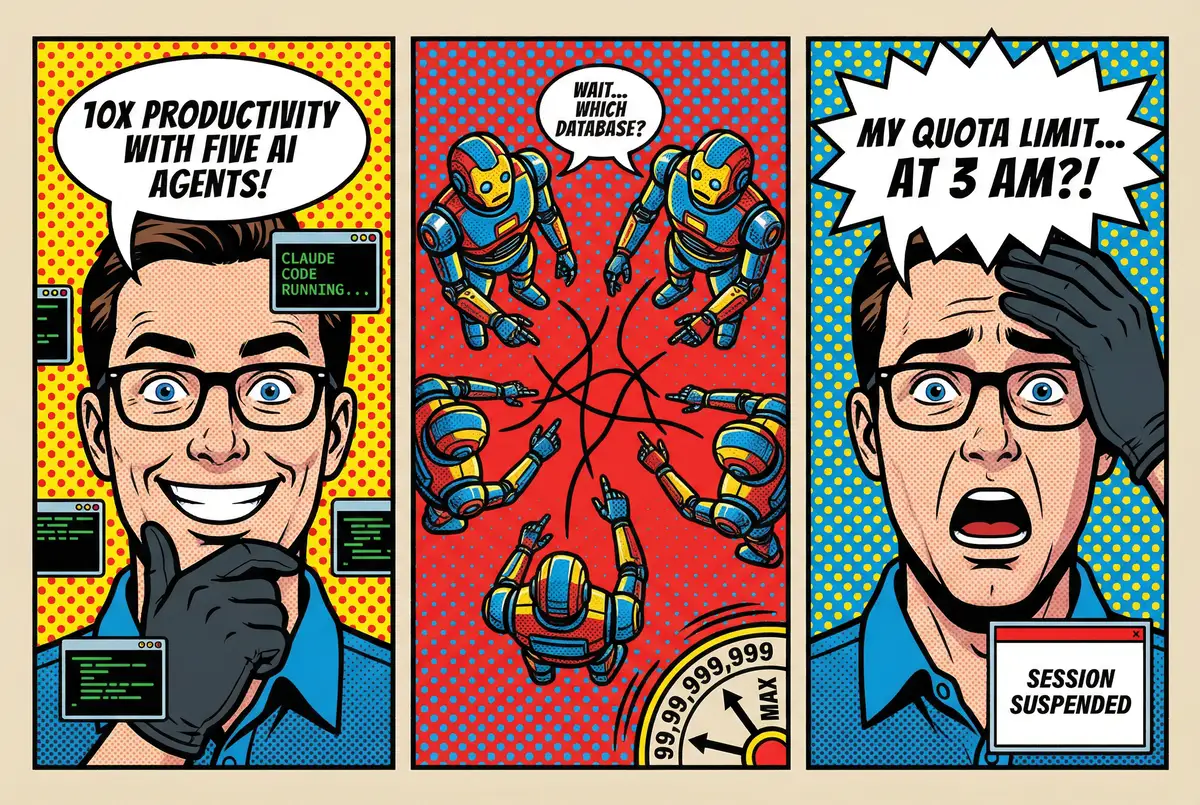

The agentic trap

Steinberger reserved his sharpest language for the agent orchestration trend. He singled out Gastown, a framework in which users run dozens of agents simultaneously with watchers, overseers, and what Steinberger described as "a mayor," and called it "Sloptown."

"Just because you can build everything doesn't mean you should," he said.

The deeper argument is about the human-machine loop. Steinberger's own process works differently. He starts with a rough idea. Builds something. Feels the result. Refines. Builds again. Each prompt depends on what he sees and thinks about the current state. If you front-load everything into a specification and let agents grind overnight, you lose the feedback loop that produces anything worth using.

"I don't know how something good can come out without having feelings in the loop almost," he said. "Taste."

He had a similar take on plan mode, the feature Anthropic added to Claude Code that forces the model to outline an approach before writing code. "Plan mode was a hack that Anthropic had to add because the model is so trigger friendly and would just run off and build code," he said. With newer models, he skips it entirely. He says "let's discuss, give me options" and has a conversation first.

The AI coding community on X won't love hearing this. Plan mode, MCP servers, multi-agent orchestration: these are the subjects of the most-shared threads and most-watched tutorials right now. Steinberger is telling a crowd that has built its identity around tooling sophistication that the tools are the distraction.

Pull requests are prompt requests

Steinberger's former business partner, a lawyer with no engineering background, now sends him pull requests built entirely with AI assistance. Steinberger doesn't merge them directly. He extracts the intent and rebuilds the code himself.

"I see pull requests more as prompt requests," he said. "It's enough to understand the intent."

He connected this to programming languages becoming irrelevant. After 20 years in Objective-C and Swift, he moved OpenClaw to TypeScript. The switch would have been agonizing without AI. Every unfamiliar syntax lookup, every "what's a prop?" moment that makes senior engineers feel slow in a new stack. AI erased that friction entirely.

"Languages don't matter anymore," he said. "My engineering thinking mattered." Domain expertise, system architecture, knowing which dependencies to trust. Those transferred. Syntax didn't have to.

Eighty percent of your phone apps will disappear

Steinberger's broadest claim was also his most testable. Single-purpose mobile apps become redundant, he argued, when an AI agent has API access to the same services plus persistent memory about you.

Why use MyFitnessPal to track food when your agent already knows your eating habits, can accept a photo of your meal, calculate calories, store the data, and remind you to hit the gym? Why use a sleep tracker app when the agent controls your bed temperature directly? Why use a dedicated check-in app when the agent handles British Airways itself?

"This will blend away probably 80% of the apps that you have on your phone," he said.

The argument depends on two things happening. First, the services behind those apps need accessible APIs, and many deliberately restrict third-party access. Second, users need to trust an AI agent with the kind of persistent, cross-service data that would make most security researchers nervous. Steinberger is comfortable with that level of exposure. He gave his agent access to his email, calendar, cameras, door locks, bed, and a copy of his passport.

The Moltbook security incident from this week showed what happens when agent infrastructure gets access management wrong: 32,000 bots with their API keys sitting in an open database. Steinberger is betting the future looks like giving your AI everything. The 2 million visitors in a week suggest plenty of people are curious enough to follow him there.

Whether Anthropic wants to power that future on a $20 subscription is a different question, and based on what Steinberger said on Saturday, the answer appears to be no.

Frequently Asked Questions

Q: What is OpenClaw and how did it start?

A: OpenClaw is an open-source AI assistant that connects to messaging platforms like WhatsApp, Telegram, and iMessage. Creator Peter Steinberger built the first version in one hour in November 2025 by hooking up WhatsApp to Claude Code. It has since grown to 300,000 lines of code.

Q: Why is Anthropic reportedly pushing back on OpenClaw's use of Claude subscriptions?

A: Steinberger said Anthropic no longer favors OpenClaw users routing agent workloads through $20/month Claude Pro subscriptions, which were designed for individual chat use. The economics break down when autonomous agents fire hundreds of API calls through consumer plans. Steinberger now recommends API keys instead.

Q: What are MCPs and why does Steinberger reject them?

A: MCP stands for Model Context Protocol, a standard Anthropic built for connecting AI models to external tools. Despite OpenClaw supporting MCPs, Steinberger personally considers them unnecessary complexity. He codes with multiple parallel terminal sessions and simple repository checkouts instead.

Q: What is the "agentic trap" Steinberger describes?

A: Steinberger argues that developers fall into building increasingly sophisticated orchestration tools instead of actual products. He calls multi-agent frameworks with watchers, overseers, and automated loops "vanity metrics" that produce work without taste because they remove the human feedback loop from the creative process.

Q: How does Steinberger use OpenClaw in his personal life?

A: He gave the agent access to his smart home lights, speakers, security cameras, door locks, bed temperature controls, email, calendar, and passport. OpenClaw has checked him into flights via browser automation, monitored security cameras overnight, and can control his Vienna apartment's Nuki smart locks.

IMPLICATOR
