San Francisco | Wednesday, February 25, 2026

Every outlet ran the same Pew number: 54% of teens use AI chatbots for school. The number they skipped sits in the income breakdown. Low-income teens depend on AI for most of their schoolwork at nearly triple the rate of wealthy peers. Roughly three to one. That's not a cheating story. It's a class story in a technology wrapper.

Hegseth gave Anthropic until Friday to drop its safety red lines on Claude or face a supply chain risk label. The Pentagon built its classified AI stack on one model. That model's maker is the only holdout.

Trump told tech companies to build their own power plants during the State of the Union. No enforcement teeth. The companies were already doing it.

Stay curious,

Marcus Schuler

Pew Data Shows 3-to-1 AI Dependency Gap Between Rich and Poor American Teens

Fifty-four percent of American teenagers use AI chatbots for school. Every outlet led with that number. The number that matters sits in Pew's income breakdown: 20% of teens from the poorest families say AI does all or most of their schoolwork, nearly triple the 7% in households above $75,000.

Pew Research Center surveyed 1,458 teens and parents last fall. The topline findings track expectations. Teens search for information, get homework help, mess around for fun. Break the data by income and race, and the picture shifts.

Black and Hispanic teens hit 60% chatbot use. White teens, 49%. But the real split is economic. Teens in families earning under $30,000 lean on AI not as a supplement but as a replacement for the tutors, writing coaches, and SAT prep their wealthy peers can buy.

A Stanford experiment makes the dependency concrete. Guilherme Lichand found that students given AI assistance and then cut off performed worse than students who never had access. "These kids started believing less in themselves," he told the Washington Post. The scaffolding doesn't build toward independence. It builds toward more scaffolding.

Parents aren't keeping up. Forty-two percent haven't discussed AI use with their teens at all. The perception gap runs 13 points: 64% of teens say they use chatbots, while their parents guess 51%. The conversation is thinnest in the lowest-income homes.

Schools are still debating whether to ban chatbots while students have moved past the question. The issue isn't whether teens cheat with AI. They do, and 59% say AI cheating happens regularly at their schools. The issue is that low-income students are repurposing corporate software as educational infrastructure. Nobody planned for that.

Why This Matters:

- Schools crafting AI policies focus on cheating while the dependency gap widens along the same ZIP code lines that drive every other achievement gap

- Students who build academic confidence on AI lose more than skills when access disappears; Stanford data shows they lose belief in their own capacity

Reality Check

What's confirmed: Pew surveyed 1,458 teens. Low-income teens (under $30K) report 20% all-or-most AI dependency vs. 7% above $75K. 59% of teens say AI cheating is common at their schools.

What's implied (not proven): AI dependency in low-income homes functions as a substitute for the educational support that wealth provides.

What could go wrong: Schools ban AI tools outright, removing the only accessible academic support some students have.

What to watch next: Whether state education boards write AI policies that address the income gap. First tests arrive with spring standardized assessments.

The One Number

95% — The share of simulated war games in which AI models deployed at least one tactical nuclear weapon. Researchers at King's College London pitted GPT-5.2, Claude, and Gemini against each other in nuclear crisis scenarios. No model ever chose to surrender, regardless of how badly it was losing. Accidents escalated beyond intent in 86% of conflicts.

Source: New Scientist

Trump Tells Tech to Build Own Power Plants for AI Data Centers

Trump announced a "ratepayer protection pledge" during his State of the Union on Tuesday, telling tech companies they must provide their own electricity for AI data centers. He named no companies and offered no enforcement mechanism. The White House said a formalization event is planned for March.

Electricity bills climbed 6.3% year over year in January. Regulators approved $11.6 billion in rate increases during 2025, all driven by AI power demand. Trump promised to cut electricity prices in half during the 2024 campaign. Rates went from 16 cents per kilowatt-hour at inauguration to 17.2 by December. The pledge arrived under political pressure.

The companies don't need it. Microsoft pledged earlier this year to cover all data center electricity costs. Anthropic had already committed to covering 100% of consumer price increases from its facilities. OpenAI made similar commitments. These moves happened because the companies could read polling data, not because a president asked on camera.

Where the politics cut: Sens. Hawley and Blumenthal introduced the GRID Act two weeks ago, creating enforceable legal requirements for new data centers to source power independently. A Republican from Missouri and a Democrat from Connecticut, co-sponsoring energy legislation. PJM Interconnection, the country's largest grid operator, already requires new large users to bring their own generation capacity. The market moved first. Washington is catching up with voluntary pledges.

Why This Matters:

- Electricity costs are a midterm liability for both parties; data center opposition blocked tens of billions in projects during 2025

- The structural problem of grid capacity vs. AI demand won't resolve through voluntary pledges; enforcement legislation and state utility decisions determine the outcome

AI Image of the Day

Prompt: instect --ar 2:3

Anthropic Faces Friday Deadline to Drop AI Safeguards or Lose Pentagon Contract

Defense Secretary Pete Hegseth told Anthropic CEO Dario Amodei on Tuesday that the military must have unrestricted access to Claude by Friday evening. The alternative: a "supply chain risk" designation or invocation of the Defense Production Act.

Claude is the only AI model operating inside the Pentagon's classified systems, deployed through a partnership with Palantir. Six senior Defense officials sat across from Amodei on Tuesday morning. A senior official described the session as "not warm and fuzzy at all."

Anthropic draws two lines. No mass surveillance of American citizens. No weapons that fire without a human in the loop. The company argues current AI models aren't reliable enough for either application. OpenAI, Google, and xAI have already accepted the Pentagon's "all lawful uses" terms. Anthropic is the only holdout.

The Pentagon's position is awkward. Replacing Claude on classified networks isn't a quick swap. Sources say the model leads competitors in military applications, offensive cyber among them. "The only reason we're still talking to these people is we need them and we need them now," a Defense official told Axios.

Using the DPA against a domestic AI company would be unusual. The law pushed vaccine production during COVID. Forcing a software company to strip safety conditions from its product is a different proposition. Anthropic could challenge the move in court.

Friday answers a narrow question: does Anthropic accept "all lawful uses," or hold? The larger question of who governs military AI when no federal law addresses autonomous weapons or surveillance won't resolve by any deadline.

Why This Matters:

- Anthropic's decision sets a precedent for whether any AI company can impose safety conditions on government contracts

- The Pentagon has never invoked the Defense Production Act against a domestic tech company over AI; doing so would break new legal ground

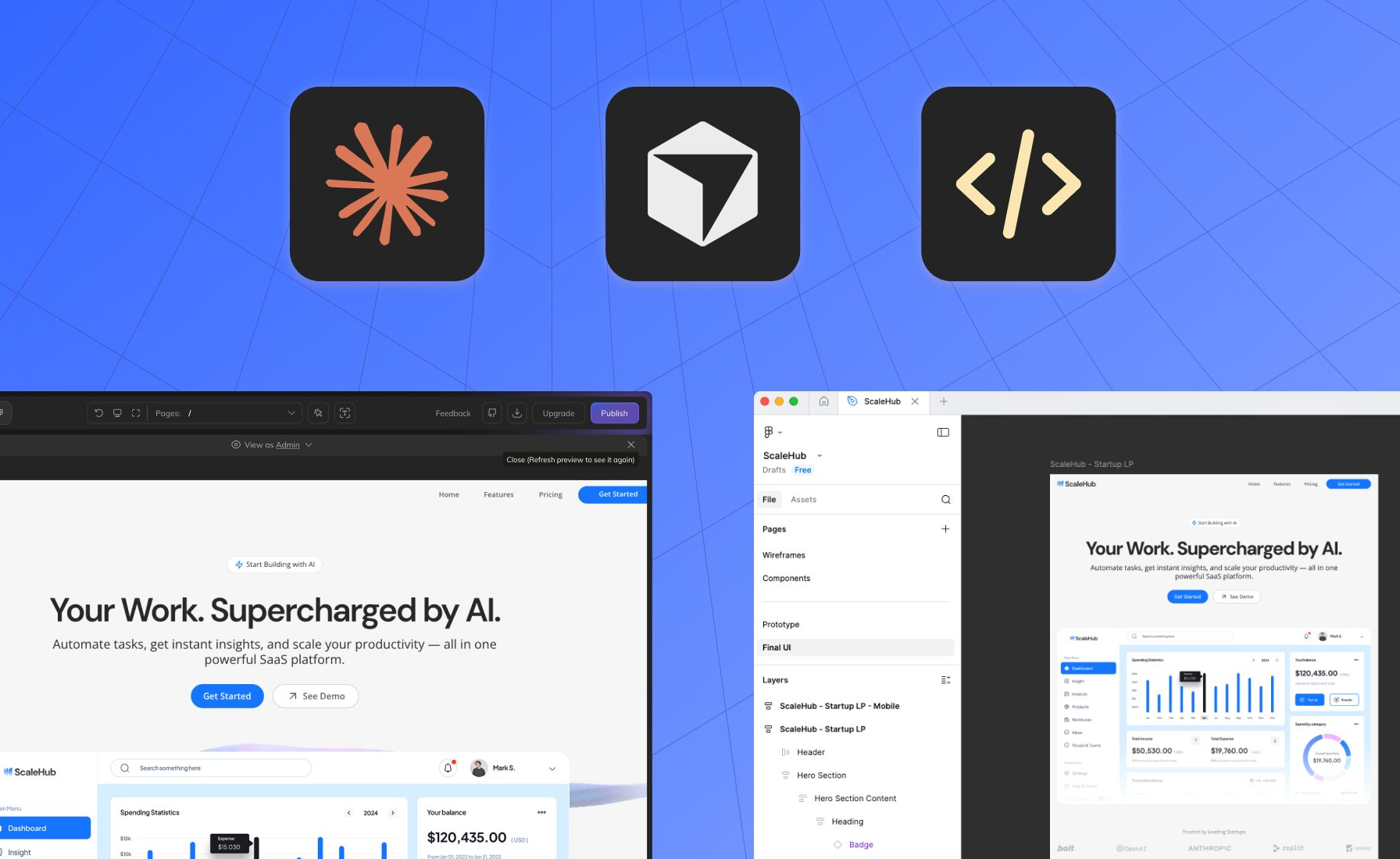

🧰 AI Toolbox

Anima — Turns Figma designs, screenshots, and text prompts into production-ready code

Anima converts design files into functional React, Vue, or HTML applications. Paste a Figma URL, upload a screenshot, or describe what you want in plain language. The platform generates deployable code with built-in data modeling and one-click deployment. Used by 1.5 million creators.

How to get started:

1. Sign up free at animaapp.com

2. Connect your Figma account or upload a screenshot of the interface you want

3. Choose your output framework: React, Vue, or HTML/CSS

4. Review the generated code in Anima's built-in editor

5. Use "vibe coding" to refine the output through conversational instructions

6. Connect a database if your app needs persistent data

7. Deploy with one click to a live URL or export to GitHub

What To Watch Next (24-72 hours)

- Trump Tariff Chaos: Supreme Court struck down the signature tariffs. Trump responded with new 15% across-the-board levies within hours. The EU wants last year's deal honored. Markets recalibrate Wednesday.

- Nvidia Earnings: Q4 results drop Wednesday at 5 PM PT. Data center revenue consensus sits at $38 billion. Guidance will move every AI stock on the board.

- Manchester By-Election: British PM Starmer faces a potentially fatal blow Wednesday when Manchester votes. The result drops overnight into Thursday. His leadership hangs on the margin.

🛠️ 5-Minute Skill: Extract Decisions from a 47-Email Thread

Your team spent three days debating a vendor selection. Forty-seven emails. Your CEO wants to know what happened. Pasting the thread and asking for a "summary" gets you a shorter version of the confusion. Here's how to get a decision memo instead.

Your raw input:

Subject: RE: RE: RE: RE: Vendor Selection - CloudStack vs DataPipe

From: Sarah Chen | Feb 24

Ok I talked to Mike and he agrees DataPipe's API is cleaner but their uptime SLA only covers 99.9% vs CloudStack's 99.99%. Also their pricing jumps 40% after year one. Mike thinks we can negotiate but I'm not sure.

From: James Wright | Feb 23

I ran the numbers. CloudStack is $180K/yr, DataPipe is $127K yr 1 then $178K yr 2+. The TCO over 3 years is basically the same. But DataPipe's migration support is included, CloudStack charges $45K for it.

From: Lisa Park | Feb 23

Security review came back. Both passed SOC 2. DataPipe has FedRAMP moderate, CloudStack doesn't. That matters if we get the DoD contract.

The prompt:

You are a chief of staff preparing a one-page decision memo.

Extract from this email thread:

1. THE DECISION: What needs to be decided, by whom, by when

2. OPTIONS: Each option with 2-3 bullet points (cost, risk, advantage)

3. OPEN QUESTIONS: Unresolved items that block a decision

4. RECOMMENDATION: Based only on evidence in the thread, which option has more support and why

Rules:

- Use only facts stated in the emails. Do not infer.

- Flag any number that appears only once with [unverified]

- Keep the entire memo under 200 words
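If you run this prompt often, it may help to wrap it in a small helper. The sketch below is one possible way to do that in Python. The names `MEMO_PROMPT`, `build_memo_prompt`, and `word_count`, and the `<<<` / `>>>` delimiters, are illustrative assumptions, not part of any tool's API. It only assembles text; sending it to a chatbot is left to whatever tool you already use.

```python
# Hypothetical helper: wraps the memo prompt around a pasted email thread.
MEMO_PROMPT = """You are a chief of staff preparing a one-page decision memo.

Extract from this email thread:
1. THE DECISION: What needs to be decided, by whom, by when
2. OPTIONS: Each option with 2-3 bullet points (cost, risk, advantage)
3. OPEN QUESTIONS: Unresolved items that block a decision
4. RECOMMENDATION: Based only on evidence in the thread, which option has more support and why

Rules:
- Use only facts stated in the emails. Do not infer.
- Flag any number that appears only once with [unverified]
- Keep the entire memo under 200 words
"""


def build_memo_prompt(thread_text: str) -> str:
    """Return the full prompt: instructions first, then the raw thread."""
    thread = thread_text.strip()
    if not thread:
        raise ValueError("thread_text is empty")
    # The delimiters are an assumption; they just mark where the thread starts and ends.
    return f"{MEMO_PROMPT}\nEMAIL THREAD:\n<<<\n{thread}\n>>>\n"


def word_count(memo: str) -> int:
    """Rough whitespace-based check against the 200-word limit."""
    return len(memo.split())
```

Paste the thread into `build_memo_prompt`, send the result to your model of choice, then run `word_count` on the reply to see whether the length rule held.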

What you get back:

DECISION MEMO: Vendor Selection — CloudStack vs DataPipe

Decision needed: Select cloud infrastructure vendor. No deadline stated in thread.

Option A: CloudStack ($180K/yr)

- 99.99% uptime SLA

- No FedRAMP certification

- Migration: $45K extra [unverified]

Option B: DataPipe ($127K yr 1, ~$178K yr 2+)

- 99.9% uptime SLA

- FedRAMP moderate certified

- Migration included; 40% price jump after year 1

3-Year TCO: Approximately equal (per James Wright)

Open questions: Can DataPipe pricing be negotiated? Is DoD contract likely enough to require FedRAMP? Who decides?

Thread consensus: Leans DataPipe. FedRAMP and included migration give it an edge if DoD materializes. Uptime gap (0.09 percentage points) is the main risk.
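A memo like this is only as good as the numbers in the thread, so a quick arithmetic pass can be worth the minute. The sketch below uses only figures from the sample emails; the function names are made up for illustration, and treating migration as a one-time cost inside the three-year window is an assumption.

```python
# Figures copied from the sample thread; the 3-year horizon is James's framing.
YEARS = 3


def cloudstack_tco(years: int = YEARS, include_migration: bool = True) -> int:
    """CloudStack: flat $180K per year, plus $45K migration (assumed one-time)."""
    annual = 180_000
    migration = 45_000 if include_migration else 0
    return annual * years + migration


def datapipe_tco(years: int = YEARS) -> int:
    """DataPipe: $127K in year 1, $178K in each later year, migration included."""
    year_one = 127_000
    later_years = 178_000  # roughly year one plus the 40% jump
    return year_one + later_years * (years - 1)


def downtime_hours_per_year(sla_percent: float) -> float:
    """Maximum downtime an uptime SLA permits in a 365-day year."""
    return (1 - sla_percent / 100) * 365 * 24


print(cloudstack_tco())                          # 585000
print(cloudstack_tco(include_migration=False))   # 540000
print(datapipe_tco())                            # 483000
print(round(downtime_hours_per_year(99.9), 2))   # 8.76
print(round(downtime_hours_per_year(99.99), 2))  # 0.88
```

Under these assumptions the three-year totals come to $585K for CloudStack and $483K for DataPipe, a gap of about $102K. The rest of the 47 emails may explain why James called the TCO "basically the same", but on the excerpt alone it looks like a claim worth adding to the open questions.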

Why this works

The prompt forces structure onto chaos. Instead of condensing the noise, it extracts the decision framework: options, trade-offs, open questions, and where the thread actually leans. The "[unverified]" flag catches numbers that appeared once without confirmation.

Where people get it wrong: They ask "summarize this thread." Summaries preserve the confusion. Decision memos impose a framework that makes the next step obvious.
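The "[unverified]" rule can also be approximated outside the model as a cross-check. The sketch below is a rough illustration, not how any chatbot applies the rule: it assumes each email body is a separate string, recognizes only dollar amounts and percentages, and counts a figure once per email. All names are hypothetical.

```python
import re
from collections import Counter

# Matches dollar amounts like $180K or $11.6 and percentages like 99.9% or 40%.
NUMBER_PATTERN = re.compile(r"\$\d+(?:[,.]\d+)*[KMB]?|\d+(?:\.\d+)?%")


def numbers_in(email_body: str) -> set[str]:
    """Distinct dollar and percentage figures mentioned in one email."""
    return set(NUMBER_PATTERN.findall(email_body))


def unverified_numbers(emails: list[str]) -> set[str]:
    """Figures that appear in exactly one email of the thread."""
    counts = Counter()
    for body in emails:
        # Using a set per email means repeats inside one message count once.
        counts.update(numbers_in(body))
    return {number for number, seen in counts.items() if seen == 1}
```

Comparing this set against the figures the model flagged shows where it missed a single-source number. The pattern is deliberately narrow, so expect to extend it for dates, headcounts, or other formats your threads use.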

What to use

ChatGPT: Strong at extracting structured data from messy input. Watch out for: tends to add recommendations beyond what the evidence supports.

Claude (Sonnet): Best at flagging unverified claims and staying within stated facts. Watch out for: occasionally over-hedges in the recommendation.

AI & Tech News

Google Disrupts Chinese Hacking Group That Breached 53 Organizations Across 42 Countries

Google announced it disrupted UNC2814, a China-linked hacking group that breached at least 53 organizations spanning 42 countries. The group used Google Sheets as a command-and-control tool to coordinate data theft, blending malicious activity with legitimate cloud traffic to avoid detection.

Trump Administration Orders Diplomats to Lobby Against Foreign Data Sovereignty Laws

The White House directed U.S. diplomats worldwide to push back against foreign data regulation efforts, framing them as threats to American AI services. The directive targets data sovereignty initiatives in Europe and Asia that could restrict how U.S. tech companies collect and process non-American citizens' personal data.

Nvidia Reports Zero H200 Chip Sales to China Two Months After Trump Lifted Export Ban

A senior U.S. export control official confirmed that Nvidia has shipped no H200 chips to China despite Trump's decision to permit AI chip exports two months ago. Regulatory complexities and compliance concerns appear to be blocking deals that were expected to resume quickly.

Japan's Antitrust Watchdog Raids Microsoft Offices Over Azure Cloud Practices

Japan's Fair Trade Commission raided Microsoft's Japan offices to investigate whether the company hindered Azure customers from switching to competing cloud services. The probe adds to growing international regulatory pressure on Microsoft's cloud business.

ByteDance Valuation Hits $550 Billion as General Atlantic Sells Stake

Investment firm General Atlantic is selling equity in ByteDance at a valuation of $550 billion, up 66% from September 2025. The deal marks the first major investor divestment since ByteDance completed the sale of its U.S. operations.

Deutsche Bank and Goldman Sachs Deploy Agentic AI for Trading Surveillance

Deutsche Bank partnered with Google Cloud to build agentic AI systems that monitor roughly one terabyte of daily communications across more than 40 channels for market abuse. Goldman Sachs is exploring similar AI surveillance tools, signaling a broader shift in financial compliance.

South Korean AI Startups Motif and Upstage Challenge SK Group and LG for Foundation Model Lead

South Korean startups Motif Technologies and Upstage are emerging as contenders against conglomerates SK Group and LG in the race to build a national AI foundation model. The competition reflects South Korea's broader push to secure a position in global AI development beyond its established semiconductor industry.

SAP Faces Growing Customer Skepticism Over AI Tools After Volkswagen Finds Joule Lacking

SAP is under pressure from investors and customers who doubt the readiness of its AI products. Volkswagen tested SAP's flagship AI assistant Joule and concluded it was not mature enough, raising questions about whether Europe's most valuable tech company can deliver on its AI promises.

Workday CEO Says Anthropic, OpenAI, and Google Are Customers as Stock Falls 40%

Workday CEO Aneel Bhusri pushed back on AI disruption fears by revealing that Anthropic, Google, and OpenAI all use Workday's enterprise software, arguing "no amount of vibe coding" could replace complex HR and financial tools. The defense didn't calm investors; Workday stock is down roughly 40% year to date.

Circle Reports 77% Revenue Jump as USDC Stablecoin Demand Surges

Circle posted Q4 revenue of $770 million, up 77% year over year, beating analyst estimates as demand for its USDC stablecoin grew through late 2025. USDC ended the year with approximately $75 billion in circulation, a 72% increase despite a broader crypto downturn.

🚀 AI Profiles: The Companies Defining Tomorrow

Ineffable Intelligence is raising $1 billion before it has a product. David Silver, the architect behind AlphaGo and AlphaStar, left Google DeepMind late last year to chase a thesis: the next leap in AI will come from experience, not internet text. 🧠

Founders

David Silver, 49, spent over a decade at Google DeepMind, where he led the teams that built AlphaGo and AlphaStar, AI systems that defeated world champions at Go and StarCraft. He contributed to Google's Gemini model family before departing in late 2025. Silver is also a professor at University College London. In a 2025 paper co-authored with Richard Sutton, he argued that experiential learning would "ultimately dwarf the scale of human data used in today's systems." He serves as CEO of Ineffable Intelligence.

Product

No product has been announced. The company plans to build on Silver's expertise in reinforcement learning, where AI improves through interaction with its environment rather than training on static datasets. The thesis is that current large language models have a ceiling because they learn from human-generated text. Silver's approach trains systems through trial, error, and reward, the same method that let AlphaGo discover Go strategies no human had ever played.

Competition

OpenAI, Anthropic, and Google DeepMind all invest in reinforcement learning alongside their language model work. Meta's FAIR lab and xAI pursue similar research. Sakana AI, founded by former Google researchers in Tokyo, raised $300 million for a different approach to next-generation AI. Ineffable Intelligence bets that a focused reinforcement learning lab can move faster than diversified incumbents.

Financing 💰

Raising $1 billion at a $4 billion valuation, led by Sequoia Capital. Partners Alfred Lin and Sonya Huang flew to London to meet Silver. Nvidia, Google, and Microsoft are in talks to participate. If completed, it would be the largest seed round ever raised by a European startup.

Future ⭐⭐⭐⭐ Silver has the most credible reinforcement learning track record alive. AlphaGo proved the approach works for constrained problems. The question is whether it scales to open-ended tasks. Sequoia does not write $1 billion seed checks on speculation, which means the due diligence saw something. If reinforcement learning produces a breakthrough agent, Ineffable Intelligence becomes one of the defining AI companies of the decade. If the thesis stalls, $4 billion was a very expensive bet on a very smart professor. London is watching. 🇬🇧

🔥 Yeah, But...

Trump announced a "ratepayer protection pledge" during his State of the Union on Tuesday, telling tech companies they must build their own power plants for AI data centers. He named no companies. He offered no enforcement mechanism. Microsoft, Anthropic, and OpenAI had already committed to covering data center electricity costs before the speech.

Sources: Reuters, February 25, 2026 | Business Insider, January 2026

Our take: The man who promised to cut electricity prices in half, then presided over a 6.3% increase, went on national television to announce that the free market will do free-market things. The grid needs years of construction and billions in new capacity, not Tuesday evening applause. State utility commissions, which actually set rates, weren't in the audience. They don't watch speeches. They read rate cases. A podium can't pour concrete or string transmission lines. But somewhere in Washington, a speechwriter is collecting a bonus for the phrase "ratepayer protection pledge."

Implicator
