San Francisco | Monday, February 23, 2026

Google restricted AI Ultra accounts, cutting off subscribers who accessed Gemini through OpenClaw's OAuth client. No warning, no explanation, no way to reach a human. For subscribers paying $249.99 a month, the restrictions can cascade into Gmail, Workspace, and every linked Google service. Anthropic banned the same access pattern two days earlier but at least told people why. OpenAI hired OpenClaw's founder instead.

Sam Altman coined a term at the India AI Impact Summit: "AI washing." Under 1% of 2025 job losses trace to AI. The other 99% just borrowed the excuse.

We want to see what you've built. Implicator.ai is collecting the best projects made with Claude Code, Codex, and Gemini CLI. Linus Torvalds already submitted his.

Stay curious,

Marcus Schuler

Google and Anthropic Both Restrict Third-Party AI Access in the Same Week

Google locked AI Ultra accounts without warning. The developer forum thread is the only public record of what happened.

Google restricted accounts of AI Ultra subscribers who accessed Gemini models through OpenClaw, a third-party OAuth client with over 200,000 GitHub stars. The restrictions arrived without explanation, cutting off users paying $249.99 per month from Gemini 2.5 Pro. Google's AI products share the broader account infrastructure, so a Gemini restriction can cascade into Gmail, Workspace, and cloud storage. Several forum users flagged exactly this risk.

Anthropic moved first. Two days earlier, the company updated its Consumer Terms of Service to explicitly ban OAuth tokens from Claude Free, Pro, and Max accounts in any third-party tool. Engineer Thariq Shihipar explained the economics publicly: third-party harnesses create "unusual traffic patterns" that break the usage assumptions built into flat-rate subscriptions. Token arbitrage. Pay the subscription, burn through tokens faster than the pricing model assumed.

The contrast matters. Anthropic updated legal terms, had an engineer post reasoning publicly, and gave tool makers days to adjust. OpenCode removed Claude support by Thursday. Google started restricting accounts with no policy update, no public statement, and no explanation for customers paying nearly $3,000 a year.

OpenAI took the opposite approach. The company hired OpenClaw creator Peter Steinberger on February 15 and endorsed using Codex subscriptions with third-party harnesses. Competitive positioning dressed as developer friendliness.

The security picture adds urgency. Censys identified 21,639 exposed OpenClaw instances on the public internet. Five CVEs were patched in a single week. A Vidar infostealer variant already exfiltrated one user's full configuration, including gateway tokens and cryptographic keys.

Why This Matters:

- Two of the three largest AI providers locked down third-party access in the same week, signaling that subscription pricing was never built for automated agent workloads

- Developers choosing AI platforms now weigh a new risk: the chance a provider will retroactively restrict how they access what they already paid for

Reality Check

What's confirmed: Google restricted AI Ultra accounts that used OpenClaw OAuth. Anthropic explicitly banned third-party token usage in updated terms. OpenAI hired OpenClaw's founder and endorsed third-party access.

What's implied (not proven): Subscription pricing was always subsidized, and both providers are enforcing implicit limits the math requires.

What could go wrong: Developer migration to local open-source models erodes subscription revenue that funds frontier model research.

What to watch next: Whether Google issues a public policy statement or keeps enforcement silent while the forum thread grows.

The One Number

1.3 billion — Videos labeled as AI-generated on TikTok as of February 2026. A Kapwing study found that a third of the first 500 YouTube Shorts shown to new accounts are AI slop. The platforms built their businesses on user-generated content. Now the machines are generating the content, the users are drowning in it, and the platforms are scrambling to filter what they incentivized.

Source: CNET

Altman Coins 'AI Washing' at India Summit While Entry-Level Jobs Drop 13%

Sam Altman accuses companies of using AI as cover for planned layoffs. The macro data backs him up. The entry-level data does not.

OpenAI's CEO told a live audience at the India AI Impact Summit that companies blame AI for cuts they would have made regardless. "There's some AI washing where people are blaming AI for layoffs that they would otherwise do," Altman told CNBC-TV18. The accusation landed during a brutal stretch for workers: US employers cut 108,000 jobs in January 2026 alone, a record-high monthly surge. Challenger, Gray & Christmas tracked roughly 55,000 layoffs attributed to AI across all of 2025. That is less than 1% of total US job losses. A National Bureau of Economic Research paper found 90% of executives said AI had zero workforce impact over three years.

The reassurance has limits. Stanford researchers found a 13% employment decline among early-career software engineers and customer service workers since 2022. Anthropic CEO Dario Amodei predicted half of all entry-level white-collar jobs could disappear within five years. Altman himself conceded the trajectory: "I would expect that the real impact of AI doing jobs in the next few years will begin to be palpable."

In one version, AI is wrongly blamed for layoffs. In the other, real displacement is coming. Altman asks the room to hold both premises at once. The 108,000 workers who cleared their desks in January are not interested in the distinction.

Why This Matters:

- Companies from Amazon to Citigroup use AI as rhetorical cover for routine cost-cutting, creating a "boy who cried wolf" problem that hides where displacement actually occurs

- The real impact concentrates at the entry level, exactly where workers have the least power and the fewest alternatives

AI Image of the Day

Prompt: A miniature bedroom with a bed, night lamp, and a sleeping person inside the "home" key on an old beige computer keyboard. The word "home" is written in bold letters above it. There's also a desk next to that, and there should be no other keys visible except for the ones featuring the letters P, G, or H. This scene captures the simplicity of home life within the minimalistic space created by a single white space bar.

16 AI Agents Built a C Compiler. Implicator.ai Wants to See What You Built.

The best AI-built software ships to production. We are collecting it in one place, and submissions are open.

Sixteen Claude Opus 4 agents built a complete C compiler in Rust without human intervention. It supports x86-64, ARM, and RISC-V architectures and compiles the Linux 6.9 kernel, PostgreSQL, SQLite, Redis, and FFmpeg. Christopher Chedeau used Claude Code to port Pokemon Showdown's battle simulator from TypeScript to Rust, achieving 99.997% behavioral parity across 2.4 million test seeds over 5,000 commits. Linus Torvalds shipped AudioNoise, mixing hand-coded C with AI-generated Python visualizers for digital audio processing. It has 4,200 GitHub stars. Reuven Cohen's Claude-Flow, a multi-agent orchestration platform for Claude Code instances, sits at 14,000 stars with 1,600 forks.

These are not demos. These are working tools that real developers use.

Implicator.ai is building a curated showcase of projects made with Claude Code, OpenAI Codex, and Google Gemini CLI. The criteria: original solutions to real problems, public GitHub repos, fully functional implementations, and meaningful AI integration beyond autocomplete. Submit through the form on the showcase page or email marcus@implicator.ai. Review takes two weeks. If you have built something worth showing, the inbox is open.

Why This Matters:

- AI coding tools now produce production-grade software autonomously, from compilers that build Linux kernels to game ports with 99.997% behavioral parity

- The showcase invites developers to submit their own projects, building a public record that separates working code from marketing demos

🧰 AI Toolbox

How to Generate Production-Ready Vector Graphics with Recraft

Recraft is the first AI design tool that generates native vector graphics (SVG), not pixels. Describe an illustration, icon, or graphic and get back a scalable file you can edit in Illustrator or Figma. The V4 model, released February 2026, handles text rendering, brand-consistent styles, and both raster and vector output. Free plan available.

Tutorial:

- Go to recraft.ai and sign up for a free account

- Choose your output format: Vector Art for complex illustrations, Icon for simplified flat graphics, or Image for raster output

- Type a prompt describing what you need ("A minimalist line icon set for a fintech app: wallet, chart, shield, globe")

- Select a style preset to lock in visual consistency across multiple generations

- Use the "Brand Style" feature to upload your brand colors and typography, so every output matches your guidelines

- Iterate by adjusting the prompt or using inpainting to modify specific regions of the generated graphic

- Export as SVG for scalable use in presentations, websites, or print, or download as PNG for quick sharing

URL: https://recraft.ai

What To Watch Next (24-72 hours)

- Nvidia Earnings: Q4 results expected Wednesday after market close. Data center revenue is the only number that matters. If AI infrastructure spending is slowing, Nvidia's guidance will show it first. Expect every AI stock to move on the call.

- OpenAI Mega-Round Aftermath: Bloomberg reported last week that OpenAI's $100 billion-plus round was nearing close at an $850 billion valuation. If confirmed this week, it resets the entire private market. Watch for SoftBank's final commitment size.

- MWC Barcelona: Mobile World Congress runs through Thursday. AI-powered devices and on-device models dominate the agenda. Samsung, Qualcomm, and Huawei keynotes will signal how fast AI moves from cloud to pocket.

- Pentagon vs. Anthropic: Defense Secretary Hegseth summoned Anthropic CEO Dario Amodei to the Pentagon for Tuesday morning talks over Claude's role in military operations. Sources describe the meeting as likely tense, signaling growing friction over how Anthropic's technology is deployed or restricted in defense contexts.

🛠️ 5-Minute Skill: Turn a Legal Contract Into Plain-English Terms

Your landlord just sent a 12-page lease renewal. Or your SaaS vendor updated their terms of service. Or your company's outside counsel delivered a partnership agreement full of indemnification clauses and force majeure language. You need to understand what you are actually agreeing to before you sign.

Your raw input:

[Paste the full contract or the sections you care about. Include headers, clause numbers, and any definitions section. The more complete the paste, the better the output. Even a photo of a printed contract works if you paste the OCR text.]

The prompt:

You are a corporate paralegal explaining a contract to a non-lawyer executive who needs to understand the key terms before signing.

From this contract, produce:

1. **What you're agreeing to** (3-5 sentences): The plain-English summary a smart person needs before they sign.

2. **Money terms** (table): Obligation | Amount | When Due | What Triggers It. Include every financial commitment.

3. **What they can do to you** (bulleted): Every clause that gives the other party power over you: termination rights, penalties, IP claims, audit rights, non-competes.

4. **What you can do to them** (bulleted): Your termination rights, liability caps, cure periods.

5. **Hidden landmines**: Clauses that look standard but contain unusual terms. Quote the exact language and explain why it matters.

6. **Missing protections**: Standard clauses that are NOT in this contract but should be. What did they leave out?

Contract:

[paste here]

Rules:

- If a clause is ambiguous, say so. Do not interpret in favor of either party.

- Quote exact contract language for any clause you flag as unusual.

- Use "you" for the person signing and "they" for the other party.

- Assume the reader has zero legal training.
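If you expect to run this on more than one contract, it can help to keep the prompt as a reusable template instead of retyping it. Below is a minimal Python sketch that uses only built-in string handling. The names `PROMPT_TEMPLATE` and `build_contract_prompt` are hypothetical, and the call to whichever model you use is deliberately left out.

```python
# Hypothetical template holding the prompt above; {contract} marks where
# the pasted contract text goes.
PROMPT_TEMPLATE = """You are a corporate paralegal explaining a contract to a
non-lawyer executive who needs to understand the key terms before signing.

From this contract, produce:
1. **What you're agreeing to** (3-5 sentences): The plain-English summary a
   smart person needs before they sign.
2. **Money terms** (table): Obligation | Amount | When Due | What Triggers It.
   Include every financial commitment.
3. **What they can do to you** (bulleted): Every clause that gives the other
   party power over you: termination rights, penalties, IP claims, audit
   rights, non-competes.
4. **What you can do to them** (bulleted): Your termination rights, liability
   caps, cure periods.
5. **Hidden landmines**: Clauses that look standard but contain unusual terms.
   Quote the exact language and explain why it matters.
6. **Missing protections**: Standard clauses that are NOT in this contract but
   should be. What did they leave out?

Contract:
{contract}

Rules:
- If a clause is ambiguous, say so. Do not interpret in favor of either party.
- Quote exact contract language for any clause you flag as unusual.
- Use "you" for the person signing and "they" for the other party.
- Assume the reader has zero legal training.
"""


def build_contract_prompt(contract_text: str) -> str:
    """Return the full prompt with the contract text dropped into place."""
    contract_text = contract_text.strip()
    if not contract_text:
        raise ValueError("contract_text is empty; paste the contract first")
    # str.replace (not str.format) so braces inside the contract are left alone.
    return PROMPT_TEMPLATE.replace("{contract}", contract_text)


if __name__ == "__main__":
    sample = "Section 7.4. Fees increase by 8% on each anniversary of the Effective Date."
    print(build_contract_prompt(sample))
```

Paste the printed result into Claude, ChatGPT, or Gemini as a single message. Keeping the rules after the contract, as in the prompt above, is a choice of this sketch and not a requirement of any model.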

What you get back:

What you're agreeing to: You are licensing their software for 36 months at $4,200/month with automatic renewal unless you give 90 days' written notice. You are granting them a perpetual, irrevocable license to use any data processed through their platform for "product improvement." You cannot use a competing product during the contract or for 12 months after termination.

Money terms:

What they can do to you:

- Terminate for "material breach" with only 15 days to cure (industry standard is 30)

- Audit your usage with 5 business days' notice

- Use your company name and logo in marketing materials unless you opt out in writing within 30 days

- Retain and use your data perpetually, even after termination (Section 9.3)

What you can do to them:

- Terminate for material breach, but only after giving them a 30-day cure period (note: you get 15 days to cure, they get 30)

- Cap your total liability at 12 months of fees paid

- Terminate at renewal with 90 days' written notice

Hidden landmines:

- Section 9.3: "Customer grants Provider a perpetual, irrevocable, worldwide license to use, modify, and create derivative works from Customer Data for purposes of product improvement and service optimization." This is unusually broad. Most SaaS contracts limit data use to providing the service. This one lets them use your data forever for any product purpose.

- Section 14.2: Non-compete clause restricts you from using "substantially similar" products for 12 months post-termination. "Substantially similar" is undefined. This could be interpreted to cover any product in the same category.

- Section 7.4: The 8% annual price escalator has no cap and no negotiation requirement. Over 3 years, your $4,200/month could become about $5,291/month without discussion.

Missing protections:

- No SLA (service level agreement) with uptime guarantees or credits for downtime

- No data deletion clause after termination — they keep your data under Section 9.3

- No cap on their ability to change terms mid-contract

Why this works

The prompt forces the model to think adversarially on your behalf. Most people read contracts looking for what they are getting. This prompt looks for what they are giving away. The "hidden landmines" section catches the clauses that sound standard but contain unusual terms. And "missing protections" is the section most people would never think to check, because you cannot spot what is not there without knowing what should be.

Where people get it wrong: Asking AI to "summarize this contract." You get a neutral summary that treats both parties equally. This prompt treats the contract as a negotiation document and reads it from your side of the table.
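The dollar figures a model reports are worth a quick sanity check before you quote them in a negotiation. Here is a minimal Python sketch that recomputes the escalator from the sample output, using the $4,200 base fee and the 8% rate stated there. The function names are hypothetical, and the timing of each increase (once per contract anniversary) is an assumption, since the sample clause does not say when increases take effect.

```python
def escalated_fee(base: float, annual_rate: float, increases: int) -> float:
    """Monthly fee after `increases` compounded annual escalations."""
    return base * (1 + annual_rate) ** increases


def total_over_term(base: float, annual_rate: float, years: int) -> float:
    """Total paid over `years`, ASSUMING the fee steps up on each anniversary."""
    return sum(12 * escalated_fee(base, annual_rate, y) for y in range(years))


BASE_FEE = 4200.00  # monthly fee from the sample output
ESCALATOR = 0.08    # 8% annual escalator from Section 7.4 of the sample

for n in range(4):
    fee = escalated_fee(BASE_FEE, ESCALATOR, n)
    print(f"after {n} increase(s): ${fee:,.2f}/month")

print(f"36-month total: ${total_over_term(BASE_FEE, ESCALATOR, 3):,.2f}")
```

Three compounded increases give $5,290.79 a month, in line with the figure in the sample output. Under the anniversary assumption, though, only two increases land inside a 36-month term, so the third-year fee would be $4,898.88 and the higher number would apply from month 37, after an automatic renewal.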

What to use

Claude (Claude Opus 4.6): Best for long contracts. Handles the full document without truncation and excels at the "hidden landmines" section because it compares clauses against standard practice. Watch out for: Adds legal disclaimers ("this is not legal advice") that clutter the output.

ChatGPT (GPT-5.2): Clean table formatting for the money terms. Good at the adversarial framing. Watch out for: Can miss subtle asymmetries between what each party gets.

Gemini (Gemini 2.5 Pro): Strong at pulling specific clause numbers and quoting exact language. Watch out for: Narrative sections can read like a legal brief rather than plain English.

Bottom line: Use Claude for contracts over 10 pages. Use ChatGPT for shorter agreements where clean formatting matters. Either way, the "missing protections" section is the part you should read first, because it tells you what the other side did not want to include.

AI & Tech News

Pentagon Summons Anthropic CEO Amodei to Tense Meeting Over Claude Usage

Defense Secretary Pete Hegseth called Anthropic CEO Dario Amodei to the Pentagon for Tuesday morning talks over how Claude is deployed in military operations. The meeting signals growing friction between the Defense Department and one of the leading AI companies over restrictions on military use of its technology.

Goldman Sachs and JPMorgan Say AI Added 'Basically Zero' to US GDP in 2025

Economists at both banks concluded that the massive wave of AI investment contributed nothing measurable to United States economic growth last year. The findings sharply contradict industry claims that AI could drive GDP growth by as much as 92%.

OpenAI Scrambles for Computing Power as Stargate Project Stalls

The $500 billion Stargate AI data center project stalled due to clashes between OpenAI and SoftBank, forcing OpenAI to pursue alternative computing arrangements. Building its own data centers is not a near-term priority for the company, sources say.

Altman and Huang Acknowledge Growing Public Resistance to AI Adoption

OpenAI's CEO admitted AI adoption faces greater resistance than he anticipated, while Nvidia's Jensen Huang cautioned that the "doomer narrative" may be gaining the upper hand. The comments mark a notable shift in tone from two of the most prominent figures driving the AI boom.

South Korea Chip Exports Surge 134% as AI Demand Fuels Trade Growth

South Korea's semiconductor exports jumped 134% year-over-year in the first 20 days of February, with computer peripherals up 129%. Both sectors continue to ride strong global demand driven by AI infrastructure buildout.

Nvidia and MediaTek Team Up to Power Next-Generation Arm-Based Laptops

Dell and Lenovo are working with Nvidia on Arm-based laptops powered by a chip developed jointly with MediaTek, with devices expected in the first half of 2026. The push marks Nvidia's return to the consumer PC processor market.

Samsung Integrates Perplexity AI Into Galaxy S26 With Dedicated Hardware Button

Samsung's upcoming Galaxy S26 will feature Perplexity built directly into the phone, activated by a "Hey Plex" wake phrase or a physical button. The partnership embeds third-party AI search into Samsung's flagship hardware for the first time.

CISA Faces Staff Cuts and Leadership Vacuum Under Trump's Second Term

The nation's top cyber defense agency faces significant operational challenges from substantial job cuts and a prolonged vacancy at the top. The diminished capacity raises concerns about defending critical infrastructure during an escalating threat environment.

Japan's Tech-Founded Party Team Mirai Wins 11 Parliamentary Seats

Software engineers running on a technology-forward platform secured 11 of 465 seats in Japan's parliament, promising self-driving buses and high-tech jobs. The party represents a growing movement of tech professionals entering politics to shape AI policy directly.

Uber Launches Autonomous Solutions Division for Self-Driving Fleet Partners

Uber unveiled a dedicated operation providing insurance, roadside assistance, fleet financing, and mission control tools for autonomous vehicle companies. The division aims to accelerate AV commercialization by removing key operational barriers for partners.

🚀 AI Profiles: The Companies Defining Tomorrow

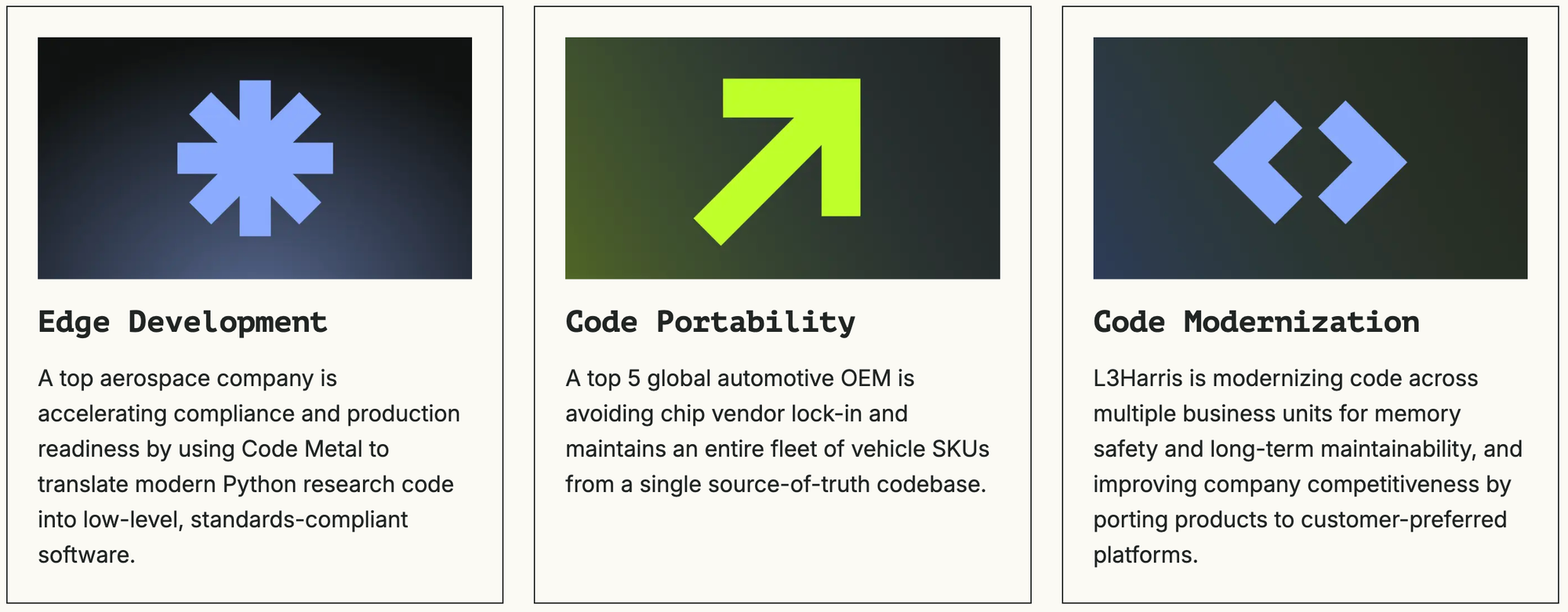

Code Metal uses AI to translate aging Pentagon software into modern programming languages, then mathematically proves the translation is correct. The Boston startup just hit unicorn status on the back of defense contracts nobody else can safely execute. 🛡️

Founders

Peter Morales (CEO) built AI reasoning systems for the F-35 at BAE Systems and MIT Lincoln Laboratory before moving to Microsoft to work on HoloLens. CTO Alex Showalter-Bucher spent a decade across the Navy, Army, and Department of Homeland Security. The team includes engineers from Intel, NASA, and OpenAI. Founded in 2023, the company recently hired Ryan Aytay, former Tableau CEO, as president and COO.

Product

Feed it code in Ada, COBOL, Fortran, or C++ and the engine outputs Rust, CUDA, or whatever the target hardware needs. The difference from the dozens of AI coding tools on the market: formal verification checks every translation step mathematically, proving the new code behaves identically to the original. Code Metal claims MC/DC (modified condition/decision coverage) compliance, the structural test-coverage criterion that avionics certification requires for flight control software. If it cannot verify a translation, it refuses the job.

Competition

GitHub Copilot and Amazon Q Developer (formerly CodeWhisperer) handle code generation but lack verified correctness. Defense primes like Raytheon and L3Harris do manual translation internally. Code Metal's moat is the verification layer. The risk: the company is secretive about its methodology, and nobody has proved formal verification scales to million-line legacy codebases.

Financing 💰

$125M Series B in February 2026 led by Salesforce Ventures at a $1.25B valuation. Previous: $36.5M Series A from Accel at $250M and $16.5M seed from J2 Ventures and Shield Capital. Claims profitability and eight-figure revenue, though no audited financials released.

Future ⭐⭐⭐⭐

The Pentagon spends $66 billion a year on IT, and 60-70% goes to keeping legacy systems alive. The programmers who wrote those systems are retiring. Code Metal sells the only tool that promises speed and mathematical correctness in the same package. The risk: pilot contracts are not production contracts, and defense procurement takes a decade to build trust. But L3Harris, RTX, and the Air Force are already customers. The pipeline is real. 🛡️

🔥 Yeah, But...

Mark Zuckerberg personally overruled 18 internal wellbeing experts who recommended removing beauty filters from Instagram, according to evidence presented in Meta's ongoing social media addiction trial. The filters, which alter facial features in real time, were flagged by Meta's own researchers as harmful to teen body image. Zuckerberg approved keeping them anyway.

Sources: Financial Times, February 19, 2026 | The Verge, February 19, 2026

Our take: Eighteen experts is a lot of people to hire, listen to, and then ignore. That is not a rounding error in judgment. That is an entire department's worth of "no" getting overruled by one person's "yes." Meta employs a wellbeing team, the way some companies employ a compliance department, so someone can testify later that they had one. Zuckerberg's defense is essentially that the filters are popular. Which is true. So was lead paint. The prosecution rested. The algorithm kept serving beauty filters to teenagers. Somewhere in Menlo Park, 18 researchers updated their resumes.

IMPLICATOR
