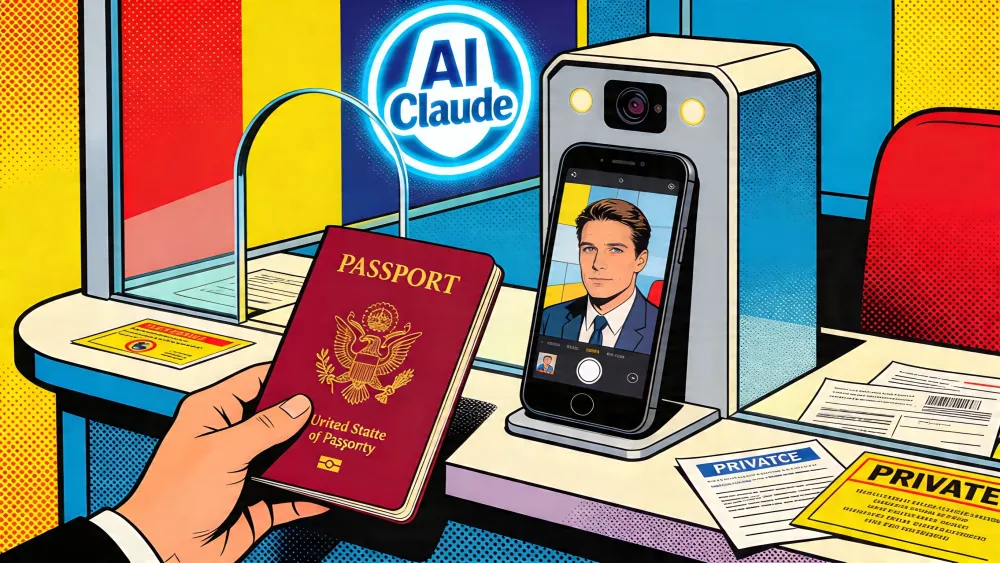

Anthropic has begun asking some Claude users to verify their identity with a government photo ID and, in some cases, a live selfie, according to a new company help page that says the checks apply to selected capabilities, platform integrity reviews, and safety or compliance measures.

The change lands less than two months after Claude drew a surge of users from OpenAI's ChatGPT during Anthropic's public fight with the Pentagon over mass surveillance and autonomous weapons. Citing Appfigures and Anthropic, TechCrunch reported that Claude reached more than 1 million sign-ups per day in early March and that its mobile app passed ChatGPT in U.S. daily downloads on March 2. Now the company that benefited from privacy anxiety is asking part of that same audience to pass through an identity checkpoint.

That is the real story. Anthropic is not abandoning its privacy pitch. It is narrowing it. The company is telling users that Claude will not train on their ID, will not store the images in Anthropic systems, and will not share them for marketing. But the new rule changes the emotional contract. Claude was sold, and recently rewarded, as the AI lab that would say no to surveillance. The passport prompt says the cost of safety may be the oldest surveillance token of all: your papers.

Key Takeaways

- Anthropic now asks some Claude users for government ID and, in some cases, a live selfie.

- The move follows Claude's privacy-driven surge after Anthropic resisted Pentagon AI-use demands.

- Persona handles the documents, but third-party custody does not erase breach or appeal risks.

- The trust test is whether verification stays rare, clear, and tied to real abuse signals.

AI-generated summary, reviewed by an editor. More on our AI guidelines.

Anthropic built the tollbooth itself

Start with what Anthropic says. Its identity verification page says verification helps the company prevent abuse, enforce usage policies, and meet legal duties. The checks are not universal. Users may see them when accessing certain capabilities, during routine platform integrity checks, or under other safety and compliance measures. Anthropic told Decrypt the rule applies to "a small number of cases" tied to potentially fraudulent or abusive activity.

The documents required are specific. Anthropic accepts a physical passport, driver's license, state or provincial ID, or national identity card from most countries. It rejects photocopies, screenshots, scans, mobile IDs, student IDs, employee badges, library cards, bank cards, and temporary paper IDs. A phone or computer camera may be needed for a live selfie.

The company chose Persona Identities as its verification partner. Anthropic says it remains the data controller. Persona collects and holds the ID and selfie, processes them on Anthropic's instructions, encrypts the data in transit and at rest, and deletes it under retention limits set by Anthropic and applicable law. Anthropic says it does not copy or store the images itself, though it can access verification records through Persona's platform when needed, including for appeals.

That distinction matters legally. It matters less emotionally. If you are the user staring at a camera with a passport in your hand, the custody diagram is not the point. The point is that the AI assistant now has a tollbooth.

The privacy win created the privacy trap

Anthropic earned this problem by winning the last fight.

In February, CEO Dario Amodei said Anthropic could not accept Pentagon contract language that, in the company's view, failed to prevent Claude's use for mass surveillance of Americans or fully autonomous weapons. The Associated Press reported that the dispute put Anthropic at risk of losing its government contract, being labeled a supply-chain risk, or facing pressure under the Defense Production Act. Pentagon officials denied any plan to use AI for illegal domestic surveillance or human-free weapons.

The public saw a simpler story. OpenAI, Google, and xAI were in the military network. Anthropic was the holdout. Users who already felt watched had a new place to go. TechCrunch reported Claude's mobile daily active users hit 11.3 million on March 2, up 183% from the start of the year, citing Similarweb. Anthropic said daily active users had more than tripled since the beginning of 2026 and paid subscribers had doubled.

Growth like that brings abuse. It brings fraud. It brings banned regions, resellers, bots, account farms, minors, scammers, scraped credentials, and users trying to route around rules. Anthropic's March 2025 misuse report described influence operations, credential scraping, recruitment fraud, and malware development detected on Claude. A company with that record does not need to invent a reason for tougher controls.

Still, the timing is brutal. Anthropic's brand just absorbed the hopes of users who wanted distance from state power. Then the company installed a mechanism that links Claude access to state-issued identity. The bridge lifted for the crowd that had just crossed it.

This is not hypocrisy in the cheap sense. It is worse for Anthropic because it is operational tension. The company wants to be the safe lab, the privacy lab, the national-security skeptic, and the abuse-resistant platform. Those jobs pull against each other. Institutional caution says verify more people. Institutional fear says do not become the next platform blamed for fraud, minors, or foreign abuse. User trust says do not ask for passports unless there is no other way.

Persona does not make the risk disappear

Anthropic's strongest argument is that it is not building an internal ID vault. Persona handles the images. Persona's policy says it acts as a processor for customers that control the relationship with users. For identity checks, Persona may collect names, contact details, demographic data, government documents, identifiers, device data, public data, general location inferred from IP address, and ID and selfie images. It may compare facial geometry from the document with facial geometry from the selfie to confirm identity.

Persona says it does not use personal data, including biometric data, for AI or model training. It says it does not sell or share that data for marketing. It says ID and selfie scan data are destroyed when identity services are complete or within three years of the user's last interaction, depending on the customer's instructions and legal process. Persona also says it may use cloud providers including AWS, Google Cloud, and MongoDB to process biometric data.

That is a serious privacy stack. It is not a guarantee.

Discord supplied the warning label in October 2025. The company said a third-party customer service provider had been compromised, exposing data from users who contacted customer support or trust and safety teams. Discord said about 70,000 users may have had government-ID photos exposed, tied to age-related appeals. Discord stressed that it was not a breach of Discord itself. That did not make the IDs less exposed.

The lesson is not that Persona is unsafe. The lesson is that outsourcing moves the blast radius. It does not erase it. Once a platform creates a reason for users to upload passports, it creates a class of data attackers want and users cannot rotate. You can replace a password. You cannot replace your face.

Age checks show where this goes next

Claude's passport prompt is not arriving alone. Anthropic's December 2025 user-wellbeing post said Claude.ai users must be 18 or older, must affirm their age at account setup, and may have accounts disabled if they self-identify as minors in conversation. Anthropic also said it was developing a classifier to detect subtler signs that a user might be underage.

MediaNama reported this week that multiple users on Reddit and X said Anthropic wrongly flagged them as minors and suspended their Claude accounts. The reported Anthropic email said the team had found signals that the account was used by a child and offered a 30-day link to verify age. Some users said they were paying adults. One said project histories broke while the appeal played out.

Treat those posts carefully. They are user reports, not audited error rates. But they point to the pressure that makes identity checks spread. A safety classifier flags a risk. A human review needs a rule. A rule needs proof. Proof becomes an ID flow. Soon the exception looks like infrastructure.

That is the tollbooth problem. It rarely stays at the first lane.

More than 400 security and privacy researchers warned in a March open letter, reported by MediaNama, that large age-assurance systems can collect biometrics, behavioral signals, and context data, produce false positives, exclude people without documents or compatible devices, and link online access to broader identity systems. Their warning was about age checks, not Claude alone. But Claude is now part of the same policy current.

You do not need to think Anthropic is acting in bad faith to see the direction. Safety systems start as narrow gates. Abuse teams widen them when attackers adapt. Regulators widen them when harms make headlines. Product teams widen them when a checked account becomes more trusted than an unchecked one.

The trust test is narrower than the policy

Anthropic can survive this if the identity prompt stays rare, transparent, and tied to clear abuse signals. It will have a harder time if users experience it as random, vague, or punitive.

The company should publish more than a help page. It should say which broad categories can trigger verification, how often it happens, how many appeals succeed, how long Persona keeps each class of data under Anthropic's instructions, and what users lose while an appeal is pending. It should publish false-positive ranges for age and fraud classifiers. It should state whether verification affects access to account history, Claude Code projects, billing, or export tools.

That information would not help attackers much if it stays at category level. It would help users decide whether Claude still deserves the trust premium Anthropic spent the Pentagon fight earning.

Right now, the company's line is technically coherent and politically fragile. It says: we refused mass surveillance, but we may ask for your passport; we do not store the image, but our vendor will hold it; we do not train on it, but it can be used for fraud prevention; we protect privacy, but our Trust and Safety team can access conversations when policy review requires it.

Each sentence can be defended. Together, they sound like the voice of a company learning that safety at scale often looks like control.

Anthropic did not lose the privacy crowd by adding a narrow ID check. Not yet. The test comes later, when the first wave of users cannot tell why the prompt appeared, when an appeal stalls, when a developer loses access to a project midstream, or when a regulator asks platforms to verify more categories of users. That is when the checkpoint stops looking temporary.

Claude won converts by standing on the far side of the surveillance fight and waving people across. Now it has built a booth at the bridge. The next question is not whether Anthropic can explain the booth. It is whether users still feel like they are crossing into safer ground.

Frequently Asked Questions

Why is Anthropic asking some Claude users for ID?

Anthropic says identity verification helps prevent abuse, enforce usage policies, and meet legal duties. The company says prompts may appear for selected capabilities, platform integrity checks, or safety and compliance reviews.

What documents does Claude identity verification accept?

Anthropic says users may need a physical government-issued photo ID such as a passport, driver's license, state or provincial ID, or national identity card. Screenshots, scans, mobile IDs, student IDs, and temporary paper IDs are not accepted.

Does Anthropic store users' passport images?

Anthropic says the ID and selfie images are collected and held by Persona, not stored in Anthropic's own systems. Anthropic says it can access verification records through Persona when needed, such as during appeals.

Why does this matter for Claude's privacy reputation?

Claude gained users after Anthropic resisted Pentagon AI-use demands tied to surveillance and autonomous weapons. Asking some users for government ID changes the trust bargain for privacy-conscious users.

What is the main risk of using Persona for verification?

Persona may be a serious verification provider, but outsourcing does not remove risk. Discord's 2025 vendor breach showed that government-ID photos can be exposed through third-party systems.

IMPLICATOR
