Anthropic's engineers tripled their code output last year. That created a problem. On Monday, Anthropic started charging $15 to $25 to fix it.

The company launched Code Review, a multi-agent system that dispatches teams of AI to scrutinize pull requests for bugs before human reviewers see the code. The pitch is depth over speed, insurance over productivity. Twenty minutes per review, up to $25 a pop, for the kind of thorough analysis that overstretched developers routinely skip.

On the same day, Anthropic filed two lawsuits against the Trump administration over its designation as a national security supply chain risk. Contracts are being cancelled. Hundreds of millions in government revenue sit in jeopardy. The timing of a premium enterprise product launch alongside a federal legal battle tells you something about where Anthropic sees its future. And it's not in Washington.

Code Review is the third major enterprise expansion in two months, following MCP Apps in January and Claude Code Security in February. Each one absorbs another piece of the software development workflow. Together they form a pattern that should make engineering leaders pay attention. Possibly get nervous.

The Breakdown

- Anthropic's Code Review dispatches AI agents per pull request at $15-25 each, targeting Teams and Enterprise customers.

- Internal stats show 84% of large PRs get findings, but the sub-1% rejection rate relies on opt-in disagreement metrics.

- Code Review completes a vertical stack: one vendor now handles code generation, review, security scanning, and CI/CD.

- Pentagon supply chain risk label and lawsuits create new vendor risk for enterprise buyers on launch day.

The toll road nobody asked to be on

Here's the economics at the center of this. Claude Code helped Anthropic's own engineers increase code output by 200% in the past year. That's the company's number. Cat Wu, Anthropic's head of product for Claude Code, told TechCrunch what followed was predictable. "A much higher demand for code review."

More code means more pull requests. More pull requests mean more reviews. More reviews mean a bottleneck. Anthropic's solution is to sell you a way through the bottleneck that its own tool created.

Anthropic widened the highway. Traffic tripled. Now it charges $20 every time you want to use an exit ramp.

Compare that to CodeRabbit. Unlimited AI code reviews for $24 a month. Anthropic wants $15 to $25 for a single PR. Run the math on a hundred-person shop where each developer opens one PR a day. At the $20 midpoint and roughly 20 working days a month, that's $40,000 a month, or $480,000 annualized. Not a rounding error. A headcount.
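The arithmetic is easy to reproduce. Here is a minimal sketch in Python, assuming the $20 midpoint of Anthropic's range and about 20 working days a month. Both are assumptions chosen to match the $40,000 figure, not numbers Anthropic has published, and the function name is purely illustrative.

```python
def monthly_review_cost(developers: int, prs_per_dev_per_day: float,
                        cost_per_review: float, working_days: int = 20) -> float:
    """Monthly spend if every pull request gets one paid review.

    working_days=20 is an assumed figure, not one from Anthropic.
    """
    return developers * prs_per_dev_per_day * working_days * cost_per_review


# The article's scenario: 100 developers, one PR each per day, $20 per review.
monthly = monthly_review_cost(developers=100, prs_per_dev_per_day=1, cost_per_review=20.0)
annual = monthly * 12
print(f"${monthly:,.0f} a month, ${annual:,.0f} a year")  # $40,000 a month, $480,000 a year

# The same shop at the low and high ends of the quoted $15 to $25 range.
for price in (15.0, 25.0):
    yearly = monthly_review_cost(100, 1, price) * 12
    print(f"at ${price:.0f} per review: ${yearly:,.0f} a year")
```

At the ends of the quoted range the same shop lands between $360,000 and $600,000 a year. The bill scales linearly with PR volume, which is the one variable Claude Code is designed to push up.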

Anthropic frames this differently. "The cost of a shipped bug dwarfs $20/review," a spokesperson told VentureBeat. "A single production incident can cost more in engineer hours than a month of Code Review." The framing is deliberate. If you think of Code Review as a productivity tool, the math is terrible. If you think of it as catastrophe prevention, the math is survivable. Anthropic needs you to think about the rollback, the hotfix, the 3 AM page. Not the monthly invoice.

The 1% number that isn't what it looks like

Anthropic's internal data makes the strongest case for Code Review. On large pull requests exceeding 1,000 changed lines, 84% of reviews surface at least one finding. The average is 7.5 issues per review. On small PRs under 50 lines, 31% get flagged, averaging half an issue. Engineers mark fewer than 1% of findings as incorrect.

That last number is doing heavy lifting in every press release. Nobody's pushing back on it hard enough.

VentureBeat pressed Anthropic on methodology. The spokesperson explained that "marked incorrect" means an engineer actively resolved a comment without fixing the underlying issue. This is opt-in disagreement. A developer has to take the affirmative step of dismissing a finding.

Think about how you actually work. Under deadline pressure, with twelve other PRs waiting, are you going to formally dismiss a finding you disagree with? Or scroll past it and move on? Marking something wrong takes effort. Ignoring it takes none. Any metric that requires humans to register objections will undercount objections. Anthropic basically conceded the point, saying it will "continue to monitor engagement data." Corporate for "we know."
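A rough way to see the size of the gap: if engineers formally dismiss only some fraction of the findings they disagree with, the observed rate is the true disagreement rate multiplied by that fraction. The sketch below inverts that relationship. The dismissal probabilities are hypothetical, picked for illustration. Anthropic has published no such figure, and the 1% input is simply the ceiling of its "fewer than 1%" claim.

```python
def implied_disagreement_rate(observed_rate: float, dismiss_probability: float) -> float:
    """Disagreement rate implied by an opt-in metric.

    observed_rate: share of findings formally marked incorrect.
    dismiss_probability: hypothetical chance that an engineer who disagrees
        takes the trouble to mark the finding, rather than scrolling past it.
    """
    if not 0.0 < dismiss_probability <= 1.0:
        raise ValueError("dismiss_probability must be in (0, 1]")
    return observed_rate / dismiss_probability


OBSERVED = 0.01  # upper bound of the reported "fewer than 1%"

# Hypothetical dismissal habits, from always marking to marking one time in ten.
for p in (1.0, 0.5, 0.2, 0.1):
    implied = implied_disagreement_rate(OBSERVED, p)
    print(f"dismiss {p:.0%} of the time -> implied disagreement {implied:.0%}")
```

If engineers bother to dismiss only one disputed finding in five, a 1% observed rate corresponds to 5% actual disagreement. That could still be a good number. It is not the one in the press release.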

Look at Anthropic's own before-and-after. Before Code Review, 16% of Anthropic's internal PRs received substantive comments from human reviewers. After deployment, 54%. That's a clear improvement in review coverage. It also means 84% of PRs were getting waved through with minimal scrutiny before Anthropic built a tool to address the gap. Put differently, Anthropic admitted its review process was buckling under the code its own AI was writing. Then it packaged the fix and started charging for it.

Give Anthropic this much: the bugs they caught are real. A one-line production change that would have broken authentication, caught before merge. A type mismatch in TrueNAS's ZFS encryption code that was silently wiping the key cache on every sync. These are real bugs, the kind that wreck production environments and generate incident reports nobody wants to write. But anecdotes chosen by the vendor selling the product aren't the same thing as independent benchmarks. Anthropic has not published comparative evaluations against CodeRabbit, GitHub Copilot's built-in review, or expert human reviewers.

Vertical integration disguised as a product suite

Code Review doesn't exist in isolation. Place it next to the other pieces Anthropic has shipped since January and a different picture forms.

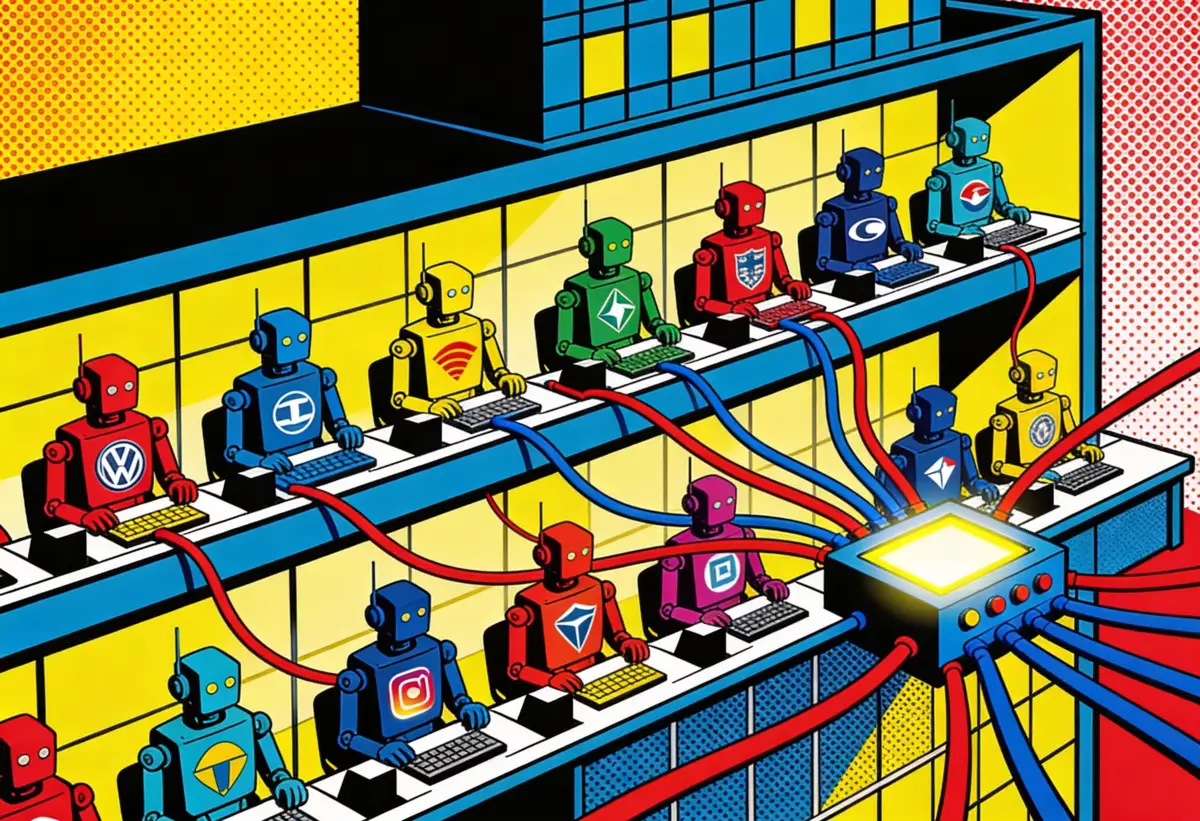

Claude Code writes the code. Code Review checks it for logic errors, and Claude Code Security, launched three weeks ago, scans the full codebase for vulnerabilities. The GitHub Action handles the lighter CI/CD work. If you're an enterprise customer already paying for Claude Code, every new product pulls you further into Anthropic's workflow.

In January, Anthropic launched MCP Apps, embedding nine business tools directly inside Claude. The move absorbed Slack, Google Drive, and Jira into a single interface. Now the developer tooling side mirrors the same strategy. Write, review, secure, ship. All Anthropic.

The closed loop is elegant and, for competitors, suffocating. Cursor, the AI code editor valued at $30 billion, built its business on the space between writing code and shipping it. But if Anthropic owns code generation, review, and security scanning natively, the standalone code review market shrinks to whatever falls outside the Anthropic workflow. GitHub Copilot includes basic review capabilities in its subscription. Amazon Q Developer, formerly CodeWhisperer, does too. Neither charges $20 per PR. Neither runs a fleet of specialized agents, either. The premium tier of code review may end up being a market of one, because nobody else has the same incentive to build it. Anthropic created the volume problem. Of course it built the volume solution.

As VentureBeat noted, Anthropic "isn't just building models, it's building opinionated developer workflows around them." Opinionated is the right word. Each product assumes you'll use the others. Code Review can feed its findings back to Claude Code for automated fixes. Security findings live in the same dashboard. The friction of staying inside the ecosystem drops with every integration. The friction of leaving climbs.

If you're an engineering leader evaluating these tools, ask yourself where vendor convenience tips into vendor dependency. Anthropic now touches code generation, code review, security scanning, and CI/CD automation. That's four of the five steps between an idea and a deployed feature. The fifth, human approval, is the only one the company says it won't automate.

For now.

The Pentagon shadow over your vendor stack

The Code Review launch happened on the same day Anthropic sued the Department of Defense. Not a coincidence. A hedge.

The Pentagon wanted unrestricted access to Claude for "all lawful purposes." Anthropic drew two lines: no fully autonomous weapons, no mass domestic surveillance. When negotiations collapsed on February 27, Defense Secretary Pete Hegseth designated Anthropic a supply chain risk. A label typically reserved for foreign adversaries like Huawei.

Practical fallout extends past government contracts. In procurement offices from Rosslyn to Crystal City, vendor risk assessments are being updated. Defense contractors must now certify they don't use Claude in Pentagon-related work. For companies that serve both commercial and defense clients, the compliance overhead alone could push them toward alternatives.

But Anthropic looks emboldened rather than cornered. Microsoft, Google, and Amazon all confirmed Claude remains available for non-defense workloads the same day. Microsoft went further, announcing Claude integration into Microsoft 365 Copilot. Three of the world's largest technology companies publicly backing Anthropic while it sued the federal government tells you something about the commercial gravity Claude has built.

Enterprise procurement teams aren't cloud providers, though. They're risk-averse by training and by mandate. A vendor labeled a national security threat by the Pentagon, however dubiously the label was applied, will trigger review committees and risk assessments at exactly the organizations Anthropic wants buying $20 code reviews. Defense contractors face the sharpest bind: they must certify their supply chains are clean, and a designation this broad makes Claude radioactive for anything touching government work. Claude Code's $2.5 billion run rate and quadrupled enterprise subscriptions suggest momentum is winning that argument for now. Whether it keeps winning depends on how long the lawsuit drags and whether the label sticks.

The exit ramp that keeps getting further away

Anthropic's strategy is working. Revenue numbers don't lie. The company has built a developer tooling business growing faster than almost anything in enterprise software, and Code Review adds a recurring, usage-based revenue stream on top of it.

But the strategy also carries a specific risk, the kind that doesn't show up in quarterly earnings. When one vendor writes your code, reviews your code, and scans it for vulnerabilities, you have a single point of interpretive authority over your entire codebase. Every finding gets filtered through one company's models, one company's definition of "bug," one company's tolerance for false positives.

That might be fine today. Anthropic's models are strong, and the bug catches are genuinely impressive. But vendor concentration in something as critical as code quality isn't a technical decision. It's a governance decision. And most engineering organizations aren't treating it as one.

The highway Anthropic built is fast, well-lit, and getting wider every month. The guardrails work. The toll keeps going up. And the exits are harder to find than they were a year ago.

Frequently Asked Questions

How does Code Review pricing compare to alternatives?

CodeRabbit charges $24 per month for unlimited reviews. Code Review costs $15-25 per single PR based on token usage. At roughly $20 per review and 20 working days a month, a 100-developer team at one PR per day could spend $480,000 annually.

What does Code Review actually check for?

Multiple AI agents analyze code in parallel for logic errors, security vulnerabilities, edge cases, and regressions. It skips style and formatting issues to reduce false positives. The average review takes about 20 minutes.

How reliable is the sub-1% incorrect finding rate?

The metric counts engineers who actively dismiss a finding. Developers under deadline pressure may ignore irrelevant findings rather than formally marking them wrong, meaning false positives likely go undercounted.

What does the Pentagon supply chain risk label mean for enterprise users?

Defense contractors must certify they don't use Claude in Pentagon work. Microsoft, Google, and Amazon confirmed Claude remains available for non-defense workloads, but procurement teams may trigger vendor risk reviews.

Can Code Review work independently of other Anthropic tools?

It integrates with GitHub and runs automatically on PRs. But findings feed back into Claude Code for fixes, and Claude Code Security handles deeper scans. The free Claude Code GitHub Action offers a lighter standalone alternative.

IMPLICATOR
