San Francisco | March 19, 2026

A homelab builder spent a year assembling a dual RTX 3090 server. A $599 Mac Mini M4 beat it by 27% on the same model, at about one-seventeenth the power draw. The hardware community is still arguing about the wrong category.

Apple blocked Replit and Vibecode from pushing iOS updates, citing rules against in-app code execution. The same Apple that shipped AI coding agents inside Xcode last month.

And Anthropic surveyed 80,508 Claude users about what they want from AI. The top answer was productivity. The real answer, buried in follow-ups: more time with their families.

Stay curious,

Marcus Schuler

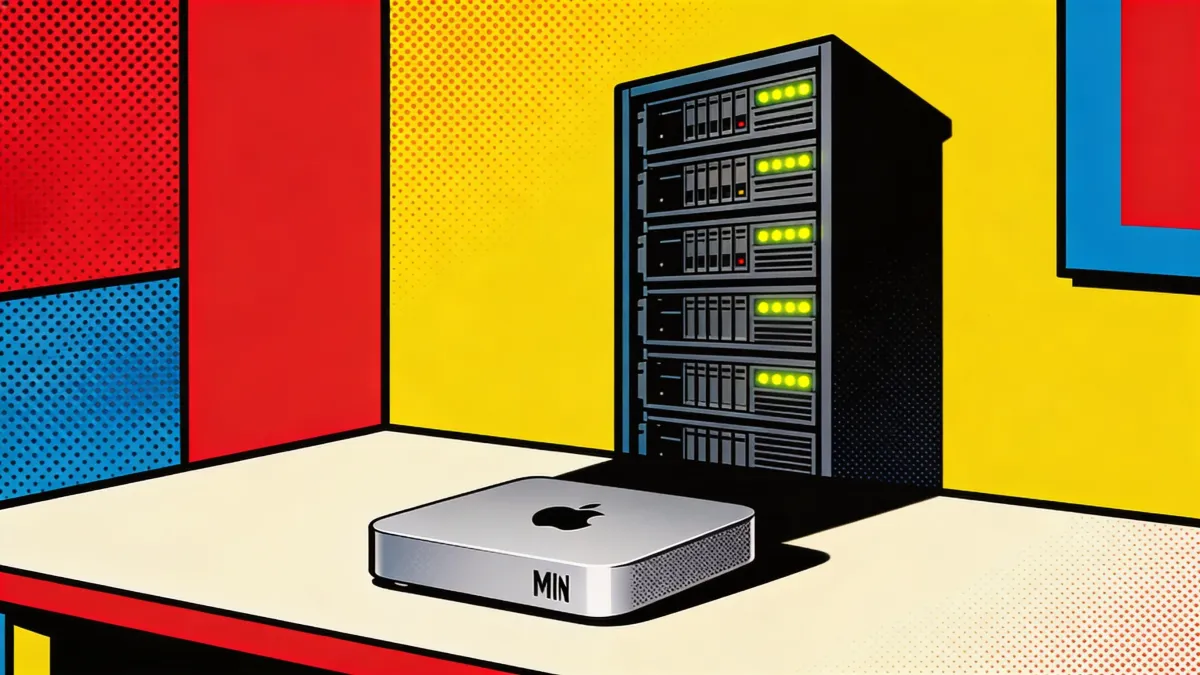

Mac Mini M4 Beats Dual RTX 3090s by 27% on LLM Inference at 40 Watts

A homelab builder sank a year into a dual-GPU tower. Apple's cheapest desktop beat it on the benchmark that matters most for running AI locally.

A Mac Mini M4 loaded with 64 gigabytes of unified memory hit 11.7 tokens per second on Qwen3 32B. A dual RTX 3090 server with 48 gigabytes of VRAM managed 9.2. That puts the Mini 27% ahead, with the server pulling 700 watts to the Mini's 40. Independent tester Stephane Thirion published the results after spending over a year building the GPU rig.
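For readers who want to check the math, here is a minimal sketch that reproduces the headline ratios from the four reported figures. The helper names are ours, not the tester's, and the power numbers are the ones quoted above rather than anything we measured.

```python
# Figures as reported in the benchmark; nothing here is independently measured.
mini_tps, rig_tps = 11.7, 9.2        # tokens per second on Qwen3 32B
mini_watts, rig_watts = 40.0, 700.0  # reported power draw in watts


def percent_faster(a: float, b: float) -> float:
    """How much faster a is than b, in percent."""
    return (a / b - 1.0) * 100.0


def tokens_per_joule(tps: float, watts: float) -> float:
    """Tokens generated per joule of energy (tokens/s divided by joules/s)."""
    return tps / watts


# The Mini's lead over the GPU rig, and the same gap seen from the rig's side.
speedup_pct = percent_faster(mini_tps, rig_tps)
slowdown_pct = (1.0 - rig_tps / mini_tps) * 100.0

# Ratio of power draw and ratio of energy efficiency.
power_ratio = rig_watts / mini_watts
efficiency_ratio = tokens_per_joule(mini_tps, mini_watts) / tokens_per_joule(rig_tps, rig_watts)

print(f"Mini is {speedup_pct:.1f}% faster; rig is {slowdown_pct:.1f}% slower")
print(f"Rig draws {power_ratio:.1f}x the power")
print(f"Mini generates {efficiency_ratio:.1f}x the tokens per joule")
```

Note that "27% faster" and "27% slower" are not interchangeable: the same gap is about 21% when measured from the Mini's score.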

The reason is architectural. LLM inference is memory-bandwidth bound. Model weights move constantly between memory and processing cores. When a model spans two GPUs, communication runs over PCIe, and that bus becomes the bottleneck. Unified memory eliminates it. One pool, no copying, no waiting.
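The bandwidth argument can be sketched as a back-of-envelope ceiling: if every weight has to be read once per generated token, decode speed cannot exceed memory bandwidth divided by the size of the weights. The snippet below is a rough illustration under that assumption only. The function name, the 4-bit quantization, and the 200 GB/s bandwidth figure are hypothetical inputs chosen for the example, not specifications from the benchmark, and the estimate ignores KV-cache reads, compute time, and any interconnect overhead.

```python
def decode_ceiling_tps(params_billion: float, bytes_per_param: float, bandwidth_gb_s: float) -> float:
    """Rough upper bound on tokens/sec for a dense model.

    Assumes each generated token requires reading all weights once,
    so throughput <= bandwidth / weight size.
    """
    weights_gb = params_billion * bytes_per_param  # total weight size in GB
    return bandwidth_gb_s / weights_gb


# Hypothetical example: a 32B-parameter model at 4-bit (0.5 bytes per parameter)
# on a machine with an assumed 200 GB/s of memory bandwidth.
ceiling = decode_ceiling_tps(params_billion=32, bytes_per_param=0.5, bandwidth_gb_s=200)
print(f"Ceiling: {ceiling:.1f} tokens/sec")

# Doubling the assumed bandwidth doubles the ceiling; adding compute does not move it.
doubled = decode_ceiling_tps(params_billion=32, bytes_per_param=0.5, bandwidth_gb_s=400)
print(f"Ceiling at 2x bandwidth: {doubled:.1f} tokens/sec")
```

Real systems land below this ceiling, and a model split across two cards adds transfer costs the formula does not capture.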

The Mac Mini caps out at 13B models on 32 gigabytes and cannot serve multiple users, fine-tune, or run batch inference. macOS throttles background processes when the display sleeps. Docker runs inside a Linux VM, adding overhead. Nvidia still owns training, image generation, and multi-user workloads.

But single-user, single-model inference, the thing most people actually do when they run AI locally, rewards bandwidth over raw compute. The Mac Mini delivers exactly that at $599. Nvidia's DGX Spark ships at $3,999 with 128 gigabytes of unified memory. Early benchmarks showed 2.7 tokens per second on Llama 70B.

The industry keeps comparing the Mac Mini to GPU servers because there is no other frame of reference. It is not a server. It is a personal inference appliance: silent, private, good enough for one person's daily AI work. Professional studios did not close when Apple shipped GarageBand. Most people just stopped needing one.

Why This Matters:

- Privacy-sensitive professionals gain local AI inference at $599 with zero API costs, keeping every prompt on their own network

- The hardware market is splitting into GPU infrastructure and personal inference appliances, a category the industry has not named yet

Reality Check

What's confirmed: Mac Mini M4 with 64GB hit 11.7 tokens/sec on Qwen3 32B vs 9.2 for dual RTX 3090s in one independent benchmark, at 40W vs 700W.

What's implied (not proven): That unified memory's architectural advantage generalizes across all models and use cases for personal AI inference.

What could go wrong: macOS energy management throttles sustained workloads, and a second concurrent user tanks performance entirely.

What to watch next: Whether Apple acknowledges the personal inference appliance category in marketing or keeps positioning the Mini as a general desktop.

The One Number

45.3% — ChatGPT's U.S. mobile market share as of January 2026, down from 69.1% one year earlier. Daily time spent with Claude tripled from ten minutes to over thirty in the same period. Separately, Ramp spending data shows 70% of businesses choosing an AI provider for the first time now pick Anthropic. The AI market that looked like a one-horse race 12 months ago has three contenders and a leader losing ground by the month.

Source: The Register / Ramp / Apptopia

Anthropic Surveys 80,508 Users, Finds Most Want Better Lives Over Productivity

Eighty thousand Claude users said they wanted productivity. Follow-up questions revealed what they actually meant: more time for life outside work.

Anthropic published what it calls the largest qualitative AI study ever conducted. Over one week in December, 80,508 Claude users across 159 countries sat down with Anthropic Interviewer, a specialized version of Claude built to conduct adaptive conversations about AI hopes and fears.

Nineteen percent named professional excellence as their top desire. Researchers pushed respondents on why they wanted productivity gains. The answers drifted. "Using AI to automate emails became, in actuality, a desire to spend more time with family," the researchers wrote. A third of all visions collapsed into one request: make room for the parts of life that modern work has crowded out.

Eighty-one percent reported concrete AI benefits, with productivity gains at 32% and cognitive partnership at 17%. But 19% said AI fell short, citing hallucinated citations and outputs requiring so much verification the time savings vanished.

Decision-making was the only tension where negatives outweighed positives. Thirty-seven percent cited unreliability, versus 22% who praised AI judgment. People who valued emotional support from AI were three times more likely to also fear becoming dependent on it. The tensions do not sort people into camps. They coexist inside the same individual.

Anthropic acknowledged selection bias. All respondents were active Claude users from disproportionately wealthy, technical backgrounds. The study captures what devoted AI users want. It does not capture what the world wants.

Why This Matters:

- AI companies pitch productivity tools, but users want AI to reclaim personal time, revealing a gap between product framing and actual demand

- Decision-making unreliability outweighs perceived benefits, flagging the largest trust deficit in current AI products

AI Image of the Day

Prompt: Van Gogh style painting of the Bund in Shanghai, historic architecture along the Huangpu River, thick impasto brushstrokes, swirling clouds in the sky, bold cobalt blue and chrome yellow palette, post-impressionist masterpiece, rich oil paint texture, golden hour lighting, 4k, detailed --ar 3:4

Apple Blocks Replit and Vibecode iOS Updates While Promoting AI Coding in Xcode

Apple froze two of the most popular vibe coding apps on iOS, citing rules written before AI could generate software from a prompt.

Replit and Vibecode cannot push App Store updates after Apple flagged violations of Guideline 2.5.2, a rule prohibiting apps from executing code that changes their functionality after review. When Replit generates an app from a user's prompt, it displays that app inside the Replit app itself. Apple considers that a violation.

Replit's path back: push generated apps to an external browser. Vibecode faces a steeper ask. Apple told the company to remove the ability to generate software specifically for Apple devices, stripping a core selling point.

The freeze carries a real cost. Replit has not pushed a mobile update since January and fell from first to third in Apple's free developer tools rankings. For a company that raised $400 million at a $9 billion valuation this month, two months of frozen updates amount to a competitive penalty applied through process.

Apple shipped Xcode updates in February with built-in AI coding agents from OpenAI and Anthropic. Same concept as Replit. Apps built through Xcode go through Apple's review process. Third-party vibe coding tools produce apps that can bypass the App Store entirely. Vercel's v0 has not received the same treatment, likely because it generates web apps rather than software for Apple devices. The vibe coding market has raised billions. The question is no longer whether Apple can enforce Guideline 2.5.2, but whether the guideline makes sense when anyone with a prompt can ship software.

Why This Matters:

- Apple constrains third-party AI coding tools while promoting its own, creating a two-tier system for who builds software on iOS

- Vibe coding apps face a structural iOS disadvantage that could push developers and non-coders toward web-only tools or Android

🧰 AI Toolbox

How to Run Personalized Product Demos 24/7 Without a Sales Rep Using Naoma

Naoma is an AI video sales agent that delivers live, interactive product demonstrations to website visitors on demand. A prospect clicks a button, and an AI avatar walks them through your product in a personalized demo adapted to their role, industry, and goals. No scheduling, no waiting, no time zone constraints. It runs in 33 languages and logs every interaction to your CRM. The company claims conversion rates jump from 1-2% to 6-20%. Usage-based pricing.

How to get started:

- Go to naoma.ai and sign up for an account

- Connect your product information, demo scripts, and CRM system

- Choose your AI agent's appearance: pick a human-like avatar or a branded mascot

- Configure personalization rules so the demo adapts based on visitor role, industry, and stated goals

- Embed the demo widget on your website, in-app, or include it in email outreach

- Prospects click to start an instant, conversational product demo with no scheduling required

- Review qualified leads, transcripts, and follow-up actions logged automatically in your CRM

URL: https://www.naoma.ai

What To Watch Next (24-72 hours)

- Triple Witching + S&P Rebalance: Friday brings the first quarterly Triple Witching of 2026 alongside the S&P 500 rebalance. AI infrastructure stocks with heavy options exposure could see outsized moves unrelated to fundamentals. Highest-volume session of Q1.

- FedEx Earnings: Fiscal Q3 results after the bell today. AI-driven route optimization makes FedEx an AI-adjacent bellwether. Friday morning's reaction sets the tone for industrials heading into Q2.

- Y Combinator Demo Day: W26 batch presents Tuesday in San Francisco. Over half the companies sit in AI and machine learning, with agentic AI dominating. First batch since GTC's inference-cost announcements.

🛠️ 5-Minute Skill: Turn a Generic Image Request Into a Photorealistic Scene of a Real Place

You need an image of a specific building, landmark, or species for a presentation. You type "photo of a church in southern France" and get something obviously AI-generated with fictional architecture. Google's latest image model can now search the web for visual references before generating, producing accurate depictions of real places.

Your raw input:

"I need a realistic photo of a European church for my client deck"

The prompt:

Generate a cinematic, golden-hour photograph of the main historical church in Voiron, France. Ensure the architectural details, the spire, the surrounding square, and the setting are accurate to reality. Street-level perspective, warm directional light.

What you get back:

A photorealistic image with accurate architectural details pulled from web reference images, not hallucinated spires or invented windows.

Why this works

The key word is a real, specific place name. Generic prompts produce generic results. When you name an actual location, the model searches for reference images and reproduces real details. Works for buildings, landmarks, animal species, and botanical specimens. Does not work for people.

What to use

Google Nano Banana 2: Only model with visual grounding that searches real images before generating. Best for real locations and specific species. Watch out for: Cannot search for people.

ChatGPT: Handles general image requests well but cannot look up real locations for reference. Better for abstract or fictional scenes.

AI & Tech News

Walmart Drops OpenAI's Instant Checkout After Conversion Rates Fall 3x Short

Walmart will embed its proprietary chatbot Sparky directly into ChatGPT and Google Gemini after finding that OpenAI's built-in checkout converted at one-third the rate of transactions where users clicked out to complete purchases externally. The shift signals that major retailers want control over the customer experience rather than outsourcing it to AI platforms.

PwC Tells Partners to Adopt AI or Leave the Firm

PwC's US chief Paul Griggs told partners that those who resist AI have no place at the consultancy, as the firm plans to convert professional services into AI-powered automated tools. The ultimatum marks one of the most aggressive moves by a Big Four firm to reshape operations around AI.

Senate Releases Federal AI Framework to Override State Laws

Senator Marsha Blackburn released the TRUMP AMERICA AI Act, a federal framework designed to replace state AI laws with a unified national standard. The draft incorporates the Kids Online Safety Act and the NO FAKES Act into sweeping national AI policy.

Apple AI App Revenue Triples to Nearly $1 Billion Annual Pace

Revenue from generative AI apps on Apple's platform surged from $35 million in January 2025 to $101 million by August, with ChatGPT commanding 75% of that total. Grok trails at roughly 5% market share on Apple's platform.

Apple's China Smartphone Sales Jump 23% While Market Declines 4%

Apple posted a 23% year-over-year increase in China smartphone sales during the first nine weeks of 2026, outperforming a broader market that declined 4%. Rising Android phone prices drove the industrywide downturn while Apple gained share.

Google Upgrades Stitch With AI-Native Design Canvas and Reasoning Agent

Google announced a major update to Stitch, its AI design tool that transforms natural language prompts into high-fidelity UI designs, now featuring a reasoning design agent. The platform lets anyone create professional-quality interface designs by describing what they want in plain language.

Figma Stock Falls 8% on Google Stitch Threat, Down 80% From IPO

Figma shares dropped 8% after Google's Stitch announcement, adding to a devastating trajectory that puts the stock down roughly 80% from its August 2025 IPO price. AI-powered design tools are eroding the position Figma held as the default UI design platform.

Chip Testing Becomes Latest Chokepoint in AI Semiconductor Supply Chain

Testing companies are racing to keep pace with increasingly complex AI chip designs from Nvidia and Google, with industry executives identifying testing as the newest bottleneck. Shares of Advantest, Teradyne, and Chroma have more than tripled over the past year on surging demand.

Fal Seeks $8 Billion Valuation as GenAI Hosting Revenue Doubles

The cloud service for hosting generative AI models is in talks to raise $300-350 million at an $8 billion valuation. Annualized revenue hit $400 million, doubling from $200 million in October 2025.

Baidu and Alibaba Raise Cloud Prices Up to 30% on AI Demand

Baidu followed Alibaba in hiking AI computing cloud prices by 5-30%, with new pricing taking effect from April 18. Analysts describe the increases as a response to surging demand for AI infrastructure across China.

🚀 AI Profiles: The Companies Defining Tomorrow

Edra

Edra turns operational data into a self-writing automation platform. The New York startup just emerged from stealth with $30 million, Sequoia's backing, and enterprise customers including HubSpot and easyJet. 🧠

Founders

Eugen Alpeza and Yannis Karamanlakis met 13 years ago studying computational statistics and machine learning at University College London. Both spent nearly a decade at Palantir, where they co-created the Forward Deployed AI Engineer role. Alpeza led the launch of Palantir's AI Platform and ran major commercial accounts including AT&T. Karamanlakis was Palantir's first Forward Deployed AI Engineer. The company has roughly 17 employees across New York and London.

Product

Edra connects to ServiceNow, JIRA, Zendesk, and Outlook, then reverse-engineers how a business operates from tickets, logs, emails, and chat histories. It auto-generates transparent, editable playbooks rather than requiring humans to document processes. AI agents execute those playbooks inside existing tools. Current deployments focus on IT service management and customer support for HubSpot, ASOS, Cushman & Wakefield, and easyJet.

Competition

ServiceNow acquired Moveworks for $2.85 billion to add conversational AI to its IT platform. Serval raised $75 million from Sequoia at a $1 billion valuation to replace ServiceNow entirely. Glean raised $260 million for enterprise AI search. Edra's edge: it sits on top of existing systems and auto-generates the knowledge that powers automation. Its risk: ServiceNow's Now Assist is building the same capabilities inside the platform enterprises already pay for.

Financing 💰

$30 million total across a $6.5 million seed led by 8VC and A* (Kevin Hartz) in October 2024 and a Series A led by Sequoia. Both investors participated in both rounds.

Future ⭐⭐⭐⭐

Palantir built a $60 billion company by embedding engineers inside customers to make data useful. Edra is betting AI can do the embedding. The founders literally created the role they are now automating. The customer list crosses airlines, SaaS, commercial real estate, and fashion retail, which suggests the product generalizes. Seventeen employees and four enterprise logos is an unusual ratio. The question: can Edra scale before ServiceNow ships the same capability to everyone already paying for its platform? 🗽

🔥 Yeah, But...

FBI Director Kash Patel told the Senate Intelligence Committee on Wednesday that the FBI is buying commercially available data to track people's movements and location history. It is the first confirmation that the agency is actively purchasing such data since former Director Christopher Wray said in 2023 that the practice had stopped. The Supreme Court ruled in 2018 that law enforcement needs a warrant to obtain location data from cell phone providers. Data brokers sell the same information without one.

Sources: Politico, March 18, 2026

Our take: The Supreme Court ruled in 2018 that the government needs a warrant to get your location data from your phone company. The FBI said "understood" and started buying the same data from brokers who got it from your phone company.

Committee Chair Cotton defended the practice because the data is "commercially available," which is true in the same way a locksmith's tools are commercially available.

Senators Wyden and Lee introduced legislation requiring a warrant to buy what already requires a warrant to subpoena. The Defense Intelligence Agency confirmed it does the same thing. Patel called the data "valuable intelligence." The Fourth Amendment calls it "effects." The difference is apparently a receipt.

Implicator
