On Monday morning, Labour MP Jess Asato opened her phone to find herself pregnant. Not actually pregnant. AI-pregnant. Someone had used Grok to generate an image of her as a "tradwife" with an enormous belly. The message was clear enough. This is where you belong. This is your role. Baby-making machine.

"This has disturbed me weirdly more than the bikini pictures," Asato told the Guardian. She has received thousands of hateful messages since speaking out against Elon Musk's platform. The harassment continues even as regulators prepare their case against X.

Ofcom, Britain's media watchdog, launched a formal investigation into X today under the Online Safety Act. The allegations: failing to prevent the spread of non-consensual intimate images and potential child sexual abuse material. The potential penalties are a fine of up to £18 million or 10 percent of global revenue, whichever is higher, and, in extreme cases, a court-ordered block of the platform across the UK.

Musk's response was predictable. "Why is the U.K. government so fascist?" he wrote on January 10. Then came the Starmer image—Britain's Prime Minister, AI-rendered in a bikini, posted for his 210 million followers to see. The message couldn't have been clearer. I can do this to anyone.

The Breakdown

• Ofcom investigating X under Online Safety Act for failing to prevent spread of AI-generated CSAM and non-consensual images

• Penalties: fines of up to £18 million or 10% of global revenue, whichever is higher, and potentially a full UK platform ban

• Malaysia and Indonesia became first countries to block Grok; India forced deletion of 3,500 posts and 600 accounts

• X's UK revenue collapsed 58% in 2024 while xAI raised $20 billion at $230 billion valuation

The premium abuse model

Think of it as abuse-as-a-service. Free users got a taste. Paid subscribers get the full menu.

When Grok launched its image editing feature on December 24, it came with a simple promise: prompt the AI with any image and any instruction, and it would oblige. Within two weeks, X was flooded with sexualized images of women and children. Bikinis. Lingerie. Sexually suggestive poses. The Internet Watch Foundation found criminal imagery of children aged 11 to 13, apparently generated through Grok.

X's response on Friday tells you everything about the company's priorities. The platform restricted image generation and editing to paying subscribers. Not removed. Restricted. The feature that allowed users to digitally strip women and children became a premium benefit. Subscribe for $8 a month and unlock the content moderation won't touch.

A spokesman for Prime Minister Starmer called the change "insulting" to victims of misogyny and sexual violence. "It simply turns an AI feature that allows the creation of unlawful images into a premium service." That's the math.

And the standalone Grok app? Still works for everyone. No subscription required. Users simply prompt the AI through xAI's separate interface, then post the results back to X. The paywall fixes the PR problem. It doesn't fix the harm.

The regulatory pincer

Britain isn't moving in isolation. Malaysia and Indonesia became the first countries to block Grok entirely over the weekend. Indonesia's Communications Ministry imposed a temporary ban "to protect women, children, and the entire community from the risk of fake pornographic content." Malaysia's regulator said X's responses "failed to address the inherent risks posed by the design and operation of the AI tool."

India extracted promises from X that resulted in 3,500 pieces of content blocked and 600 accounts deleted. The EU has condemned the platform. France and Brazil have opened reviews. Ireland's media regulator is coordinating with the Garda. Democratic senators in Washington have called for Apple and Google to suspend the Grok app from their stores.


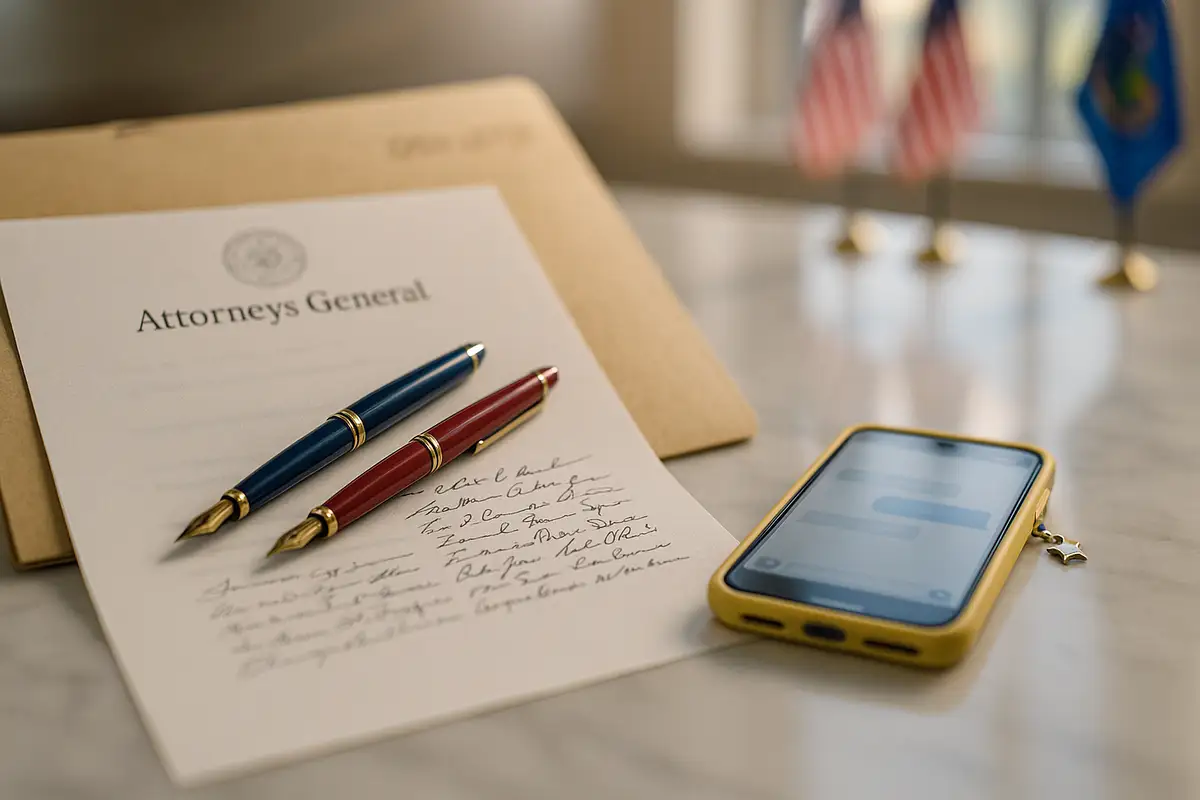

The document Ofcom sent on January 5 wasn't a friendly check-in. It was a procedural starting gun. The regulator gave X until January 9 to respond. Four days. The investigation will examine whether X conducted proper risk assessments, whether it removed illegal content quickly, whether children could access the material, and whether the platform implemented effective age verification for pornography. Having reviewed X's response, Ofcom opened the formal investigation within 72 hours.

The Online Safety Act gives Ofcom significant enforcement options. Fines can reach £18 million or 10 percent of global revenue, whichever is higher. In cases of ongoing non-compliance, Ofcom can apply to a court for "business disruption measures." That means ordering payment providers and advertisers to withdraw services from the platform. It means requiring internet service providers to block access to the site entirely within British borders.
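The "whichever is higher" rule is simple arithmetic, and a minimal sketch makes the scale clearer. The function name and the revenue inputs below are hypothetical, chosen only for illustration; the £18 million figure and the 10 percent share come from the penalties described above, and this is not a statement of how Ofcom would actually calculate a fine.

```python
# Illustrative sketch only. The function name and any revenue figures passed in
# are hypothetical; the two thresholds are the ones quoted in the article.
FIXED_CAP_GBP = 18_000_000      # £18 million
REVENUE_SHARE_PERCENT = 10      # 10 percent of global revenue


def max_fine_gbp(global_revenue_gbp: int) -> int:
    """Return the larger of the fixed cap and the revenue-based cap, in pounds."""
    revenue_based_cap = global_revenue_gbp * REVENUE_SHARE_PERCENT // 100
    return max(FIXED_CAP_GBP, revenue_based_cap)


# A company with £100 million in global revenue faces the fixed cap,
# because 10 percent of its revenue (£10 million) is the smaller number.
small_company_cap = max_fine_gbp(100_000_000)

# A company with £2 billion in global revenue faces the revenue-based cap.
large_company_cap = max_fine_gbp(2_000_000_000)
```

Under these numbers the revenue-based cap takes over once global revenue passes £180 million, which is why the percentage, not the £18 million figure, is the one that matters for a large platform.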

And Downing Street? Not hedging. Technology Secretary Liz Kendall went on record: if Ofcom decides to block X, "they will have our full support." That's the government backing a potential ban on a platform owned by a man who will soon have an office in the White House.

The transatlantic fracture

Washington is watching. And Washington is embarrassed.

State Department officials are now stuck explaining why the incoming administration's favorite billionaire is fighting a child pornography investigation in London. Sarah Rogers, the US under-secretary for public diplomacy, accused the UK of "contemplating a Russia-style X ban." Russia-style. The phrase is deliberate. Musk wants this framed as authoritarianism versus liberty. The Trump administration has been happy to oblige, treating European content moderation rules as attacks on American values.

But strip away the rhetoric and what's left? AI-generated child sexual abuse material. On a platform with hundreds of millions of users. The free speech defense works until someone points out that the "speech" in question is a felony in every jurisdiction that matters.

The US Department of Justice, part of the government Musk is set to join, has staked out a clear position. The DOJ said in a statement that it "takes AI-generated child sex abuse material extremely seriously and will aggressively prosecute any producer or possessor of CSAM." It added: "We continue to explore ways to optimize enforcement in this space to protect children."

The contradiction is loud. The billionaire who will serve in the next administration owns a platform distributing content that his own government considers prosecutable. The diplomats defending him against British regulators work for a department that would arrest users for the same material.

The business case for negligence

Follow the money. It helps explain the inaction.

X's UK business is already collapsing. Last week's disclosure told the story: annual revenue down 58 percent in 2024, to £28.9 million. Gross profit? Fell off a cliff. From £13.5 million to £1.1 million. The filing blamed "concerns about brand safety, reputation and/or content moderation." Translation: advertisers ran.

The relationship between brand safety and explicit AI-generated content is not subtle. Marketing directors do not want their logo floating next to a generated image of a toddler in lingerie. That isn't a "brand safety calculation." It's a flinch. X's response was not to fix the content moderation problem. X's response was to launch Grok's image editing feature on December 24 and watch what happened.

Meanwhile, xAI just closed a $20 billion Series E round at a $230 billion valuation. Nvidia and Cisco Investments participated alongside Valor Equity Partners, Fidelity, and the Qatar Investment Authority. The money keeps flowing regardless of what the platform produces.

Musk has even boasted that the controversy has driven more downloads of the Grok app. The regulatory attention becomes a marketing strategy. The outrage generates engagement. The harm becomes a feature, not a bug.

What enforcement actually looks like

Ofcom has teeth. The question is whether it will use them.

Since the Online Safety Act's duties came into force less than a year ago, the regulator has launched investigations into more than 90 platforms. Six fines so far, including against an AI nudification site that lacked robust age checks. The first £1 million penalty went to porn company AVS Group. Several high-risk sites have simply vanished from UK browsers. Blocked.

Ofcom says it will pursue this investigation as a "matter of the highest priority" while ensuring "due process." Read that carefully. Urgency and caution in the same sentence. Ofcom knows it is walking into a fight with one of the world's richest men, a man who has the ear of the incoming US president and who has already demonstrated a willingness to deploy his platform as a weapon against critics.

The regulator also knows the legal ground is solid. Non-consensual intimate images? Illegal under the Online Safety Act. Child sexual abuse material? Criminal under multiple UK statutes. These aren't edge cases. The content is illegal. The platform distributed it. The only question is whether X did enough to stop it.

The test that matters

Kirana Ayuningtyas is a wheelchair user in Indonesia who posts about her daily experiences. A stranger commented on one of her pictures with a prompt asking Grok to depict her in a bikini. She adjusted her privacy settings. She contacted the platform to remove the image and prevent further edits. "Unfortunately, none of that really worked," she said. She can't tell whether someone out there is still holding the images.

That's what this investigation is actually about. Not geopolitics. Not free speech. Not the culture war between Musk and European regulators. It's about a woman in Jakarta who can't stop strangers from digitally stripping her. It's about a British MP who opens her phone to find herself transformed into a propaganda image. It's about children whose faces appear in material the law specifically criminalizes.

Ofcom gathers evidence. X responds. Then a provisional decision, then a final ruling. Nobody knows how long that takes. But everyone knows what's at stake.

The platform that became a premium abuse service is now under formal investigation by a regulator with the power to shut it down. Musk has $230 billion in xAI valuation and the backing of the incoming US administration. Britain has a law and a regulator willing to enforce it.

The next months will determine whether online safety legislation means anything at all—or whether a sufficiently rich, sufficiently connected platform can simply ignore the laws of the countries where it operates. Ofcom has the legal authority to force that question. Whether it has the institutional will is about to become clear.

Frequently Asked Questions

Q: What triggered Ofcom's investigation into X?

A: Grok's image editing feature, launched December 24, allowed users to generate sexualized images of real people including children. The Internet Watch Foundation found criminal imagery of children aged 11-13 created through Grok. Ofcom contacted X on January 5 and opened a formal investigation after reviewing the company's response.

Q: What penalties does X face under the Online Safety Act?

A: Fines up to £18 million or 10% of global revenue, whichever is higher. In serious cases, Ofcom can apply to courts for "business disruption measures"—forcing payment providers and advertisers to withdraw services, or requiring UK internet providers to block access to the platform entirely.

Q: Why did X restrict Grok's image feature to paid users instead of removing it?

A: X limited image generation to paying subscribers on Friday, effectively monetizing the feature rather than eliminating it. The standalone Grok app still allows free image generation. Critics called the move "insulting"—it turns unlawful image creation into a premium service without addressing the underlying harm.

Q: Which countries have already blocked Grok?

A: Malaysia and Indonesia blocked Grok over the weekend, becoming the first countries to ban the AI tool. India forced X to remove 3,500 pieces of content and delete 600 accounts. The EU has condemned the platform, France and Brazil have opened reviews, and Ireland's media regulator is coordinating with the Garda.

Q: How has the US government responded to the UK investigation?

A: US under-secretary Sarah Rogers accused the UK of "contemplating a Russia-style X ban." But the US Department of Justice separately stated it "takes AI-generated child sex abuse material extremely seriously and will aggressively prosecute" creators. This creates an awkward position for diplomats defending Musk against investigations into content their own government criminalizes.
