On April 2, a GitHub issue landed with the kind of numbers that make a vendor stop and count.

The author said she had analyzed 6,852 Claude Code session files, 17,871 thinking blocks, and 234,760 tool calls. The claim was not that Claude felt off after a bad afternoon. It was that the tool had started reading less code before editing it, stopping earlier, looping more often, and requiring more human correction in complex engineering work. Within two weeks, the complaint had become a broader accusation: Anthropic had nerfed Claude.

That accusation is too clean. The public evidence does not prove that Anthropic secretly swapped model weights, lowered precision, or degraded Opus 4.6 to save compute. The viral benchmark drop is weaker than it looks. Anthropic has denied demand-based quality reduction before, and the strongest public reports still lack independent raw data.

But the denial misses the larger problem. Anthropic did not need to nerf Claude for Claude Code to become a different product. Effort defaults, adaptive thinking, cache duration, context compaction, quota policy, and status incidents can all change the experience while the model name stays the same.

That is the black box Anthropic built. Now users are tapping the side and asking what moved inside.

Key Takeaways

- The public case for a secret Claude nerf is weak, but the product changed anyway.

- Adaptive thinking, effort defaults, cache duration, and quotas now shape Claude Code quality.

- The strongest user evidence is a measured workflow regression, not a viral benchmark chart.

- Anthropic needs session telemetry so customers can see which operating condition moved.

AI-generated summary, reviewed by an editor. More on our AI guidelines.

The strongest complaint is not the viral benchmark

The best evidence against Claude Code is not the BridgeBench chart that raced across X. It is the GitHub issue that made a narrow, measurable claim about one demanding workflow.

The issue's strongest number is the reported fall in reads before edits, from 6.6 to 2.0. For a coding agent, that is not a cosmetic metric. Reading before editing is the difference between inspecting a codebase and guessing at it. If a tool opens fewer files, checks fewer call sites, and writes sooner, it may save tokens on the current turn while creating more cleanup work across the session.
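
The metric itself is easy to reproduce on your own logs. The sketch below is one possible way to compute it, not the issue author's method, which was not published in reusable form. The tool names in `READ_TOOLS` and `EDIT_TOOLS` are assumptions for illustration; map them to whatever your session files actually record.

```python
from typing import Iterable, Optional

# Assumed tool names for illustration; adjust to whatever your session logs record.
READ_TOOLS = {"Read", "Grep", "Glob"}
EDIT_TOOLS = {"Edit", "Write"}


def reads_per_edit(tool_calls: Iterable[str]) -> Optional[float]:
    """Return read-type calls divided by edit-type calls, or None if no edits."""
    reads = edits = 0
    for name in tool_calls:
        if name in READ_TOOLS:
            reads += 1
        elif name in EDIT_TOOLS:
            edits += 1
    return reads / edits if edits else None


# Hypothetical session: three inspections, one edit, one shell command.
session = ["Read", "Grep", "Read", "Edit", "Bash"]
print(reads_per_edit(session))  # 3.0
```

Whether searches count as reads is a judgment call. What matters is applying the same definition to every session you compare.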

None of that proves a global model downgrade. The dataset is one user's environment, and the raw logs were not published as a neutral benchmark. Claude Code versions, hooks, custom prompts, repository state, effort settings, cache state, and context size could all matter. The right inference is narrower and more uncomfortable: a serious user measured a real workflow regression, and Anthropic has not given users enough session-level data to isolate the layer that changed.

That is where the company's public response becomes more revealing than a denial. In the Hacker News discussion and coverage summarized by VentureBeat, Claude Code lead Boris Cherny reportedly pointed to adaptive thinking, medium effort defaults for some users, and the distinction between visible thinking and internal reasoning. He also reportedly said telemetry showed some disputed sessions were high effort, which pushes the issue away from one simple setting and toward a deeper product-orchestration problem.

Anthropic sounded defensive because it had a defensible point and an exposed flank. No, a redacted thinking header does not automatically mean less reasoning. No, a bad session does not prove secret model fraud. But yes, Claude Code had become a stack of hidden policy choices that users could not fully inspect.

The model name stopped being the product

Opus 4.6 was introduced as a better model for coding, agentic work, larger codebases, code review, debugging, and long-context tasks. Anthropic's own launch material stressed adaptive thinking, effort controls, context compaction, and a 1 million token context beta. That framing matters. Claude Code is not just a model endpoint. It is a delivered system.

The company now recommends adaptive thinking for Claude 4.6 models. Its current documentation says Claude decides when and how much to think, while lower effort levels may skip thinking for simpler problems. That is a rational design for cost and latency. It is also a failure point for software work, because a turn can look simple before the agent has read enough code to know it is not.

If you ask Claude to fix one generator bug, the visible edit may sit in a single file. The real work may sit in templates, generated output, tests, migrations, and downstream consumers. A reasoning allocator that classifies the turn too early can produce the exact behavior users hate: a plausible local patch, followed by a slow argument with reality.

This is why "same model" has become a weak promise. A model can be unchanged while its operating conditions change. The black box can move from high effort to medium effort. It can decide to think less on a turn. It can compact context differently. It can preserve less session state. It can keep the badge on the outside while changing the machinery inside.

Anthropic's Claude Code changelog makes the point without intending to. Effort defaults changed for some segments, then Anthropic moved API-key users, Bedrock, Vertex, Foundry, Team, and Enterprise users to high effort on April 7. That does not prove a regression. It proves the default was a live product variable.

For a casual chat user, that may be fine. For a team letting an agent edit production code, it is a procurement problem.

The cache fight explains the anger

The second GitHub complaint, about cache duration, does not prove Claude became less intelligent. It explains why users felt cheated.

Anthropic's prompt-caching docs say automatic caching uses a 5-minute time-to-live by default, with a 1-hour option at higher write cost. The disputed GitHub issue alleged that Claude Code behavior shifted from mostly 1-hour cache use in February to much more 5-minute behavior around March 6, based on 119,866 API calls across two machines. The Register reported that Anthropic had moved many Claude Code requests back toward five-minute caching, while Jarred Sumner argued that shorter caching can be cheaper for many request patterns.

Both sides can be right. A one-shot request does not need an expensive hourlong cache. A developer staring at test output for ten minutes does. Long coding sessions are full of pauses: read a diff, run a build, answer Slack, review a failing test, return to the agent. If the cache expires during that gap, the next turn has to write the same context into the cache again, at write prices, instead of reading it back cheaply.
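
A toy cost model shows how both positions hold at once. It is a sketch under loud assumptions: the multipliers (1.25x base input price for a 5-minute cache write, 2x for a 1-hour write, 0.1x for a cache read) reflect our reading of Anthropic's published pricing and should be checked against current docs. The 100,000-token prefix, the gap pattern, and the rule that each cache hit refreshes the TTL are illustrative simplifications. `session_cost` is a hypothetical helper, not an Anthropic API.

```python
def session_cost(gaps_min, ttl_min, write_mult, read_mult=0.1, prefix_tokens=100_000):
    """Cost of resending one cached prefix, in base-input-token units.

    gaps_min: idle minutes between consecutive turns (assumed pattern).
    The first turn always pays a cache write; a later turn pays a cheap read
    if the gap fits inside the TTL, otherwise it pays another write.
    """
    cost = write_mult * prefix_tokens
    for gap in gaps_min:
        mult = read_mult if gap <= ttl_min else write_mult
        cost += mult * prefix_tokens
    return cost


one_shot = []               # a single request, no follow-up turns
paused = [2, 10, 3, 12]     # hypothetical coding session with two long pauses

for label, gaps in (("one-shot", one_shot), ("paused session", paused)):
    five = session_cost(gaps, ttl_min=5, write_mult=1.25)
    hour = session_cost(gaps, ttl_min=60, write_mult=2.0)
    print(f"{label}: 5-minute TTL {five:,.0f} vs 1-hour TTL {hour:,.0f}")
```

Under these assumptions the one-shot request is cheaper with the 5-minute cache (about 125,000 units against 200,000), while the paused session is cheaper with the 1-hour cache (about 240,000 against 395,000). The model ignores new tokens added each turn, so treat the ratio, not the absolute figures, as the point.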

Quota burn then becomes part of perceived intelligence. A model that gets fewer deep attempts before the limit hits feels worse. A session that loses cache after a pause feels worse. A user who starts rationing effort feels worse. The checkpoint may not have changed, but the work envelope did.

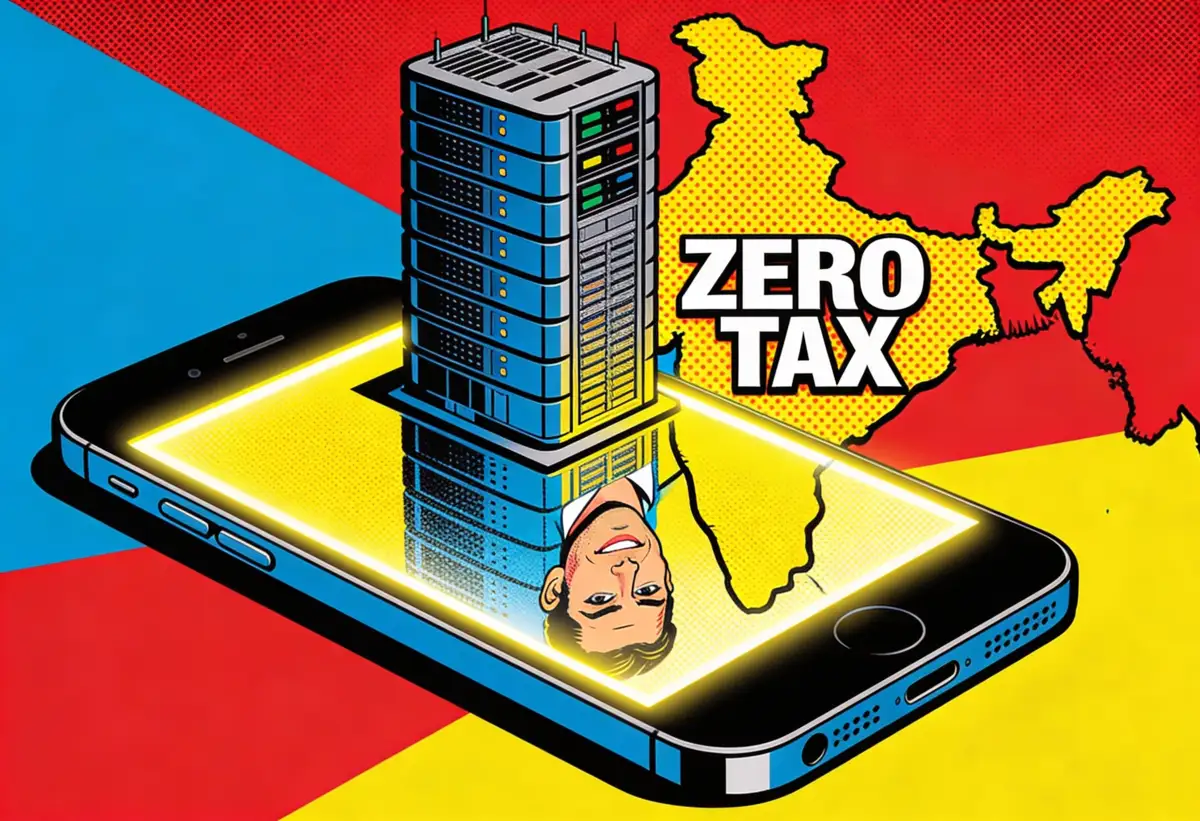

Anthropic's institutional emotion here is anxiety. The company is trying to sell frontier coding agents into flat-rate products while agentic workflows gulp tokens in loops, branches, retries, background sessions, and long contexts. That math forces optimization. But optimization without visibility feels like shrinkflation.

The benchmark proof is too thin

The BridgeBench claim gave the controversy its easy headline: Opus 4.6 supposedly fell from 83.3 percent accuracy to 68.3 percent in a retest. That number traveled because it turned an operational complaint into a scoreboard.

It should not carry the case. VentureBeat and Yahoo Tech reported that critics argued the first run used six tasks while the later run used 30. If the task set changed, the comparison is not a clean before-and-after measurement. It is a different test wearing the same label.

Benchmark research points in the same direction. SWE-rebench warns that coding-agent trajectories are stochastic and calls for repeated runs rather than single samples. Terminal-Bench leaderboards show the same model can score very differently under different agent scaffolds. That matters because Claude Code is a scaffold, not just a model.
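
Sample size alone makes the comparison fragile. The sketch below computes a 95 percent Wilson score interval for each reported score. It assumes every task is an independent pass/fail trial, which the public reporting does not confirm for BridgeBench, so read it as a rough illustration of small-sample uncertainty and not as a reanalysis of the benchmark.

```python
import math


def wilson_interval(p_hat, n, z=1.96):
    """Wilson score interval for an observed proportion p_hat over n trials."""
    denom = 1 + z * z / n
    center = (p_hat + z * z / (2 * n)) / denom
    half = z * math.sqrt(p_hat * (1 - p_hat) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


# Reported scores and task counts; the pass/fail scoring model is an assumption.
first_run = wilson_interval(0.833, 6)
retest = wilson_interval(0.683, 30)

print(f"first run (6 tasks): {first_run[0]:.2f} to {first_run[1]:.2f}")
print(f"retest (30 tasks):   {retest[0]:.2f} to {retest[1]:.2f}")
```

On these assumptions the six-task run spans roughly 0.44 to 0.97 and the 30-task run roughly 0.50 to 0.82. The retest score sits comfortably inside the first run's interval, so the two numbers cannot distinguish a real decline from noise even before the change in task set is considered.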

The viral chart may still be worth watching. It is not proof.

That distinction matters because Anthropic benefits when critics overclaim. If the public case depends on a shaky benchmark screenshot, the company can knock down the screenshot and leave the harder product question standing behind it. The harder question is not whether one benchmark moved. It is whether customers can tell which operating conditions they are getting when they pay for Claude Code.

Anthropic has seen this movie before

Anthropic's strongest defense is its own 2025 quality postmortem. The company said it does not reduce model quality because of demand, time of day, or server load. That cuts directly against the most aggressive nerf theory.

The same postmortem also acknowledged three infrastructure bugs that degraded Claude's response quality. Users noticed. Anthropic's internal evaluations did not catch everything quickly. Privacy controls made debugging harder without user reports. In other words, the company has already admitted that real quality degradation can happen in production without a simple demand-throttling conspiracy.

That history should make Anthropic more humble, not less. A user saying "Claude got worse" may be wrong about the cause and right about the symptom. The past failure mode was not mass hallucination by customers. It was production complexity outrunning the company's measurement system.

The current Claude Code fight fits that pattern too well. Adaptive thinking can under-allocate reasoning. Effort defaults can shift. Cache policy can punish long sessions. Quotas can change behavior before users notice why. Context compaction can lose detail. Status incidents can sit outside the user's mental model. Stack those layers together and a paying developer can experience a worse tool without anyone inside Anthropic touching the model weights.

That is why a denial is not enough.

Trust now needs telemetry

The fix is not for Anthropic to promise that Claude is still smart. The fix is to show users what operating state they bought.

Every serious Claude Code session should expose the model identifier, Claude Code version, effort level, adaptive-thinking state, context tier, compaction events, cache TTL, cache reads, cache writes, quota accounting, and any correlated incident state. Anthropic does not need to reveal chain-of-thought or proprietary routing logic to do this. It needs to publish the product facts that determine whether two sessions are comparable.
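
As a concrete picture, the record could be as small as the sketch below. Everything in it is hypothetical: `SessionState`, its field names, and the placeholder values are ours, and nothing here describes an interface Anthropic currently exposes. The point is only that two sessions become comparable once the operating conditions are written down.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class SessionState:
    """Hypothetical per-session record of operating conditions."""
    model_id: str
    client_version: str
    effort: str
    adaptive_thinking: bool
    context_tier: str
    compaction_events: int
    cache_ttl_seconds: int
    cache_read_tokens: int
    cache_write_tokens: int
    quota_used_fraction: float
    incident_active: bool


# Fields that describe configuration, as opposed to per-session usage counters.
CONDITION_FIELDS = (
    "model_id", "client_version", "effort", "adaptive_thinking",
    "context_tier", "cache_ttl_seconds", "incident_active",
)


def differing_conditions(a: SessionState, b: SessionState) -> list:
    """List the operating conditions that differ between two sessions."""
    return [name for name in CONDITION_FIELDS if getattr(a, name) != getattr(b, name)]


# Placeholder values, not real identifiers or measurements.
before = SessionState("model-x", "0.0.1", "high", True, "standard",
                      0, 3600, 900_000, 100_000, 0.40, False)
after = replace(before, effort="medium", cache_ttl_seconds=300)

print(differing_conditions(before, after))  # ['effort', 'cache_ttl_seconds']
```

A diff like this is what turns "it felt worse" into a question a vendor can answer: the model identifier did not move, but two other dials did.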

Teams should do the same on their side. Pin effort settings. Record Claude Code versions. Keep a small suite of representative tasks. Run repeated trials after vendor changes. Measure files read before edit, tests passed, correction turns, cache behavior, and quota burn. If you depend on a coding agent, "it felt worse this week" is too weak for procurement and too vague for vendor escalation.
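
A minimal version of that comparison might look like the following sketch. The helper name, the 25 percent threshold, and all trial values are made up for illustration. A real harness would want more trials per task and a proper interval or significance test instead of a bare difference in means.

```python
from statistics import mean, stdev


def flag_regression(baseline, current, min_drop=0.25):
    """True if the current mean fell by more than min_drop (as a fraction) of the baseline mean."""
    base_mean = mean(baseline)
    if base_mean == 0:
        return False
    return (base_mean - mean(current)) / base_mean > min_drop


# Hypothetical repeated trials of one representative task, before and after a vendor change.
trials = {
    "reads_per_edit": ([5.0, 6.0, 5.5, 6.5, 5.0], [3.0, 4.0, 3.5, 3.0, 3.5]),
    "tests_passed":   ([1.0, 1.0, 0.9, 1.0, 0.9], [1.0, 0.9, 0.9, 1.0, 0.9]),
}

for metric, (baseline, current) in trials.items():
    print(
        f"{metric}: {mean(baseline):.2f} (sd {stdev(baseline):.2f}) -> "
        f"{mean(current):.2f} (sd {stdev(current):.2f}), "
        f"regressed={flag_regression(baseline, current)}"
    )
```

In this invented data the read ratio drops far enough to be flagged while the pass rate does not, which is the kind of split a single "it got worse" report cannot express.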

The deeper lesson is that AI products have outgrown simple model branding. A frontier agent is a black box of weights, prompts, tools, budgets, caches, limits, context policies, and recovery behavior. Users used to argue about which model was smarter. Now they need to ask which operating conditions are stable enough to trust.

Claude may still be the right tool for many hard coding tasks. It may even be the best one under high effort and the right session shape. But that is no longer the same as saying "use Opus 4.6." The delivered system matters.

The next time Claude Code gets accused of being nerfed, Anthropic can answer with another denial. Or it can open the box enough for customers to see which dial moved.

Frequently Asked Questions

Did Anthropic secretly nerf Claude Opus 4.6?

The public evidence does not prove a secret model-weight downgrade or deliberate demand-based quality cut. The stronger case is that Claude Code's served product changed through effort defaults, adaptive thinking, cache behavior, context handling, and quota policies.

Why did Claude Code users think the model got worse?

One GitHub issue alleged that Claude Code read fewer files before edits, stopped earlier, looped more often, and required more human correction in complex engineering sessions. That points to a real workflow regression, even if it does not prove a hidden model downgrade.

What is adaptive thinking?

Adaptive thinking lets Claude decide when and how much reasoning to spend. That can reduce latency and cost, but it can misjudge coding turns that look simple before the agent has inspected enough files, tests, or generated outputs.

How does prompt caching affect Claude Code?

Prompt caching determines whether long context is reused cheaply or recreated. A shorter cache duration can be cheaper for one-shot requests but painful for long coding sessions with pauses, large context, and repeated turns.

What should teams using Claude Code do now?

Teams should pin effort settings, record Claude Code versions, keep representative regression tasks, measure files read before edit, track cache behavior, and maintain fallback routes. The goal is to evaluate the delivered system, not just the model name.

IMPLICATOR
