Good Morning from San Francisco,

Brussels just pulled out its secret weapon against Trump: hitting Big Tech's wallet. 💪 The EU threatens to tax US giants like Meta and Google if trade talks collapse.

Von der Leyen means business. She paused counter-tariffs on $23 billion of US goods when Trump backed down, but won't budge on tech regulations. Smart move - US firms control 80% of Europe's digital services market. That's a lot of ad dollars to tax. 🎯

Meanwhile, she's keeping an eye on China. No dumping their surplus here, thank you very much. 🚫

The kicker? Trade wars now run on algorithms, not steel mills. 💻

Stay curious,

Marcus Schuler

EU Threatens Tech Tax in Trump Trade Showdown

The EU is loading its biggest gun yet in the trade war with Trump: a potential tax on US tech giants, reports the Financial Times.

European Commission president Ursula von der Leyen warns she'll target digital advertising revenue from companies like Meta and Google if trade talks fail. The move would mark the first use of the EU's new anti-coercion weapon.

Trump paused his latest tariffs for 90 days. The EU followed suit, freezing its planned retaliation on $23 billion of US goods. But von der Leyen isn't blinking. She wants a "completely balanced" deal - or else.

Brussels knows where to apply pressure. US companies dominate 80% of Europe's digital services market. A tax on their ad revenue would sting.

Von der Leyen dismisses any talk of changing EU tech regulations to appease Washington. Those rules are "untouchable." She's also keeping watch on China, warning Beijing not to dump its tariff-hit goods in Europe.

Why this matters:

- The EU finally found Trump's pressure point: Silicon Valley's profits

- Global trade wars are starting to look less like steel and soybeans, more like clicks and algorithms

Read on, my dear:

- Financial Times: EU could tax Big Tech if Trump trade talks fail, says von der Leyen

AI Photo of the Day

Prompt:

Mickey mouse with golden Cuban chain and cigar in mouth, looked at side view through a doorviewer lens, fisheye lens, in the background streetview, 8K

Inside Apple's Struggle to Keep Up in the AI Race

Apple's ambitious AI plans have crashed into harsh reality, the New York Times reports in a brilliant piece by Tripp Mickle.

The company's attempt to revamp Siri hit major snags, forcing a delay in the virtual assistant's spring release. Internal tests revealed the system botched nearly one-third of requests.

Leadership reshuffles followed the stumble. Software chief Craig Federighi stripped AI head John Giannandrea of Siri responsibilities. Vision Pro leader Mike Rockwell now holds the reins. The shake-up exposes deeper troubles at Apple's core.

Behind the scenes, penny-pinching hobbled progress. When Giannandrea asked CEO Tim Cook for more AI chips, finance chief Luca Maestri slashed the approved budget increase. Apple's aging data centers house just 50,000 GPUs - a fraction of what rivals like Microsoft and Meta deploy.

Cook's hands-off approach to product development hasn't helped. At 64, the operations-focused CEO rarely provides direct guidance on innovation. Meanwhile, newer leaders lack the product development experience of their predecessors.

Why this matters:

- Apple's legendary innovation machine is sputtering just when it needs to sprint in the AI race

- The company that once wrote tech's future now struggles to keep pace with the present

Read on, my dear:

- The New York Times: What’s Wrong With Apple?

- Bloomberg: Why Trump’s Dream of Made-in-the-USA iPhones Isn’t Going to Happen

AI & Tech News

Why Made-in-USA iPhones Won't Happen Soon

The White House wants Apple to build iPhones in America, but there's a slight problem: no US city could match China's "iPhone City," where hundreds of thousands of skilled workers live and breathe iPhone assembly. Apple CEO Tim Cook put it bluntly: in China you could fill football fields with tooling engineers, but in America you might not fill a single room.

Startup That Raised $50M Had Zero Automation, DOJ Says

A founder who claimed his shopping app used AI to handle checkouts got caught using humans instead. The DOJ charged Albert Saniger with fraud after discovering his company Nate, which raised $50 million from top investors, secretly employed hundreds of workers in the Philippines to complete purchases that were supposed to be automated.

OpenAI Adds Chat Memory, Makes AI Feel More Personal

ChatGPT will now remember what you've told it before. OpenAI just rolled out this feature to paid users, letting the AI draw on previous conversations to give more relevant answers - though users in Europe will have to wait due to local regulations.

White House Orders Security Clearance Cuts at SentinelOne Over Krebs Ties

Trump ordered security clearances stripped from SentinelOne employees because the cybersecurity firm employs Chris Krebs, his former cyber chief who refused to back false election fraud claims. The industry's silence speaks volumes - of 36 cyber organizations asked to comment, only one defended the company.

Ex-OpenAI CTO Seeks Record $2B for New AI Startup

Mira Murati wants $2 billion for her new AI company - double what she sought two months ago. The former OpenAI CTO has recruited top talent from her old firm, including several ChatGPT creators, but hasn't revealed what exactly they'll build.

Canva Unleashes AI Army: Coding, Spreadsheets, and Photo Magic

Canva just stuffed its design platform with AI features that let anyone create apps through simple prompts, edit photos with a click, and wrangle data in smart spreadsheets. The move signals a sprint toward AI in creative software, even as artists push back against AI tools. Canva's product chief Cameron Adams insists this isn't about replacing creators but about "changing and adapting" to new tech possibilities.

Google Slashes Federal Software Prices to Snag Microsoft's Turf

Google just offered US federal agencies a 71% discount on its Workspace apps in a bold move to steal market share from Microsoft's iron grip on government software. The deal could save agencies $2 billion if widely adopted, though Microsoft still dominates with 85% of the federal office-software market - for now.

Online Sellers Scramble as China Tariffs Skyrocket Overnight

Trump just cranked up tariffs on Chinese imports to 145% - three dramatic hikes in three days - leaving small online sellers like Gina Castagnozzi and her eco-friendly dog bag business reeling. The snap decisions put American entrepreneurs who source from China in an impossible spot: they can't pivot to US manufacturing fast enough, but they also can't afford tariffs that could turn their next shipment into a money-losing nightmare.
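To put the 145% figure in perspective, here is a back-of-the-envelope sketch in Python. It assumes a simple ad valorem tariff charged on the goods' declared value and ignores shipping, fees and any other duties. The $5.00 unit cost and $12.00 retail price are hypothetical, not Castagnozzi's actual numbers.

```python
from decimal import Decimal

# Minimal sketch: assumes a simple ad valorem tariff on the declared value of
# the goods, ignoring shipping, fees and other duties.
# All prices below are hypothetical, not figures from the article.

def landed_unit_cost(unit_cost: Decimal, tariff_rate: Decimal) -> Decimal:
    """Unit cost plus tariff; tariff_rate is a fraction (1.45 means 145%)."""
    return unit_cost * (1 + tariff_rate)

def unit_margin(retail_price: Decimal, unit_cost: Decimal, tariff_rate: Decimal) -> Decimal:
    """Profit per unit after the tariff; a negative value means each sale loses money."""
    return retail_price - landed_unit_cost(unit_cost, tariff_rate)

UNIT_COST = Decimal("5.00")      # hypothetical factory price per pack of dog bags
RETAIL_PRICE = Decimal("12.00")  # hypothetical selling price per pack

for rate in (Decimal("0"), Decimal("1.45")):
    landed = landed_unit_cost(UNIT_COST, rate)
    margin = unit_margin(RETAIL_PRICE, UNIT_COST, rate)
    print(f"tariff {rate * 100:.0f}%: landed {landed:.2f}, margin {margin:.2f}")
```

With these made-up numbers, a product that earned $7.00 per unit without the tariff loses $0.25 per unit at 145%. `Decimal` is used instead of floats so the money arithmetic comes out exact.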

OpenAI's Race to Market Sparks Safety Concerns

OpenAI has cut its AI safety testing from months to days, reports the Financial Times. The $300 billion startup is racing to beat competitors like Meta and Google, but this sprint carries risks.

For its upcoming o3 model, some testers got less than a week to check for dangers. That's a stark shift from GPT-4, which underwent six months of safety testing before launch. One tester of the earlier model noted they found dangerous capabilities only after two months of probing.

OpenAI defends its approach. The company says automated tests have made the process more efficient. Head of safety systems Johannes Heidecke claims they've found "a good balance" between speed and thoroughness.

But current testers aren't convinced. One person examining the upcoming o3 model warned the rushed timeline could prove "catastrophic." The stakes are rising as these AI models grow more powerful – and potentially more dangerous.

OpenAI has also scaled back its specialized safety testing. While it promised to build custom versions of its models to check for misuse – like creating biological weapons – it's only done limited testing on older, weaker models.

Why this matters:

- OpenAI chose speed over safety just as AI got powerful enough to need extra scrutiny

- The race to market is creating exactly the kind of corner-cutting that safety experts warned about

Read on, my dear:

- Financial Times: OpenAI slashes AI model safety testing time

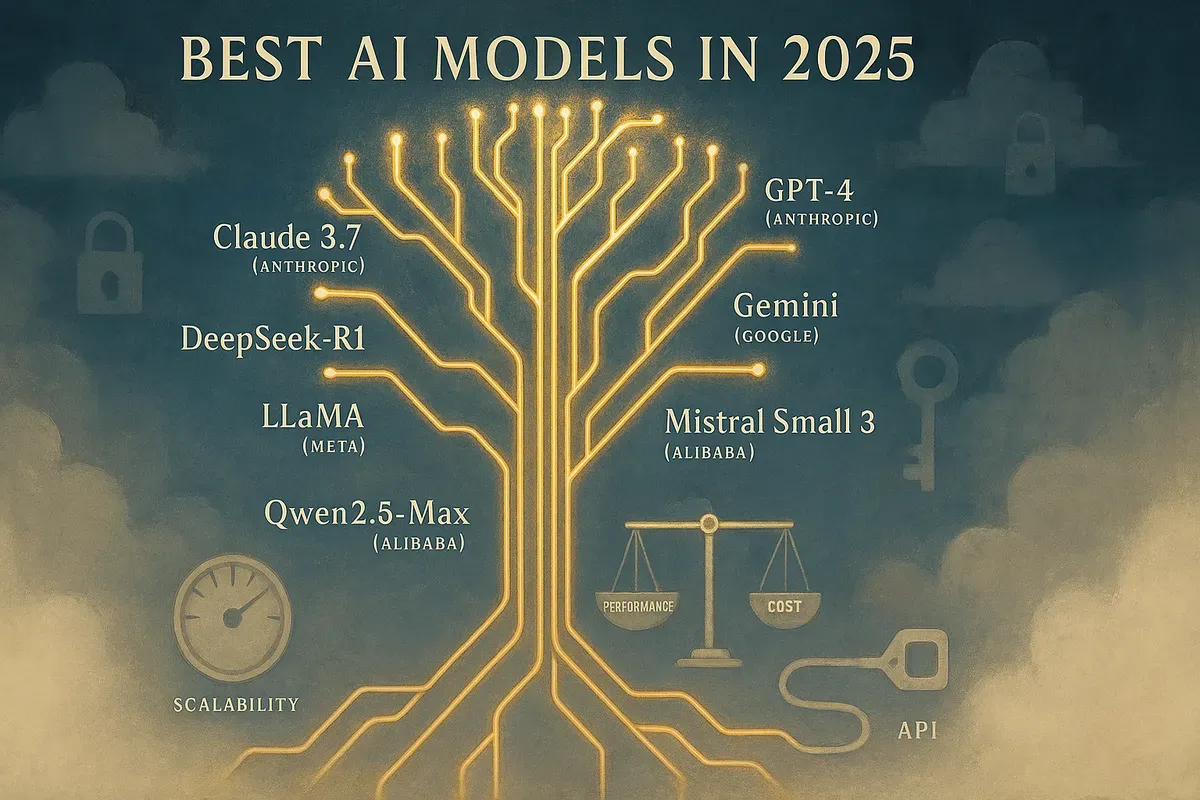

Best AI Models in 2025

Leading AI Models

- OpenAI: GPT-3, GPT-3.5, GPT-4
  - Strengths: Advanced conversation, reasoning, efficient computing, real-time interactions
  - Weaknesses: Requires paid license/subscription

- Anthropic: Claude 3.7 Sonnet
  - Strengths: Strong contextual understanding, human-like interactions, coding abilities
  - Weaknesses: Credit-based subscription, higher enterprise costs

- Google: Gemini
  - Strengths: Large context windows, faster processing, reasoning, multimodal capabilities
  - Weaknesses: Closed-source, potential privacy issues

- DeepSeek: DeepSeek-R1 (open-source)
  - Strengths: Cost-efficient, fast, handles complex tasks, works with enterprise data
  - Weaknesses: Less recognized than alternatives

- Meta: Llama (open-source)
  - Strengths: Multimodal features, improved context window, competitive performance
  - Weaknesses: Higher computational requirements

- Mistral AI: Mistral Small 3 (Apache 2.0 license)
  - Strengths: Low latency, easy deployment, works on limited hardware
  - Weaknesses: Smaller parameter count than competitors

- Alibaba: Qwen2.5-Max (proprietary, API access)
  - Strengths: Enhanced large-scale NLP, low latency, high efficiency
  - Weaknesses: Limited public parameter/token window information

Model Limitations

- Cost considerations

- Data privacy concerns

- Resource requirements

- Access restrictions

- Limited fine-tuning options

Strategic Implementation

- API integration planning

- Scalability assessment

- Responsible AI practices

- Performance monitoring

- Value tracking

- Cost optimization