On Tuesday, Meta announced a multiyear deal with Nvidia worth tens of billions of dollars. Blackwell GPUs and Vera Rubin rack-scale systems, millions of chips flowing into data centers across 26 U.S. sites, with Grace CPUs arriving as standalone deployments for the first time. On Thursday, the New York Times reported that Meta's flagship AI model, code-named Avocado, cannot keep up with Google's Gemini 3.0. The release has been pushed from March to at least May.

Same week. Same company.

The infrastructure tab runs to $600 billion through 2028. This year's share alone could hit $135 billion, roughly double what Meta burned in 2025. Add the $14.3 billion Scale AI deal that installed Alexandr Wang as chief AI officer. And nine months into that bet, the company's AI leadership discussed temporarily licensing Gemini, Google's model, to power Meta's own products.

The company building more AI data centers than anyone on Earth is considering renting someone else's intelligence. That's the tell. $135 billion buys GPUs. It doesn't buy a model that can beat Google's.

The Breakdown

- Meta delayed its Avocado AI model from March to at least May after it failed to match Google's Gemini 3.0 on internal tests.

- Company leadership discussed temporarily licensing Google's Gemini to power Meta's own AI products while Avocado catches up.

- Wang's $14.3 billion hire produced organizational turmoil: LeCun departed, 600 jobs were cut, and the AI organization was restructured multiple times in nine months.

- Meta's $135 billion annual AI spend dwarfs competitors but has yet to produce a frontier-class model.

Money talks. Benchmarks don't.

Meta's AI spending dwarfs every competitor in raw dollar terms. OpenAI raised roughly $13 billion in total venture funding through 2025. Meta plans to spend ten times that in a single calendar year. Anthropic raised around $8 billion. Meta's Scale AI deal alone cost more than either company's total fundraising.

Spending and capability, though, measure different things. Avocado, the model Wang's TBD Lab has been building since late last year, beat Meta's previous Llama 4 and outperformed Google's Gemini 2.5 from March 2025. It could not match Gemini 3.0, shipped in November. In frontier AI, beating last year's models is table stakes. Competitors keep raising the bar. Meta arrived late to a checkpoint that Google cleared four months ago.

The delay from March to May matters less than what caused it. Three sources told the Times that Avocado fell short on internal tests for reasoning, coding, and writing. Not obscure benchmarks. These are the core capabilities that determine whether a model qualifies as frontier-class. Meta's model, backed by the largest AI budget in corporate history, could not clear the bar.

A company that cannot produce competitive frontier models faces a cascading problem. Frontier capability drives developer adoption, which feeds enterprise contracts, which generates the feedback loops that make the next model better. Miss one cycle and you are playing catch-up. Miss two and the gap compounds.

What $14.3 billion actually bought

Wang joined Meta last June after Zuckerberg told him to build superintelligence. He assembled TBD Lab, a group of roughly 100 researchers, and started work on Avocado alongside Mango, an image and video model. The lab adopted a "demo, don't memo" culture. Seventy-hour weeks became the norm.

Nine months later, the output is organizational turmoil.

Yann LeCun, Meta's chief AI scientist and one of the most respected researchers in the field, left the company rather than report to Wang. Chris Cox, the 20-year veteran chief product officer, no longer oversees the AI division. Meta cut 600 jobs from its Superintelligence Labs in October, hitting the Fundamental Artificial Intelligence Research unit hardest. Wang clashed openly with Cox and CTO Andrew Bosworth over whether the new models should feed Meta's advertising machine or compete directly with Google and OpenAI.

The cultural transformation ran deeper than org charts. Wang and Nat Friedman, the former GitHub CEO who runs MSL's product arm, brought startup-style intensity to a company shaped by two decades of consensus-driven product development. The collision has been jarring for longtime employees. Friedman faces his own scrutiny over the September launch of Vibes, an AI video feed that sources described as rushed and inferior to OpenAI's Sora 2. The app shipped without realistic lip-synced audio. Another product, another gap between ambition and delivery.

Last week, Zuckerberg restructured the AI organization yet again. Engineering teams, data pipelines, even development of Avocado and Mango shifted away from Wang's direct control to a new applied AI unit under Maher Saba, a Reality Labs executive.

Meta insists Wang's role is expanding. Zuckerberg posted a selfie with Wang on Threads on Monday, captioned "Meanwhile at Meta HQ." The performative reassurance tells you everything about the anxiety in Menlo Park. When companies feel confident, they ship products. When they feel cornered, they post selfies.

You can't compress institutional knowledge

Here is what the spending narrative misses entirely. Google has been building large language models since its Transformer paper in 2017, iterating through BERT, LaMDA, PaLM, and multiple Gemini generations across eight years of compounding research. Anthropic's founding team left OpenAI in 2021 and carried five years of GPT development experience with them. OpenAI itself has trained frontier models since GPT-1 in 2018.

Meta's Superintelligence Labs opened nine months ago. TBD Lab finished Avocado's pre-training at the end of 2025 and began post-training in January. The target release was mid-March. Eight weeks of post-training before the expected ship date.

If you are building a frontier AI model and your entire post-training window is two months, something is off. Your timeline, your expectations, or both.

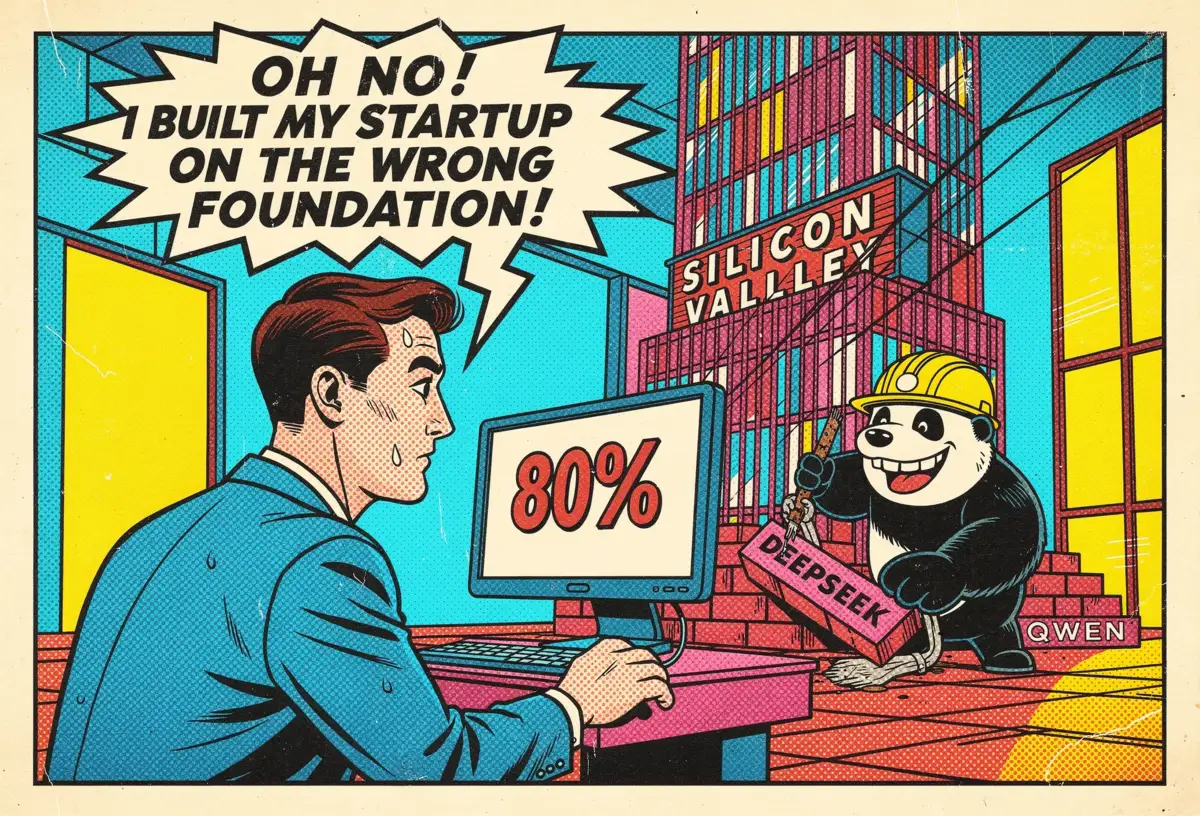

The pattern repeats. Llama 4 launched in April 2025 and disappointed developers. Vibes shipped in September trailing Sora 2. Now Avocado arrives late and underperforms. Each product reflects the same structural problem. Meta has the compute and the budget. What it lacks is the accumulated knowledge of building frontier models through years of failed experiments and hard-won architectural decisions. That knowledge lives in organizational muscle memory, not on a balance sheet.

Consider what Google's eight-year head start actually looks like in practice. Scar tissue from training runs that melted down. Architectural bets that failed quietly and taught the team what not to try next. Post-training insights that only emerged after years of processing real-world queries at scale. Meta can't acquire that history. It can only start accumulating its own.

You can hire individual researchers for record-breaking compensation packages. You can't purchase a decade of compounding institutional learning.

The Gemini conversation changes everything

Delays happen in AI development. Models miss benchmarks. Post-training stretches beyond the timeline. Normal.

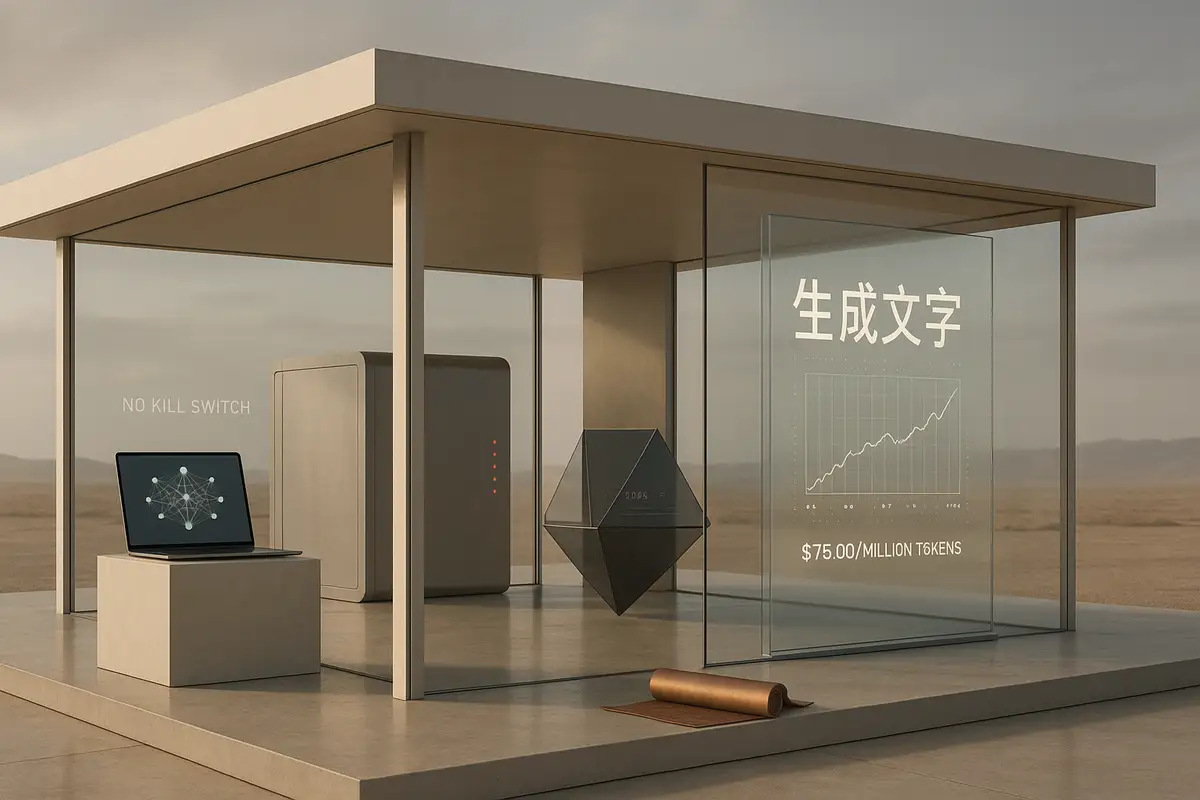

What is not normal: a company spending $135 billion on AI this year discussing whether to license a competitor's model to run its own products.

Meta's AI leadership reportedly explored temporarily licensing Google's Gemini while Avocado catches up. No decision was reached. But the conversation happened.

Think about what that discussion looked like from inside TBD Lab. Engineers who took jobs to build the most advanced AI on Earth sat in a room where leadership floated the idea of buying Google's model instead. A meeting like that sticks with people. Longer than any missed deadline.

Instagram, Facebook, WhatsApp, Threads. Roughly 3.3 billion people open one of those apps every day. If the company's internal models cannot deliver competitive AI features, it faces two options. Ship inferior products or pay Google for the capability Meta was supposed to build in-house. The first costs users. The second costs credibility. Neither plays well on an earnings call.

For a company that spent two years telling investors it would build superintelligence, licensing a rival's model is not a stopgap. It is an admission that the distance between aspiration and execution is wider than Menlo Park wants to say publicly.

Google understands the position this creates. When Nvidia released open AI models the same week reports surfaced about Meta abandoning open source last December, the positioning was deliberate. Companies that cannot build frontier models become customers of companies that can. Meta, the biggest AI spender on Earth, risks becoming the richest customer in the room.

The ROI question gets real

Avocado stalls. Meta keeps writing checks anyway. The Nvidia partnership puts millions of new chips into production. Four generations of Meta's in-house MTIA accelerators are scheduled for deployment through 2027. None of that hardware cares whether Meta has a model worth running on it.

This creates a specific kind of corporate exposure. Meta is constructing the most expensive AI infrastructure in the world on the assumption that its models will eventually justify the cost. Every month Avocado stays below frontier performance, the gap between capital deployed and value created widens.

And there is a deeper strategic cost. Meta built its AI reputation on open-source Llama models. Developers and entire research communities adopted Meta's weights as their default foundation. Good luck buying that kind of developer loyalty back. Meta walked away from open source for Avocado. If the closed model still can't compete, the company loses both bets at once.

Wall Street has noticed the strain. Meta's stock has trailed Alphabet's this year, a reversal from early 2025, when Meta entered the year as the market's favored AI play. "In many ways, Meta has been the opposite of Alphabet, where it entered the year as an AI winner and now faces more questions around investment levels and ROI," KeyBanc analysts wrote. When a company spending $135 billion per year cannot match a model Google shipped four months ago, the ROI question stops being theoretical.

The cadence test

Meta has already code-named its next model Watermelon. The fruit keeps getting bigger even if the models don't.

Zuckerberg's real test is not whether Avocado eventually ships. It will. Models improve through iteration. A May release with competitive benchmarks would quiet the loudest critics for a quarter, maybe two.

What matters is whether Wang's restructured lab can maintain a development cadence that matches Google and OpenAI, both of which ship updated frontier models roughly every three months. Meta needs that rhythm. Not as a one-time sprint but as a repeatable institutional capability built over years of accumulated failure and correction.

Right now, Meta has proven it can spend faster than any AI company in history. It has not yet proven it can build faster. In the frontier model race, only one of those determines whether you end up a competitor or a customer.

Zuckerberg's $14 billion answer is still loading.

Frequently Asked Questions

What is Meta's Avocado AI model?

Avocado is Meta's code-named frontier AI model, developed by the TBD Lab within Meta Superintelligence Labs. Led by chief AI officer Alexandr Wang, the project aims to succeed the Llama model series. Avocado completed pre-training in late 2025 and entered post-training in January 2026, with a planned mid-March release that has since slipped to at least May.

How does Avocado compare to competing AI models?

Avocado outperformed Meta's previous Llama 4 and Google's Gemini 2.5 from March 2025. However, it fell short of Google's Gemini 3.0, released in November 2025, on internal tests for reasoning, coding, and writing. Those gaps prompted Meta to delay the release by at least two months.

Why is Meta considering licensing Google's Gemini?

Meta's AI leadership discussed temporarily licensing Gemini to power the company's AI products while Avocado catches up to frontier performance. With roughly 3.3 billion daily active users across its platforms, Meta needs competitive AI features. No decision has been reached, but the discussion signals how far behind Meta's own models currently sit.

What happened to Meta's open-source AI strategy?

Meta championed open-source AI through its Llama model series, which developers and research communities widely adopted. After Llama 4 disappointed and Chinese lab DeepSeek incorporated Llama architecture into its R1 model, Meta shifted toward closed, proprietary development for Avocado. The move risks alienating the developer community that gave Meta its distribution advantage.

Who is Alexandr Wang and what changed in his role?

Wang, 28, founded Scale AI and joined Meta as chief AI officer in June 2025 after Meta invested $14.3 billion in his startup. He leads Meta Superintelligence Labs and assembled TBD Lab to build Avocado. Recent restructuring shifted some teams away from his direct control to a new applied AI unit under Maher Saba.

IMPLICATOR
