San Francisco | Monday, April 27, 2026

Oakland gets the cleanest AI governance hearing Silicon Valley could not write for itself. Elon Musk wants roughly $150 billion from OpenAI and Microsoft over the nonprofit mission he says got commercialized. Sam Altman arrives with a new five-principle AGI framework, published one day before jury selection, and a concession hiding in plain sight: OpenAI is now big enough that its principles need revision.

The legal question is narrow. The industry question is not. Can a nonprofit mission survive a trillion-dollar compute race, a capped-profit arm, Microsoft contracts, and boardroom memory?

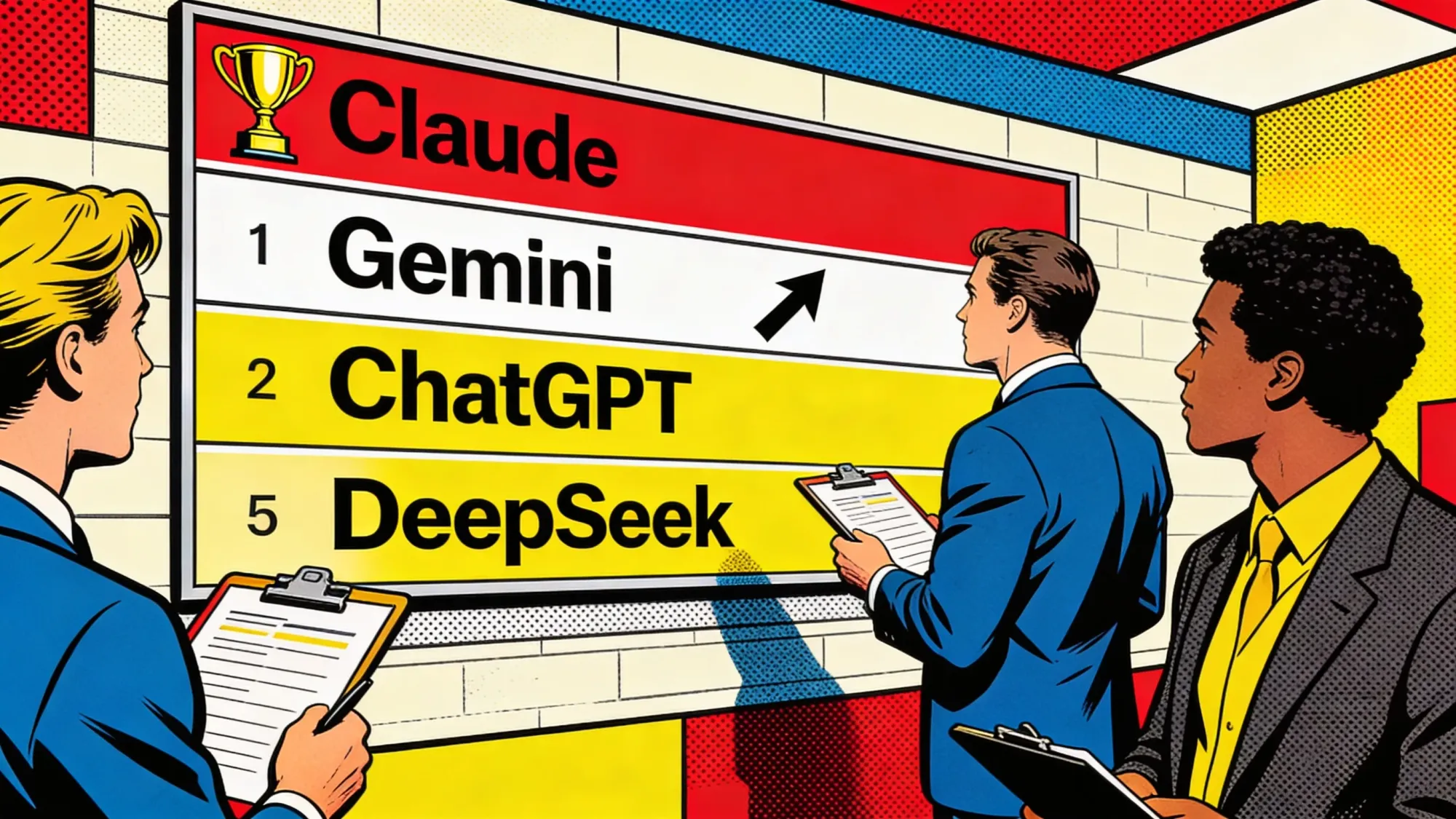

The model race is no calmer. Claude still leads our LLM Meter, but Gemini is closing after Google's enterprise push. Trust is becoming the product spec.

Stay curious,

Marcus Schuler


Musk v. Altman Trial Opens With $150 Billion and OpenAI's Nonprofit on Trial

Jury selection begins today in Oakland in Elon Musk's case against OpenAI, Sam Altman and Microsoft. Two claims remain: breach of charitable trust and unjust enrichment.

The number is theatrical. The structure is not. Musk wants roughly $150 billion disgorged from OpenAI and Microsoft, plus the removal of Altman and Greg Brockman, with any money routed to OpenAI's nonprofit arm rather than to Musk personally. Judge Yvonne Gonzalez Rogers dismissed fraud and constructive fraud counts Friday at Musk's own request, leaving a narrower case about whether OpenAI abandoned the charitable mission that helped attract Musk's early support.

OpenAI's defense has a clean counterpunch. Court filings show Musk explored a for-profit OpenAI structure in 2017 before leaving the board in 2018. The trial therefore asks jurors to weigh not only the nonprofit mission, but who gets to enforce it after the same founder allegedly wanted a different corporate structure when control was still available.

The jury is advisory. Gonzalez Rogers will decide remedies if OpenAI is found liable. That matters because Musk's requested remedy, unwinding the for-profit conversion, is the kind of structural order courts rarely grant. But even a partial loss would land during OpenAI's IPO preparation window and force the company to explain its nonprofit control story under oath.

This is why the case matters beyond Musk and Altman. Every frontier lab is selling trust while buying compute at industrial scale. If the charitable wrapper fails in court, it becomes harder for AI companies to use mission language as cheap capital while operating like hyperscale infrastructure firms.

Why This Matters:

- OpenAI's nonprofit structure is no longer just an investor footnote. It is now trial evidence.

- A narrow ruling could still change how frontier labs describe mission, control and public benefit.

Reality Check

What's confirmed: Jury selection begins April 27 in Oakland. Fraud claims are out. Breach of charitable trust and unjust enrichment remain.

What's implied (not proven): That OpenAI's nonprofit mission has become a legal vulnerability rather than only a governance brand.

What could go wrong: The court treats Musk's requested structural remedy as too remote, leaving only a narrower damages fight.

What to watch next: Opening arguments Tuesday, witness sequencing, and whether Microsoft stays peripheral or becomes central to the charitable-trust claim.

The One Number

$150 billion: The damages Musk is seeking from OpenAI and Microsoft as jury selection begins Monday in Oakland over OpenAI's nonprofit-to-for-profit pivot. The money would go to OpenAI's nonprofit arm, not Musk personally. That turns the case from personal feud into a governance test hanging over OpenAI's planned IPO.

Source: Implicator.ai, April 26, 2026

OpenAI Posts Five Principles for AGI as Altman Rewrites the Mission Around Scale

OpenAI published a five-principle AGI framework Sunday that keeps the 2018 mission language but shifts the company's public case toward access, infrastructure and public oversight.

The timing is hard to miss. One day before jury selection in Musk v. Altman, OpenAI posted a fresh statement of principles for building artificial general intelligence. Altman put democratization first, promised to resist concentration of AI power, and tied universal prosperity to lower AI costs, data centers and compute.

That is not a legal replacement for the 2018 Charter. It is a political document for the scale era. The old Charter framed OpenAI as a research lab that might one day have to stop competing if another safety-conscious project neared AGI first. The new post reads like a company explaining why it needs infrastructure, product access and government coordination to fulfill the same mission.

The strongest line is also the most revealing one: OpenAI says it may need to update its principles as evidence changes. That is sensible for a fast-moving lab. It is also a permission structure. Every future change can now be described as adaptation rather than retreat.

The next pressure point is authority. OpenAI says powerful AI should have public oversight and democratic input, but the post does not define who gets binding power when public preference conflicts with product strategy, investor contracts or national-security demands.

Why This Matters:

- OpenAI is recasting scale as mission fulfillment before regulators, courts and IPO buyers define it differently.

- The next fight is not the wording of the principles. It is who gets to enforce them.

AI Image of the Day

Prompt: naive kindergarten childlike drawings and scribbles, colorful crayon and watercolor textures, playful composition, bright cheerful colors, joyful and imaginative --chaos 15 --ar 3:4 --profile xl9i7bg

LLM Meter Shows Gemini Gains as Claude Slips After Code Postmortem

Claude stayed first in The Implicator's April 26 LLM Meter at 86, but Google's Gemini rose to 84 after Cloud Next and a reported Siri role.

The weekly ranking moved less like a benchmark table and more like an enterprise procurement memo. Anthropic's Claude still leads, but fell two points after the company disclosed three product-layer changes that hurt Claude Code, Claude Agent SDK and Claude Cowork during March and April. Anthropic said the API was not affected and reset usage limits for subscribers, a useful gesture that also confirmed how long customers had been living with the issue.

Gemini gained three points after Google used Cloud Next '26 to push a broader enterprise-agent package: Agent Designer, agent inboxes, long-running agents, Skills, Projects, Agentic Data Cloud and new TPU positioning. The bigger distribution signal was Thomas Kurian's statement that Gemini will power the next generation of Siri. A billion-device assistant surface changes the buyer conversation even before anyone sees renewal data.

OpenAI's ChatGPT rose to 81 after GPT-5.5 shipped across paid ChatGPT tiers, Codex and the API. DeepSeek V4 added price pressure with V4-Pro listed at $1.74 input and $3.48 output per million tokens. The week showed the new scoring reality: model quality still matters, but reliability, distribution, price and procurement risk now move the table.
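To see what per-million-token pricing means for a bill, here is a minimal sketch that applies the V4-Pro prices quoted above. The workload size is a hypothetical assumption chosen for illustration, and the function name `request_cost` is not part of any vendor API.

```python
# Prices quoted in the text for DeepSeek V4-Pro, in US dollars per million tokens.
INPUT_PRICE_PER_M = 1.74
OUTPUT_PRICE_PER_M = 3.48


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the dollar cost of a workload at the listed per-million-token prices."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


# Hypothetical monthly workload: 200M input tokens and 50M output tokens.
monthly = request_cost(200_000_000, 50_000_000)
print(f"${monthly:,.2f}")
```

At those assumed volumes the bill comes to roughly $522 a month, split as $348 for input and $174 for output.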

Why This Matters:

- Enterprise buyers are grading model vendors on operational trust, not only benchmark claims.

- Gemini's distribution gains make the next LLM Meter less about demos and more about default surfaces.

🧰 AI Toolbox

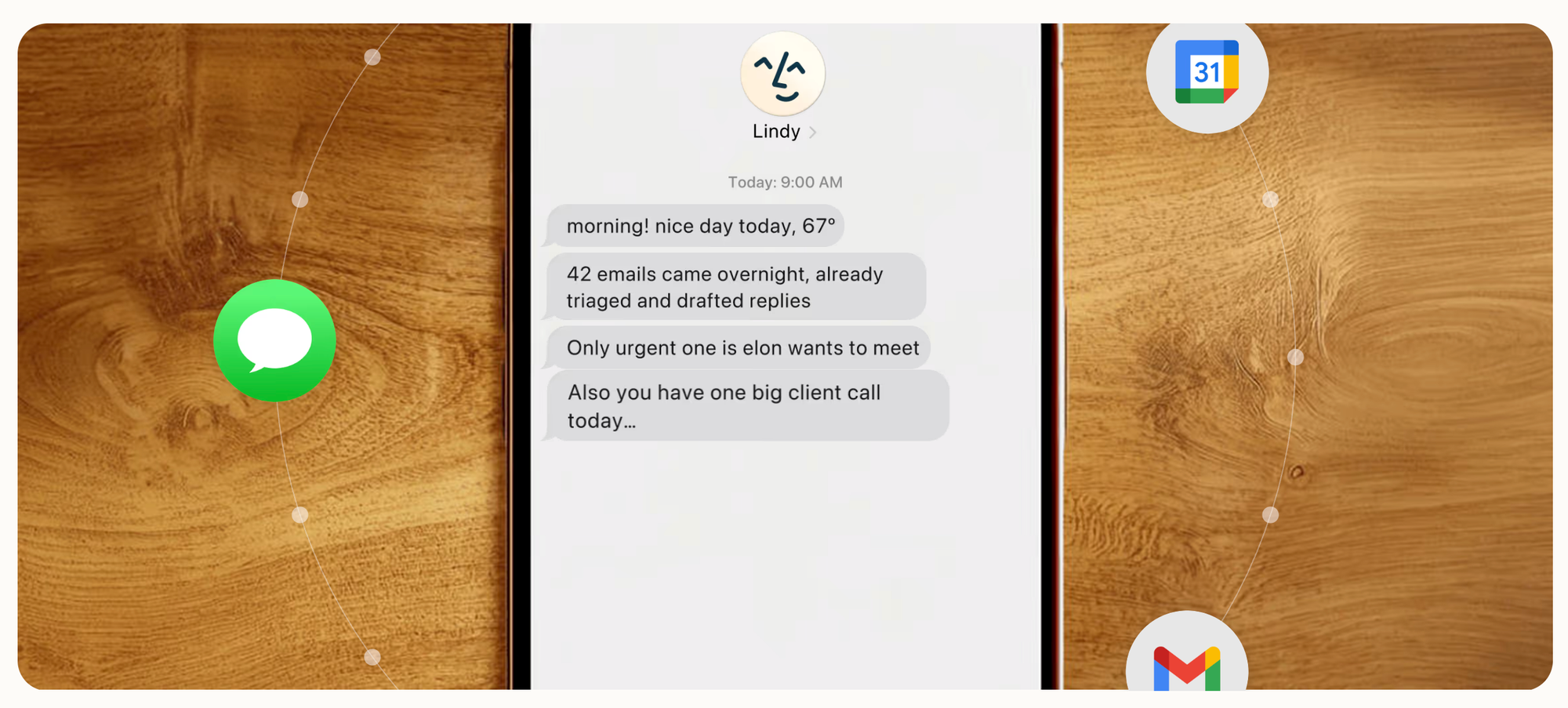

How to Build Custom AI Agents That Run Complex Business Workflows with Lindy

Lindy lets you build AI agents for specific business workflows without writing code. Pick a trigger such as an incoming email, calendar invite, form submission or Slack message, chain steps across 3,000+ integrated apps, and let the agent run autonomously. Agents handle recruiting triage, meeting prep, customer support and sales follow-up around the clock. The no-code editor includes built-in memory, so each agent learns your preferences over time. The free tier includes 400 tasks per month.

Tutorial:

- Sign up free at lindy.ai and create your first agent from the template library or a blank slate

- Pick a trigger: new email in Gmail, new calendar event, form submission, or message in Slack

- Chain steps: read incoming data, call a model to extract information, look something up in a CRM, send a response

- Connect the apps the agent needs to touch, such as Gmail, Google Calendar, Salesforce, Notion or Airtable

- Test the agent in the editor by firing a sample trigger and watching each step execute with logs

- Enable memory so the agent remembers how you handled edge cases and applies the same reasoning next time

- Turn the agent live and monitor a shared dashboard for errors or items needing human review
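The trigger-then-steps pattern in the tutorial can be sketched in plain Python. This is only an illustration of the concept: Lindy is configured in a no-code editor, and every name below (`Agent`, `read_email`, `extract_sender_name`, `draft_reply`) is hypothetical, not Lindy's API.

```python
from dataclasses import dataclass, field
from typing import Callable

# Every name here is hypothetical; Lindy itself is configured without code.
Step = Callable[[dict], dict]


@dataclass
class Agent:
    trigger: str                                    # e.g. "new_email"
    steps: list[Step] = field(default_factory=list)
    log: list[str] = field(default_factory=list)

    def run(self, event: dict) -> dict:
        """Pass the event through each step in order, logging step names as they run."""
        data = dict(event)
        for step in self.steps:
            data = step(data)
            self.log.append(step.__name__)
        return data


def read_email(data: dict) -> dict:
    # Read incoming data: normalize the raw message body.
    return {**data, "text": data["body"].strip()}


def extract_sender_name(data: dict) -> dict:
    # Stand-in for a model call: take the part of the address before "@".
    return {**data, "name": data["from"].split("@")[0]}


def draft_reply(data: dict) -> dict:
    # Send a response: here we only build the reply text.
    return {**data, "reply": f"Hi {data['name']}, thanks for your message."}


agent = Agent(trigger="new_email", steps=[read_email, extract_sender_name, draft_reply])
result = agent.run({"from": "dana@example.com", "body": "  Can we meet Tuesday?  "})
print(result["reply"])
print(agent.log)
```

The per-step log mirrors the tutorial's advice to fire a sample trigger and watch each step execute before turning the agent live.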

URL: lindy.ai


💡 5-Minute Skill

Turn Three Days of Tooth Pain You're Hiding Into a Read on Whether It's a Root Canal

11:48 p.m., Sunday. Day three of a dull throb in your lower right molar that flares when you drink anything cold and now wakes you up around 3 a.m. You have not told your partner. You have not called the dentist. You have, however, opened ChatGPT, because asking a chatbot does not count as admitting it.

Your raw input:

Lower right molar, second from the back, no visible filling. Pain timeline: dull ache started Friday morning, sharp jolt on cold water Friday night, throbbing now wakes me at 3 a.m. and lingers 20 minutes. Hot coffee also hurts as of Sunday. Tapping the tooth with a fingernail: yes, sore. Gum around it: looks normal, no swelling, no bad taste. No fever. Last cleaning eight months ago, one cavity flagged on that tooth and never filled. Ibuprofen 400 mg helps for about three hours.

The prompt:

Based on the symptoms above, give me a calm, non-dramatic read on what's most likely going on, in this order: (1) the two or three most probable causes ranked by fit, (2) the specific symptom that would push it toward root canal versus a still-fixable cavity, (3) what counts as urgent versus "Monday morning is fine," (4) the exact thing to say when I call the dentist so I get seen this week and not in three. Plain language. No "consult a professional" disclaimer paragraph. I know.

The output:

Most likely: deep cavity now reaching the nerve, reversible pulpitis if cold pain stops within seconds, irreversible if it lingers 20+ minutes. Yours lingers. Less likely: cracked tooth. The 3 a.m. throbbing and lingering cold pain are the root-canal-leaning signs; the unfilled cavity from eight months ago is the probable culprit. Not an ER tonight, since there is no fever and no swelling. Call first thing Monday and say: "I have lingering pain to cold and spontaneous night pain in tooth #30, started Friday, getting worse. I need an emergency exam this week, not a routine slot."

Why this works:

The fear isn't the dentist. It's the not-knowing, which your brain inflates into worst-case at 3 a.m. A structured prompt forces the model to separate "probably a filling" from "probably a root canal" using the one symptom that actually distinguishes them, how long cold pain lingers. And the script at the end solves the real blocker: front-desk staff triage by the words you use. "Sensitive tooth" gets you a slot in three weeks. "Lingering pain to cold and spontaneous night pain" gets you in Tuesday.

What to use:

ChatGPT. It is less hedge-y than Claude on personal medical questions and will give you the ranked-likelihood read without four paragraphs of "I am not a doctor." Both will be wrong about 10 percent of the time, which is fine, because you are not asking for a diagnosis. You are asking for the right words to get an appointment and the right threshold for "go to the ER tonight." Do not let the chatbot replace the dentist. Let it replace the 3 a.m. spiral that has been keeping you from calling one.

📖 AI Alphabet

R

RAG

RAG stands for retrieval-augmented generation. It means the model first pulls in outside information, then uses that material to produce a better-grounded answer.
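The retrieve-then-generate idea can be shown with a toy sketch. It assumes a tiny in-memory document list and ranks by simple word overlap; production systems typically use a search index or vector store instead, and the final prompt would be sent to a model, a step left out here. The names `DOCUMENTS`, `retrieve` and `build_prompt` are illustrative.

```python
import re

# Hypothetical in-memory knowledge base; a real system would use a search index or vector store.
DOCUMENTS = [
    "Jury selection in Musk v. Altman begins April 27 in Oakland.",
    "Gemini rose to 84 on the LLM Meter after Cloud Next.",
    "ASML plans to ship at least 60 EUV machines in 2026.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents that share the most words with the question."""
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, documents: list[str]) -> str:
    """Put the retrieved text ahead of the question so the model can ground its answer."""
    context = "\n".join(retrieve(question, documents))
    return f"Use only this context:\n{context}\n\nQuestion: {question}"


prompt = build_prompt("How many EUV machines does ASML plan to ship?", DOCUMENTS)
print(prompt)
```

The retrieval step picks the ASML sentence, so the model sees the relevant fact before it answers.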

AI & Tech News

Google Now Controls Roughly 25% of Global AI Compute Capacity

Google operates about 3.8 million TPUs and 1.3 million GPUs, giving it roughly a quarter of the world's AI compute, the Financial Times reported citing Epoch AI data. Cloud chief Thomas Kurian said current demand and revenue justify the build-out and position Google to take share against AWS and Azure.

Amateur Mathematician Solves 60-Year-Old Erdős Problem With GPT-5.4

An amateur researcher used a single prompt to GPT-5.4 Pro to produce a novel proof for a long-standing Erdős conjecture in combinatorics, Scientific American reported. Fields Medalist Terence Tao called it a nice achievement but said the long-term significance for mathematical practice is still unclear.

San Francisco Boutique Becomes First Store Run Entirely by an AI Agent

Andon Market, a pop-up boutique in San Francisco, says it is the first retail store fully managed by an AI agent built on Anthropic's Claude Sonnet 4.6, the New York Times reported. Andon Labs says the agent handles inventory, pricing, customer service and store layout, with humans kept on for safety and oversight.

Palantir Staff Raise Civil Liberties Concerns Over ICE and DOD Work

Internal Slack logs and staff interviews obtained by Ars Technica show Palantir employees questioning the company's civil liberties posture months into Trump's second term, particularly around ICE and Department of Defense contracts, Techmeme summarized. The debate centers on Palantir's manifesto and how its government portfolio fits the new administration.

Tech CEOs Add Visible Security as AI Backlash Grows

Tech leaders including NVIDIA's Jensen Huang and OpenAI's Sam Altman are adding armed personal security and tighter communications protocols in response to rising public hostility, The Information reported. The shift follows protests, online threats and a broader debate over AI risk and accountability.

Cyera Agrees to Buy Israeli AI Data-Governance Startup Ryft

Cyera will acquire Ryft, an Israeli startup that automates data access and governance for enterprise AI deployments, in a deal valued between $100 million and $130 million, Calcalist reported. Ryft, founded in 2024 with $8 million in prior funding, focuses on data security, compliance and lineage for AI systems.

ASML Plans 60 EUV Machines in 2026 to Feed AI Chip Demand

ASML plans to ship at least 60 standard extreme ultraviolet lithography machines this year, a 36% jump over 2025 sales, the Wall Street Journal reported. As the only supplier of EUV systems, ASML is the choke point for the most advanced AI and high-performance computing chips.

Genki Robotics Hits $1 Billion Valuation in Series A

Tokyo-based humanoid startup Genki Robotics, co-founded by Android creator Andy Rubin, closed a Series A at a roughly $1 billion valuation, Axios reported. The deal follows a $50 million seed in 2025 and underscores investor appetite for humanoid platforms.

Tech Founder Builds AI "Virtual Body Double" to Outsource Daily Life

Entrepreneur Bill Nguyen is using a custom AI assistant to outsource scheduling, communication and other day-to-day decisions in an attempt to build a digital twin that can act on his behalf, Semafor reported. Nguyen frames the project as both an experiment and a stress test for how far personal AI delegation can go.

Tokyo Electron Veteran Exits After Family China Stake Disclosure

Jay Chen, who led Tokyo Electron's China business, has left the Japanese chip-equipment maker after the company found his family held investments in Chinese semiconductor competitors, the Financial Times reported. The departure reflects rising corporate sensitivity to conflicts of interest in chip supply chains.

🚀 AI Profiles: The Companies Defining Tomorrow

Strider Technologies builds a private-sector intelligence platform that maps state actors, supply chain risk, IP theft and insider threats. The Utah company sells what spy agencies do for nation states, and just shipped its first agentic AI capability as Trump's China crackdown reshapes the buyer base.

Founders

Founded by twin brothers Greg and Eric Levesque. Greg is CEO and based in Sandy, Utah. Eric is president and runs the international footprint from London. The team has spent nearly a decade building what Greg calls "a digital twin of the industrial world down to the person level," staffed by a growing roster of former international intelligence officials.

Product

Strider ingests billions of public documents, corporate registries, trade records and foreign-language filings, then maps relationships between people, suppliers, technologies and front companies. Customers query the dataset for counterespionage signals, not automated conclusions. A live demo on US chip diversion to Russian and Iranian drone programs returned roughly 33,000 shipments worth $240 million in two minutes for under $20 in compute. Strider runs a "zero-touch" model and does not store customer data.

Competition

Sayari, Datenna and Exiger compete on supply chain risk. Recorded Future and Lexis cover IP theft. Strider does both plus strategic intelligence, which Pelion Ventures partner Blake Modersitzki argues is hard to replicate. The bigger risk is government intelligence agencies that historically held this work behind classified mandates and could push back as private platforms move closer to their turf.

Financing

Series C closed in September 2024 at a $450 million post-money valuation. Greg Levesque says revenue has nearly tripled since and the company is exploring additional investment. Strider already holds small US Air Force contracts worth more than $8 million, works with state governments and NATO, and counts eight of the 10 largest Fortune 500 companies among its customers across 16 countries.

Future ⭐⭐⭐

The expanding US-China decoupling, state-level land bans and corporate fear of insider risk all push the buyer pool wider. The harder question is whether agentic AI on open-source intelligence can deliver reliable, actionable analysis at enterprise scale without privacy backlash or a high-profile false positive. If Strider clears that bar, counterespionage as a service stops being a curiosity and becomes a durable category.

🤨 Yeah, But...

Jury selection in Musk v. Altman begins today in Oakland, with Elon Musk seeking roughly $150 billion from OpenAI and Microsoft over OpenAI's shift from nonprofit research lab to for-profit business.

Judge Yvonne Gonzalez Rogers dismissed Musk's fraud and constructive fraud counts Friday at Musk's request, leaving breach of charitable trust and unjust enrichment; court filings also say Musk explored a for-profit OpenAI structure in 2017 before leaving the board. (Implicator.ai, April 26, 2026)

Our take: The role reversal is doing most of the legal work here. Musk has arrived as the guardian of charitable purity, carrying a damages request large enough to buy several actual charities, while OpenAI gets to point at his old paperwork and ask whether sainthood usually comes with a Delaware entity.

This is Silicon Valley theology now: everyone believes in the mission until the cap table appears, then rediscovers principle when someone else's cap table gets bigger. The jury is advisory, the judge decides remedies, and the public charity is somehow both the wounded party and the prize hamper. Oakland has hosted stranger proceedings, but not many with this much grant-funding cosplay.

IMPLICATOR
