San Francisco | Friday, April 17, 2026

Anthropic shipped Opus 4.7 on Thursday with harder cyber safeguards and a sibling model, Mythos, it refused to release. Pricing held at $5/$25 per million tokens while Gemini 3.1 Pro charges $2/$12 and GPT-5.4 sits at $2.50/$15. Coding jumped 11 points on SWE-bench Verified. The gap is wider than the lead.

Chinese labs that gave their best models away last year finally found the money. Zhipu raised API prices 83% and call volume rose 400%. Tencent Hunyuan API usage quadrupled. Agents, not chatbots, opened the wallet.

A Reddit sub-skill called "Caveman Claude Code" promises 65% output savings. The invoice barely moves. Most tokens were never on screen to begin with.

Stay curious,

Marcus Schuler

Know someone drowning in AI noise? Forward this briefing. They can subscribe free here.

Anthropic Ships Opus 4.7 Broadly, Keeps Sibling Model Mythos Behind Cyber Safeguards

Anthropic released Claude Opus 4.7 on Thursday with tighter cyber safeguards and a new ASL-3 uplift evaluation. A sibling model called Mythos stayed inside the lab.

Opus 4.7 posted 87.6% on SWE-bench Verified, 64.3% on SWE-bench Pro, and 69.4% on Terminal-Bench 2.0. Anthropic held pricing at $5 per million input tokens and $25 output. Gemini 3.1 Pro charges $2 and $12. GPT-5.4 sits at $2.50 and $15.
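To make the price gap concrete, here is a rough sketch that applies the list prices quoted above to one job. The workload size (2 million input tokens, 500,000 output tokens) is a made-up assumption for illustration, and the calculation ignores caching discounts, batch pricing, and any difference in how many tokens each model needs for the same task.

```python
# (input, output) list prices in USD per million tokens, as quoted above.
PRICES = {
    "Opus 4.7": (5.00, 25.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
    "GPT-5.4": (2.50, 15.00),
}


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """List-price cost in USD for one job, with no caching or batch discounts."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload: 2M input tokens and 500k output tokens.
for model in PRICES:
    print(f"{model}: ${job_cost(model, 2_000_000, 500_000):.2f}")
# Opus 4.7: $22.50
# Gemini 3.1 Pro: $10.00
# GPT-5.4: $12.50
```

On this assumed mix, Opus 4.7 costs a little over twice as much as either rival at list price. A different input-to-output ratio shifts the multiple somewhat but not the ordering.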

The company framed the dual release as an "airlock." Opus ships to everyone. Mythos, more capable on offensive cyber tasks, is restricted to a Cyber Verification Program for vetted security professionals. UK AISI reported Mythos completes a cyber range in 3 out of 10 attempts, averaging 22 of 32 steps.

Opus 4.7 improved visual acuity to a 2,576-pixel ceiling at 3.75 megapixels, up from 1,568 pixels at 1.15 megapixels. On XBOW's visual-acuity test it scored 98.5%, up from 54.5% for Opus 4.6.

Why This Matters:

- Anthropic is now the only frontier lab publicly gating a model on cyber-uplift grounds, a precedent regulators in the UK and US will cite in coming months.

- Developers get the capability gains without the full capability. The Cyber Verification Program serves as a test case for whether tiered access works outside classified settings.

Reality Check

What's confirmed: Opus 4.7 shipped at $5/$25 per million tokens with 87.6% SWE-bench Verified. Mythos stays inside a Cyber Verification Program.

What's implied (not proven): That gated access will hold once rivals match Mythos-level capability and face the same choice.

What could go wrong: A leaked weights incident or a competitor releasing equivalent capability openly would collapse the airlock framing overnight.

What to watch next: Whether OpenAI or Google adopt a similar gated tier, or whether open-weights releases from China force a race to the bottom.

The One Number

58% — TSMC's Q1 2026 net profit jump to a record NT$572.48 billion, beating the NT$543.32 billion consensus on revenue of $35 billion. Gross margin hit 66.2%, blowing past the 63-65% guidance range and signaling pricing power the market has not seen from a foundry in this cycle.

CEO C.C. Wei called AI chip demand exceptionally strong and raised full-year 2026 revenue growth guidance to more than 30% in US dollar terms, with a Q2 guide of $39 billion to $40.2 billion implying roughly 45% year-over-year growth at the midpoint. Advanced nodes now generate 61% of revenue, with 3-nanometer alone at 25% despite its early lifecycle stage.

The AI capex thesis just got its strongest Q1 confirmation. The only drag is the slow pace of new fab construction, which caps upside into 2027.

Source: CNBC, April 16, 2026

China Turns Free AI Models Into a Business as Agents Drive 400% Surge in API Use

Chinese AI labs that gave their best models away in 2025 are booking real revenue in 2026 as agents, not chatbots, pull API usage up by an order of magnitude.

Zhipu raised API prices 83% and still grew call volume 400%, according to company data cited this week. Tencent Hunyuan API usage quadrupled. Moonshot's Kimi K2.5 generated more revenue in 20 days than the lab earned in all of 2025. Alibaba reorganized around Eddie Wu's mandate to "create, deliver, apply tokens," and MiniMax moved its M2.7 model to a paid-only commercial license. Daily tokens processed across Chinese labs rose from 100 billion in 2024 to 140 trillion in March 2026.

Revenue is still small relative to infrastructure burn. MiniMax reported a $250 million loss on $79 million in revenue. Zhipu lost $680 million on $104.8 million. Alibaba's Joe Tsai said in November his company does not make money from AI. Agentic workloads, which chain dozens of API calls per task, are what finally closed the gap.

Why This Matters:

- The open-source giveaway that worried US policymakers has become a distribution channel. Huizenga's Deterring American AI Model Theft Act moves through a Congress that watched China monetize the same playbook it warned about.

- DeepSeek's next model is rumored to land this month. If it lands at GPT-5.4 level on agent benchmarks and ships at Chinese prices, the pricing premium Opus 4.7 just set becomes the harder sell this summer.

AI Image of the Day

Prompt: fairy child, princess, beautiful, big blue eyes curly, curly, curly long hair she is standing in a garden filled with flowers. She is wearing Bohemian calming has flowers in her hair. They are hearts everywhere Reiki healing life. This little light of mine. I'm gonna make a SHEIN happy happy happy childhood illustration acrylic watercolor.

Reddit's Caveman Claude Code skill cuts output tokens, barely touches the bill

A Reddit sub-skill called Caveman Claude Code went viral this week claiming 65% to 75% output token reduction. The actual invoice savings are closer to 1% to 2%.

The skill instructs Claude Code to answer in clipped, caveman-style prose. Output tokens do drop sharply. The problem is arithmetic. In a normal Claude Code session, visible output is 2% of total tokens. Cached input is 71%, uncached input is 23%, and thinking is 4%. Cutting output by 65% saves about 1.3% of the total. One Reddit user posted a $1 saving on a $100 bill and called it a win.
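The arithmetic is easy to check. The sketch below uses only the token shares quoted above; the function name is ours. It counts tokens, not dollars: the invoice effect also depends on the per-token price of each category, which is not broken out here.

```python
# Token shares for a normal Claude Code session, as quoted above.
TOKEN_SHARES = {
    "cached_input": 0.71,
    "uncached_input": 0.23,
    "thinking": 0.04,
    "visible_output": 0.02,
}


def total_token_reduction(shares: dict, category: str, cut: float) -> float:
    """Fraction of all session tokens removed when one category shrinks by `cut`."""
    return shares[category] * cut


# The viral claim: 65% to 75% fewer output tokens.
for cut in (0.65, 0.75):
    saved = total_token_reduction(TOKEN_SHARES, "visible_output", cut)
    print(f"{cut:.0%} less output -> {saved:.1%} fewer total tokens")
# 65% less output -> 1.3% fewer total tokens
# 75% less output -> 1.5% fewer total tokens
```

Run the same function on `cached_input` or `uncached_input` and even a modest cut dwarfs the output saving, which is why the context-side levers matter more.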

A sibling skill in the same repo, caveman-compress, targets the memory file itself and cuts 46% off long-running context. That one actually compounds. The broader lesson: the levers that matter are prompt caching, scoped file reads, and capped tool output, not prose style.

Why This Matters:

- Viral claims about AI cost savings rarely survive a pie chart. Teams chasing the Caveman headline will reach Q2 reviews with the same bill and a pile of unreadable AI outputs.

- The useful compression target is context, not output. Expect more sub-skills this quarter aimed at caching strategy, file scoping, and tool output trimming, the parts of the token budget that are actually 70% of the invoice.

🧰 AI Toolbox

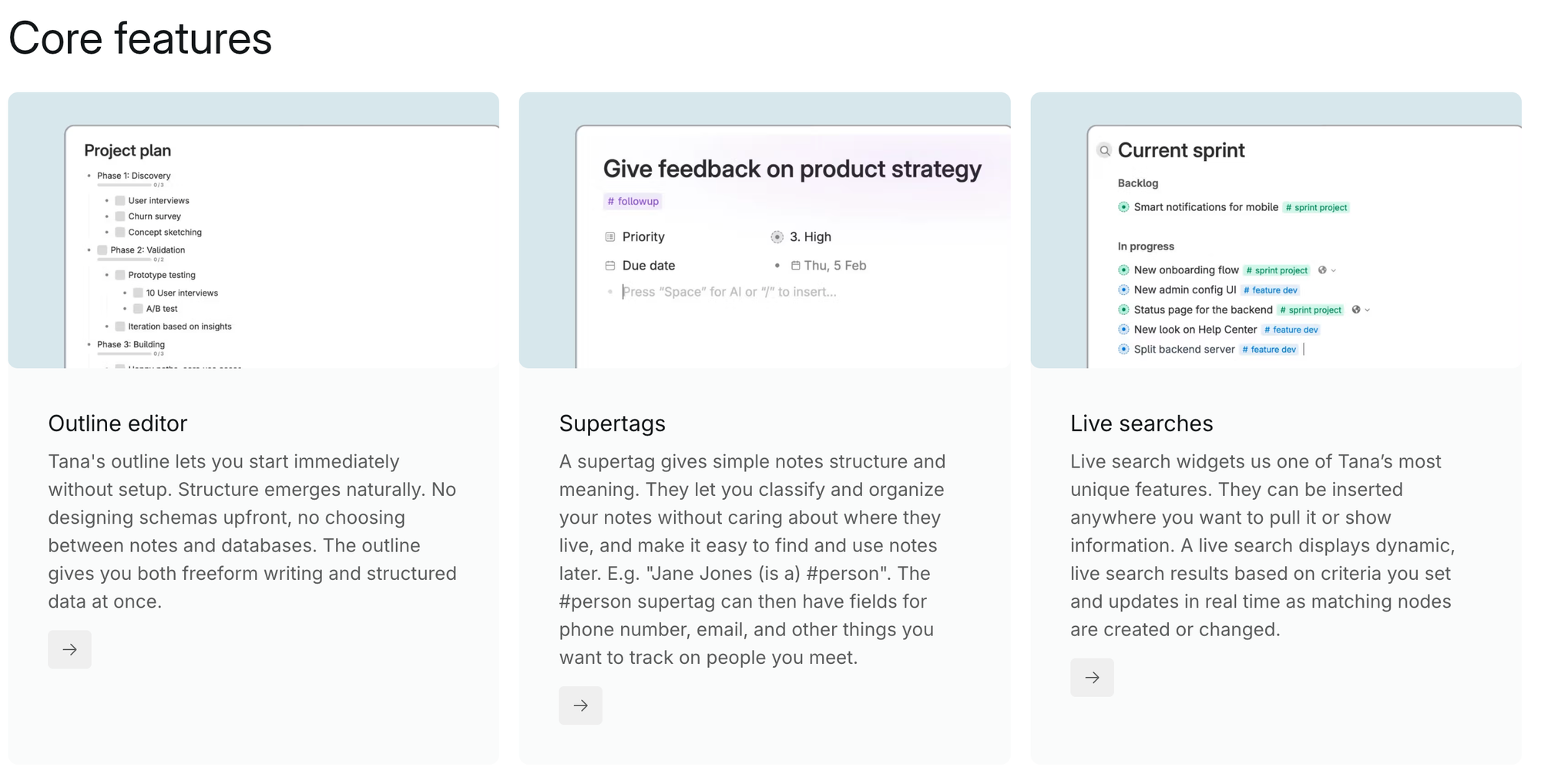

How to Turn Meetings into Structured Actions and a Self-Building Knowledge Graph with Tana

Tana sends an AI agent into your video calls that does more than transcribe. It extracts action items, decisions, and key entities, then links everything to a shared knowledge graph that grows with every conversation. Ask a question six months later and Tana surfaces the meeting where the decision was made, who was there, and what happened next. The graph compounds automatically, so context that took hours to reconstruct now takes seconds. Free tier available with 500 AI credits per month.

Tutorial:

- Sign up free at outliner.tana.inc and connect your Google Calendar

- Open an upcoming meeting and click "Add Meeting Agent" to attach the AI bot

- Start the call on Zoom, Google Meet, or Microsoft Teams. The Tana agent joins automatically, transcribes with speaker labels, and works in over 60 languages

- After the call, open Tana to find structured notes with extracted action items, decisions, and tagged participants

- Click any person or project name to see every meeting where they appeared, with linked context and prior decisions

- Route action items to external tools: send tasks to Linear, post summaries to Slack, or create GitHub issues directly from the meeting output

- Run a natural language query across all past meetings: "What did we decide about the pricing model in March?" and get a sourced answer from the graph

URL: outliner.tana.inc


🛠️ 5-Minute Skill: Turn Six Streaming Apps Into "Watch This Tonight"

It is Friday, 8:47 PM. You, your partner, and a glass of wine. Netflix, Max, Apple TV+, Prime, Disney+, Hulu. Thirty minutes of scrolling, nothing picked, the wine is gone, and you are annoyed at each other for no reason.

Your raw input:

Screenshots of the home screens from Netflix, Max, Apple TV+, Prime Video. Two people watching. Mood: brain is cooked, do not want to think, no subtitles, under two hours, nothing about work or dystopian tech. Last thing we finished: Severance season 2. We bounced off The Bear and anything Scandinavian.

The prompt:

Pick one thing to watch tonight from these home screens. One pick only, not a shortlist. One sentence on why it fits the mood, one sentence on what we will give up by picking it. No trailers, no runtime disclaimers, no "you might also like." Decide.

The output:

Watch: Paddington in Peru on Prime.

Why it fits: Ninety minutes, zero cognitive load, actually funny for adults, and nobody has to track a timeline.

What you give up: You are not going to text friends about it Monday. That is the point.

Why this works:

Streaming-app home screens are engineered to make you browse, not decide. The prompt strips the choice architecture: one answer, one reason, one tradeoff. You either accept the pick or override it, and either way the scrolling is over.

What to use:

Gemini 3 Pro or Claude read multi-app home-screen screenshots best, pulling actual titles from the tile art. ChatGPT is fine if you type the short list yourself. Paste the mood and the last thing you finished underneath, run the prompt, press play.

📖 AI Alphabet

I: Inference

Inference is the moment a trained model is actually used to generate an answer, prediction, or action. Training builds the system; inference is when the system goes to work.

AI & Tech News

Anthropic CEO Walks into the White House as Mythos Deal Brews

Dario Amodei is meeting White House chief of staff Susie Wiles on Friday, the clearest sign yet that the administration wants access to Anthropic's Mythos model despite the Pentagon blacklist and Anthropic's active lawsuit. Parts of the intelligence community and CISA are already testing Mythos, Treasury wants in, and a source close to the talks warned that freezing out the new model would be "a gift to China."

OpenAI Commits Over $20B to Cerebras Chips and Takes Equity Stake

OpenAI will spend more than twenty billion dollars on Cerebras Wafer-Scale Engine servers, double the previously reported figure, and may receive an equity stake in return. The deal signals a serious push to diversify beyond Nvidia as compute bills climb.

Cerebras Files for IPO at $35B+ Valuation, Targets $3B Raise

The AI chipmaker plans to file as early as Friday, seeking over three billion dollars at a valuation exceeding thirty-five billion, a sixty percent jump from its February mark. Demand for Wafer-Scale Engine hardware is pulling the company onto public markets ahead of schedule.

SpaceX Pulls Employee Share Vesting Forward Ahead of Potential IPO

Elon Musk's rocket company moved the vesting date for staff-awarded shares from May into next week, clearing the way for an earlier liquidity window. The timing change lines up with mounting IPO preparation signals, though SpaceX has not formally committed.

Alibaba Releases Qwen3.6-35B-A3B, an Open-Weight MoE Built for Coding Agents

The new Qwen model carries thirty-five billion total parameters with just three billion active per inference, hitting dense-model performance on agentic coding benchmarks at a fraction of the compute cost. Weights are live on Hugging Face and ModelScope for commercial and research use.

China Opens Multi-Agency Probe into Meta's $2B Manus Acquisition

SAMR and the Cyberspace Administration are now reviewing Meta's Manus deal on national security grounds, with some officials privately warning the crackdown risks freezing foreign investment. The signal matters: Beijing is tightening the screws on cross-border AI M&A.

Google Rolls Out Side-by-Side AI Mode in Chrome Desktop

Chrome users can now open web pages next to the AI interface for real-time context, plus search across multiple open tabs on desktop and mobile. It is Google's most aggressive push yet to fold Gemini-powered search into everyday browsing.

Mozilla Launches Thunderbolt, an Open-Source Client for Self-Hosted AI

The Thunderbolt release targets enterprises and individuals who want local models without cloud dependencies, with full source available on GitHub. Mozilla is staking out privacy-first territory as the commercial AI stack consolidates.

Roblox Pays $12.5M and Rolls Out Universal Age Checks in Nevada Settlement

Nevada extracted mandatory age verification for every Roblox user after claiming the platform failed to shield minors from predators and harmful content. The settlement sets a template other state attorneys general are likely to copy.

OnlyFans in Talks to Sell Minority Stake at $3B, Down from $5.5B

Architect Capital is negotiating under twenty percent of the platform at a valuation cut nearly in half from last year's figure, following founder Leonid Radvinsky's sudden death. The haircut reflects governance uncertainty, not user decline.

Manycore Tech Jumps 187% on Hong Kong Debut After Robotics Pivot

The Hangzhou firm, led by a former Nvidia executive, raised one hundred fifty-six million dollars and surged on day one as investors bet on its pivot from real estate software to AI training data for robotics manufacturers. China's humanoid and industrial robot boom is feeding a new data-supplier tier.

🚀 AI Profiles: The Companies Defining Tomorrow

Thinking Machines Lab is the year-old AI startup from former OpenAI CTO Mira Murati, shipping fine-tuning infrastructure while quietly building its own frontier models. 🧠

Founders

Mira Murati founded Thinking Machines Lab in February 2025 after leaving OpenAI, where she served as chief technology officer through the GPT-4 launch and the November 2023 board crisis. Chief scientist John Schulman, co-founder of OpenAI and co-creator of ChatGPT, joined alongside roughly thirty researchers and engineers pulled from OpenAI, Meta AI, and Mistral. Headquartered in San Francisco, the company has grown past one hundred employees in under fourteen months.

Product

Tinker, launched October 2025, is an API that lets researchers and developers fine-tune open-weight language models without managing GPU clusters or distributed training infrastructure. The product gives engineers control over algorithms and data while abstracting the compute layer. Schulman told Cursor's podcast that Thinking Machines plans to release its own proprietary models in 2026 and extend Tinker with multimodal capabilities. The strategy is unusual: ship infrastructure first, build the foundation model in public view through the same pipeline customers already use.

Competition

Frontier-lab peers OpenAI, Anthropic, Google DeepMind, and xAI compete on the model side. On the fine-tuning and inference infrastructure side, Together AI, Fireworks, and Modal hold the developer mindshare. Thinking Machines' differentiation rests on research pedigree and the bet that fine-tuning access, not just base model quality, becomes the enterprise lock-in layer.

Financing 💰

$2 billion seed round in July 2025 at a $12 billion valuation, led by Andreessen Horowitz with Nvidia, AMD, Cisco, and Jane Street participating. Bloomberg reports the company is in talks for a new round at roughly $50 billion, a four-fold markup in under a year.

Future ⭐⭐⭐⭐

If the 2026 model launch lands near the frontier and Tinker keeps compounding developer distribution, Thinking Machines clears the bar to become the fourth name in the serious frontier conversation. The risk is execution speed in a market where OpenAI and Anthropic ship monthly and the capital raised at $50 billion buys a lot less compute than it did in 2023. 🧠

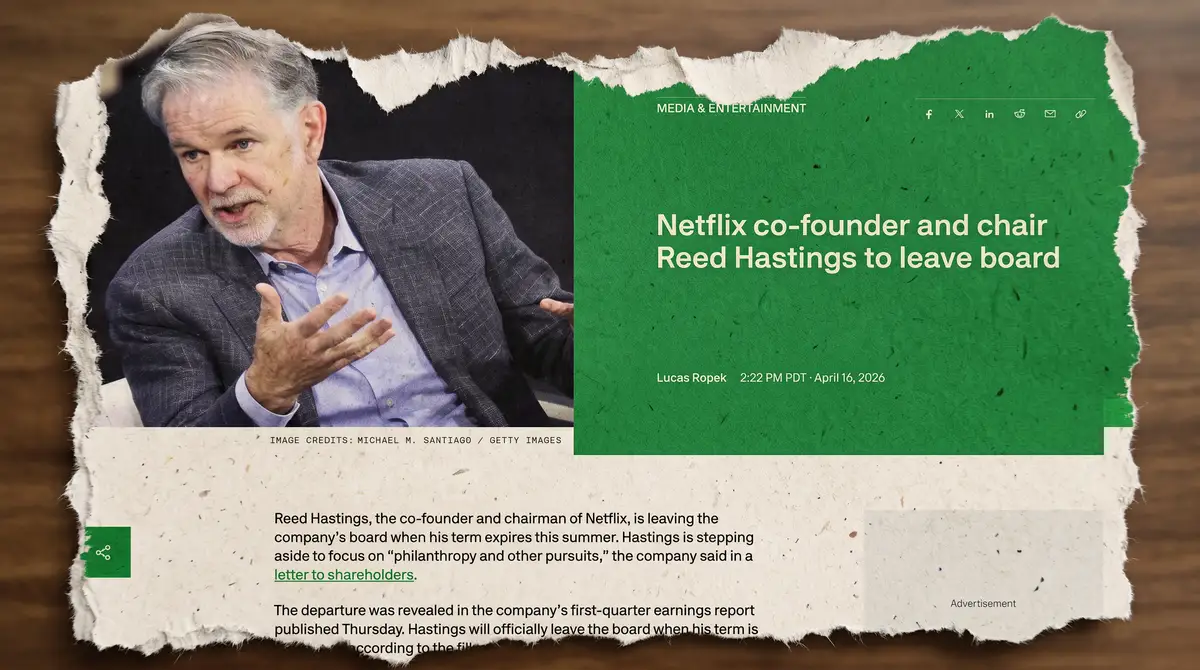

🔥 Yeah, But...

Netflix posted Q1 2026 revenue of $12.25 billion, up 16% year-over-year, with net profit jumping 82% to $5.28 billion and diluted EPS of $1.23 against a $0.76 consensus. Ad-tier revenue is on track to double to $3 billion this year. The stock fell as much as 10% in after-hours trading. Buried in the same investor letter: co-founder and chairman Reed Hastings will not stand for re-election at the June annual meeting, ending a 29-year run. Netflix told the SEC the exit is "not as a result of any disagreement with the Company."

(Bloomberg, April 16, 2026; TechCrunch, April 16, 2026)

Our take:

The quarter was immaculate. Revenue up sixteen percent, profit up eighty-two, ad tier on pace to double to three billion, earnings sixty-two percent above consensus. The stock fell ten.

In the same letter, Reed Hastings, the man who turned a DVD-by-mail side project into a two-hundred-billion-dollar cultural utility, mentioned he will not stand for re-election in June. Not, the company hastened to add, "as a result of any disagreement," a phrase nobody uses unless it is.

He is leaving to focus on philanthropy, which is what founders call the phase of life where they no longer have to answer questions about guidance. Clean quarter. Exit in the footnote. Room full of people already doing the math on what comes next.

IMPLICATOR
