San Francisco | Tuesday, May 5, 2026

Washington is relearning a word it tried to retire: review. The White House calls it model safety, but the sharper ask is first access, especially when a cyber-capable system like Mythos can look like both product and state asset.

In Oakland, OpenAI's nonprofit promise is getting the same treatment. Greg Brockman's near-$30 billion stake gives Musk's lawyers a clean number, while OpenAI points to a Foundation stake it says is even larger.

Hermes makes the private version of the same problem practical. One command installs the agent. The real decision is what a persistent system may read, remember, run, and touch after the demo ends.

Stay curious,

Marcus Schuler

White House Weighs AI Model Review as Mythos Shifts Access Fight

The Trump administration is talking about model safety again. The difference is that safety now sounds a lot like first access.

The White House is considering a working group and possible review process for frontier AI models before public release, after Anthropic's restricted Mythos model created the test case. Mythos can find exploitable software flaws, so it is hard to describe as a normal product launch.

That is why the NSA's reported Mythos use matters. The Pentagon still treats Anthropic as a supply-chain risk, while another part of the national-security system wants the model early enough to harden networks.

The policy label is safety. The operational question is access: who sees a cyber-capable model first, who gets blamed after a breach, and whether review becomes procurement by another name.

Why This Matters:

- Labs now face a government that wants oversight and early access in the same meeting.

- Restricted cyber models are becoming a policy category before Congress has settled the rules.

Reality Check

What's confirmed: The Times reported White House talks on a model-review process; Anthropic limited Mythos to about 40 organizations.

What's implied (not proven): First access could become the practical price of launching cyber-capable frontier models.

What could go wrong: Review turns into informal favoritism for agencies and favored vendors.

What to watch next: Whether an executive order creates review power, access rules, or only another working group.

The One Number

$1.5 billion - The reported committed capital behind Anthropic's new enterprise AI services venture with Blackstone, Hellman & Friedman, Goldman Sachs, and other investors. That is not another model round. It is a distribution vehicle for turning Claude into private-equity operating work, where software, consulting, and portfolio access become one product.

Source: TechCrunch, May 4, 2026

Brockman's $30 Billion Stake Puts OpenAI Mission on Trial

Greg Brockman gave the jury the number Musk's lawyers wanted. A mission fight is easier to understand when one founder's stake is worth nearly $30 billion.

Brockman testified Monday in Oakland that his OpenAI stake is worth close to $30 billion. Musk attorney Steven Molo then asked why Brockman had not given the nonprofit the portion above $1 billion, a figure from Brockman's own 2017 journal.

OpenAI's answer is control. The company says the Foundation still controls OpenAI Group PBC and holds a stake worth far more than Brockman's. That may be true and still leave the jury with a simple problem: the Foundation, employees, Microsoft, and founders all became richer through the same conversion.

The trial is no longer only about whether OpenAI changed. It is about who captured the value when it did.

Why This Matters:

- Musk's charity argument now has personal equity, not only abstract governance language.

- OpenAI must show that commercial wealth funds the mission instead of replacing it.

AI Image of the Day

Prompt: A close-up portrait captures a young woman from the upper chest up, her face angled slightly to her right as she looks directly at the viewer with large, light blue eyes....

Hermes Agent Turns Easy Setup Into a Permissions Problem

Hermes Agent is not another chatbot wrapper. It is a persistent service that can remember, schedule, call tools, and answer through chat channels after the user leaves.

The install path is intentionally simple: a shell command, setup flow, model-provider choice, gateway pairing, tools, memory, and cron jobs. The hard part starts after the command works.

Hermes can run on a laptop, home server, or VPS. It can connect to Telegram, Discord, Slack, WhatsApp, email, local models, hosted models, memory files, skills, and tools. That makes it useful for research, file retrieval, infrastructure checks, and recurring work.

It also makes the security model the product. A persistent agent needs a dedicated account, strict allowlists, a test folder before a home directory, and manual approval before shell or file writes expand.
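One way to picture that checklist is as a deny-by-default policy. The sketch below is illustrative only: `AgentPolicy`, the tool names, and the `/tmp/agent-sandbox` folder are hypothetical and are not Hermes's actual configuration format or API. It assumes the agent asks a policy object before every tool call, confines file access to one test folder, and sends shell commands and file writes to a human for approval.

```python
from __future__ import annotations

from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical deny-by-default policy; not Hermes's real config."""

    allowed_roots: tuple[Path, ...]      # folders the agent may touch
    allowed_tools: frozenset[str]        # strict tool allowlist
    needs_approval: frozenset[str] = frozenset({"shell", "file_write"})

    def path_allowed(self, candidate: str) -> bool:
        # Resolve ".." and symlinks first so the agent cannot escape the folder.
        resolved = Path(candidate).resolve()
        return any(
            resolved.is_relative_to(root.resolve()) for root in self.allowed_roots
        )

    def decide(self, tool: str, path: str | None = None) -> str:
        """Return "deny", "ask" (manual approval), or "allow"."""
        if tool not in self.allowed_tools:
            return "deny"
        if path is not None and not self.path_allowed(path):
            return "deny"
        if tool in self.needs_approval:
            return "ask"
        return "allow"


# Start with a test folder, not a home directory.
policy = AgentPolicy(
    allowed_roots=(Path("/tmp/agent-sandbox"),),
    allowed_tools=frozenset({"file_read", "file_write", "shell"}),
)

print(policy.decide("file_read", "/tmp/agent-sandbox/notes.txt"))
print(policy.decide("file_read", "/tmp/agent-sandbox/../secrets.txt"))
print(policy.decide("shell"))
```

The design choice worth noting is the order of checks: anything not on the allowlist is refused before approval is even considered, so expanding what the agent may touch is always an explicit edit to the policy rather than a side effect of saying yes once.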

Why This Matters:

- Personal agents are moving from browser tabs into services with memory, credentials, and schedules.

- The next adoption barrier is not installation. It is deciding what the agent may touch.

🧰 AI Toolbox

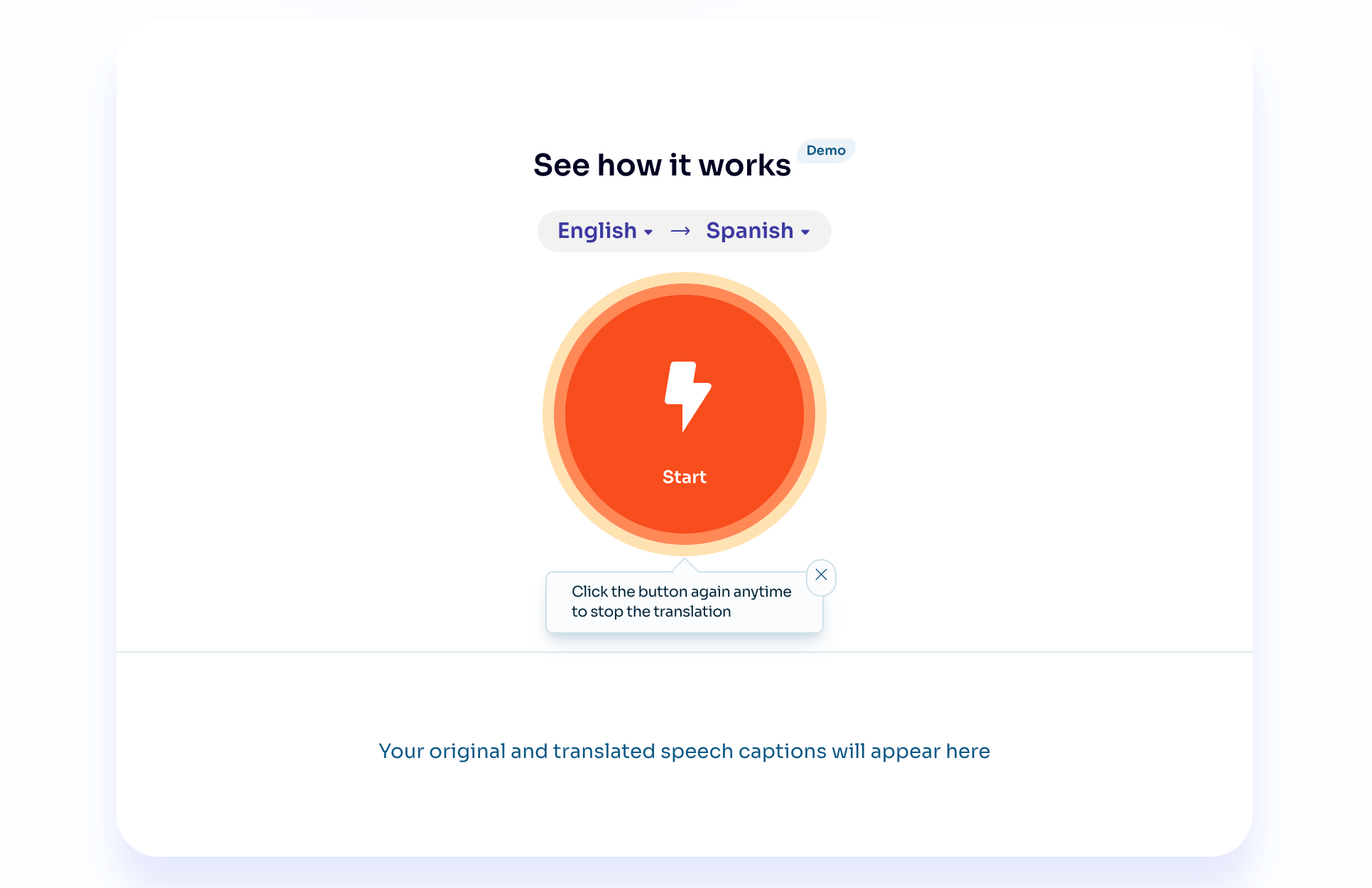

How to Translate Any Live Call in Real Time While Keeping Your Own Voice with Palabra.ai

Palabra.ai is a real-time speech translation tool that converts your speech into another language in under a second while preserving your voice, tone, and speaking style. It works inside Zoom, Google Meet, and Microsoft Teams without a separate app. It supports more than 60 languages and can auto-clone a speaker's voice from a short sample, so everyone sounds like themselves in every language. Palabra does not store any conversation data. A free tier is available.

Tutorial:

- Sign up at palabra.ai and install the browser extension or desktop app for Mac or Windows

- Pick your spoken language and the target language each participant will hear

- Upload a 30-second voice sample to clone your voice so your translated audio sounds like you, not a generic TTS voice

- Join a Zoom, Google Meet, or Microsoft Teams call and enable Palabra in the meeting controls

- Speak naturally; Palabra translates your audio into the target language in under one second and routes it to the right participants

- Turn on live captions so remote attendees who missed audio can read the translation in their language

- For events and conferences, connect Palabra to your streaming software to translate a broadcast into multiple languages simultaneously

URL: Palabra.ai

💡 5-Minute Skill

Turn an AI Vendor Demo Into a Procurement Trap List

The vendor demo looked clean because demos are where messy data goes to wear a suit. Before the follow-up call, turn the transcript and your notes into questions that make the salesperson leave the happy path.

Your raw input:

Vendor: Acme Agents. Claims: automates support triage, reads Zendesk, Salesforce, and Slack, cuts handle time 35%, deploys in 30 days. Demo used perfect tickets. Unknowns: permissions, audit logs, fallback, pricing after pilot, data retention, who owns prompt changes. Stakeholders: support VP excited, security skeptical, finance wants ROI.

The prompt:

Act like a skeptical procurement lead and security reviewer. Turn these demo notes into ten trap questions grouped by data access, workflow failure, pricing, implementation, and proof. For each question, tell me what a good answer sounds like and what answer should stop the deal. Keep it blunt. No vendor-management jargon.

The output:

Data access: Which systems can the agent write to, not just read from? Good answer: write actions are scoped by role and logged separately. Stop sign: "we use your existing permissions" with no agent audit trail.

Proof: Show one customer where handle time fell after full rollout, not only during a pilot.

Why this works:

A demo shows the product at its easiest. This prompt turns excitement into falsifiable checks: permissions, failure modes, costs, and proof after rollout. The "what stops the deal" line is the power move because it gives the room permission to say no before the calendar fills with vendor workshops.

What to use:

Claude. Good at turning vague notes into adversarial but fair questions for a business audience.

ChatGPT. Strong if you paste the vendor page and ask it to compare claims against your internal constraints.

📖 AI Alphabet

G - Guardrails

Guardrails are rules or controls designed to keep an AI system within acceptable boundaries. They can limit unsafe outputs, reduce errors, or block actions the system should not take.

AI & Tech News

Google, Microsoft and xAI Give CAISI Early Model Access

Google, Microsoft and xAI have joined OpenAI and Anthropic in giving the Commerce Department's CAISI early access to evaluate advanced models before public release. That turns the model-review fight from a White House idea into a live government access channel.

China Targets 70% Domestic Silicon Wafer Use

Chinese authorities want chipmakers to use more than 70% domestic silicon wafers by 2026, Nikkei Asia reports. The target pushes semiconductor self-reliance deeper into the supply chain, where high-purity 12-inch wafers remain hard to localize.

RadixArk Raises $100 Million to Cut AI Inference Memory Costs

RadixArk, founded by former xAI engineer Ying Sheng, raised a $100 million seed round at a $400 million valuation, according to the Journal. Its open-source SGLang engine attacks the memory and overhead costs that make large-scale inference expensive.

Nscale Plans 66,000 Rubin GPUs for Microsoft Portugal Site

Nscale Global Holdings will invest €695 million in Portugal to expand data-center infrastructure tied to Microsoft. The plan includes more than 66,000 Nvidia Rubin GPUs from late 2027, another sign that AI capacity deals are being booked years ahead.

Microsoft Finds AI Anxiety Is Outrunning Workplace Rewards

Microsoft's 2026 Work Trend Index finds that 65% of workers fear falling behind on AI, while only 13% say experimentation is rewarded at work. The gap explains why many companies talk about adoption while employees wait for incentives, permission, and time.

Meta Uses AI Age Signals to Find Under-13 Accounts

Meta is using AI systems that analyze bone structure, height, and other visual cues to estimate whether Facebook and Instagram users are under 13. The company says this is not traditional facial recognition, but the child-safety and privacy fight is already moving toward visual inference.

Coinbase Cuts 14% of Workforce as AI Reshapes Operations

Coinbase will cut about 700 jobs, roughly 14% of its workforce, Reuters reports. CEO Brian Armstrong cited AI-driven productivity as part of the operating shift, making crypto one more sector where automation is now tied directly to head count.

YC's OpenAI Stake Is Reportedly Worth More Than $5 Billion

Y Combinator still holds roughly 0.6% of OpenAI through the YC Research stake seeded in 2016, according to Techmeme's link to John Gruber's reporting. At OpenAI's current valuation, that minority position is worth more than $5 billion and adds another ownership thread to the governance debate.

a16z Crypto Raises $2.2 Billion for Fifth Fund

a16z crypto raised $2.2 billion for its fifth fund, down from the $4.5 billion vehicle it closed in 2022. The firm is betting that stablecoins and crypto infrastructure can carry investor demand even after the last cycle's excess fades.

Zyg Raises $60 Million at $500 Million Valuation

Zyg, the AI automation startup founded by ironSource veterans, raised $60 million at a $500 million valuation only two months after emerging from stealth. The pitch is familiar but still fundable: automate enterprise work before incumbents absorb the same workflows.

Kore.ai Opens Bay Area Hub for Enterprise Agent Push

Kore.ai has established a strategic headquarters in the San Francisco Bay Area while keeping its official headquarters in Orlando. The company says it added more than 100 enterprise customers in the last fiscal year, deepened Microsoft and AWS partnerships, and hired Bay Area leaders across growth, strategy, and marketing.

🚀 AI Profiles: The Companies Defining Tomorrow

Enzo Health is trying to make home health less dependent on after-hours charting, referral paperwork and reimbursement cleanup. The Lehi, Utah startup just raised a $20 million Series A for AI workflow software built around post-acute care, where every OASIS field can become either a payment lever or a compliance headache. 🩺

Founders

Founded in 2023 by Zach Newman and Dan Conger. Newman is CEO and co-founder; Conger is listed as founder, and early backer Tandem describes the pair as a sales-and-engineering duo. The company is based in Lehi, Utah, close enough to the home health software market to know the boring parts are the business.

Product

Enzo sells a connected platform for home health agencies: Intake analyzes referrals and eligibility, Scribe turns visits into OASIS-aligned documentation, and QA checks charts for coding, reimbursement and regulatory problems before they turn into denials. The company says agencies can move from referral to admission in five minutes and capture an average $200 more per patient per month, based on early customer results.

Competition

The narrow race is crowded: nVoq, Lime Health AI, AutoMynd, Vivid Health, CareGen AI and Nestmed all pitch pieces of the same post-acute AI stack. The bigger threat is distribution. WellSky, Axxess, MatrixCare and other workflow systems already sit where Enzo wants to live, and every ambient-scribe vendor would like to turn clinical notes into a compliance product.

Financing 💰

$20 million Series A led by N47, according to Axios Pro on May 4, 2026. CEO Zach Newman told Axios the company plans to prepare for a Series B next year, targeting $30 million to $40 million. Earlier backers include Tandem Venture Partners, which says it partnered with Enzo in 2024.

Future ⭐⭐⭐

Home health is a good AI market precisely because it is not glamorous: labor is scarce, documentation is painful, and reimbursement depends on details humans hate entering. Enzo's opening is a vertical workflow where accuracy pays directly. The risk is that agencies will not tolerate hallucinated compliance, and incumbents can bundle "good enough" AI into systems customers already use. If Enzo proves trust at the chart level, the Series B pitch writes itself. 🏠

🤨 Yeah, But...

The New York Times reported Monday that the Trump administration is considering a working group and possible review process for new AI models before release, after Anthropic's Mythos cyber model changed the risk calculus. Axios has reported that the NSA is using Mythos despite the Pentagon's supply-chain-risk fight with Anthropic.

(NYT, May 4, 2026; Axios, April 19, 2026)

Our take: The administration spent a year treating AI oversight as a museum piece from the previous presidency, then met a model good enough at finding software holes and suddenly rediscovered process. The Pentagon says Anthropic is a risk. The intelligence agencies say, yes, and can we borrow the risk for work. This is not deregulation giving way to regulation. It is a building discovering fire codes after smoke comes through the vents. Silicon Valley asked government to move fast. It did. It moved directly to the front of the access line.

IMPLICATOR
