San Francisco | Wednesday, April 29, 2026

Oakland has the cleanest version of the OpenAI fight: nine jurors, one judge, and two founders arguing over who stole the origin story. Musk calls it a charity taken private. Altman says the founder who left came back only after ChatGPT made the lab valuable. The verdict is advisory, but the myth damage is not.

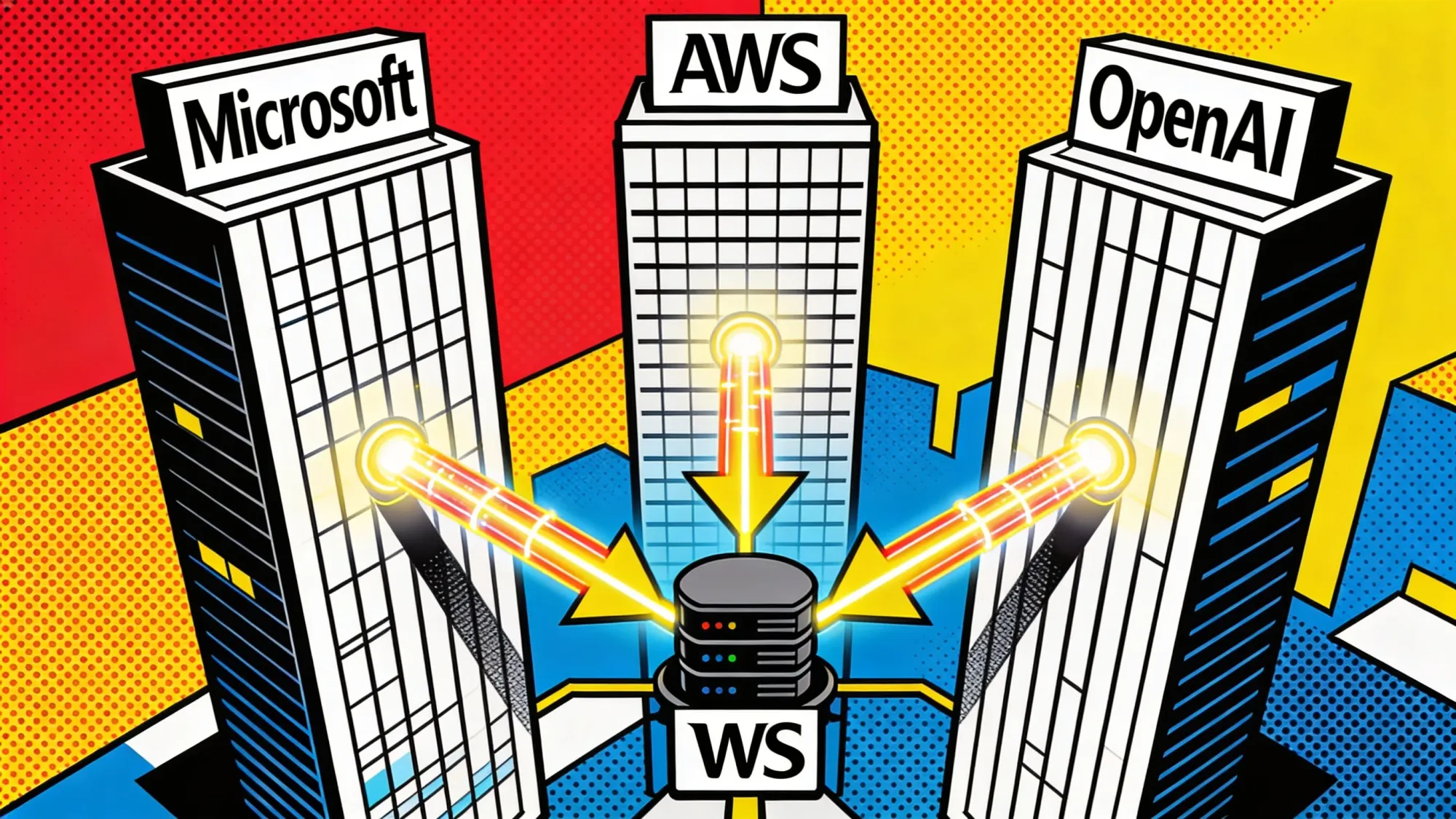

Across the bay, OpenAI has moved on to distribution. Microsoft loses its model exclusive, AWS gets the agent runtime, and the harness around the model becomes the prize.

In Brussels, twelve hours of AI Act talks end with no deal. Companies now live with two calendars, one for rules as written, one for relief that may not arrive.

Stay curious,

Marcus Schuler

Know someone drowning in AI noise? Forward this briefing. They can subscribe free here.

Musk and Altman Put OpenAI's Charity Fight Before Nine Jurors

Nine jurors get the origin story. Judge Yvonne Gonzalez Rogers keeps the remedy.

Musk and Altman finally share a courtroom in Oakland, where the case is less about OpenAI paperwork than about who gets to own the founding myth. Musk says Altman and Greg Brockman stole a charity. OpenAI says a founder who lost control came back with a lawsuit after ChatGPT made the lab valuable.

The jury's findings are advisory. Gonzalez Rogers will use them as a guide, then decide the ruling herself. That matters because Musk is seeking up to $134 billion in disgorgement from OpenAI's for-profit arm to its nonprofit foundation, while OpenAI is trying to keep a clean IPO story around a company valued between $730 billion and $852 billion.

The record is awkward for both sides: Musk explored a for-profit path in 2017, while OpenAI now has to defend a mission claim beside Microsoft money and IPO math.

Why This Matters:

- The case puts OpenAI's nonprofit mission, cap table, and public-market story in the same courtroom.

- An advisory verdict still gives the judge a public record for any remedy before an IPO window opens.

Reality Check

What's confirmed: A nine-person jury is hearing the case; the judge keeps the final ruling; Musk seeks up to $134 billion for OpenAI's nonprofit arm.

What's implied (not proven): The trial can settle OpenAI's origin story. It can shape the record, not erase the politics around the company.

What could go wrong: A messy advisory verdict gives both sides enough material to keep the governance fight alive through an IPO process.

What to watch next: Altman and Microsoft CEO Satya Nadella testimony, then how Gonzalez Rogers frames the jury's findings.

The One Number

$115 billion: The minimum Meta says it expects to spend on capital expenditures this year as it builds AI infrastructure for Meta Superintelligence Labs and the core business. That is the floor investors will judge when Zuckerberg explains why compute has become the company's main product risk.

Source: Meta Platforms CFO outlook

OpenAI Gives AWS Exclusive Agent Runtime After Microsoft Drops Model Moat

OpenAI won the right to sell across clouds. Its first big move was an AWS-only agent layer.

Microsoft dropped Azure exclusivity from its OpenAI agreement on Monday. On Tuesday, AWS announced OpenAI models in Bedrock, plus Bedrock Managed Agents, a jointly built runtime that Altman told Stratechery is exclusive to Amazon.

The split is the tell. Model access becomes multi-cloud. The harness around the model (identity, permissions, memory, logs and guardrails) becomes the premium product. Microsoft keeps revenue-share payments from OpenAI and model rights through 2032. AWS gets the enterprise sales wedge.

Why This Matters:

- OpenAI is no longer selling only model access; it is choosing where the agent control plane lives.

- AWS now has a cleaner agent story than Azure just as hyperscaler AI spending faces investor scrutiny.

AI Image of the Day

Prompt: Bright, stylized breakfast illustration with bold blue "BON JOUR" block letters, small red hearts inside some letters, a red moka pot, a pink grid-pattern coffee mug filled with dark coffee, and a plate with three heart-shaped cookies on a blue-and-white checkered tablecloth. Strong blue, red, and pink palette on a light beige background, textured hand-drawn gouache or crayon-style look, cozy morning greeting mood.

EU AI Act Talks Fail After 12 Hours, Leaving August Deadline Intact

The softening package hit the same problem that made the AI Act hard in the first place: product safety.

EU countries and Parliament lawmakers failed to agree after 12 hours of talks Tuesday. The unresolved issue is whether industries already covered by sectoral rules, including product safety law, should be exempt from AI Act obligations.

That keeps the current calendar alive. Many high-risk obligations remain tied to Aug. 2, 2026 unless the Digital Omnibus passes first. Council text would push stand-alone high-risk systems to Dec. 2, 2027 and product-embedded systems to Aug. 2, 2028. For now, companies have to plan for both clocks.

Why This Matters:

- Product makers cannot assume relief arrives before the current high-risk compliance date.

- The next May round decides whether Brussels delivers simplification or just another planning problem.

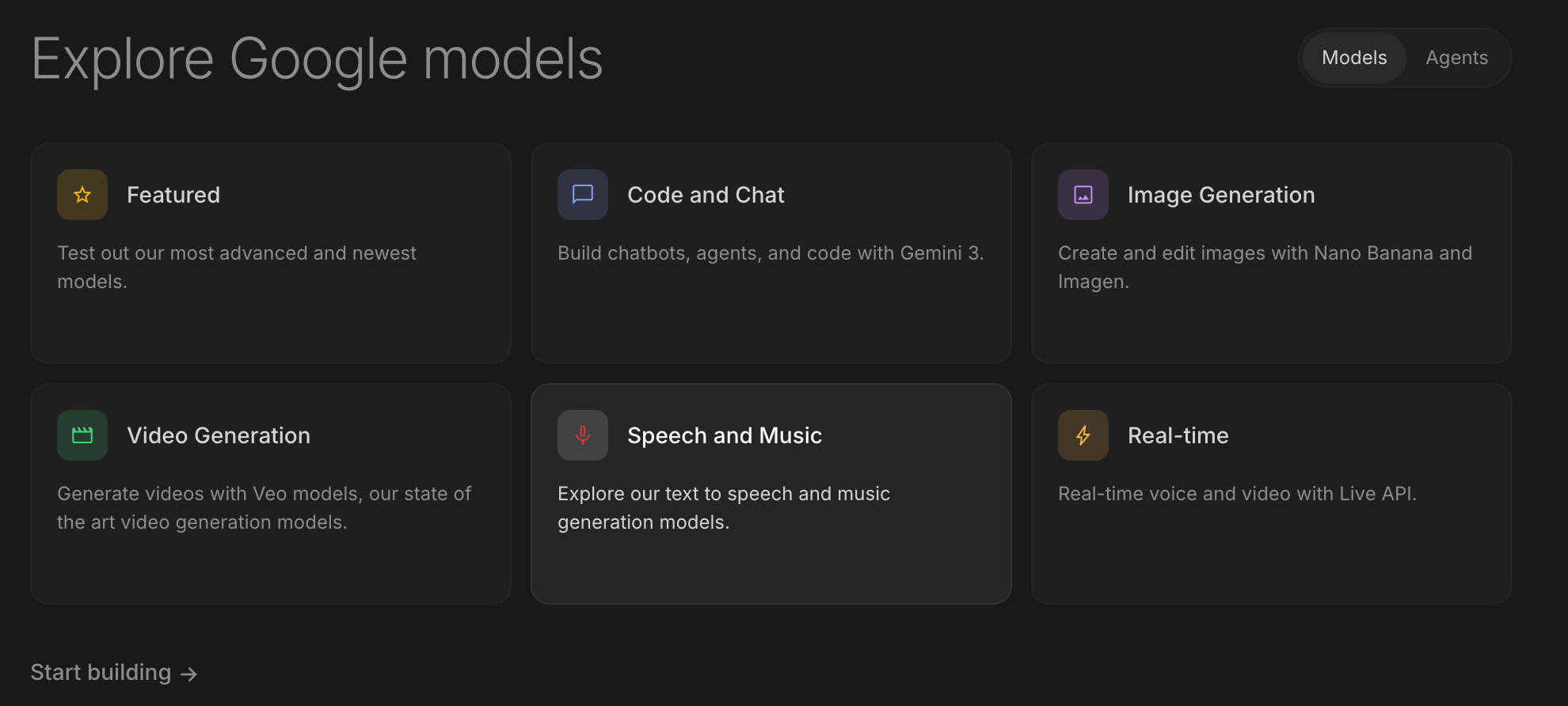

🧰 AI Toolbox

How to Prototype Production-Grade AI Apps in Minutes with Google AI Studio

Google AI Studio is the fastest way to experiment with Gemini 3 models, build app prototypes from a single prompt, and ship them to production. A browser-based playground lets you test prompts, tune parameters, and compare model versions side by side. The Build apps feature turns a natural-language description into a working React app with a live preview and deployable code. It includes native image generation, video, and live multimodal APIs. Free to use with generous quotas.

Tutorial:

- Go to Google AI Studio and sign in with a Google account, no credit card required

- Open a chat prompt and test Gemini 3 with your real task, tweaking system instructions and temperature in the right panel

- Click "Compare" to run the same prompt against multiple Gemini versions side by side and pick the best output

- Switch to the "Build apps" tab, describe an app in plain English ("a meal planner that suggests recipes based on photos of my fridge") and watch AI Studio generate a working React prototype

- Use the live preview to test the app, then click "Edit code" to refine it with the built-in AI assistant

- Generate images or short videos directly inside the prompt, useful for mocking up assets

- Deploy the app to a public URL with one click, or copy the API integration code to run it in your own infrastructure

URL: Google AI Studio

💡 5-Minute Skill

Turn the Weird Contents of Your Fridge Door Into a Lunch That Does Not Taste Like Surrender

Wednesday, 12:17 p.m. You have eight minutes before the next call and a fridge door that looks like a condiment museum curated by someone under duress. There is harissa, miso, pickles, a thumb of ginger, yogurt, one tortilla, and the emotional remains of Sunday optimism. Open ChatGPT and make it admit lunch is still possible.

Your raw input:

Ingredients I can actually use: one flour tortilla, half a can of chickpeas, Greek yogurt, harissa, white miso, pickles, lemon, ginger, frozen peas, one egg, old parsley, butter, rice from Monday. Equipment: pan, microwave, toaster oven. Time: 12 minutes. Mood: suspicious. Make lunch, not a project.

The prompt:

Act like a practical line cook with no patience for lifestyle content. From these ingredients, give me one lunch plan in under 12 minutes. Use at least one weird condiment, but do not make it weird for sport. Output: dish name, exact steps, what to mix first, what to heat first, one backup if the tortilla tears, and one sentence explaining why the flavors make sense. No shopping list. No cheerful preamble.

The output:

Harissa-miso chickpea tortilla with cold pickle yogurt. Mix two spoonfuls yogurt, a little grated ginger, chopped pickle, lemon, and parsley. In a pan, melt butter, add chickpeas and peas, then stir in a small spoon of harissa and a tiny smear of miso loosened with lemon. Warm the tortilla in the toaster oven. Fry the egg in the same pan after the chickpeas are hot. Fill tortilla with chickpeas, egg, and cold pickle yogurt. If the tortilla tears, put everything over Monday rice. The flavors work because harissa brings heat, miso brings salt and depth, yogurt cools it down, and pickles keep it from becoming beige paste.

Why this works:

The trick is not asking AI for "recipes." That gives you SEO oatmeal and a lecture about pantry staples. Give it the exact inventory, the equipment, the clock, and the emotional weather. The line-cook role matters because it suppresses garnish poetry and forces sequencing. "What to mix first" is the hidden power move: most bad emergency lunches fail because sauce, heat, and assembly all happen at once.

What to use:

ChatGPT. It is good at turning weird constraints into something edible without needing food-blog ceremony. Claude also works, but may write a more elegant plan than the situation deserves. If you have a photo model handy, add one fridge-door image and ask it to identify only what is clearly visible. Do not let it invent cheese. That is how lunch becomes fan fiction.
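If you run this drill more than once, the prompt pattern above can be kept as a small template. What follows is a minimal sketch, not a recommended tool: the `LunchConstraints` class and `build_prompt` function are hypothetical names invented here, and the instruction wording is a shortened paraphrase of the prompt shown earlier.

```python
from dataclasses import dataclass


@dataclass
class LunchConstraints:
    """The four inputs the skill asks for: inventory, equipment, clock, mood."""
    ingredients: list[str]
    equipment: list[str]
    minutes: int
    mood: str


def build_prompt(c: LunchConstraints) -> str:
    """Assemble the raw input and the line-cook instructions into one prompt."""
    raw_input = (
        f"Ingredients I can actually use: {', '.join(c.ingredients)}. "
        f"Equipment: {', '.join(c.equipment)}. "
        f"Time: {c.minutes} minutes. Mood: {c.mood}."
    )
    instructions = (
        "Act like a practical line cook with no patience for lifestyle content. "
        f"From these ingredients, give me one lunch plan in under {c.minutes} minutes. "
        "Output: dish name, exact steps, what to mix first, what to heat first, "
        "and one backup plan. No shopping list. No cheerful preamble."
    )
    # Inventory first, instructions second, separated by a blank line.
    return raw_input + "\n\n" + instructions


if __name__ == "__main__":
    fridge = LunchConstraints(
        ingredients=["one flour tortilla", "harissa", "white miso", "Greek yogurt"],
        equipment=["pan", "microwave", "toaster oven"],
        minutes=12,
        mood="suspicious",
    )
    print(build_prompt(fridge))
```

The design choice mirrors the advice: the constraints are data you change every day, and the sequencing request ("what to mix first") stays fixed.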

📖 AI Alphabet

T: Token

A token is a chunk of text a model reads and processes, often smaller than a word. Pricing, speed, and context limits are usually measured in tokens.
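To make "often smaller than a word" concrete, here is a rough sketch that chops text into pieces of at most four characters and prices the result. It is only an illustration: real tokenizers use a learned vocabulary rather than fixed-length chunks, and both the `rough_tokens` helper and the per-million price are assumptions made up for this example.

```python
import re

# Hypothetical price, for illustration only; real per-token prices vary by model.
PRICE_PER_MILLION_TOKENS_USD = 1.00


def rough_tokens(text: str, max_chunk: int = 4) -> list[str]:
    """Split text into word pieces of at most max_chunk characters.

    A crude stand-in for a real tokenizer, which uses a learned vocabulary.
    """
    pieces = []
    # \w+ matches runs of letters and digits; [^\w\s] matches one punctuation mark.
    for word in re.findall(r"\w+|[^\w\s]", text):
        for start in range(0, len(word), max_chunk):
            pieces.append(word[start:start + max_chunk])
    return pieces


def estimated_cost_usd(token_count: int) -> float:
    """Convert a token count into dollars at the hypothetical price above."""
    return token_count * PRICE_PER_MILLION_TOKENS_USD / 1_000_000


if __name__ == "__main__":
    tokens = rough_tokens("Tokenization is fun.")
    print(tokens)
    print(estimated_cost_usd(len(tokens)))
```

One long word becomes several pieces while short words stay whole, which is why token counts usually run higher than word counts.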

AI & Tech News

Poolside Releases Open-Weight Coding Model Laguna XS.2

Poolside launched Laguna XS.2, a free open-weight mixture-of-experts model for local agentic coding, alongside the proprietary Laguna M.1. The split gives Poolside an open developer wedge while keeping its higher-capacity model behind a commercial layer.

Goldman Sachs Stops Anthropic Model Use in Hong Kong

Goldman Sachs told bankers in Hong Kong to stop using Anthropic's AI models, the Financial Times reported. Anthropic said its tools were not officially supported there, turning the issue into a compliance lesson for banks trying to use AI across regulated jurisdictions.

U.S. Orders Equipment Suppliers to Halt Some Hua Hong Shipments

The U.S. Commerce Department directed chip-equipment suppliers to stop some shipments to Hua Hong, Reuters reported. Targeting China's second-largest foundry extends the export-control fight from advanced chips into the manufacturing tools that decide future domestic capacity.

NXP Rallies as Automotive Chip Demand Lifts Forecast

NXP shares jumped after the company reported first-quarter revenue of $3.18 billion and gave a stronger second-quarter outlook. The automotive rebound matters because it gives chip investors a cleaner read on real industrial demand outside the AI accelerator trade.

OpenAI Projects $8 ChatGPT Go as Subscriber Growth Engine

OpenAI projected that its $8 ChatGPT Go tier could reach 112 million subscribers by year-end while the $20 Plus plan falls to about 9 million, The Information reported. The shift would trade revenue per user for scale, turning consumer AI into a pricing experiment.

Amazon Quick Gets Desktop App for Local AI Workflows

AWS introduced a desktop app for Amazon Quick, its generative AI assistant for building apps, dashboards, and presentations from local files and third-party tools. The product moves Amazon's assistant pitch closer to the worker's desktop, not only the cloud console.

Robinhood Misses Revenue Outlook as Crypto Trading Falls

Robinhood reported Q1 revenue of $1.07 billion, up 15% from a year earlier but below consensus, while crypto revenue fell 47%. The miss shows how quickly fintech earnings can sag when retail crypto activity cools.

Microsoft Rolls Out Copilot to 743,000 Accenture Employees

Microsoft is deploying Copilot across Accenture's roughly 743,000 employees, Reuters reported. It is Microsoft's largest single customer rollout and gives the company a reference account for enterprise AI adoption at full consulting-firm scale.

Nvidia Launches Nemotron 3 Nano Omni

Nvidia introduced Nemotron 3 Nano Omni, an open multimodal model for text, vision, and speech in agentic AI workflows. The release broadens Nvidia's software stack at the same time customers are weighing how much agent behavior should run close to GPU infrastructure.

Ghostty Moves Off GitHub After Repeated Outages

HashiCorp co-founder Mitchell Hashimoto said the Ghostty terminal project will migrate away from GitHub after recurring service outages. The move is small in user count but loud in signal: developer infrastructure loses trust fastest when the outage hits the build path.

🚀 AI Profiles: The Companies Defining Tomorrow

Parallel Web Systems is building search infrastructure for AI agents, not humans with browser tabs. The Palo Alto startup founded by former Twitter CEO Parag Agrawal just raised $100 million at a $2 billion valuation, a bet that long-running agents will need their own way to use the web for investment research, insurance claims, legal work, and government-contract digging.

Founders

Founded by Parag Agrawal, Twitter's former chief executive and former chief technology officer. Agrawal became Twitter CEO in 2021 after Jack Dorsey stepped down, then was ousted in late 2022 after Elon Musk completed the acquisition. Parallel is the cleaner second act: no consumer network, no moderation politics, just infrastructure for machines that need to retrieve information faster than people can click.

Product

Parallel gives AI agents a web-search layer built for task completion rather than page ranking. The company says agents use it for deep research workflows such as investment and risk underwriting, insurance claims processing, legal research, and government-contract analysis. Harvey uses Parallel to support research-heavy legal agents, because its customers need granular control over which websites agents access, not a generic "give the model Google" button.

Competition

Tavily and Exa are chasing the same agent-search layer. Google and Bing still dominate human search, while Perplexity owns the consumer answer-engine frame. Parallel's wedge is narrower and more enterprise: long-horizon agents need repeatable, controllable web access, with source selection and retrieval behavior that a customer can audit. The risk is that model labs, cloud platforms, and search incumbents bundle the same primitive into agent stacks.

Financing 💰

Series B of $100 million led by Sequoia Capital, valuing Parallel at $2 billion. Existing investors Kleiner Perkins, Index Ventures, and Khosla Ventures also participated. The company previously raised a $100 million Series A in November at a $740 million valuation and has raised $230 million in total. Parallel has about 50 employees and says more than 100,000 developers use its infrastructure. Source: WSJ, April 28, 2026.

Future ⭐⭐⭐

Agrawal's thesis is blunt: agents will use the web more than humans do. If that is right, agent search becomes infrastructure, not a feature. The market is early, but the customer pull is real whenever agents need to research, cite, and act over messy public information. Parallel now has the money and the board signal. The hard part is staying a layer, not becoming a feature someone else ships for free.

🤨 Yeah, But...

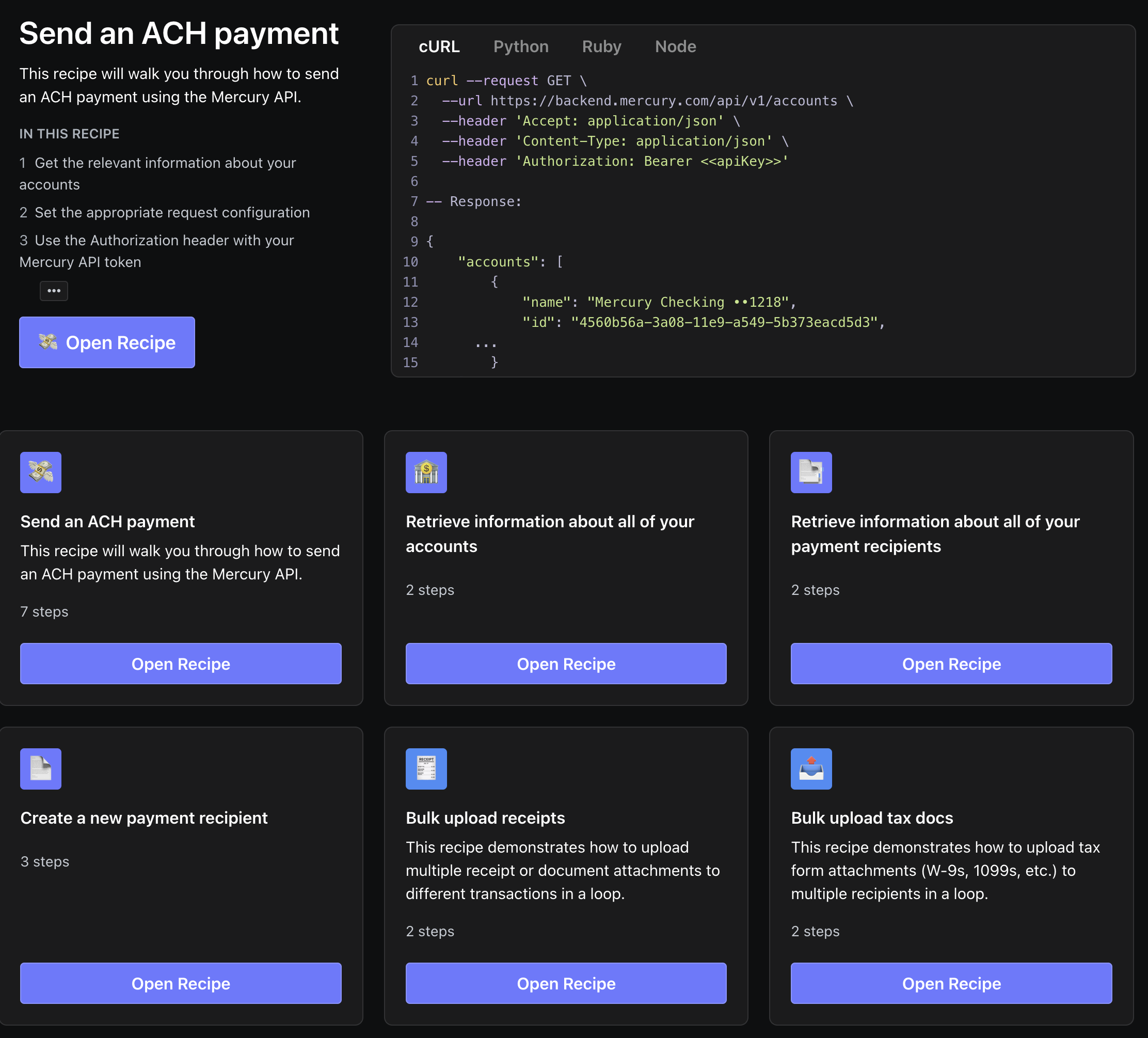

Payments Companies Have Discovered the Safest Place for AI Agents: Just Before the Money Moves

Finix announced Tuesday that it is launching MCP integrations for ChatGPT, Claude, and Gemini so developers can explore its payments API, generate code, and prototype payment flows inside AI tools. One week earlier, Digits launched an MCP server for its real-time accounting ledger, giving firms and business owners read-only access to financial data inside Claude, ChatGPT, and Cursor. Mercury's MCP docs make the line explicit: its hosted server is beta, OAuth-based, and limited to read-only actions. The finance stack is opening its doors to agents. The cash drawer is still behind glass.

(Finix, April 28, 2026; Digits, April 21, 2026; Mercury MCP docs)

Our take: There is something wonderfully adult about the AI payments revolution arriving with three companies all standing near the vault and saying, in effect, look but do not touch. Developers can ask an agent how to build a payment flow. Accountants can ask an agent why cash moved. Founders can ask an agent where the burn went.

What they cannot yet do, at least in the respectable versions, is tell the agent to send the money and go to lunch. This is not timidity. It is the first honest product decision the agent economy has made. A chatbot that hallucinates a restaurant is annoying. A chatbot that hallucinates a wire recipient is litigation with an OAuth screen. The useful future is not agentic banking. It is boring banking with agents kept on a very short leash.
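For the curious, the short leash can be pictured as an allowlist that sits between the agent and the ledger. This is a minimal sketch under stated assumptions, not how Finix, Digits, or Mercury implement it: the action names, `ToolCall`, and `authorize` are all hypothetical and invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical action names for illustration; not taken from any vendor's API.
READ_ONLY_ACTIONS = {"list_transactions", "get_balance", "get_ledger_entry"}


@dataclass(frozen=True)
class ToolCall:
    """A request from an agent: which action to run, and with what arguments."""
    action: str
    arguments: dict


class ActionNotAllowed(Exception):
    """Raised when an agent asks for anything outside the read-only allowlist."""


def authorize(call: ToolCall) -> ToolCall:
    """Pass a tool call through only if it is on the read-only allowlist."""
    if call.action not in READ_ONLY_ACTIONS:
        raise ActionNotAllowed(f"'{call.action}' is not a read-only action")
    return call


if __name__ == "__main__":
    # Looking is fine.
    print(authorize(ToolCall("get_balance", {"account": "operating"})))
    # Touching is not.
    try:
        authorize(ToolCall("send_wire", {"amount_usd": 5000}))
    except ActionNotAllowed as err:
        print(f"Blocked: {err}")
```

The point of an allowlist rather than a blocklist is that any action nobody thought to name is refused by default.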

IMPLICATOR